## Line Chart: Performance Metrics vs. Training Steps

### Overview

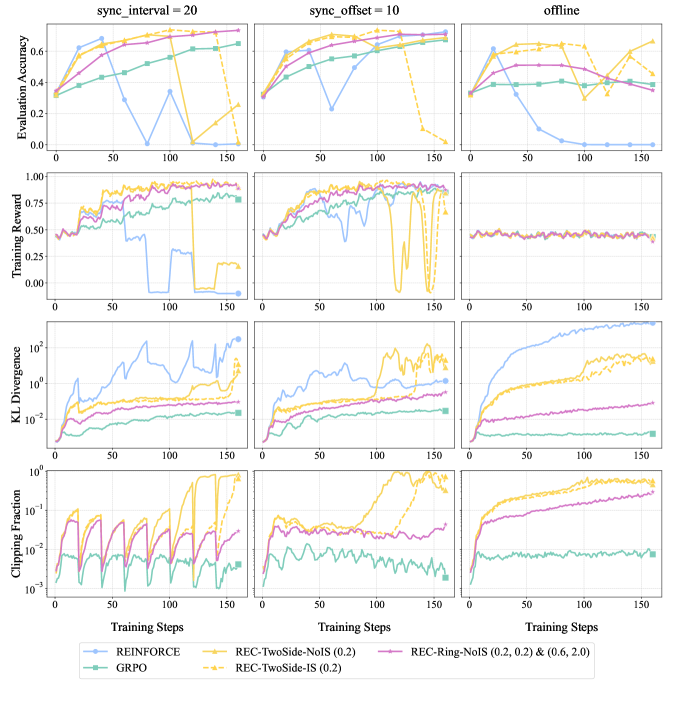

This image presents a grid of line charts comparing reinforcement learning algorithms under three experimental conditions. The four rows plot Evaluation Accuracy, Training Reward, KL Divergence, and Clipping Fraction as functions of Training Steps. The three columns correspond to the conditions `sync_interval = 20`, `sync_offset = 10`, and `offline`. Each chart contains one line per algorithm: REINFORCE, REC-TwoSide-NoIS (0.2), REC-Ring-NoIS (0.2, 2.0), REC-TwoSide-IS (0.2), and GRPO.

### Components/Axes

* **X-axis:** Training Steps (ranging from 0 to 150)

* **Y-axis (Top Chart):** Evaluation Accuracy (ranging from 0 to 0.6)

* **Y-axis (Second Chart):** Training Reward (ranging from 0 to 1.0)

* **Y-axis (Third Chart):** KL Divergence (log scale, ranging from 10^-2 to 10^2)

* **Y-axis (Bottom Chart):** Clipping Fraction (log scale, ranging from 10^-2 to 10^0)

* **Legend (Bottom Center):**

  * REINFORCE (Blue)

  * REC-TwoSide-NoIS (0.2) (Orange)

  * REC-Ring-NoIS (0.2, 2.0) (Green)

  * REC-TwoSide-IS (0.2) (Red)

  * GRPO (Light Blue)

* **Titles (Top):** `sync_interval = 20`, `sync_offset = 10`, `offline`

### Detailed Analysis

**Evaluation Accuracy:**

* **sync\_interval = 20:** REINFORCE shows a slow, steady increase, reaching approximately 0.55 by step 150. REC-TwoSide-NoIS (0.2) starts low but increases rapidly, reaching around 0.6 by step 100 and remaining stable. REC-Ring-NoIS (0.2, 2.0) shows a similar trend to REC-TwoSide-NoIS (0.2), but with slightly lower values. REC-TwoSide-IS (0.2) starts around 0.2 and increases to approximately 0.4. GRPO fluctuates around 0.2.

* **sync\_offset = 10:** REINFORCE again increases slowly, reaching approximately 0.5 by step 150, slightly below its value in the `sync_interval = 20` column. REC-TwoSide-NoIS (0.2) increases rapidly to around 0.6. REC-Ring-NoIS (0.2, 2.0) shows a similar trend, reaching around 0.55. REC-TwoSide-IS (0.2) increases to approximately 0.4. GRPO fluctuates around 0.2.

* **offline:** REINFORCE shows a fluctuating pattern, reaching around 0.4. REC-TwoSide-NoIS (0.2) increases rapidly to around 0.6. REC-Ring-NoIS (0.2, 2.0) shows a similar trend, reaching around 0.55. REC-TwoSide-IS (0.2) increases to approximately 0.4. GRPO fluctuates around 0.2.

**Training Reward:**

* **sync\_interval = 20:** All algorithms show an increasing trend. REC-TwoSide-NoIS (0.2) and REC-Ring-NoIS (0.2, 2.0) reach approximately 0.9. REINFORCE reaches around 0.7. REC-TwoSide-IS (0.2) and GRPO reach around 0.6.

* **sync\_offset = 10:** Similar to the previous column, REC-TwoSide-NoIS (0.2) and REC-Ring-NoIS (0.2, 2.0) reach approximately 0.9. REINFORCE reaches around 0.7. REC-TwoSide-IS (0.2) and GRPO reach around 0.6.

* **offline:** REC-TwoSide-NoIS (0.2) and REC-Ring-NoIS (0.2, 2.0) reach approximately 0.9. REINFORCE reaches around 0.7. REC-TwoSide-IS (0.2) and GRPO reach around 0.6.

**KL Divergence:**

* **sync\_interval = 20:** REINFORCE and GRPO show relatively stable KL Divergence around 10^-1. REC-TwoSide-NoIS (0.2) and REC-TwoSide-IS (0.2) show a rapid increase in KL Divergence, reaching around 10^1. REC-Ring-NoIS (0.2, 2.0) shows a moderate increase, reaching around 10^0.

* **sync\_offset = 10:** The trends are similar to those in the `sync_interval = 20` column.

* **offline:** The trends are again similar to those in the `sync_interval = 20` column.
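The figure does not state how KL Divergence is estimated or between which two policies it is measured. As a minimal sketch, assuming per-sample log-probabilities under a reference policy and under the current policy are available, two common sample-based estimators could look like this. The function names `kl_k1` and `kl_k3` are hypothetical and are not taken from the source.

```python
import math


def kl_k1(logp_ref, logp_new):
    """Estimate KL(ref || new) as the mean of (log p_ref - log p_new).

    Assumes the samples were drawn from the reference policy.
    """
    if not logp_ref or len(logp_ref) != len(logp_new):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(r - n for r, n in zip(logp_ref, logp_new)) / len(logp_ref)


def kl_k3(logp_ref, logp_new):
    """Alternative estimator: mean of (ratio - 1) - log(ratio), ratio = p_new / p_ref.

    Each term is non-negative, so the estimate never drops below zero.
    """
    if not logp_ref or len(logp_ref) != len(logp_new):
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for r, n in zip(logp_ref, logp_new):
        log_ratio = n - r
        # expm1(x) computes exp(x) - 1, i.e. ratio - 1
        total += math.expm1(log_ratio) - log_ratio
    return total / len(logp_ref)
```

On a log-scale axis like the one in the figure, an estimate of 0.1 sits at 10^-1 and an estimate of 10 sits at 10^1.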

**Clipping Fraction:**

* **sync\_interval = 20:** REINFORCE and GRPO show relatively stable Clipping Fraction around 10^-1. REC-TwoSide-NoIS (0.2) and REC-TwoSide-IS (0.2) show a rapid increase in Clipping Fraction, reaching around 10^0. REC-Ring-NoIS (0.2, 2.0) shows a moderate increase, reaching around 10^-1.

* **sync\_offset = 10:** The trends are similar to those in the `sync_interval = 20` column.

* **offline:** The trends are again similar to those in the `sync_interval = 20` column.
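The figure does not define Clipping Fraction. A common definition in clipped policy-gradient methods is the share of samples whose probability ratio falls outside the clipping interval. The sketch below follows that definition and assumes, without confirmation from the source, that the `0.2` in the algorithm names is a symmetric clipping width. The function name and parameters are hypothetical.

```python
import math


def clipping_fraction(logp_new, logp_old, eps_low=0.2, eps_high=0.2):
    """Fraction of samples whose ratio p_new / p_old lies outside
    [1 - eps_low, 1 + eps_high].

    eps_low and eps_high default to 0.2 as an assumption, not a value
    confirmed by the figure.
    """
    if not logp_new or len(logp_new) != len(logp_old):
        raise ValueError("inputs must be non-empty and of equal length")
    clipped = 0
    for n, o in zip(logp_new, logp_old):
        ratio = math.exp(n - o)
        if ratio < 1.0 - eps_low or ratio > 1.0 + eps_high:
            clipped += 1
    return clipped / len(logp_new)


# Ratios 1.0, 1.3, 0.7, 1.1: two of the four fall outside [0.8, 1.2].
example = clipping_fraction(
    [math.log(1.0), math.log(1.3), math.log(0.7), math.log(1.1)],
    [0.0, 0.0, 0.0, 0.0],
)
```

Under this definition, a value near 10^0 means almost every sample is being clipped, while a value near 10^-1 means roughly one sample in ten is.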

### Key Observations

* REC-TwoSide-NoIS (0.2) consistently outperforms other algorithms in terms of Evaluation Accuracy and Training Reward across all conditions.

* REC-Ring-NoIS (0.2, 2.0) performs similarly to REC-TwoSide-NoIS (0.2), but slightly lower.

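* REINFORCE ranks third, with evaluation accuracy between roughly 0.4 and 0.55 and training reward around 0.7. Its evaluation accuracy fluctuates in the offline setting.

* REC-TwoSide-IS (0.2) plateaus at about 0.4 evaluation accuracy and 0.6 training reward. GRPO has the lowest evaluation accuracy, fluctuating around 0.2, even though its training reward reaches around 0.6.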

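* KL Divergence and Clipping Fraction are one to two orders of magnitude higher for REC-TwoSide-NoIS (0.2) and REC-TwoSide-IS (0.2) (around 10^1 and 10^0, respectively) than for REINFORCE and GRPO (around 10^-1 for both metrics), indicating much larger policy updates.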

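* The experimental condition (`sync_interval = 20`, `sync_offset = 10`, or `offline`) has little effect on the overall trends. The main exception is REINFORCE, whose final evaluation accuracy drops from about 0.55 under `sync_interval = 20` to about 0.4 in the offline setting.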
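The sensitivity to the experimental condition can be checked with a small calculation. The sketch below uses only the approximate final Evaluation Accuracy values read off the charts in the Detailed Analysis section. REC-Ring-NoIS (0.2, 2.0) is omitted because no number is given for it in the first column. The variable and function names are illustrative.

```python
# Approximate final evaluation accuracy per condition, as read from the charts.
final_eval_accuracy = {
    "REINFORCE": {"sync_interval = 20": 0.55, "sync_offset = 10": 0.50, "offline": 0.40},
    "REC-TwoSide-NoIS (0.2)": {"sync_interval = 20": 0.60, "sync_offset = 10": 0.60, "offline": 0.60},
    "REC-TwoSide-IS (0.2)": {"sync_interval = 20": 0.40, "sync_offset = 10": 0.40, "offline": 0.40},
    "GRPO": {"sync_interval = 20": 0.20, "sync_offset = 10": 0.20, "offline": 0.20},
}


def spread_across_conditions(values_by_condition):
    """Return max minus min across conditions, rounded to two decimals."""
    values = list(values_by_condition.values())
    return round(max(values) - min(values), 2)


spreads = {
    algorithm: spread_across_conditions(values)
    for algorithm, values in final_eval_accuracy.items()
}

for algorithm, spread in spreads.items():
    print(f"{algorithm}: spread = {spread}")
```

With these readings, only REINFORCE shows a nonzero spread (0.15). Since the inputs are visual estimates, small differences should not be over-interpreted.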

### Interpretation

The data suggest that REC-TwoSide-NoIS (0.2) is the most effective algorithm here, reaching the highest evaluation accuracy (around 0.6) and training reward (around 0.9) in all three conditions, with REC-Ring-NoIS (0.2, 2.0) close behind. Its high KL Divergence and Clipping Fraction indicate large policy updates, which could lead to instability, although no collapse is visible within the 150 steps shown. High KL Divergence alone does not explain the result: REC-TwoSide-IS (0.2) shows similarly high KL Divergence and Clipping Fraction but reaches only about 0.4 evaluation accuracy. The charts do not reveal why REC-TwoSide-NoIS (0.2) performs best, so the trade-off between performance and stability needs further investigation.

The relative ranking of the algorithms is largely unchanged across the three conditions, including the offline setting, which suggests that most of them are robust to the synchronization setup. REINFORCE is the exception, with lower and more erratic evaluation accuracy offline. GRPO's low evaluation accuracy (around 0.2) despite a training reward of about 0.6 may indicate that it is less effective in this setup or requires more careful tuning. Because KL Divergence and Clipping Fraction are plotted on log scales, the visual gaps between curves correspond to order-of-magnitude differences.