TECHNICAL ASSET FINGERPRINT

901ef09ce7a8ea694b1055cb



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

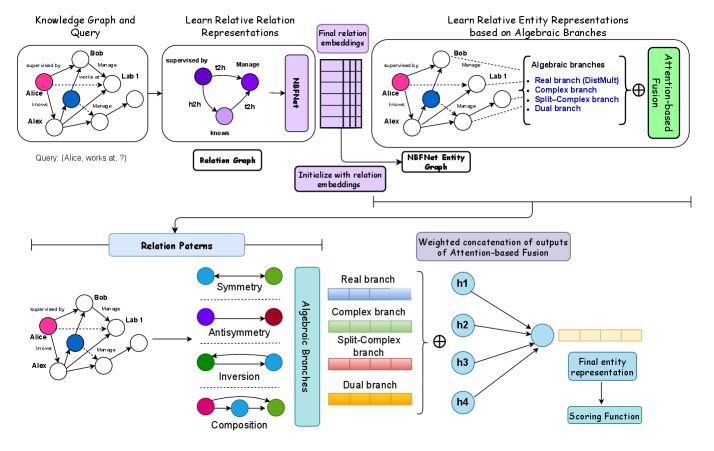

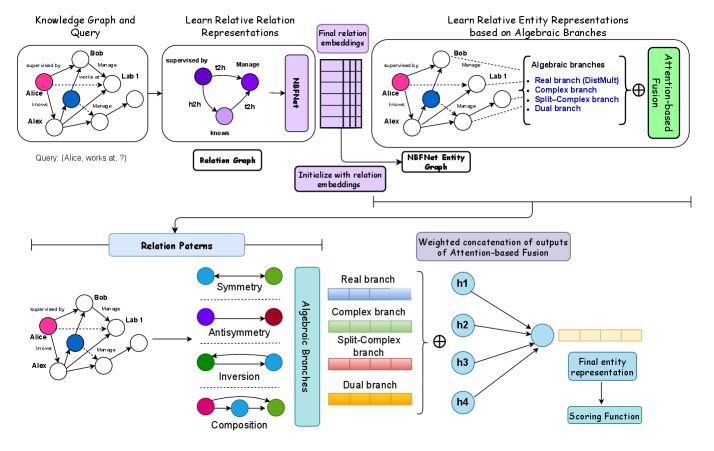

## Diagram: Knowledge Graph and Relative Representations

### Overview

The image is a diagram illustrating a process for learning relative entity representations based on algebraic branches, starting from a knowledge graph and query. It shows the flow of information and transformations through different stages, including relation representations, entity graphs, and algebraic branches, culminating in a final entity representation and scoring function.

### Components/Axes

* **Header:** Contains the titles of the different stages of the process.

    * "Knowledge Graph and Query" (top-left)

    * "Learn Relative Relation Representations" (top-center)

    * "Learn Relative Entity Representations based on Algebraic Branches" (top-right)

* **Knowledge Graph and Query:** A graph showing entities (Alice, Bob, Alex, Lab 1) and their relationships (supervised by, works at, knows, Manage). A query "(Alice, works at, ?)" is posed.

* **Relation Graph:** A graph showing relations (t2h, Manage, h2h, knows).

* **NBFNet:** A block representing a neural network used for relation and entity embeddings.

* **Algebraic Branches:** A set of branches representing different types of relationships:

    * Real branch (DistMult)

    * Complex branch

    * Split-Complex branch

    * Dual branch

* **Relation Patterns:** Shows different relation patterns: Symmetry, Antisymmetry, Inversion, Composition.

* **Attention-based Fusion:** A process for combining information from different branches.

* **Final Entity Representation:** The output of the process, representing the entities in a learned space.

* **Scoring Function:** A function used to evaluate the final entity representation.

### Detailed Analysis

1. **Knowledge Graph and Query:**

    * Entities: Alice (pink), Bob (white), Alex (white), Lab 1 (white).

    * Relations:

        * Bob supervised by Alice.

        * Bob works at Lab 1.

        * Alice knows Alex.

        * Bob manages Alice.

        * Alex manages Alice.

    * Query: (Alice, works at, ?)

2. **Learn Relative Relation Representations:**

    * Relation Graph:

        * Nodes: t2h (purple), Manage (purple), h2h (purple), knows (purple).

        * Edges: t2h -> Manage, t2h -> h2h.

    * NBFNet block transforms the relation graph into "Final relation embeddings".

3. **Learn Relative Entity Representations based on Algebraic Branches:**

    * NBFNet Entity Graph: Initialized with relation embeddings.

    * Algebraic Branches:

        * Real branch (DistMult) - light blue

        * Complex branch - light green

        * Split-Complex branch - light red

        * Dual branch - light orange

    * Attention-based Fusion: Combines the outputs of the algebraic branches.

4. **Relation Patterns:**

    * Symmetry: Blue node connected to green node with a double-headed dotted arrow.

    * Antisymmetry: Purple node connected to red node with a double-headed solid arrow.

    * Inversion: Green node connected to blue node with a double-headed solid arrow.

    * Composition: Pink node connected to green node with a curved arrow.

5. **Weighted concatenation of outputs of Attention-based Fusion:**

    * h1, h2, h3, h4 (light blue circles) are concatenated and fed into the "Final entity representation".

    * The "Final entity representation" is then used by the "Scoring Function".
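The four branches named above presumably differ in the multiplication rule of their number system. The following is a minimal sketch, assuming each embedding is stored as a pair of real arrays `(a, b)` standing for `a + b·u`, where the unit `u` squares to -1 (complex), +1 (split-complex), or 0 (dual). The function names are illustrative and are not taken from the diagram or from any library.

```python
import numpy as np

def real_mul(a, c):
    # Real branch: plain element-wise product, as in DistMult.
    return a * c

def complex_mul(a, b, c, d):
    # (a + b i)(c + d i) with i^2 = -1
    return a * c - b * d, a * d + b * c

def split_complex_mul(a, b, c, d):
    # (a + b j)(c + d j) with j^2 = +1
    return a * c + b * d, a * d + b * c

def dual_mul(a, b, c, d):
    # (a + b eps)(c + d eps) with eps^2 = 0
    return a * c, a * d + b * c

# Toy two-dimensional embeddings (made-up values).
a, b = np.array([1.0, 2.0]), np.array([3.0, -1.0])
c, d = np.array([0.5, 4.0]), np.array([2.0, 1.0])

real_out = real_mul(a, c)
complex_out = complex_mul(a, b, c, d)
split_out = split_complex_mul(a, b, c, d)
dual_out = dual_mul(a, b, c, d)
```

Only the first component differs between the three two-part systems; the second component is `a*d + b*c` in every case.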

### Key Observations

* The diagram illustrates a multi-stage process for learning entity representations.

* The process involves transforming a knowledge graph into relation and entity graphs.

* Algebraic branches are used to capture different types of relationships.

* Attention-based fusion is used to combine information from different branches.

### Interpretation

The diagram presents a method for learning entity representations from a knowledge graph by first learning relation representations and then passing entity representations through several algebraic branches. Each branch is meant to capture a different kind of relation pattern (the diagram lists symmetry, antisymmetry, inversion, and composition), and attention-based fusion lets the model weight the most relevant information from each branch before the outputs are combined. The resulting final entity representation feeds a scoring function and could be used for downstream tasks such as link prediction or entity classification.
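The diagram does not specify how the attention-based fusion is computed. One common form, shown here only as a sketch under that assumption, scores each branch output against a context vector with a scaled dot product, applies a softmax, and takes the weighted sum. The names `attention_fuse` and `context` are hypothetical.

```python
import numpy as np

def softmax(x):
    # Subtract the maximum for numerical stability.
    e = np.exp(x - np.max(x))
    return e / e.sum()

def attention_fuse(branch_outputs, context):
    # branch_outputs: (num_branches, dim), one row per algebraic branch.
    # context: (dim,), e.g. a query-dependent vector (an assumption).
    dim = branch_outputs.shape[1]
    scores = branch_outputs @ context / np.sqrt(dim)
    weights = softmax(scores)          # one weight per branch, sums to 1
    fused = weights @ branch_outputs   # weighted sum over branches
    return fused, weights

rng = np.random.default_rng(0)
branches = rng.normal(size=(4, 8))   # stand-ins for h1..h4
context = rng.normal(size=8)
fused, weights = attention_fuse(branches, context)
```

The weights are what would let such a model emphasize, say, one branch for one query and a different branch for another.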


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: NBFNet Entity Representation Learning

### Overview

This diagram illustrates the process of learning entity representations using the NBFNet (Neural Bellman-Ford Network) architecture, leveraging knowledge graphs and algebraic branches. The diagram is structured into three main sections: Knowledge Graph and Query, Learning Relative Representations, and Relation Patterns. It shows how a knowledge graph is used to learn relation embeddings, which are then used to generate entity representations based on algebraic branches, and finally fused using an attention mechanism.

### Components/Axes

The diagram consists of several interconnected components:

* **Knowledge Graph and Query:** Depicts a knowledge graph with entities (Alice, Bob, Lab 1) and relations (managed by, works at). A query "Alice, works at, ?" is shown.

* **Relation Graph:** Represents the learned relation embeddings (h1, h2).

* **NBFNet Entity Graph:** Shows the entity graph with learned embeddings.

* **Algebraic Branches:** Illustrates the four algebraic branches: Real branch (DistMult), Complex branch, Split-Complex branch, and Dual branch.

* **Attention-based Fusion:** Represents the fusion of outputs from the algebraic branches.

* **Relation Patterns:** Displays relation patterns like Symmetry, Antisymmetry, Inversion, and Composition.

* **Weighted concatenation of outputs of Attention-based Fusion:** Shows the final entity representation and scoring function.

### Detailed Analysis

**Section 1: Knowledge Graph and Query**

* The knowledge graph contains three entities: Alice (represented by a white circle), Bob (represented by a blue circle), and Lab 1 (represented by a green circle).

* Relations are represented by arrows:

    * Alice is "managed by" Bob.

    * Bob is "managed by" Lab 1.

    * Alice "works at" Lab 1.

* The query is: "Alice, works at, ?"

**Section 2: Learn Relative Relation Representations**

* The Relation Graph shows two relation embeddings: h1 (blue) and h2 (green).

* The NBFNet Entity Graph shows the same knowledge graph as Section 1, but with the entities now having associated embeddings.

* The "Initialize with relation embeddings" arrow indicates that the entity graph is initialized using the relation embeddings.

* The "Final relation embeddings" box represents the output of this stage.

**Section 3: Learn Relative Entity Representations based on Algebraic Branches**

* The Algebraic Branches section shows four branches:

    * Real branch (DistMult) - colored light orange.

    * Complex branch - colored light green.

    * Split-Complex branch - colored light blue.

    * Dual branch - colored yellow.

* Each branch receives input from the NBFNet Entity Graph.

* The outputs of the four branches (h1, h2, h3, h4) are fed into the "Attention-based Fusion" block.

**Section 4: Relation Patterns**

* The Relation Patterns section shows the knowledge graph again, with different colors representing different relation patterns:

    * Symmetry - blue circles and arrows.

    * Antisymmetry - green circles and arrows.

    * Inversion - magenta circles and arrows.

    * Composition - cyan circles and arrows.

**Section 5: Weighted concatenation of outputs of Attention-based Fusion**

* The outputs of the algebraic branches (h1, h2, h3, h4) are concatenated and fed into a "Final entity representation" block.

* The final entity representation is then used by a "Scoring Function".
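The last stage can be sketched as follows, assuming that "weighted concatenation" means scaling each branch output by its fusion weight before concatenating, and that the scoring function is a single linear layer. Both are assumptions; the diagram names the blocks but not their internals.

```python
import numpy as np

def weighted_concat(branch_outputs, weights):
    # Scale each branch output by its weight, then join them end to end.
    return np.concatenate([w * h for w, h in zip(weights, branch_outputs)])

def linear_score(final_repr, w, b=0.0):
    # Hypothetical scoring function: one linear layer giving a scalar.
    return float(final_repr @ w + b)

# Toy branch outputs h1..h4 (made-up values) and fusion weights.
h = [np.full(3, 1.0), np.full(3, 2.0), np.full(3, 3.0), np.full(3, 4.0)]
alpha = np.array([0.1, 0.2, 0.3, 0.4])

final_repr = weighted_concat(h, alpha)       # length 4 * 3 = 12
score = linear_score(final_repr, np.ones(12))
```

Concatenation keeps each branch in its own slice of the final vector, so the scoring layer can still treat the branches differently.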

### Key Observations

* The diagram highlights the use of algebraic branches to capture different aspects of relations.

* The attention-based fusion mechanism allows for combining the outputs of the different branches.

* The relation patterns section demonstrates how the learned embeddings can capture different relational properties.

* The diagram shows a clear flow of information from the knowledge graph to the final entity representation.

### Interpretation

The diagram illustrates a novel approach to entity representation learning by leveraging knowledge graphs and algebraic branches within the NBFNet framework. The core idea is to decompose relations into their algebraic components, learn embeddings for each component, and then fuse them using an attention mechanism to obtain a comprehensive entity representation. This approach allows the model to capture a richer understanding of relations and improve the quality of entity embeddings. The use of different relation patterns (Symmetry, Antisymmetry, Inversion, Composition) suggests that the model can learn to distinguish between different types of relations and encode this information into the entity representations. The final scoring function indicates that the learned entity representations can be used for downstream tasks such as link prediction or entity classification. The diagram effectively communicates the architecture and flow of information within the NBFNet model, providing a clear understanding of its key components and their interactions.
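Why several algebraic forms are useful can be checked on the two best-known cases. The sketch below uses the standard DistMult and ComplEx scoring forms with random toy embeddings; it is not a reimplementation of the model in the diagram. DistMult scores are always symmetric in head and tail, whereas a ComplEx score with a purely imaginary relation vector flips sign when head and tail are swapped.

```python
import numpy as np

def distmult_score(h, r, t):
    # Trilinear product over real vectors.
    return float(np.sum(h * r * t))

def complex_score(h, r, t):
    # Real part of the trilinear product with the tail conjugated.
    return float(np.real(np.sum(h * r * np.conj(t))))

rng = np.random.default_rng(1)

# Real-valued toy embeddings for DistMult.
h_real, r_real, t_real = rng.normal(size=(3, 4))
dm_forward = distmult_score(h_real, r_real, t_real)
dm_backward = distmult_score(t_real, r_real, h_real)

# Complex-valued toy embeddings; the relation is purely imaginary.
h_c = rng.normal(size=4) + 1j * rng.normal(size=4)
t_c = rng.normal(size=4) + 1j * rng.normal(size=4)
r_imag = 1j * rng.normal(size=4)
cx_forward = complex_score(h_c, r_imag, t_c)
cx_backward = complex_score(t_c, r_imag, h_c)
```

Here `dm_forward` equals `dm_backward`, so a purely real branch cannot tell (h, r, t) from (t, r, h), while `cx_forward` equals `-cx_backward`.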


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Knowledge Graph Processing Pipeline for Relation and Entity Representation Learning

### Overview

The image is a technical flowchart illustrating a two-stage machine learning pipeline for processing knowledge graphs. The first stage (top half) focuses on learning relative relation representations using a graph neural network (NBFNet). The second stage (bottom half) focuses on learning relative entity representations based on algebraic branches and attention-based fusion. The overall goal is to generate a final entity representation for a scoring function, likely for tasks like link prediction or query answering.

### Components/Axes

The diagram is organized into two primary horizontal sections, connected by a flow arrow.

**Top Section: Learn Relative Relation Representations**

* **Leftmost Box:** Titled "Knowledge Graph and Query". Contains a small knowledge graph diagram with entities (nodes) and relations (edges). A query is shown below: `Query: {Alice, works at, ?}`.

* **Middle Box:** Titled "Learn Relative Relation Representations". Contains a "Relation Graph" with nodes labeled `e1h`, `e2h`, `e1t`, `e2t` and edges labeled `supervises by`, `Manage`, `known`. An arrow points to a block labeled "NBFNet".

* **Rightmost Box:** Titled "Learn Relative Entity Representations based on Algebraic Branches". Contains a larger knowledge graph diagram and a list of "Algebraic branches": `Real branch (DistMult)`, `Complex branch`, `Split-Complex branch`, `Dual branch`. These feed into a green block labeled "Attention-based Fusion".

* **Connecting Elements:**

    * An arrow labeled "Final relation embeddings" connects the NBFNet output to the entity representation box.

    * A purple box labeled "Initialize with relation embeddings" points to a block labeled "NBFNet Entity Graph".

**Bottom Section: Relation Patterns and Entity Representation**

* **Left Side:** A knowledge graph diagram feeds into a block titled "Relation Patterns". This block lists four patterns with corresponding colored node pairs: `Symmetry` (blue-green), `Antisymmetry` (purple-red), `Inversion` (green-blue), `Composition` (pink-green).

* **Central Flow:** The "Relation Patterns" feed into a vertical block labeled "Algebraic Branches". This block contains four horizontal bars representing the branches: `Real branch` (blue), `Complex branch` (green), `Split-Complex branch` (red), `Dual branch` (orange).

* **Right Side:** The outputs of the four branches (labeled `h1`, `h2`, `h3`, `h4`) are shown as circles. They feed into a summation symbol (⊕) and then into a block labeled "Weighted concatenation of outputs of Attention-based Fusion". This produces a vector (yellow segmented bar) labeled "Final entity representation", which then points to a "Scoring Function".

### Detailed Analysis

The diagram describes a sequential and parallel processing flow:

1. **Input:** A knowledge graph and a query (e.g., `{Alice, works at, ?}`).

2. **Relation Learning Stage:**

    * A relation graph is constructed from the knowledge graph.

    * The NBFNet (Neural Bellman-Ford Network) processes this graph to produce "Final relation embeddings".

3. **Entity Learning Stage:**

    * The learned relation embeddings are used to initialize an "NBFNet Entity Graph".

    * Entity representations are learned using multiple "Algebraic branches" (Real/DistMult, Complex, Split-Complex, Dual). These branches likely apply different mathematical operations to model relation patterns.

    * The outputs of these branches are combined via an "Attention-based Fusion" mechanism.

4. **Pattern Integration:** The bottom section elaborates that the model explicitly considers fundamental "Relation Patterns" (Symmetry, Antisymmetry, Inversion, Composition) which are processed by the respective algebraic branches.

5. **Final Output:** The outputs (`h1` to `h4`) from the branches are weighted, concatenated, and fused to produce a "Final entity representation". This representation is then used by a "Scoring Function" to evaluate the query.
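How a relation graph might be built from the knowledge graph can be sketched as below. The construction is an assumption: relations become nodes, and two relations are linked when they share an entity, with the edge label recording whether the shared entity is a head or a tail on each side (`h2h`, `t2h`, `h2t`, `t2t`). The labels `h2h` and `t2h` appear in one of the descriptions of the diagram; the other two labels and the function name are hypothetical.

```python
from collections import defaultdict
from itertools import product

def build_relation_graph(triples):
    heads = defaultdict(set)  # relation -> entities seen as head
    tails = defaultdict(set)  # relation -> entities seen as tail
    for h, r, t in triples:
        heads[r].add(h)
        tails[r].add(t)

    relations = sorted(heads)
    edges = set()
    for r1, r2 in product(relations, repeat=2):
        if r1 == r2:
            continue
        if heads[r1] & heads[r2]:
            edges.add((r1, "h2h", r2))
        if tails[r1] & heads[r2]:
            edges.add((r1, "t2h", r2))
        if heads[r1] & tails[r2]:
            edges.add((r1, "h2t", r2))
        if tails[r1] & tails[r2]:
            edges.add((r1, "t2t", r2))
    return edges

# A few triples taken from the descriptions of the example graph.
triples = [
    ("Bob", "supervised by", "Alice"),
    ("Bob", "works at", "Lab 1"),
    ("Alice", "knows", "Alex"),
]
relation_edges = build_relation_graph(triples)
```

Because the relation graph refers only to relations and their interaction types, it contains no entity names.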

### Key Observations

* **Modular Design:** The pipeline is clearly modular, separating relation learning from entity learning and employing multiple parallel algebraic branches.

* **Pattern-Aware:** The model explicitly incorporates known relational logic patterns (Symmetry, etc.) into its architecture via the dedicated "Relation Patterns" block.

* **Hybrid Approach:** It combines graph neural networks (NBFNet) with algebraic embedding models (DistMult, ComplEx, etc.) and an attention mechanism.

* **Flow Direction:** The primary data flow is from left to right and top to bottom, starting with the raw graph/query and ending with a scored representation.

### Interpretation

This diagram outlines a sophisticated knowledge graph embedding model designed to answer complex queries. The key innovation appears to be the **decoupled learning of relation and entity representations** using a graph neural network (NBFNet) as a backbone, enhanced by **multi-branch algebraic processing** to capture diverse relational semantics.

* **What it suggests:** The model aims to be more expressive than single-branch models (like pure DistMult or ComplEx) by using an ensemble of algebraic branches, each potentially specializing in different relation types (e.g., the Real branch for symmetric relations, the Complex branch for antisymmetric ones). The attention-based fusion allows the model to dynamically weigh the importance of each branch's output for a given entity or query context.

* **How elements relate:** The "Relation Patterns" block acts as a conceptual guide for why multiple algebraic branches are needed. The NBFNet provides a powerful, message-passing-based method to propagate information through the graph, which is then refined by the algebraic branches. The final scoring function uses the fused, rich entity representation to make predictions.

* **Notable aspects:** The explicit mention of "Initialize with relation embeddings" for the entity graph suggests a tight coupling between the two learning stages, where relation information directly conditions entity representation learning. The overall architecture is complex, indicating it is designed for challenging knowledge graph completion tasks where capturing nuanced relational patterns is critical.
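The message-passing idea can be illustrated with a stripped-down Bellman-Ford style iteration. This sketch assumes an element-wise product as the message function and a sum as the aggregator, and it omits the learned layers and nonlinearities a real NBFNet would have. The function name, node ids, and relation ids are made up for the example.

```python
import numpy as np

def bellman_ford_propagate(num_nodes, edges, rel_emb, source, query_rel, num_layers=2):
    dim = rel_emb.shape[1]
    # Boundary condition: only the source node starts with a non-zero
    # state, set to the embedding of the query relation.
    boundary = np.zeros((num_nodes, dim))
    boundary[source] = rel_emb[query_rel]

    h = boundary.copy()
    for _ in range(num_layers):
        new_h = boundary.copy()
        for u, r, v in edges:
            # Message: state of u combined with the edge's relation embedding.
            new_h[v] += h[u] * rel_emb[r]
        h = new_h
    return h

# Nodes: 0 = Alice, 1 = Bob, 2 = Lab 1.
# Relation 0 is a hypothetical inverse of "supervised by"; 1 = "works at".
edges = [(0, 0, 1), (1, 1, 2)]
rel_emb = np.array([[1.0, 2.0], [3.0, 4.0]])
states = bellman_ford_propagate(3, edges, rel_emb, source=0, query_rel=1)
```

After two layers, the state of Lab 1 reflects the two-hop path from Alice through Bob, conditioned on the query relation.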


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Entity Representation Learning System Architecture

### Overview

The diagram illustrates a multi-stage system for learning relative entity representations using algebraic branches and attention-based fusion. It combines knowledge graph analysis, relation pattern recognition, and algebraic branch processing to generate final entity representations through weighted concatenation and scoring.

### Components/Axes

1. **Left Section (Knowledge Graph & Query)**

    - Entities: Alice (pink), Bob (blue), Alex (red), Lab 1 (gray)

    - Relations: "supervised by", "manages", "works at", "knows"

    - Query: "Alice works at ?"

2. **Middle Section (Relation Learning)**

    - Process: Learn Relative Relation Representations → Final Relation Embeddings

    - Tools: NBFNet (Neural Bellman-Ford Network)

3. **Right Section (Entity Representation)**

    - NBFNet Entity Graph with Algebraic Branches:

        - Real branch (DistMult)

        - Complex branch

        - Split-Complex branch

        - Dual branch

    - Attention-based Fusion

4. **Bottom Section (Algebraic Branches)**

    - Relation Patterns:

        - Symmetry (blue)

        - Antisymmetry (purple)

        - Inversion (green)

        - Composition (red)

    - Corresponding Algebraic Branches:

        - Real (blue)

        - Complex (green)

        - Split-Complex (red)

        - Dual (yellow)

### Detailed Analysis

1. **Knowledge Graph Processing**

    - Initial query resolution using entity relations

    - Relation patterns identified through graph traversal

2. **Algebraic Branch Processing**

    - Four distinct branches handle different relation properties:

        - Real branch (blue): Basic numerical operations

        - Complex branch (green): Handles antisymmetry

        - Split-Complex branch (red): Manages inversion

        - Dual branch (yellow): Processes composition

3. **Attention-Based Fusion**

    - Four attention heads (h1-h4) process branch outputs

    - Weighted concatenation combines branch representations

    - The final entity representation is passed to the scoring function

### Key Observations

1. **Color-Coded Branches**

    - Real branch (blue) consistently connects to symmetry patterns

    - Complex branch (green) links to antisymmetry

    - Split-Complex branch (red) associates with inversion

    - Dual branch (yellow) correlates with composition

2. **Flow Progression**

    - Top-to-bottom processing: Query → Relation Learning → Entity Representation

    - Bottom-up integration: Relation patterns → Algebraic branches → Final representation

3. **Attention Mechanism**

    - Four attention heads suggest multi-perspective processing

    - Weighted combination implies dynamic importance weighting

### Interpretation

This architecture demonstrates a sophisticated approach to knowledge graph embedding by:

1. **Multi-perspective Analysis**: Using four algebraic branches to capture different relation properties simultaneously

2. **Dynamic Attention**: Implementing attention mechanisms to weigh branch contributions contextually

3. **Pattern Recognition**: Explicitly modeling relation patterns (symmetry, antisymmetry, etc.) through specialized branches

The system appears designed for complex knowledge graph tasks requiring nuanced relation understanding, with particular strength in handling inverse and composed relations through specialized algebraic structures. The attention-based fusion suggests an adaptive approach to combining different relation perspectives based on query context.
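For a query such as (Alice, works at, ?), the scoring function would presumably assign one score per candidate entity, and the candidates would then be ranked. The sketch below shows that ranking step only. The scores are made-up numbers, and treating Lab 1 as the correct answer is an assumption for illustration; the diagram does not state the answer.

```python
import numpy as np

def rank_of_answer(scores, answer_idx):
    # Rank 1 is best: count candidates scored strictly higher than the answer.
    return int(np.sum(scores > scores[answer_idx])) + 1

entities = ["Alice", "Bob", "Alex", "Lab 1"]
scores = np.array([0.1, 0.3, 0.2, 0.9])  # illustrative values only

answer_rank = rank_of_answer(scores, entities.index("Lab 1"))
reciprocal_rank = 1.0 / answer_rank
```

Averaging the reciprocal rank over many queries gives mean reciprocal rank, a standard link prediction metric.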
