## Diagram: Knowledge Graph and Relative Representations

### Overview

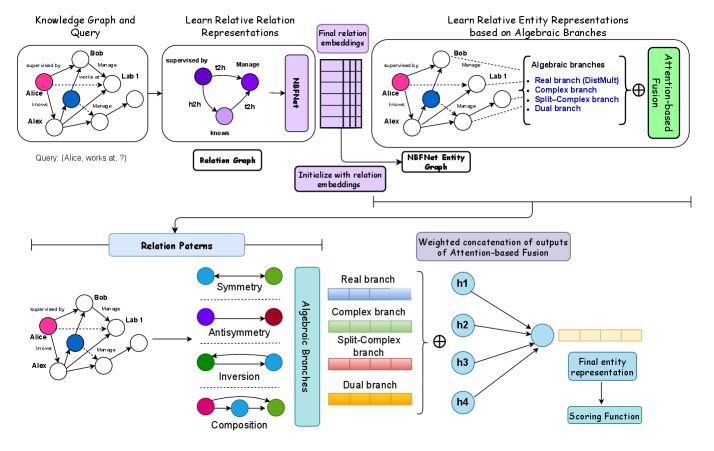

The diagram shows a three-stage pipeline for learning relative entity representations based on algebraic branches. Starting from a knowledge graph and a query, it first learns relative relation representations from a relation graph, then uses them to initialize an entity graph that is processed by several algebraic branches. The branch outputs are fused into a final entity representation, which is passed to a scoring function.

### Components/Axes

* **Header:** Contains the titles of the different stages of the process.

    * "Knowledge Graph and Query" (top-left)

    * "Learn Relative Relation Representations" (top-center)

    * "Learn Relative Entity Representations based on Algebraic Branches" (top-right)

* **Knowledge Graph and Query:** A graph showing entities (Alice, Bob, Alex, Lab 1) and their relationships (supervised by, works at, knows, Manage). A query "(Alice, works at, ?)" is posed.

* **Relation Graph:** A graph showing relations (t2h, Manage, h2h, knows).

* **NBFNet:** A block representing a neural network used for relation and entity embeddings.

* **Algebraic Branches:** Four parallel branches, one per number system:

    * Real branch (DistMult)

    * Complex branch

    * Split-Complex branch

    * Dual branch

* **Relation Patterns:** Shows different relation patterns: Symmetry, Antisymmetry, Inversion, Composition.

* **Attention-based Fusion:** A process for combining information from different branches.

* **Final Entity Representation:** The output of the process, representing the entities in a learned space.

* **Scoring Function:** The last block in the diagram; it takes the final entity representation as input.

### Detailed Analysis

1. **Knowledge Graph and Query:**

    * Entities: Alice (pink), Bob (white), Alex (white), Lab 1 (white).

    * Relations:

        * Bob supervised by Alice.

        * Bob works at Lab 1.

        * Alice knows Alex.

        * Bob manages Alice.

        * Alex manages Alice.

    * Query: (Alice, works at, ?)
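As a minimal sketch, the graph and query listed above can be written as plain (head, relation, tail) triples. The helper names `build_index` and `candidate_tails` are hypothetical and only illustrate what the `?` slot means; they are not part of the diagram.

```python
from collections import defaultdict

# Triples transcribed from the relation list above as (head, relation, tail).
TRIPLES = [
    ("Bob", "supervised by", "Alice"),
    ("Bob", "works at", "Lab 1"),
    ("Alice", "knows", "Alex"),
    ("Bob", "manages", "Alice"),
    ("Alex", "manages", "Alice"),
]

def build_index(triples):
    """Map each (head, relation) pair to the set of known tails."""
    index = defaultdict(set)
    for head, relation, tail in triples:
        index[(head, relation)].add(tail)
    return index

def candidate_tails(triples, query):
    """Return entities that could fill the '?' slot of (head, relation, ?)."""
    head, relation = query
    entities = {e for h, _, t in triples for e in (h, t)}
    known = build_index(triples)[(head, relation)]
    # Exclude the head itself and any tail that is already a known answer.
    return sorted(entities - {head} - known)

QUERY = ("Alice", "works at")
print(candidate_tails(TRIPLES, QUERY))  # ['Alex', 'Bob', 'Lab 1']
```

The graph contains no `works at` edge for Alice, so every other entity remains a candidate; ranking those candidates is the job of the later stages.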

2. **Learn Relative Relation Representations:**

    * Relation Graph:

        * Nodes: t2h (purple), Manage (purple), h2h (purple), knows (purple).

        * Edges: t2h -> Manage, t2h -> h2h.

    * NBFNet block transforms the relation graph into "Final relation embeddings".
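The diagram does not define the labels `h2h` and `t2h`. The sketch below assumes they mean "head-to-head" (two relations share a head entity) and "tail-to-head" (the tail of one relation is the head of another). Under that assumption, a relation graph could be derived from the triples roughly as follows; `relation_graph` is a hypothetical helper, not the diagram's actual construction.

```python
from itertools import product

# Same triples as in the knowledge graph, as (head, relation, tail).
TRIPLES = [
    ("Bob", "supervised by", "Alice"),
    ("Bob", "works at", "Lab 1"),
    ("Alice", "knows", "Alex"),
    ("Bob", "manages", "Alice"),
    ("Alex", "manages", "Alice"),
]

def relation_graph(triples):
    """Return edges (rel_a, interaction, rel_b) between distinct relations."""
    heads, tails = {}, {}
    for h, r, t in triples:
        heads.setdefault(r, set()).add(h)
        tails.setdefault(r, set()).add(t)
    edges = set()
    for a, b in product(heads, repeat=2):
        if a == b:
            continue
        if heads[a] & heads[b]:   # assumed meaning of h2h: shared head entity
            edges.add((a, "h2h", b))
        if tails[a] & heads[b]:   # assumed meaning of t2h: tail of a is head of b
            edges.add((a, "t2h", b))
    return edges

for edge in sorted(relation_graph(TRIPLES)):
    print(edge)
```

In this graph the nodes are relations rather than entities, which is what lets the learned relation representations depend only on how relations interact with one another.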

3. **Learn Relative Entity Representations based on Algebraic Branches:**

    * NBFNet Entity Graph: Initialized with relation embeddings.

    * Algebraic Branches:

        * Real branch (DistMult) - light blue

        * Complex branch - light green

        * Split-Complex branch - light red

        * Dual branch - light orange

    * Attention-based Fusion: Combines the outputs of the algebraic branches.
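The four branches differ in the number system they work in. The sketch below shows only the multiplication rule of each algebra: numbers of the form a + b·u, where u² is -1 (complex), +1 (split-complex), or 0 (dual), plus the plain element-wise real product used by DistMult-style models. How the branches apply these products inside the network is not shown in the diagram, so the function names and array layout here are assumptions.

```python
import numpy as np

# u*u for each two-component algebra.
UNIT_SQUARE = {"complex": -1.0, "split_complex": 1.0, "dual": 0.0}

def real_product(x, y):
    """Element-wise real product, as used in DistMult-style scoring."""
    return x * y

def algebra_product(x, y, unit_square):
    """Multiply arrays of numbers a + b*u, where u*u == unit_square.

    x and y have shape (..., 2); the last axis holds the (a, b) components.
    (a + b*u)(c + d*u) = (a*c + unit_square*b*d) + (a*d + b*c)*u
    """
    a, b = x[..., 0], x[..., 1]
    c, d = y[..., 0], y[..., 1]
    first = a * c + unit_square * b * d
    second = a * d + b * c
    return np.stack([first, second], axis=-1)

# The unit u squared in each algebra, written as (a, b) pairs.
u = np.array([0.0, 1.0])
for name, s in UNIT_SQUARE.items():
    print(name, algebra_product(u, u, s))
```

The only difference between the three two-component algebras is the sign (or absence) of the `b * d` term, which is what gives each branch a different bias toward particular relation patterns.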

4. **Relation Patterns:**

    * Symmetry: Blue node connected to green node with a double-headed dotted arrow.

    * Antisymmetry: Purple node connected to red node with a double-headed solid arrow.

    * Inversion: Green node connected to blue node with a double-headed solid arrow.

    * Composition: Pink node connected to green node with a curved arrow.
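For reference, the four patterns have standard set-based definitions over triples, independent of how the diagram draws them. The sketch below checks each pattern on a small toy graph; the toy triples and function names are hypothetical and do not come from the diagram. Note that a relation with no triples passes each check vacuously.

```python
def pairs(triples, relation):
    """All (head, tail) pairs connected by the given relation."""
    return {(h, t) for h, r, t in triples if r == relation}

def is_symmetric(triples, r):
    """(h, r, t) implies (t, r, h)."""
    p = pairs(triples, r)
    return all((t, h) in p for h, t in p)

def is_antisymmetric(triples, r):
    """(h, r, t) implies that (t, r, h) is absent."""
    p = pairs(triples, r)
    return all((t, h) not in p for h, t in p)

def is_inverse(triples, r1, r2):
    """(h, r1, t) holds exactly when (t, r2, h) holds."""
    return {(t, h) for h, t in pairs(triples, r1)} == pairs(triples, r2)

def is_composition(triples, r1, r2, r3):
    """(a, r1, b) and (b, r2, c) together imply (a, r3, c)."""
    p1, p2, p3 = (pairs(triples, r) for r in (r1, r2, r3))
    composed = {(a, c) for a, b in p1 for b2, c in p2 if b == b2}
    return composed <= p3

# Hypothetical toy graph for illustration only.
TOY = [
    ("a", "married to", "b"), ("b", "married to", "a"),
    ("a", "parent of", "c"), ("c", "parent of", "d"),
    ("c", "child of", "a"), ("d", "child of", "c"),
    ("a", "grandparent of", "d"),
]

print(is_symmetric(TOY, "married to"))                                  # True
print(is_antisymmetric(TOY, "parent of"))                               # True
print(is_inverse(TOY, "parent of", "child of"))                         # True
print(is_composition(TOY, "parent of", "parent of", "grandparent of"))  # True
```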

5. **Weighted concatenation of outputs of Attention-based Fusion:**

    * h1, h2, h3, h4 (light blue circles) are concatenated and fed into the "Final entity representation".

    * The "Final entity representation" is then used by the "Scoring Function".
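The diagram names the fusion step but not its exact form. One plausible reading, sketched below, computes a softmax attention weight per branch, scales each branch output h1..h4 by its weight, concatenates the results, and scores the concatenation with a linear layer. The dot-product attention against a query vector, the linear scorer, and all names and shapes are assumptions made for illustration.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D array."""
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

def fuse(branch_outputs, query_vector):
    """Weight and concatenate branch outputs.

    branch_outputs: array of shape (num_branches, d), one row per branch.
    query_vector:   array of shape (d,), assumed to drive the attention.
    Returns the concatenated representation (num_branches * d,) and the weights.
    """
    logits = branch_outputs @ query_vector         # one logit per branch
    weights = softmax(logits)                      # weights sum to 1
    weighted = weights[:, None] * branch_outputs   # scale each branch output
    return weighted.reshape(-1), weights

def score(final_repr, w):
    """Assumed linear scoring function on the final entity representation."""
    return float(final_repr @ w)

rng = np.random.default_rng(0)
h = rng.normal(size=(4, 8))       # stand-ins for h1..h4
q = rng.normal(size=8)            # hypothetical query vector
w = rng.normal(size=32)           # hypothetical scorer weights

final_repr, weights = fuse(h, q)
print(weights, score(final_repr, w))
```

Scaling before concatenation keeps each branch in its own slice of the final vector, so the scorer can still tell the branches apart while the attention weights control how much each one contributes.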

### Key Observations

* The diagram illustrates a multi-stage process for learning entity representations.

* The process involves transforming a knowledge graph into relation and entity graphs.

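* The four algebraic branches (real, complex, split-complex, dual) are used to capture different relation patterns, such as symmetry, antisymmetry, inversion, and composition.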

* Attention-based fusion is used to combine information from different branches.

### Interpretation

The diagram presents a method for learning entity representations from a knowledge graph by combining relative relation representations with several algebraic branches. Each branch offers a different way to model relations, which lets the model cover relation patterns such as symmetry, antisymmetry, inversion, and composition. The attention-based fusion step weights the branch outputs so that the model can selectively attend to the most relevant information from each branch. The final entity representation is passed to the scoring function to answer queries such as (Alice, works at, ?), and it could also be used for downstream tasks such as link prediction or entity classification.