
## Diagram: NBFNet Entity Representation Learning

### Overview

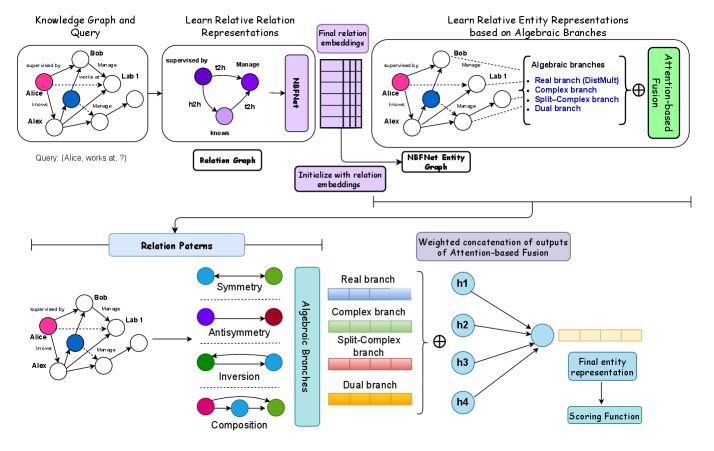

This diagram illustrates how entity representations are learned with an architecture built on NBFNet (Neural Bellman-Ford Network), combining a knowledge graph with several algebraic branches. The diagram is structured into three main sections: Knowledge Graph and Query, Learning Relative Representations, and Relation Patterns. A knowledge graph is used to learn relation embeddings. These embeddings initialize the NBFNet entity graph, entity representations are then computed in each algebraic branch, and the branch outputs are finally fused with an attention mechanism.

### Components/Axes

The diagram consists of several interconnected components:

* **Knowledge Graph and Query:** Depicts a knowledge graph with entities (Alice, Bob, Lab 1) and relations (managed by, works at). A query "Alice, works at, ?" is shown.

* **Relation Graph:** Represents the learned relation embeddings (h1, h2).

* **NBFNet Entity Graph:** Shows the entity graph with learned embeddings.

* **Algebraic Branches:** Illustrates the four algebraic branches: Real branch (DistMult), Complex branch, Split-Complex branch, and Dual branch.

* **Attention-based Fusion:** Represents the fusion of outputs from the algebraic branches.

* **Relation Patterns:** Displays relation patterns like Symmetry, Antisymmetry, Inversion, and Composition.

* **Weighted concatenation of outputs of Attention-based Fusion:** Shows the final entity representation and scoring function.

### Detailed Analysis or Content Details

**Section 1: Knowledge Graph and Query**

* The knowledge graph contains three entities: Alice (represented by a white circle), Bob (represented by a blue circle), and Lab 1 (represented by a green circle).

* Relations are represented by arrows:
    * Alice is "managed by" Bob.
    * Bob is "managed by" Lab 1.
    * Alice "works at" Lab 1.

* The query is: "Alice, works at, ?"
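Link prediction treats the query as a ranking problem: every entity is a candidate tail, and NBFNet-style models score a candidate using the relation paths that connect it to the query head. The following minimal sketch assumes the three triples above are the whole graph, holds out the queried fact, and enumerates the remaining relation paths from Alice. `TRIPLES` and `relation_paths` are hypothetical names, not taken from the diagram.

```python
from collections import deque

# Triples read off the diagram: (head, relation, tail).
TRIPLES = [
    ("Alice", "managed by", "Bob"),
    ("Bob", "managed by", "Lab 1"),
    ("Alice", "works at", "Lab 1"),
]


def relation_paths(triples, source, max_len=3):
    """Breadth-first enumeration of relation paths (tuples of relation names)
    from `source` to every reachable entity, following edge direction."""
    out = {}
    for h, r, t in triples:
        out.setdefault(h, []).append((r, t))
    paths = {}
    queue = deque([(source, ())])
    while queue:
        node, path = queue.popleft()
        if len(path) == max_len:  # cap path length so cycles terminate
            continue
        for r, t in out.get(node, []):
            new_path = path + (r,)
            paths.setdefault(t, []).append(new_path)
            queue.append((t, new_path))
    return paths


# Hold out the queried fact so the answer has to be inferred from the rest.
query = ("Alice", "works at")
observed = [tr for tr in TRIPLES if tr[:2] != query]
print(relation_paths(observed, "Alice"))
# {'Bob': [('managed by',)], 'Lab 1': [('managed by', 'managed by')]}
```

With the fact held out, Lab 1 is still reachable from Alice through the two-hop path managed by → managed by, which is the kind of evidence a path-based model can learn to use.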

**Section 2: Learn Relative Relation Representations**

* The Relation Graph shows two relation embeddings: h1 (blue) and h2 (green).

* The NBFNet Entity Graph shows the same knowledge graph as Section 1, but with the entities now having associated embeddings.

* The "Initialize with relation embeddings" arrow indicates that the entity graph is initialized using the relation embeddings.

* The "Final relation embeddings" box represents the output of this stage.
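The "Initialize with relation embeddings" step corresponds to NBFNet's boundary condition: the query head starts from the query-relation embedding, every other entity starts from zero, and generalized Bellman-Ford updates then propagate messages along edges. The simplified sketch below assumes fixed illustrative relation vectors, sum aggregation, and DistMult-style (elementwise product) messages. `REL`, `EDGES` and `bellman_ford` are hypothetical, and NBFNet's learned linear layers, nonlinearities and inverse edges are omitted.

```python
# Hypothetical 2-d relation embeddings standing in for the "final relation
# embeddings" of Section 2; the values are illustrative, not learned.
REL = {"managed by": [0.5, 1.0], "works at": [1.0, 0.5]}
# Observed edges (the queried fact "Alice works at Lab 1" is held out).
EDGES = [("Alice", "managed by", "Bob"), ("Bob", "managed by", "Lab 1")]
ENTITIES = ["Alice", "Bob", "Lab 1"]


def bellman_ford(edges, entities, rel, source, query_rel, num_layers=2):
    """Generalized Bellman-Ford pass in the style of NBFNet (simplified:
    sum aggregation, DistMult-style messages, no learned layers)."""
    dim = len(rel[query_rel])
    # Boundary condition: the query head holds the query-relation embedding,
    # every other entity starts from the zero vector.
    boundary = {e: [0.0] * dim for e in entities}
    boundary[source] = list(rel[query_rel])
    h = {e: list(v) for e, v in boundary.items()}
    for _ in range(num_layers):
        # Each layer starts again from the boundary term ...
        new_h = {e: list(boundary[e]) for e in entities}
        for x, r, v in edges:
            # ... and adds one message per incoming edge: the neighbour's
            # previous state multiplied elementwise by the relation vector.
            msg = [a * b for a, b in zip(h[x], rel[r])]
            new_h[v] = [a + b for a, b in zip(new_h[v], msg)]
        h = new_h
    return h


reps = bellman_ford(EDGES, ENTITIES, REL, source="Alice", query_rel="works at")
for entity, vec in reps.items():
    print(entity, vec)
# Alice [1.0, 0.5]
# Bob [0.5, 0.5]
# Lab 1 [0.25, 0.5]
```

Lab 1 only receives a non-zero representation in the second layer, because it is two hops from Alice. The representations are relative to the query head, so running from a different source yields different vectors.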

**Section 3: Learn Relative Entity Representations based on Algebraic Branches**

* The Algebraic Branches section shows four branches:
    * Real branch (DistMult) - colored light orange.
    * Complex branch - colored light green.
    * Split-Complex branch - colored light blue.
    * Dual branch - colored yellow.

* Each branch receives input from the NBFNet Entity Graph.

* The outputs of the four branches (h1, h2, h3, h4) are fed into the "Attention-based Fusion" block.
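The diagram names the four branches but gives no formulas. One common way to realize them, assumed here, is to multiply hidden states by relation embeddings coordinate-wise in each branch's number system, with DistMult as the real case. In the three two-component algebras a number is a + b·u, and they differ only in the value of u²: −1 (complex), +1 (split-complex), or 0 (dual). `ALGEBRAS`, `mul`, `real_message` and `branch_message` are hypothetical names.

```python
# A number a + b*u is stored as the pair (a, b). The algebra is fixed by the
# value of u*u:  complex -> -1,  split-complex -> +1,  dual -> 0.
ALGEBRAS = {"complex": -1, "split-complex": 1, "dual": 0}


def mul(x, y, square):
    """(a + b*u)(c + d*u) = (ac + square*bd) + (ad + bc)*u."""
    a, b = x
    c, d = y
    return (a * c + square * b * d, a * d + b * c)


def real_message(state, relation):
    """Real branch (DistMult-style): elementwise product of real vectors."""
    return [s * r for s, r in zip(state, relation)]


def branch_message(state, relation, algebra):
    """Same elementwise product, but each coordinate lives in the given algebra."""
    sq = ALGEBRAS[algebra]
    return [mul(s, r, sq) for s, r in zip(state, relation)]


state = [(1.0, 2.0)]
for name in ALGEBRAS:
    print(name, branch_message(state, [(3.0, 4.0)], name))
# complex [(-5.0, 10.0)]
# split-complex [(11.0, 10.0)]
# dual [(3.0, 10.0)]
```

The algebras give the relation different geometric effects. Multiplying by a unit complex number (a² + b² = 1) is a rotation that preserves a² + b²; multiplying by a split-complex number with a² − b² = 1 is a hyperbolic rotation that preserves a² − b²; multiplying by a dual number 1 + t·u keeps the real part and shifts the dual part by t times it, a shear.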

**Section 4: Relation Patterns**

* The Relation Patterns section shows the knowledge graph again, with different colors representing different relation patterns:
    * Symmetry - blue circles and arrows.
    * Antisymmetry - green circles and arrows.
    * Inversion - magenta circles and arrows.
    * Composition - cyan circles and arrows.
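The diagram lists the patterns without formulas. The choice of algebra matters for them, as the well-known DistMult and ComplEx scoring functions show; these are standard scores used here for illustration, not formulas specified in the diagram. The sketch covers symmetry, antisymmetry and inversion, and the vectors `h`, `t`, `r_sym`, `r_anti` are arbitrary illustrative values.

```python
def distmult(h, r, t):
    """DistMult score: sum_i h_i * r_i * t_i over real vectors."""
    return sum(a * b * c for a, b, c in zip(h, r, t))


def complex_score(h, r, t):
    """ComplEx-style score: Re(sum_i h_i * r_i * conj(t_i)) over complex vectors."""
    return sum(a * b * c.conjugate() for a, b, c in zip(h, r, t)).real


h = [1 + 2j, 0.5 - 1j]
t = [2 - 1j, 1 + 1j]
r_sym = [1 + 0j, 2 + 0j]   # purely real relation: scores are symmetric
r_anti = [1j, 1 + 1j]      # non-zero imaginary parts: argument order matters

# Symmetry: DistMult can never tell (h, r, t) from (t, r, h).
print(distmult([1.0, 2.0], [0.5, 3.0], [4.0, -1.0]),
      distmult([4.0, -1.0], [0.5, 3.0], [1.0, 2.0]))    # -4.0 -4.0
# ComplEx with a real relation is symmetric too ...
print(complex_score(h, r_sym, t), complex_score(t, r_sym, h))    # -1.0 -1.0
# ... but imaginary components break the symmetry, so antisymmetry is possible.
print(complex_score(h, r_anti, t), complex_score(t, r_anti, h))  # -4.0 3.0
# Inversion: the conjugate relation scores the reversed triple identically.
r_inv = [x.conjugate() for x in r_anti]
print(complex_score(h, r_anti, t), complex_score(t, r_inv, h))   # -4.0 -4.0
```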

**Section 5: Weighted concatenation of outputs of Attention-based Fusion**

* The attention-weighted outputs of the algebraic branches (h1, h2, h3, h4) are concatenated to form the "Final entity representation" block.

* The final entity representation is then passed to a "Scoring Function", which scores candidate answers to the query.
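The diagram states that the branch outputs are fused by attention and combined by weighted concatenation before scoring, but it does not give the attention or scoring formulas. The sketch below assumes dot-product attention against a hypothetical learned query vector, softmax weights, weighted concatenation, and a linear scoring function. `attention_fusion`, `linear_score`, `attn_query` and the branch vectors are all hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fusion(branch_outputs, attn_query):
    """Score each branch output against a query vector, turn the scores into
    softmax weights, and concatenate the weighted outputs."""
    logits = [sum(q * x for q, x in zip(attn_query, h)) for h in branch_outputs]
    weights = softmax(logits)
    fused = []
    for w, h in zip(weights, branch_outputs):
        fused.extend(w * x for x in h)
    return fused, weights


def linear_score(representation, weight):
    """Hypothetical scoring function: a dot product with a weight vector."""
    return sum(a * b for a, b in zip(representation, weight))


# Hypothetical outputs h1..h4 of the real, complex, split-complex and dual
# branches for one candidate entity (two-component parts flattened to reals).
branches = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]
attn_query = [1.0, 1.0]
fused, weights = attention_fusion(branches, attn_query)
print([round(w, 3) for w in weights])
print(len(fused), round(linear_score(fused, [1.0] * len(fused)), 3))
```

Concatenating the weighted outputs, rather than summing them, keeps each branch in its own coordinates, so the scoring function can still distinguish features from different algebras. For link prediction, the score would be computed for every candidate tail entity and the candidates ranked.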

### Key Observations

* The diagram highlights the use of algebraic branches (real, complex, split-complex, and dual) to model relations in several number systems.

* The attention-based fusion mechanism combines the outputs of the different branches, weighting each branch's contribution.

* The Relation Patterns section indicates which relational properties the learned embeddings are intended to capture.

* The diagram shows a clear flow of information from the knowledge graph to the final entity representation.

### Interpretation

The diagram presents an approach to entity representation learning that combines knowledge-graph message passing in the NBFNet framework with several algebraic branches. Rather than embedding relations in a single space, the model represents them in different number systems (real, complex, split-complex, and dual), computes entity representations in each branch, and fuses the branch outputs with an attention mechanism into a single entity representation. The listed relation patterns (Symmetry, Antisymmetry, Inversion, Composition) suggest the motivation: different algebras suit different patterns (for example, the real-valued DistMult score is unchanged when head and tail are swapped, so it cannot express antisymmetry), and fusing the branches lets the model draw on whichever algebra fits a given relation. The scoring function applied to the query "Alice, works at, ?" indicates that the learned representations are used for link prediction, that is, ranking candidate tail entities.