
## Diagram: Reasoning and Policy Enhancement Pipeline

### Overview

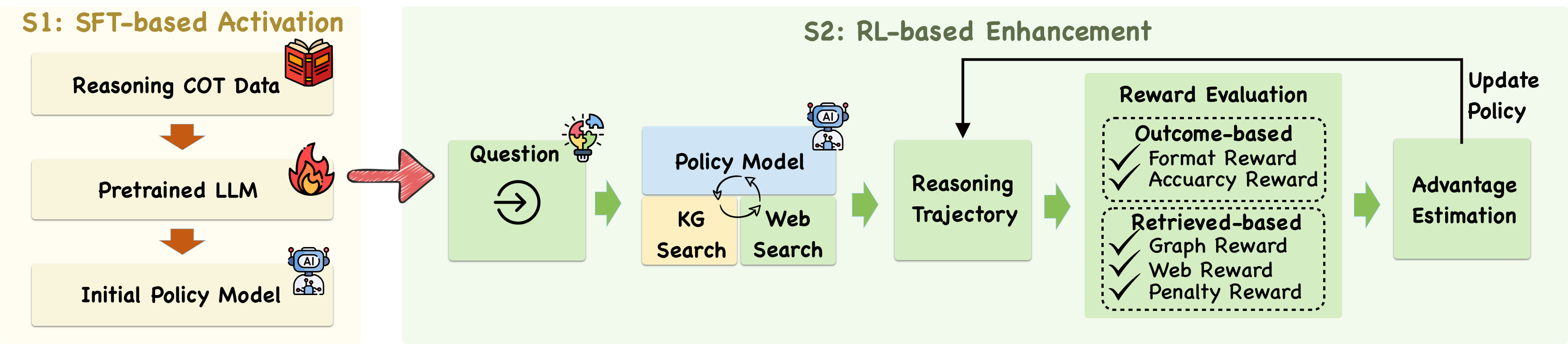

The image depicts a two-stage pipeline for enhancing a policy model using Supervised Fine-Tuning (SFT) followed by Reinforcement Learning (RL). The pipeline takes an initial policy model, refines it through reasoning and question answering, and then further improves it using reward evaluation and policy updates. The diagram is horizontally oriented, with the pipeline flowing from left to right.

### Components/Axes

The diagram is divided into two main stages labeled "S1: SFT-based Activation" and "S2: RL-based Enhancement". Within each stage, several components are interconnected by arrows indicating the flow of information. Key components include: Reasoning COT Data, Pretrained LLM, Initial Policy Model, Question, Policy Model, KG Search, Web Search, Reasoning Trajectory, Reward Evaluation, Outcome-based, Format Reward, Accuracy Reward, Retrieved-based, Graph Reward, Web Reward, Penalty Reward, Advantage Estimation, and Update Policy.

### Detailed Analysis

**Stage 1: SFT-based Activation**

* **Initial Policy Model:** Represented by a robot icon, this is the starting point of the pipeline.

* **Pretrained LLM:** An oval shape containing the text "Pretrained LLM". An arrow points from the Initial Policy Model to the Pretrained LLM.

* **Reasoning COT Data:** A book icon labeled "Reasoning COT Data". An arrow points from the Pretrained LLM to the Reasoning COT Data.

* **Question:** A magnifying glass with a question mark inside, labeled "Question". An arrow points from Reasoning COT Data to the Question.

* **Policy Model:** A brain icon labeled "Policy Model". Two arrows point to the Policy Model: one from the Question and another from "KG Search" and "Web Search".

* **KG Search:** Labeled "KG Search".

* **Web Search:** Labeled "Web Search".
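The Question, Policy Model, KG Search, and Web Search components listed above could interact roughly as in the following minimal sketch. The diagram does not specify any interface, so every name here (`Trajectory`, `rollout`, `toy_policy`, the `kg_search` and `web_search` tool keys) is a hypothetical stand-in, and the loop structure is an assumption about how a policy model might interleave retrieval calls while building a reasoning trajectory.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Trajectory:
    """Hypothetical record of one rollout: the question, the steps taken, the answer."""
    question: str
    steps: List[Tuple[str, str]] = field(default_factory=list)  # (kind, text)
    answer: str = ""


def rollout(
    question: str,
    policy_step: Callable[[Trajectory], Tuple[str, str]],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 4,
) -> Trajectory:
    """Ask the policy for an action until it answers or max_steps is reached."""
    traj = Trajectory(question)
    for _ in range(max_steps):
        action, payload = policy_step(traj)
        if action == "answer":
            traj.answer = payload
            break
        if action not in tools:
            raise ValueError(f"unknown action: {action}")
        # Record the tool call, then the observation it returned.
        traj.steps.append((action, payload))
        traj.steps.append(("observation", tools[action](payload)))
    return traj


def toy_policy(traj: Trajectory) -> Tuple[str, str]:
    """Stand-in for the policy model: query the KG, then the web, then answer."""
    used = [kind for kind, _ in traj.steps]
    if "kg_search" not in used:
        return "kg_search", traj.question
    if "web_search" not in used:
        return "web_search", traj.question
    return "answer", "Paris"


# Assumed tool stubs; a real system would call a knowledge graph and a search API.
tools = {
    "kg_search": lambda q: f"KG facts for: {q}",
    "web_search": lambda q: f"Web snippets for: {q}",
}

traj = rollout("What is the capital of France?", toy_policy, tools)
```

Passing the tools as a dictionary keeps the loop independent of which retrieval sources exist, which matches the diagram's presentation of KG Search and Web Search as parallel options.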

**Stage 2: RL-based Enhancement**

* **Reasoning Trajectory:** Labeled "Reasoning Trajectory". An arrow points from the Policy Model to the Reasoning Trajectory.

* **Reward Evaluation:** A dashed box labeled "Reward Evaluation". Inside the box are several reward components:

    * **Outcome-based:** Labeled "Outcome-based" with a checkmark.

    * **Format Reward:** Labeled "Format Reward" with a checkmark.

    * **Accuracy Reward:** Labeled "Accuracy Reward" with a checkmark.

    * **Retrieved-based:** Labeled "Retrieved-based" with a checkmark.

    * **Graph Reward:** Labeled "Graph Reward" with a checkmark.

    * **Web Reward:** Labeled "Web Reward" with a checkmark.

    * **Penalty Reward:** Labeled "Penalty Reward" with a checkmark.

* **Advantage Estimation:** Labeled "Advantage Estimation". An arrow points from the Reward Evaluation to the Advantage Estimation.

* **Update Policy:** Labeled "Update Policy". An arrow points from the Advantage Estimation to the Update Policy, completing the loop.
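One way the reward components named in the Reward Evaluation box might be combined into a single scalar is a weighted sum, sketched below. The diagram names the components but gives no formula, so the weighted-sum form, the weight values, the input signals, and the grouping of Format and Accuracy under "Outcome-based" and of Graph, Web, and Penalty under "Retrieved-based" are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    """Hypothetical weights; the diagram does not give any values."""
    w_format: float = 0.5
    w_accuracy: float = 1.0
    w_graph: float = 0.25
    w_web: float = 0.25
    w_penalty: float = 0.5


def total_reward(
    format_ok: bool,
    answer_correct: bool,
    graph_hits: int,
    web_hits: int,
    violations: int,
    w: RewardWeights = RewardWeights(),
) -> float:
    """Combine the component rewards into one scalar (assumed weighted sum)."""
    # Outcome-based part: format and accuracy of the final answer.
    outcome = w.w_format * float(format_ok) + w.w_accuracy * float(answer_correct)
    # Retrieval-based part: useful graph and web retrievals, minus penalties.
    retrieval = w.w_graph * graph_hits + w.w_web * web_hits - w.w_penalty * violations
    return outcome + retrieval
```

For example, under these assumed weights `total_reward(True, True, 1, 1, 0)` evaluates to `2.0`, while a malformed, incorrect trajectory with two violations scores `-1.0`.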

### Key Observations

The diagram highlights a cyclical process where the policy is continuously updated based on reward evaluation. The use of both KG Search and Web Search suggests a hybrid approach to information retrieval. The Reward Evaluation component is quite detailed, indicating a multifaceted reward system.
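The Advantage Estimation step that links rewards to policy updates is not specified in the diagram. As an illustration only, the sketch below uses group-relative normalization: several trajectories are sampled for the same question, and each reward is standardized against the group's mean and standard deviation. The estimator choice and the function name `group_relative_advantages` are assumptions, not something the diagram states.

```python
from statistics import mean, pstdev
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Standardize each reward against its group (assumed estimator).

    `rewards` holds the scalar rewards of trajectories sampled for one question
    and must be non-empty. `eps` avoids division by zero when all rewards match.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population standard deviation of the group
    return [(r - mu) / (sigma + eps) for r in rewards]


advantages = group_relative_advantages([2.0, 1.0, 0.0])
```

Trajectories that score above the group mean receive positive advantages and those below receive negative ones, so a policy-gradient update would make the former more likely and the latter less likely.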

### Interpretation

The diagram describes a two-stage approach to training a policy model. The SFT stage uses a pretrained LLM and reasoning COT data to produce an initial policy. The RL stage then refines this policy using reward feedback on its reasoning trajectories. The reward design covers not only the correctness of the outcome but also output format, retrieval sources (graph and web), and penalties. The inclusion of KG Search and Web Search indicates that reasoning is coupled with knowledge retrieval. The loop in the RL stage implies iterative policy improvement. The diagram gives no data or numerical values; it presents a conceptual framework for the training process.