## Bar Chart: MetaQA Hit@1 Scores (Mean ± Std) for Our Method and Baselines

### Overview

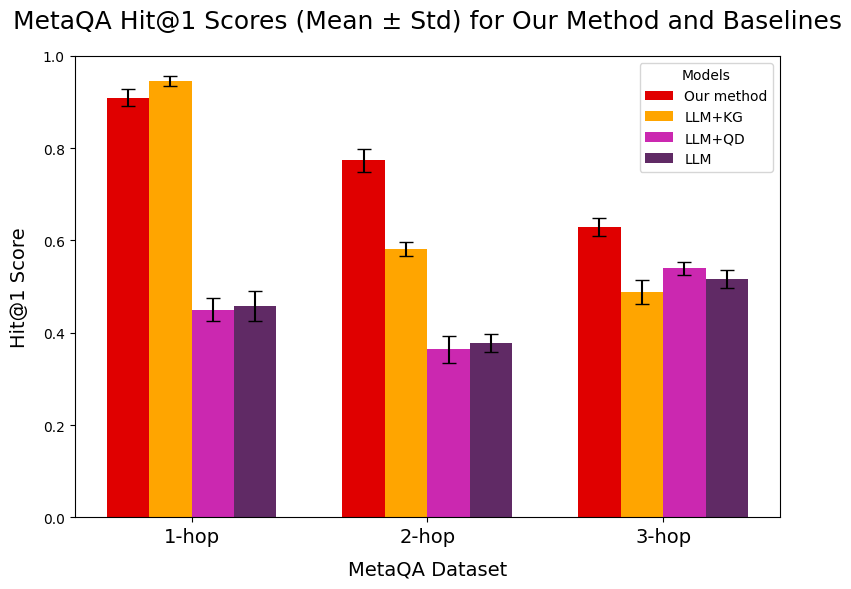

This bar chart displays the Hit@1 scores, presented as mean values with standard deviation error bars, for four different models across three "hop" categories of the MetaQA dataset. The models are "Our method", "LLM+KG", "LLM+QD", and "LLM". The x-axis represents the MetaQA Dataset categories ("1-hop", "2-hop", "3-hop"), and the y-axis represents the Hit@1 Score, ranging from 0.0 to 1.0.

### Components/Axes

* **Title:** "MetaQA Hit@1 Scores (Mean ± Std) for Our Method and Baselines"

* **X-axis Title:** "MetaQA Dataset"

* **X-axis Labels:** "1-hop", "2-hop", "3-hop"

* **Y-axis Title:** "Hit@1 Score"

* **Y-axis Scale:** 0.0 to 1.0, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Legend:** Located in the top-right corner of the chart.

  * **Title:** "Models"

  * **Entries:**

    * Red square: "Our method"

    * Orange square: "LLM+KG"

    * Magenta square: "LLM+QD"

    * Purple square: "LLM"

### Detailed Analysis

The chart groups its bars by "hop" category. Each group contains four bars, one per model.

**1-hop:**

* **Our method (Red):** The bar reaches approximately 0.93. The error bar extends from approximately 0.91 to 0.95.

* **LLM+KG (Orange):** The bar reaches approximately 0.96. The error bar extends from approximately 0.95 to 0.97.

* **LLM+QD (Magenta):** The bar reaches approximately 0.45. The error bar extends from approximately 0.43 to 0.47.

* **LLM (Purple):** The bar reaches approximately 0.47. The error bar extends from approximately 0.45 to 0.49.

**2-hop:**

* **Our method (Red):** The bar reaches approximately 0.78. The error bar extends from approximately 0.77 to 0.79.

* **LLM+KG (Orange):** The bar reaches approximately 0.59. The error bar extends from approximately 0.58 to 0.60.

* **LLM+QD (Magenta):** The bar reaches approximately 0.37. The error bar extends from approximately 0.36 to 0.38.

* **LLM (Purple):** The bar reaches approximately 0.39. The error bar extends from approximately 0.38 to 0.40.

**3-hop:**

* **Our method (Red):** The bar reaches approximately 0.63. The error bar extends from approximately 0.62 to 0.64.

* **LLM+KG (Orange):** The bar reaches approximately 0.50. The error bar extends from approximately 0.49 to 0.51.

* **LLM+QD (Magenta):** The bar reaches approximately 0.54. The error bar extends from approximately 0.53 to 0.55.

* **LLM (Purple):** The bar reaches approximately 0.52. The error bar extends from approximately 0.51 to 0.53.
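The readings above can be collected into a small table so that the per-category ranking is recomputed rather than judged by eye. The following is a minimal sketch: the numbers are the approximate bar heights listed in this section, not exact values from the underlying experiments, and the names `HIT_AT_1`, `rank_models`, `leaders`, and `lead_margin` are hypothetical helpers introduced only for illustration.

```python
# Approximate mean Hit@1 values read off the chart (visual estimates,
# not the exact numbers behind the figure).
HIT_AT_1 = {
    "1-hop": {"Our method": 0.93, "LLM+KG": 0.96, "LLM+QD": 0.45, "LLM": 0.47},
    "2-hop": {"Our method": 0.78, "LLM+KG": 0.59, "LLM+QD": 0.37, "LLM": 0.39},
    "3-hop": {"Our method": 0.63, "LLM+KG": 0.50, "LLM+QD": 0.54, "LLM": 0.52},
}


def rank_models(scores):
    """Return model names sorted from highest to lowest Hit@1."""
    return sorted(scores, key=scores.get, reverse=True)


def leaders(table):
    """Map each hop category to its top-scoring model."""
    return {hop: rank_models(scores)[0] for hop, scores in table.items()}


def lead_margin(scores):
    """Gap between the best and second-best model, rounded to 2 decimals."""
    first, second = rank_models(scores)[:2]
    return round(scores[first] - scores[second], 2)


if __name__ == "__main__":
    for hop, scores in HIT_AT_1.items():
        print(hop, rank_models(scores), "margin:", lead_margin(scores))
```

Because the inputs are estimates with a reading error of about 0.01, small margins (such as the 1-hop gap between the top two models) should be treated as approximate.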

### Key Observations

* **Overall Performance:** "Our method" achieves the highest Hit@1 scores in the 2-hop and 3-hop categories. In the 1-hop category, "LLM+KG" is slightly higher (approximately 0.96 versus 0.93).

* **Performance Drop with Hops:** For "Our method" and "LLM+KG", Hit@1 scores decrease as the number of hops increases: "Our method" falls from approximately 0.93 to 0.78 to 0.63, and "LLM+KG" falls from approximately 0.96 to 0.59 to 0.50.

* **LLM-based Models:** The "LLM+QD" and "LLM" models show significantly lower performance compared to "Our method" and "LLM+KG" in the 1-hop and 2-hop categories.

* **3-hop Anomaly:** In the 3-hop category, "LLM+QD" and "LLM" score noticeably higher than in the 2-hop category (approximately 0.54 and 0.52, up from 0.37 and 0.39), and both slightly exceed "LLM+KG" (approximately 0.50). "Our method" still maintains the lead.

* **Standard Deviation:** The standard deviations (indicated by error bars) are small across all models and categories, roughly ±0.01 to ±0.02, suggesting low variability around each reported mean.
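The trend and error-bar statements in this list can be checked with a few lines of arithmetic. The sketch below uses the approximate values read from the chart and assumes, as the chart title suggests, that each error bar spans the mean plus and minus one standard deviation. The names `MEANS`, `hop_deltas`, `strictly_decreasing`, and `std_from_error_bar` are hypothetical and used only for illustration.

```python
# Approximate mean Hit@1 per model, ordered as (1-hop, 2-hop, 3-hop).
# These are visual estimates from the chart, not exact reported values.
MEANS = {
    "Our method": (0.93, 0.78, 0.63),
    "LLM+KG": (0.96, 0.59, 0.50),
    "LLM+QD": (0.45, 0.37, 0.54),
    "LLM": (0.47, 0.39, 0.52),
}


def hop_deltas(means):
    """Change in Hit@1 between consecutive hop categories (2 decimals)."""
    return [round(b - a, 2) for a, b in zip(means, means[1:])]


def strictly_decreasing(means):
    """True if the score drops at every step from 1-hop to 3-hop."""
    return all(b < a for a, b in zip(means, means[1:]))


def std_from_error_bar(lower, upper):
    """Half-width of an error bar, assuming it spans mean +/- 1 std."""
    return round((upper - lower) / 2, 2)


if __name__ == "__main__":
    for model, means in MEANS.items():
        print(model, hop_deltas(means), strictly_decreasing(means))
    # 1-hop error bar for "Our method" spans roughly 0.91 to 0.95.
    print(std_from_error_bar(0.91, 0.95))
```

Under these readings, only "Our method" and "LLM+KG" decline at every step, while "LLM+QD" and "LLM" dip at 2-hop and rise again at 3-hop.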

### Interpretation

This chart shows that "Our method" achieves higher Hit@1 scores than all three baselines on the 2-hop and 3-hop questions of the MetaQA dataset, while "LLM+KG" is slightly ahead on 1-hop questions (approximately 0.96 versus 0.93). The large gap between "Our method" and the "LLM+QD" and "LLM" baselines in the 1-hop and 2-hop scenarios (roughly 0.4 to 0.5 in Hit@1) suggests that "Our method" handles these questions considerably better than those two baselines.

The observed drop in performance for "Our method" and "LLM+KG" with increasing hops is a common challenge in question-answering systems, as multi-hop reasoning is inherently more difficult. In contrast, "LLM+QD" and "LLM" improve from 2-hop to 3-hop (by roughly 0.17 and 0.13, respectively) and slightly surpass "LLM+KG" there, although they remain about 0.1 behind "Our method". This pattern might indicate different strengths or weaknesses in their underlying architectures or training data.

The data suggests that "Our method" is the strongest of the four approaches on multi-hop MetaQA questions (2-hop and 3-hop) and is competitive with the best baseline, "LLM+KG", on 1-hop questions. The narrow error bars indicate a reasonable level of confidence in the reported mean scores.