
## Diagram: AI Agent Architecture

### Overview

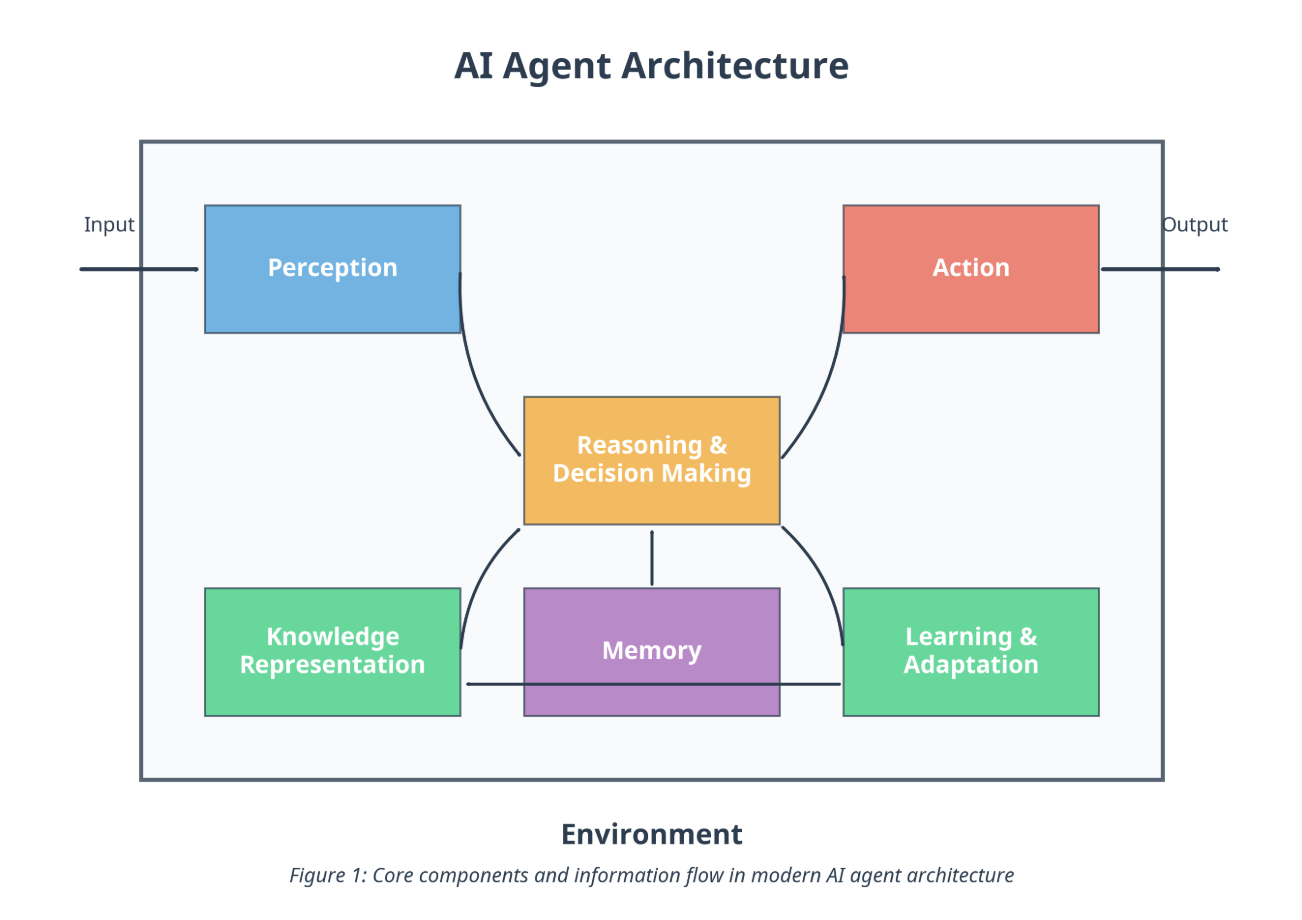

The image is a technical block diagram illustrating the core components and information flow within a modern AI agent architecture. The diagram is enclosed within a large rectangular boundary representing the agent's "Environment." It depicts six primary functional modules connected by lines indicating data or control flow, with external input and output points.

### Components/Axes

**Title:** "AI Agent Architecture" (centered at the top).

**Boundary:** A large rectangle enclosing all components, with the label "Environment" at its bottom center.

**Internal Components (Boxes):**

1. **Perception** (Blue box, top-left quadrant).

2. **Action** (Red box, top-right quadrant).

3. **Reasoning & Decision Making** (Orange box, center).

4. **Knowledge Representation** (Green box, bottom-left quadrant).

5. **Memory** (Purple box, bottom-center).

6. **Learning & Adaptation** (Green box, bottom-right quadrant).

**External Interfaces:**

* **Input:** An arrow pointing from the left edge of the "Environment" boundary into the "Perception" box.

* **Output:** An arrow pointing from the "Action" box to the right edge of the "Environment" boundary.

**Caption:** "Figure 1: Core components and information flow in modern AI agent architecture" (centered below the diagram).

### Detailed Analysis

**Component Layout and Connections:**

* The **Perception** module (top-left) receives external **Input**. A curved line connects it downward to the central **Reasoning & Decision Making** module.

* The **Action** module (top-right) generates external **Output**. A curved line connects it to the central **Reasoning & Decision Making** module below it.

* The **Reasoning & Decision Making** module (center) is the central hub. Curved lines connect it to both **Perception** and **Action**, and a direct vertical line connects it downward to the **Memory** module.

* The three lower modules are interconnected:

    * **Knowledge Representation** (bottom-left) is connected by a horizontal line to **Memory** (bottom-center).

    * **Memory** (bottom-center) is connected by a horizontal line to **Learning & Adaptation** (bottom-right).

* Additionally, **Reasoning & Decision Making** has curved lines connecting it directly to both **Knowledge Representation** and **Learning & Adaptation**.

**Flow Direction:** The primary flow is from Input → Perception → Reasoning & Decision Making → Action → Output. The lower modules (Knowledge, Memory, Learning) form a supportive subsystem that interacts bidirectionally with the central reasoning module and with each other.
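The connections listed above can be written down as a small undirected graph, which makes the layout easy to check programmatically. The following is a minimal sketch in Python, not part of the diagram: the names `EDGES`, `build_adjacency`, `is_valid_path`, and `hub` are hypothetical, and treating every line as undirected is an assumption based on the lines being drawn without arrowheads.

```python
from collections import defaultdict

# One entry per line drawn in the diagram, as described in the text.
# Edges are treated as undirected (assumption: the lines have no arrowheads).
EDGES = [
    ("Perception", "Reasoning & Decision Making"),
    ("Action", "Reasoning & Decision Making"),
    ("Reasoning & Decision Making", "Memory"),
    ("Knowledge Representation", "Memory"),
    ("Memory", "Learning & Adaptation"),
    ("Reasoning & Decision Making", "Knowledge Representation"),
    ("Reasoning & Decision Making", "Learning & Adaptation"),
]


def build_adjacency(edges):
    """Return a dict mapping each module to the set of its neighbours."""
    adjacency = defaultdict(set)
    for a, b in edges:
        adjacency[a].add(b)
        adjacency[b].add(a)
    return dict(adjacency)


def is_valid_path(path, adjacency):
    """True if every consecutive pair of modules in `path` shares a line."""
    return all(b in adjacency[a] for a, b in zip(path, path[1:]))


def hub(adjacency):
    """Return the module with the most direct connections."""
    return max(adjacency, key=lambda module: len(adjacency[module]))


ADJACENCY = build_adjacency(EDGES)

# Internal part of the primary flow (Input and Output are external interfaces).
PRIMARY_FLOW = ["Perception", "Reasoning & Decision Making", "Action"]

if __name__ == "__main__":
    print("Hub:", hub(ADJACENCY))
    print("Primary flow follows drawn lines:", is_valid_path(PRIMARY_FLOW, ADJACENCY))
```

Under these assumptions the graph has six modules and seven lines, and the primary flow follows lines that are actually drawn; Perception and Action have no direct line between them, so any path from one to the other passes through the reasoning module.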

### Key Observations

1. **Central Hub:** The "Reasoning & Decision Making" module is the architectural core, directly interfacing with all other internal modules.

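2. **Symmetry:** The layout is symmetrical about the vertical axis: Perception and Action sit at the top left and right, Knowledge Representation and Learning & Adaptation sit at the bottom left and right, and the Reasoning & Decision Making and Memory modules are stacked along the center.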

3. **Bidirectional Communication:** Most connections are represented by lines without arrowheads, implying bidirectional data flow or interaction rather than a strict one-way pipeline.

4. **Color Coding:** Components are color-coded by function: Blue (Perception), Red (Action), Orange (Reasoning), Green (Knowledge & Learning), Purple (Memory).

### Interpretation

This diagram presents a modular cognitive architecture for an autonomous AI agent. It conceptualizes the agent as a system that perceives its environment, processes that information using reasoning and decision-making faculties supported by knowledge, memory, and learning capabilities, and then acts upon the environment.

The architecture emphasizes that intelligent behavior is not a simple input-output mapping but involves an internal state (Memory, Knowledge) that is continuously updated (Learning & Adaptation) to inform future decisions. The central placement of "Reasoning & Decision Making" suggests it is the integrative component that synthesizes perceptual data with internal knowledge and memory to choose actions. The bidirectional links indicate constant feedback; for example, actions can change the environment, which is then re-perceived, and learning can update knowledge and memory based on outcomes.
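The perceive, reason, act, and learn cycle described here can be illustrated with a toy agent. This is a hedged sketch only: the diagram specifies no implementation, so the `Agent` class, its method names, the string observations, and the "store the action when the reward is positive" learning rule are all assumptions chosen for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    # Knowledge Representation: maps an observation to a preferred action (assumed form).
    knowledge: dict = field(default_factory=dict)
    # Memory: history of (observation, action, reward) tuples (assumed form).
    memory: list = field(default_factory=list)
    default_action: str = "wait"

    def perceive(self, raw_input: str) -> str:
        """Perception: normalise raw input into an observation."""
        return raw_input.strip().lower()

    def reason(self, observation: str) -> str:
        """Reasoning & Decision Making: choose an action from internal knowledge."""
        return self.knowledge.get(observation, self.default_action)

    def act(self, action: str) -> str:
        """Action: turn the chosen action into an output for the environment."""
        return f"do:{action}"

    def learn(self, observation: str, action: str, reward: float) -> None:
        """Learning & Adaptation: record the outcome and update knowledge."""
        self.memory.append((observation, action, reward))
        if reward > 0:
            self.knowledge[observation] = action

    def step(self, raw_input: str):
        """One pass of Input -> Perception -> Reasoning -> Action -> Output."""
        observation = self.perceive(raw_input)
        action = self.reason(observation)
        return observation, action, self.act(action)


if __name__ == "__main__":
    agent = Agent()
    obs, action, output = agent.step("  Obstacle ")
    print(output)                      # no knowledge yet, so the default action is used
    agent.learn(obs, "turn", reward=1.0)
    print(agent.step("obstacle")[2])   # the learned action is now chosen
```

The point of the sketch is that the same input can produce different outputs over time: the second call to `step` returns a different action because `learn` changed the internal state between the two calls.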

The "Environment" boundary is crucial, defining the agent's operational context. The diagram abstracts away specific implementations (e.g., neural networks, symbolic logic) to focus on the high-level functional decomposition required for an agent to exhibit goal-directed, adaptive behavior.