## Grouped Bar Chart: Normalized Runtime Comparison Across Neuro-Symbolic AI Approaches

### Overview

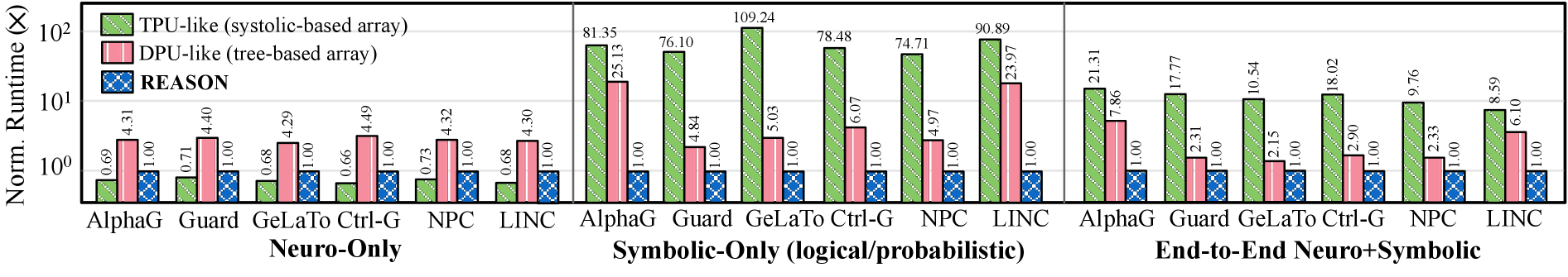

The image displays a grouped bar chart comparing the normalized runtime (in multiples, X) of three different computational architectures—TPU-like (systolic-based array), DPU-like (tree-based array), and REASON—across six different AI systems (AlphaG, Guard, GeLaTo, Ctrl-G, NPC, LINC). The comparison is segmented into three distinct task categories: Neuro-Only, Symbolic-Only (logical/probabilistic), and End-to-End Neuro+Symbolic. The y-axis uses a logarithmic scale.

### Components/Axes

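* **Chart Title:** Not fully visible in the provided crop.
* **Y-Axis:**
  * **Label:** `Norm. Runtime (X)`
  * **Scale:** Logarithmic, with labeled ticks from `10^0` (1) to `10^2` (100). A few data labels fall outside this labeled range: the Neuro-Only TPU-like values (0.66-0.73) lie just below `10^0`, and the Symbolic-Only GeLaTo TPU-like value (109.24) lies just above `10^2`.
  * **Major Ticks:** `10^0`, `10^1`, `10^2`.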

* **X-Axis:** Grouped by task category, with six AI systems listed under each.
  * **Primary Groups (Bottom Labels):**
    1. `Neuro-Only`
    2. `Symbolic-Only (logical/probabilistic)`
    3. `End-to-End Neuro+Symbolic`
  * **Sub-categories (AI Systems):** `AlphaG`, `Guard`, `GeLaTo`, `Ctrl-G`, `NPC`, `LINC` (repeated under each primary group).
* **Legend (Top-Left):**
  * **Green (diagonal hatch):** `TPU-like (systolic-based array)`
  * **Pink (vertical hatch):** `DPU-like (tree-based array)`
  * **Blue (cross-hatch):** `REASON`
* **Data Labels:** Each bar has its exact numerical value printed directly above it.
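On a logarithmic axis a bar's height tracks the base-10 logarithm of its value, so each labeled tick spans one decade. As a quick check of where bars land relative to the labeled ticks, the sketch below takes a few data labels from the chart and computes their log10 positions; `decade_position` is an illustrative helper name, not part of any plotting library.

```python
import math


def decade_position(value: float) -> float:
    """Return log10(value): 0.0 sits on the 10^0 tick, 2.0 on the 10^2 tick."""
    if value <= 0:
        raise ValueError("a log scale cannot show non-positive values")
    return math.log10(value)


# A few data labels transcribed from the chart (normalized runtime, X).
samples = {
    "Neuro-Only / Ctrl-G / TPU-like": 0.66,
    "Neuro-Only / AlphaG / DPU-like": 4.31,
    "Symbolic-Only / GeLaTo / TPU-like": 109.24,
    "Any category / REASON": 1.00,
}

for label, value in samples.items():
    pos = decade_position(value)
    if pos < 0:
        where = "below the 10^0 tick"
    elif pos > 2:
        where = "above the 10^2 tick"
    else:
        where = "between the 10^0 and 10^2 ticks"
    print(f"{label}: {value:7.2f}x -> log10 = {pos:+.2f} ({where})")
```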

### Detailed Analysis

**1. Neuro-Only Tasks:**

* **Trend:** REASON bars sit at the 1.00 baseline. TPU-like bars fall slightly below it (0.66-0.73), meaning the systolic array runs these neural workloads faster than REASON. DPU-like bars are consistently higher, at about 4.3-4.5x.

* **Data Points (TPU-like / DPU-like / REASON):**
  * AlphaG: 0.69 / 4.31 / 1.00
  * Guard: 0.71 / 4.40 / 1.00
  * GeLaTo: 0.68 / 4.29 / 1.00
  * Ctrl-G: 0.66 / 4.49 / 1.00
  * NPC: 0.73 / 4.32 / 1.00
  * LINC: 0.68 / 4.30 / 1.00
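Because every value is normalized to REASON, a bar below 1.00 means that architecture finishes faster than REASON, and the reciprocal gives REASON's relative slowdown. A minimal sketch over the Neuro-Only data labels; the `NEURO_ONLY` dictionary and the `describe` helper are illustrative names.

```python
# Neuro-Only data labels from the chart: (TPU-like, DPU-like), normalized to REASON = 1.00.
NEURO_ONLY = {
    "AlphaG": (0.69, 4.31),
    "Guard": (0.71, 4.40),
    "GeLaTo": (0.68, 4.29),
    "Ctrl-G": (0.66, 4.49),
    "NPC": (0.73, 4.32),
    "LINC": (0.68, 4.30),
}


def describe(norm: float) -> str:
    """Turn a REASON-normalized runtime into a speed comparison against REASON."""
    if norm < 1.0:
        return f"{1.0 / norm:.2f}x faster than REASON"
    if norm > 1.0:
        return f"{norm:.2f}x slower than REASON"
    return "same as REASON"


for system, (tpu, dpu) in NEURO_ONLY.items():
    print(f"{system:7s} TPU-like: {describe(tpu):28s} DPU-like: {describe(dpu)}")

tpu_values = [tpu for tpu, _ in NEURO_ONLY.values()]
dpu_values = [dpu for _, dpu in NEURO_ONLY.values()]
print(f"TPU-like range: {min(tpu_values):.2f}-{max(tpu_values):.2f}")
print(f"DPU-like range: {min(dpu_values):.2f}-{max(dpu_values):.2f}")
```

For example, Ctrl-G's TPU-like value of 0.66 corresponds to the systolic array running about 1.52x faster than REASON on that workload.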

**2. Symbolic-Only (logical/probabilistic) Tasks:**

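* **Trend:** TPU-like runtimes rise sharply to 74.71-109.24x, near or (for GeLaTo) above the `10^2` (100) mark. DPU-like runtimes stay well below TPU-like, by factors ranging from about 3x (AlphaG) to about 22x (GeLaTo). For Guard, GeLaTo, Ctrl-G, and NPC, DPU-like runtimes rise only modestly over their Neuro-Only values (to 4.84-6.07x), while AlphaG and LINC jump to about 24-25x. REASON remains constant at 1.00.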

* **Data Points (TPU-like / DPU-like / REASON):**
  * AlphaG: 81.35 / 25.13 / 1.00
  * Guard: 76.10 / 4.84 / 1.00
  * GeLaTo: 109.24 / 5.03 / 1.00
  * Ctrl-G: 78.48 / 6.07 / 1.00
  * NPC: 74.71 / 4.97 / 1.00
  * LINC: 90.89 / 23.97 / 1.00
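The Symbolic-Only trend compares TPU-like against DPU-like runtimes; dividing one by the other for each system shows how wide that gap actually is. A small sketch using the data labels above, with `SYMBOLIC_ONLY` and `ratios` as illustrative names:

```python
# Symbolic-Only data labels from the chart: (TPU-like, DPU-like), normalized to REASON = 1.00.
SYMBOLIC_ONLY = {
    "AlphaG": (81.35, 25.13),
    "Guard": (76.10, 4.84),
    "GeLaTo": (109.24, 5.03),
    "Ctrl-G": (78.48, 6.07),
    "NPC": (74.71, 4.97),
    "LINC": (90.89, 23.97),
}

# How many times slower the TPU-like array is than the DPU-like array, per system.
ratios = {system: tpu / dpu for system, (tpu, dpu) in SYMBOLIC_ONLY.items()}

for system, ratio in sorted(ratios.items(), key=lambda item: item[1]):
    print(f"{system:7s} TPU-like / DPU-like = {ratio:5.2f}x")

print(f"gap spans {min(ratios.values()):.1f}x to {max(ratios.values()):.1f}x")
```

Under this check, only Guard, GeLaTo, Ctrl-G, and NPC are more than an order of magnitude apart; for AlphaG and LINC the two architectures differ by less than 4x.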

**3. End-to-End Neuro+Symbolic Tasks:**

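* **Trend:** Runtimes for both TPU-like and DPU-like architectures fall below their Symbolic-Only values for every system. TPU-like runtimes (8.59-21.31x) remain well above Neuro-Only. DPU-like runtimes (2.15-7.86x) are mixed: AlphaG and LINC stay above their Neuro-Only values, while Guard, GeLaTo, Ctrl-G, and NPC drop below them. REASON continues to hold at 1.00.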

* **Data Points (TPU-like / DPU-like / REASON):**
  * AlphaG: 21.31 / 7.86 / 1.00
  * Guard: 17.77 / 2.31 / 1.00
  * GeLaTo: 10.54 / 2.15 / 1.00
  * Ctrl-G: 18.02 / 2.90 / 1.00
  * NPC: 9.76 / 2.33 / 1.00
  * LINC: 8.59 / 6.10 / 1.00
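The End-to-End trend places each value relative to the same system's Neuro-Only and Symbolic-Only values. The sketch below makes that comparison explicit for both architectures, using the data labels transcribed in this section; the dictionary names and the `classify` helper are illustrative.

```python
# Data labels from the chart: (TPU-like, DPU-like), normalized to REASON = 1.00.
NEURO_ONLY = {"AlphaG": (0.69, 4.31), "Guard": (0.71, 4.40), "GeLaTo": (0.68, 4.29),
              "Ctrl-G": (0.66, 4.49), "NPC": (0.73, 4.32), "LINC": (0.68, 4.30)}
SYMBOLIC_ONLY = {"AlphaG": (81.35, 25.13), "Guard": (76.10, 4.84), "GeLaTo": (109.24, 5.03),
                 "Ctrl-G": (78.48, 6.07), "NPC": (74.71, 4.97), "LINC": (90.89, 23.97)}
END_TO_END = {"AlphaG": (21.31, 7.86), "Guard": (17.77, 2.31), "GeLaTo": (10.54, 2.15),
              "Ctrl-G": (18.02, 2.90), "NPC": (9.76, 2.33), "LINC": (8.59, 6.10)}
ARCHS = ("TPU-like", "DPU-like")  # index 0 and index 1 of each tuple


def classify(system: str, arch_index: int) -> str:
    """Place a system's End-to-End runtime relative to its other two categories."""
    neuro = NEURO_ONLY[system][arch_index]
    symbolic = SYMBOLIC_ONLY[system][arch_index]
    e2e = END_TO_END[system][arch_index]
    if e2e <= neuro:
        return "at or below Neuro-Only"
    if e2e >= symbolic:
        return "at or above Symbolic-Only"
    return "between Neuro-Only and Symbolic-Only"


for arch_index, arch in enumerate(ARCHS):
    for system in END_TO_END:
        e2e = END_TO_END[system][arch_index]
        print(f"{arch} {system:7s} End-to-End {e2e:6.2f}x: {classify(system, arch_index)}")
```

Under this check, every TPU-like End-to-End value lands between its Neuro-Only and Symbolic-Only values, while four of the six DPU-like values land at or below Neuro-Only.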

### Key Observations

1. **REASON as the Baseline:** The REASON architecture (blue bars) sits at a normalized runtime of exactly 1.00 for all six AI systems in all three task categories, which indicates that the other runtimes are normalized to it. It therefore serves as the baseline for comparison.

2. **Symbolic Task Performance Gap:** The most striking feature is the massive performance gap in the **Symbolic-Only** category. TPU-like architectures are 75-109x slower than the REASON baseline, while DPU-like architectures are 5-25x slower.

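3. **Task Category Impact:** For TPU-like architectures, the runtime order from fastest to slowest is consistently Neuro-Only < End-to-End Neuro+Symbolic < Symbolic-Only. DPU-like architectures are always slowest on Symbolic-Only, but for Guard, GeLaTo, Ctrl-G, and NPC their End-to-End runtime (2.15-2.90) is lower than their Neuro-Only runtime (about 4.3-4.5); only AlphaG and LINC follow the TPU-like ordering.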

4. **System-Specific Variance:** Within the Symbolic-Only category, `GeLaTo` shows the highest TPU-like runtime (109.24), while `AlphaG` shows the highest DPU-like runtime (25.13), closely followed by `LINC` (23.97). In the Neuro-Only category, runtimes are nearly uniform across systems for a given architecture (TPU-like 0.66-0.73, DPU-like 4.29-4.49).
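The ranges and maxima cited in these observations can be recomputed directly from the data labels. A minimal sketch, assuming the values are transcribed as (TPU-like, DPU-like) pairs per system; `DATA` and `summarize` are illustrative names.

```python
# Data labels from the chart: (TPU-like, DPU-like), normalized to REASON = 1.00.
DATA = {
    "Neuro-Only": {"AlphaG": (0.69, 4.31), "Guard": (0.71, 4.40), "GeLaTo": (0.68, 4.29),
                   "Ctrl-G": (0.66, 4.49), "NPC": (0.73, 4.32), "LINC": (0.68, 4.30)},
    "Symbolic-Only": {"AlphaG": (81.35, 25.13), "Guard": (76.10, 4.84), "GeLaTo": (109.24, 5.03),
                      "Ctrl-G": (78.48, 6.07), "NPC": (74.71, 4.97), "LINC": (90.89, 23.97)},
    "End-to-End": {"AlphaG": (21.31, 7.86), "Guard": (17.77, 2.31), "GeLaTo": (10.54, 2.15),
                   "Ctrl-G": (18.02, 2.90), "NPC": (9.76, 2.33), "LINC": (8.59, 6.10)},
}
ARCHS = ("TPU-like", "DPU-like")


def summarize(category: str, arch_index: int) -> tuple[float, float, str]:
    """Return (min, max, slowest system) for one architecture within one category."""
    values = {system: pair[arch_index] for system, pair in DATA[category].items()}
    slowest = max(values, key=values.get)
    return min(values.values()), values[slowest], slowest


for category in DATA:
    for arch_index, arch in enumerate(ARCHS):
        low, high, slowest = summarize(category, arch_index)
        print(f"{category:13s} {arch}: {low:6.2f}x to {high:6.2f}x (slowest: {slowest})")
```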

### Interpretation

This chart shows REASON's runtime advantage concentrated in symbolic reasoning rather than spread evenly across workloads. On purely logical or probabilistic workloads, the systolic (TPU-like) and tree-based (DPU-like) arrays incur a severe performance penalty: TPU-like runtimes reach 74.71-109.24x (about two orders of magnitude) and DPU-like runtimes 4.84-25.13x (up to about one order of magnitude). On Neuro-Only workloads, by contrast, the TPU-like array is faster than REASON (0.66-0.73x).

The "End-to-End" results indicate that combining neuro and symbolic components mitigates this penalty but does not eliminate it: every TPU-like and DPU-like value stays above 1.00. Because REASON's runtime is exactly 1.00 in every group, it is the reference against which the other architectures are normalized, so its own bars carry no information; REASON's advantage is read from how far the other bars rise above 1.00. Taken together, the chart argues that for AI systems requiring significant symbolic reasoning, REASON offers substantial runtime benefits over the compared TPU-like and DPU-like alternatives, while a conventional systolic array remains competitive on purely neural workloads.
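Normalizing to a baseline means dividing each architecture's measured runtime by the baseline's runtime on the same workload, which forces the baseline's own bar to exactly 1.00. The sketch below illustrates this under that assumption with hypothetical raw runtimes (the millisecond figures are invented for illustration and do not come from the chart); `normalize_to_baseline` is an illustrative helper name.

```python
def normalize_to_baseline(raw: dict[str, float], baseline: str = "REASON") -> dict[str, float]:
    """Divide every architecture's runtime by the baseline's runtime on the same workload."""
    reference = raw[baseline]
    if reference <= 0:
        raise ValueError("baseline runtime must be positive")
    return {arch: runtime / reference for arch, runtime in raw.items()}


# Hypothetical raw runtimes in milliseconds for a single workload (illustrative only).
raw_ms = {"TPU-like": 812.0, "DPU-like": 251.0, "REASON": 10.0}
normalized = normalize_to_baseline(raw_ms)
print(normalized)

# Scaling every raw runtime by the same factor leaves the normalized values unchanged,
# which is why REASON's 1.00 bars say nothing about its absolute speed.
scaled_ms = {arch: 2.0 * runtime for arch, runtime in raw_ms.items()}
print(normalize_to_baseline(scaled_ms) == normalized)  # True
```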