
## Diagram: COPRA System Flowchart

### Overview

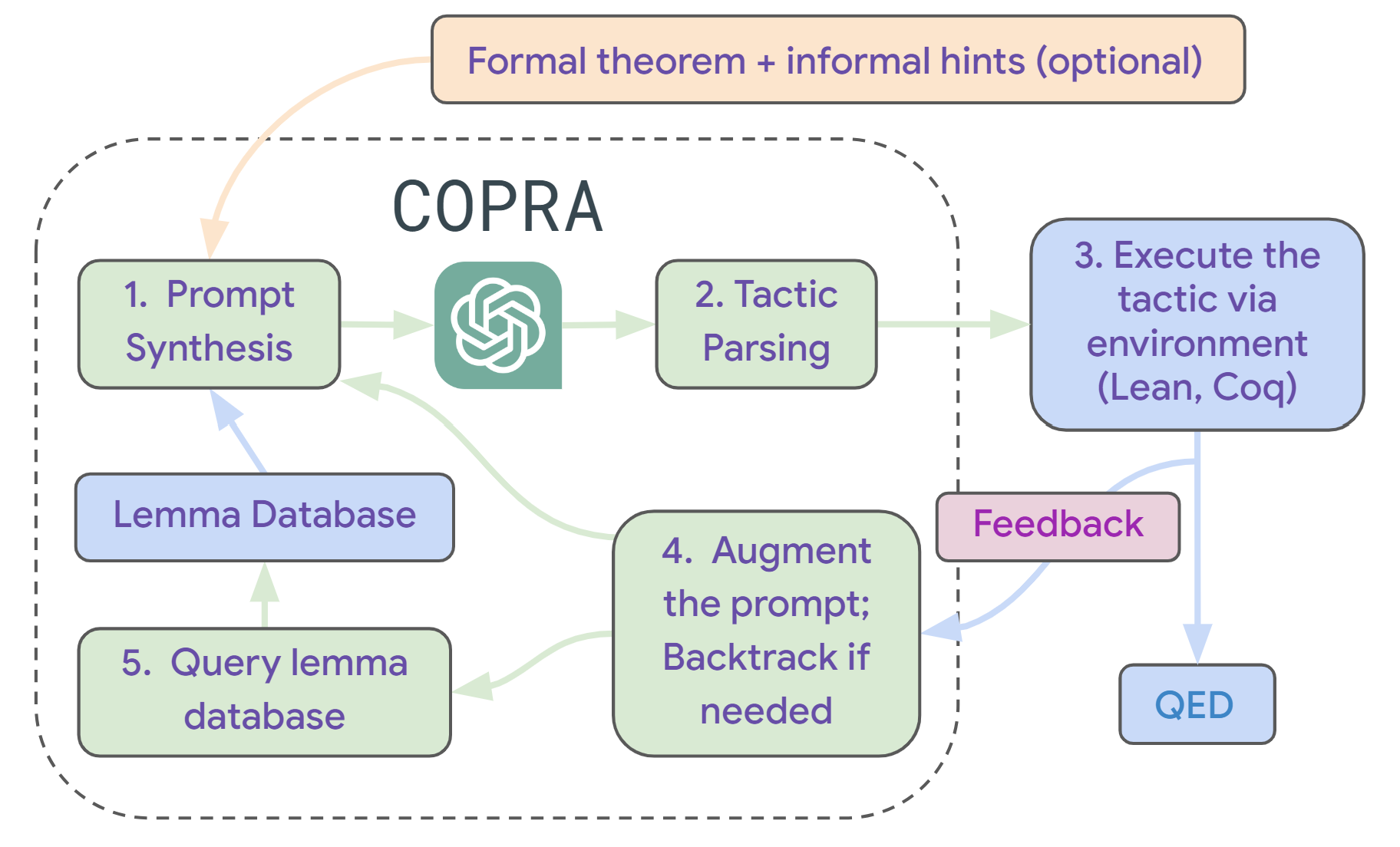

The image is a technical flowchart illustrating the architecture and workflow of a system named "COPRA." It depicts an iterative, feedback-driven process for automated theorem proving or tactic generation, likely leveraging a large language model (LLM). The system takes a formal theorem as input and aims to produce a proof (QED) by synthesizing, parsing, executing, and refining proof tactics.

### Components/Axes

The diagram is composed of labeled boxes (components) connected by directional arrows (flow). The components are color-coded and spatially arranged to show process stages and feedback loops.

**Primary Input (Top Center):**

* A light orange, rounded rectangle labeled: **"Formal theorem + informal hints (optional)"**.

* An orange arrow points from this box down into the main system boundary.

**Main System Boundary (Center):**

* A large, dashed-line rounded rectangle labeled **"COPRA"** at the top center. This encloses the core processing components.

**Components Inside the COPRA Boundary:**

1. **"1. Prompt Synthesis"** (Light green box, top-left). Receives input from the main theorem box and feedback from other components.

2. **LLM Icon** (Teal square with a white, stylized brain/flower symbol, center). Positioned between "Prompt Synthesis" and "Tactic Parsing." Arrows flow from "Prompt Synthesis" to the icon, and from the icon to "Tactic Parsing."

3. **"2. Tactic Parsing"** (Light green box, top-right). Receives input from the LLM icon.

4. **"Lemma Database"** (Light blue box, middle-left). Receives queries from "5. Query lemma database" and supplies retrieved lemmas to "1. Prompt Synthesis."

5. **"4. Augment the prompt; Backtrack if needed"** (Light green box, bottom-center). A central hub receiving feedback and connecting to other components.

6. **"5. Query lemma database"** (Light green box, bottom-left). Sends a query to the "Lemma Database"; the retrieved results flow on to "1. Prompt Synthesis."

**Components Outside the COPRA Boundary:**

1. **"3. Execute the tactic via environment (Lean, Coq)"** (Light blue box, right side). Receives the parsed tactic from "2. Tactic Parsing."

2. **"Feedback"** (Pink box, below the execution box). Receives output from the execution step.

3. **"QED"** (Light blue box, bottom-right). The final output, indicating a successful proof. An arrow points from the execution box directly to QED.

**Flow & Connections (Arrows):**

* **Main Input Flow:** Orange arrow from "Formal theorem..." to "1. Prompt Synthesis."

* **Forward Processing Chain:** Green arrows connect: "1. Prompt Synthesis" -> [LLM Icon] -> "2. Tactic Parsing" -> "3. Execute the tactic..."

* **Feedback Loop:** A light blue arrow flows from "Feedback" to "4. Augment the prompt...".

* **Internal Refinement Loop:** From "4. Augment the prompt...", a green arrow points back to "1. Prompt Synthesis." Another green arrow points to "5. Query lemma database."

* **Database Interaction:** A green arrow goes from "5. Query lemma database" to "Lemma Database." A light blue arrow returns from "Lemma Database" to "1. Prompt Synthesis."

* **Success Path:** A light blue arrow goes from "3. Execute the tactic..." directly to "QED."
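The arrows listed above can be written down as a small directed graph, which makes the two loops and the single exit easy to check mechanically. This is a minimal sketch: the node names are shortened, hypothetical identifiers rather than labels from the diagram, and the edge from the execution box to "Feedback" is taken from the component description rather than from the arrow list.

```python
from collections import deque

# Edges transcribed from the arrow descriptions; node names are
# hypothetical short identifiers, not labels that appear in the diagram.
FLOW = {
    "theorem_input": ["prompt_synthesis"],
    "prompt_synthesis": ["llm"],
    "llm": ["tactic_parsing"],
    "tactic_parsing": ["execute_tactic"],
    "execute_tactic": ["feedback", "qed"],
    "feedback": ["augment_or_backtrack"],
    "augment_or_backtrack": ["prompt_synthesis", "query_lemma_db"],
    "query_lemma_db": ["lemma_database"],
    "lemma_database": ["prompt_synthesis"],
    "qed": [],
}


def reachable(graph, start):
    """Return every node reachable from `start` by following one or more arrows."""
    seen = set()
    queue = deque(graph[start])
    while queue:
        node = queue.popleft()
        if node not in seen:
            seen.add(node)
            queue.extend(graph[node])
    return seen


def on_cycle(graph, node):
    """A node lies on a loop if it can reach itself."""
    return node in reachable(graph, node)


print(on_cycle(FLOW, "prompt_synthesis"))  # part of the feedback loop
print(on_cycle(FLOW, "qed"))               # terminal node, no outgoing arrows
```

Under this encoding, every component inside the COPRA boundary sits on a loop, while the theorem input and "QED" do not.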

### Detailed Analysis

The diagram outlines a five-step iterative cycle:

1. **Prompt Synthesis:** Constructs a prompt for an LLM, incorporating the target theorem, optional hints, and information from a lemma database.

2. **Tactic Parsing:** The LLM's output is parsed into a structured proof tactic.

3. **Execution:** The tactic is executed in an external proof assistant environment (explicitly naming **Lean** and **Coq** as examples).

4. **Feedback & Augmentation:** The result of the execution (success, error, state) is captured as feedback. This feedback is used to augment the original prompt or trigger backtracking.

5. **Lemma Query:** The system can query a database of lemmas to retrieve relevant theorems or definitions to improve subsequent prompt synthesis.

The process is not linear. It features a primary feedback loop (Steps 3 -> 4 -> 1) for iterative refinement based on execution results. A secondary loop involves the lemma database (Steps 4 -> 5 -> Database -> 1) for knowledge retrieval. The process terminates upon reaching "QED."
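The cycle described above can be sketched as a short loop. This is an illustration under stated assumptions, not COPRA's actual implementation: `ToyEnv` stands in for Lean or Coq, `stub_llm` stands in for the language model, and every name here is hypothetical. Lemma retrieval (step 5) is left out to keep the sketch small.

```python
from dataclasses import dataclass


@dataclass
class Result:
    ok: bool           # did the tactic apply without error?
    state: int         # proof state after the attempt
    message: str = ""  # error text fed back into the next prompt


class ToyEnv:
    """Hypothetical stand-in for a proof assistant: state i is closed
    only by the i-th tactic of a fixed script."""

    def __init__(self, script):
        self.script = script

    def run(self, state, tactic):
        if state < len(self.script) and tactic == self.script[state]:
            return Result(True, state + 1)
        return Result(False, state, f"tactic {tactic!r} failed")

    def is_done(self, state):
        return state == len(self.script)


def synthesize_prompt(theorem, state, failed, last_error):
    # Step 1: bundle the goal, current state, and accumulated feedback.
    return {"theorem": theorem, "state": state,
            "failed": sorted(failed), "error": last_error}


def stub_llm(prompt):
    # Deterministic stand-in for the LLM: propose the first candidate
    # not already recorded as failed; an empty reply means "give up here".
    for tactic in ("simp", "intro h", "exact h"):
        if tactic not in prompt["failed"]:
            return f"  {tactic}\n"
    return ""


def parse_tactic(raw):
    # Step 2: turn the raw reply into a tactic, or None if there is none.
    return raw.strip() or None


def prove(theorem, env, llm, max_queries=20):
    states, failed, proof, last_error = [0], {0: set()}, [], ""
    for _ in range(max_queries):
        state = states[-1]
        if env.is_done(state):
            return proof
        prompt = synthesize_prompt(theorem, state, failed[state], last_error)
        tactic = parse_tactic(llm(prompt))
        if tactic is None:
            if not proof:
                return None  # nothing left to undo
            # Step 4 (backtrack): undo the last step and mark it as bad.
            states.pop()
            failed[states[-1]].add(proof.pop())
            last_error = "backtracked"
            continue
        result = env.run(state, tactic)  # Step 3: execute
        if result.ok:
            states.append(result.state)
            failed.setdefault(result.state, set())
            proof.append(tactic)
            last_error = ""
        else:
            # Step 4 (augment): remember the failure for the next prompt.
            failed[state].add(tactic)
            last_error = result.message
    return proof if env.is_done(states[-1]) else None


print(prove("p -> p", ToyEnv(["intro h", "exact h"]), stub_llm))
```

In this toy setting the loop tries `simp` first, records the failure, and then finds `intro h` and `exact h`. When no candidate works, `prove` returns `None` rather than reporting a proof, mirroring the fact that "QED" is reached only through the execution step.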

### Key Observations

* **Hybrid System:** COPRA combines a neural component (the LLM, represented by the icon) with symbolic components (the tactic parser, execution environment, and lemma database).

* **Iterative and Corrective:** The design emphasizes recovering from failed tactics. The "Backtrack if needed" instruction and the prominent feedback loop are central to the architecture.

* **Environment Agnostic:** While specifying Lean and Coq, the "via environment" phrasing suggests the core COPRA system could potentially interface with different proof assistants.

* **Knowledge-Augmented:** The inclusion of a "Lemma Database" indicates the system doesn't rely solely on the LLM's parametric knowledge but can perform explicit retrieval to ground its reasoning.
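The diagram does not say how the lemma database is queried, so the following is only a toy illustration of explicit retrieval: lemmas are ranked by how many whitespace-separated tokens they share with the current goal. The lemma names, statements, and the `retrieve` function are all hypothetical, and a real system would likely use a stronger ranking method.

```python
# Toy lemma store; names and statements are illustrative only.
LEMMAS = {
    "add_comm": "a + b = b + a",
    "mul_comm": "a * b = b * a",
    "add_zero": "a + 0 = a",
}


def retrieve(goal, lemmas, top_k=2):
    """Rank lemmas by token overlap with the goal (ties broken by name)."""
    goal_tokens = set(goal.split())
    scored = []
    for name, statement in lemmas.items():
        overlap = len(goal_tokens & set(statement.split()))
        if overlap:
            # Negate the score so that a plain sort puts the best match first.
            scored.append((-overlap, name))
    return [name for _, name in sorted(scored)[:top_k]]


print(retrieve("x * y = y * x", LEMMAS, top_k=1))
```

The retrieved names would then be passed to prompt synthesis so that the LLM sees the relevant statements alongside the goal.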

### Interpretation

This flowchart represents a sophisticated approach to **neuro-symbolic AI for formal verification**. The core problem it solves is bridging the gap between the informal, generative capabilities of large language models and the strict, formal requirements of proof assistants like Lean or Coq.

* **The Role of the LLM:** The LLM acts as a **tactic generator**, proposing potential proof steps based on a synthesized prompt. This leverages its pattern recognition and code/text generation abilities.

* **The Role of Feedback:** The execution environment acts as a **ground truth verifier**. Its feedback (e.g., error messages, new proof state) is crucial for correcting the LLM's hallucinations or logical errors, creating a self-correcting cycle.

* **The Role of the Lemma Database:** This component addresses two limits of the LLM: its parametric knowledge, which may be incomplete or outdated, and its finite context window. By retrieving only the relevant formal statements, it supplies the system with precise, current mathematical knowledge, making the generated tactics more likely to be valid and applicable.

* **Overall Significance:** The COPRA architecture suggests a move away from pure end-to-end neural proof generation towards **interactive, iterative refinement systems**. It positions the LLM as a powerful but fallible assistant within a larger, rigorous computational framework. The goal is to combine the flexibility of AI with the reliability of formal methods, potentially making automated theorem proving more accessible and powerful. The "QED" output is not a direct product of the LLM, but the result of a successfully verified process that the LLM helped to steer.