## Diagram: LLM Reasoning Extraction Template

### Overview

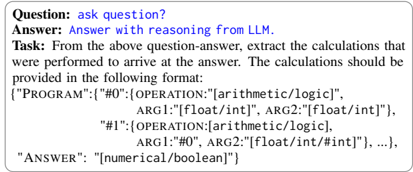

The image displays a structured template or schema for extracting and documenting the computational reasoning process of a Large Language Model (LLM). It presents a sample question-answer pair and specifies a required output format for detailing the calculations that led to the answer.
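As a rough illustration, the template could be filled in programmatically before being sent to a model. The sketch below is an assumption about usage, not something shown in the image: the function name `build_extraction_prompt`, the constants, and the one-section-per-line layout are all hypothetical. The task and schema strings are copied from the template text.

```python
# Task and schema text copied from the template; names and layout are assumptions.
TASK_TEXT = (
    "From the above question-answer, extract the calculations that were "
    "performed to arrive at the answer. The calculations should be provided "
    "in the following format:"
)

SCHEMA_TEXT = (
    '{"PROGRAM":[{"OPERATION":["arithmetic/logic"], ARG1:[float/int], ARG2:[float/int]}, '
    '{"OPERATION":["arithmetic/logic"], ARG1:"#0", ARG2:[float/int/#int]}, ...], '
    '"ANSWER":[numerical/boolean]}'
)


def build_extraction_prompt(question: str, answer: str) -> str:
    """Replace the two placeholders with a concrete question-answer pair."""
    return (
        f"Question: {question}\n"
        f"Answer: {answer}\n"
        f"Task: {TASK_TEXT}\n"
        f"{SCHEMA_TEXT}"
    )
```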

### Components/Axes

The diagram is organized into three distinct textual regions, arranged vertically:

1. **Header Region (Top):**

   * **Question Label:** `Question:`

   * **Question Content:** `ask question?`

   * **Answer Label:** `Answer:`

   * **Answer Content:** `Answer with reasoning from LLM.`

2. **Instruction Region (Middle):**

   * **Task Label:** `Task:`

   * **Instruction Text:** `From the above question-answer, extract the calculations that were performed to arrive at the answer. The calculations should be provided in the following format:`

3. **Schema/Output Region (Bottom):**

   * A JSON-like data structure defining the required output format. It contains the following key-value pairs:

     * **Key:** `"PROGRAM"`

       * **Value:** An array of operation objects.

         * **Operation Object #0:**

           * `"OPERATION"`: `["arithmetic/logic"]`

           * `"ARG1"`: `[float/int]`

           * `"ARG2"`: `[float/int]`

         * **Operation Object #1:**

           * `"OPERATION"`: `["arithmetic/logic"]`

           * `"ARG1"`: `"#0"` (A reference to the result of Operation #0)

           * `"ARG2"`: `[float/int/#int]` (Can be a number or a reference to another operation)

     * **Key:** `"ANSWER"`

       * **Value:** `[numerical/boolean]`
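The structure listed above can be sketched as Python types, followed by one filled-in instance. This is an illustrative reading, not part of the image: it assumes the square brackets in the template are placeholder notation (so `"OPERATION"` holds a single string), and the operation names `"add"` and `"multiply"` are hypothetical, since the template only says `arithmetic/logic`.

```python
from typing import List, TypedDict, Union

# A literal number, or a string reference such as "#0" to an earlier step.
Arg = Union[int, float, str]


class Operation(TypedDict):
    OPERATION: str  # operation name; the template only says "arithmetic/logic"
    ARG1: Arg
    ARG2: Arg


class Extraction(TypedDict):
    PROGRAM: List[Operation]
    ANSWER: Union[int, float, bool]


# Hypothetical instance for the reasoning "(12 + 8) * 3 = 60".
example: Extraction = {
    "PROGRAM": [
        {"OPERATION": "add", "ARG1": 12, "ARG2": 8},
        {"OPERATION": "multiply", "ARG1": "#0", "ARG2": 3},
    ],
    "ANSWER": 60,
}
```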

### Detailed Analysis

* **Text Transcription:** All text is in English. The complete transcription is as follows:

> Question: ask question?

> Answer: Answer with reasoning from LLM.

> Task: From the above question-answer, extract the calculations that were performed to arrive at the answer. The calculations should be provided in the following format:

> {"PROGRAM":[{"OPERATION":["arithmetic/logic"], ARG1:[float/int], ARG2:[float/int]}, {"OPERATION":["arithmetic/logic"], ARG1:"#0", ARG2:[float/int/#int]}, ...], "ANSWER":[numerical/boolean]}

* **Schema Structure:** The JSON schema defines a sequential program (`"PROGRAM"`) composed of discrete operations. Each operation has a type (`"arithmetic/logic"`) and two arguments (`ARG1`, `ARG2`). Arguments can be literal values (`[float/int]`) or references to the results of previous operations (e.g., `"#0"`). The final `"ANSWER"` key holds the end result.
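The two kinds of argument described above (a literal, or a `"#k"` reference to an earlier result) might be told apart as in the following minimal sketch. The helper name `resolve_arg` and the error messages are assumptions; the template itself does not say how references should be parsed.

```python
from typing import List, Union

Number = Union[int, float]


def resolve_arg(arg: Union[Number, str], results: List[Number]) -> Number:
    """Return a literal unchanged, or look up the result a "#k" reference points to."""
    if isinstance(arg, str):
        # References are assumed to look like "#0", "#1", ...
        if not (arg.startswith("#") and arg[1:].isdecimal()):
            raise ValueError(f"not a literal or a '#k' reference: {arg!r}")
        index = int(arg[1:])
        if index >= len(results):
            raise ValueError(f"{arg} refers to a step that has not run yet")
        return results[index]
    return arg
```

Here `results` is the list of values produced by the steps executed so far, so `resolve_arg("#0", [20])` looks up the first step's result.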

### Key Observations

* The template is generic, using placeholders like `[float/int]` and `["arithmetic/logic"]` instead of specific values or operation names.

* The structure implies a step-by-step, traceable reasoning chain where intermediate results can be referenced.

* The example uses a two-step program (`#0` and `#1`), but the ellipsis (`...`) indicates the program can be of variable length.
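Because a program can have any number of steps, a consumer of this format may want to confirm that every reference points to an earlier step before running it. The sketch below shows one way to do that. The function name `find_bad_references` and the report format are hypothetical, and the rule that references must point strictly backwards is an assumption drawn from the `"#0"` example rather than something the template states.

```python
from typing import Any, Dict, List


def find_bad_references(program: List[Dict[str, Any]]) -> List[str]:
    """List every argument that points at the current step or a later one."""
    problems = []
    for step, op in enumerate(program):
        for key in ("ARG1", "ARG2"):
            arg = op.get(key)
            if isinstance(arg, str) and arg.startswith("#"):
                target = arg[1:]
                # A valid reference names a step index smaller than the current one.
                if not target.isdecimal() or int(target) >= step:
                    problems.append(f"step {step} {key}: {arg}")
    return problems
```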

### Interpretation

This diagram serves as a **specification for creating interpretable audit trails of LLM reasoning**. Rather than stopping at a final answer, it asks for a transparent, structured log of the computational steps (arithmetic or logical operations) that the LLM's answer reports having performed to derive that answer.

The schema enforces a form of **computational provenance**. By requiring arguments to be either raw inputs or references to prior steps (`"#0"`), it creates a directed acyclic graph (DAG) of dependencies. This allows for:

1. **Verification:** One can re-run the sequence of operations to check if the stated `"ANSWER"` is consistent with the provided `"PROGRAM"`.

2. **Debugging:** If the answer is incorrect, the specific faulty operation in the chain can be identified.

3. **Explanation:** The `"PROGRAM"` acts as a human-readable (and machine-verifiable) explanation of the reasoning process, restating the reasoning written out in the LLM's answer as a sequence of discrete, checkable steps. It is extracted from the answer text, not from the model's internal activations.
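The verification and debugging uses listed above can be sketched as a small re-execution routine. This is a minimal sketch under stated assumptions: the operation names in `OPS`, the function name `run_and_check`, and the floating-point tolerance are all hypothetical, because the template only specifies `arithmetic/logic` and does not define a comparison rule.

```python
import math
import operator
from typing import Any, Dict, List, Tuple

# Assumed operation names; the template only specifies "arithmetic/logic".
OPS = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
    "divide": operator.truediv,
    "greater": operator.gt,
}


def run_and_check(extraction: Dict[str, Any]) -> Tuple[bool, List[Any]]:
    """Re-run PROGRAM and compare its last result with the stated ANSWER."""
    trace: List[Any] = []
    for op in extraction["PROGRAM"]:
        args = []
        for key in ("ARG1", "ARG2"):
            arg = op[key]
            # "#k" means: use the result of step k, which must already exist.
            args.append(trace[int(arg[1:])] if isinstance(arg, str) else arg)
        trace.append(OPS[op["OPERATION"]](*args))
    if not trace:
        raise ValueError("PROGRAM must contain at least one operation")

    stated, computed = extraction["ANSWER"], trace[-1]
    if isinstance(stated, bool) or isinstance(computed, bool):
        # Boolean answers must match exactly and must both be booleans.
        consistent = (
            isinstance(stated, bool)
            and isinstance(computed, bool)
            and stated == computed
        )
    else:
        consistent = math.isclose(stated, computed, rel_tol=1e-9)
    return consistent, trace


ok, trace = run_and_check({
    "PROGRAM": [
        {"OPERATION": "add", "ARG1": 12, "ARG2": 8},
        {"OPERATION": "multiply", "ARG1": "#0", "ARG2": 3},
    ],
    "ANSWER": 60,
})
# ok is True and trace is [20, 60]
```

The returned `trace` holds one value per step, so a mismatch can be narrowed down by comparing each entry with the intermediate values stated in the answer.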

The template is a tool for **formalizing and constraining** the self-reported reasoning of an AI system, aiming to make its decision-making process more transparent, reliable, and accountable.