## Line Chart: Loss vs. Gradient Updates

### Overview

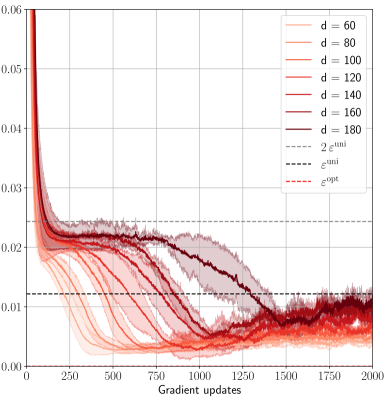

The image presents a line chart of loss (y-axis) against gradient updates (x-axis). Several lines correspond to different values of a parameter 'd'. Three black reference curves show theoretical loss levels for the uniform (e<sup>uni</sup>, 2 * e<sup>uni</sup>) and optimal (e<sup>opt</sup>) scenarios. The chart shows the loss decreasing as gradient updates accumulate, at rates that vary with 'd'.

### Components/Axes

* **X-axis:** "Gradient updates", ranging from 0 to 2000, with tick marks at 250, 500, 750, 1000, 1250, 1500, 1750.

* **Y-axis:** Loss, ranging from 0.00 to 0.06, with tick marks at 0.00, 0.02, 0.04, 0.06.

* **Legend:** Located in the top-right corner, containing the following labels and corresponding line styles/colors:
  * d = 60 (light orange)
  * d = 80 (orange)
  * d = 100 (reddish-orange)
  * d = 120 (red)
  * d = 140 (dark red)
  * d = 160 (very dark red)
  * d = 180 (darkest red)
  * 2 * e<sup>uni</sup> (dashed black)
  * e<sup>uni</sup> (dotted black)
  * e<sup>opt</sup> (solid black)

### Detailed Analysis

The chart displays several lines representing different values of 'd'. The lines generally exhibit a decreasing trend, indicating a reduction in loss as gradient updates increase.

* **d = 60 (light orange):** Starts at approximately 0.055 and decreases rapidly initially, then plateaus around 0.015-0.02.

* **d = 80 (orange):** Starts at approximately 0.05 and decreases rapidly, then plateaus around 0.015-0.02.

* **d = 100 (reddish-orange):** Starts at approximately 0.048 and decreases rapidly, then plateaus around 0.015-0.02.

* **d = 120 (red):** Starts at approximately 0.045 and decreases rapidly, then plateaus around 0.015-0.02.

* **d = 140 (dark red):** Starts at approximately 0.042 and decreases rapidly, then plateaus around 0.015-0.02.

* **d = 160 (very dark red):** Starts at approximately 0.04 and decreases rapidly, then plateaus around 0.015-0.02.

* **d = 180 (darkest red):** Starts at approximately 0.038 and decreases rapidly, then plateaus around 0.015-0.02.

* **2 * e<sup>uni</sup> (dashed black):** Starts at approximately 0.03 and decreases slowly, remaining above the level at which the 'd' curves plateau.

* **e<sup>uni</sup> (dotted black):** Starts at approximately 0.015 and decreases slowly, lying close to the level at which the 'd' curves plateau.

* **e<sup>opt</sup> (solid black):** Starts at approximately 0.008 and decreases slowly, remaining below the other lines.

All lines for different 'd' values converge towards a similar loss level around 0.015-0.02 after approximately 1000 gradient updates. The theoretical curves (dashed, dotted, and solid black) provide benchmarks for comparison.
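A statement like "converge after approximately 1000 gradient updates" can be checked against logged loss values. One way is to estimate each curve's plateau as the mean of its final stretch, then find the first update after which the loss stays within a tolerance of that level. The sketch below assumes the loss is logged once per gradient update as a plain list of floats. The function name `plateau_stats`, the `tail_frac` and `rel_tol` parameters, and their defaults are hypothetical choices and do not come from the chart.

```python
from statistics import fmean


def plateau_stats(losses, tail_frac=0.25, rel_tol=0.10):
    """Estimate the plateau level of a loss curve and when it is reached.

    Hypothetical helper: assumes losses[i] is the loss after i gradient
    updates. The plateau is the mean of the last `tail_frac` of the curve.
    The settling step is the first index from which every later loss stays
    within `rel_tol` (relative) of that plateau; it equals len(losses) if
    even the final value lies outside the band.
    """
    if not losses:
        raise ValueError("losses must be non-empty")
    tail_len = max(1, int(len(losses) * tail_frac))
    plateau = fmean(losses[-tail_len:])
    band = rel_tol * abs(plateau)

    # Walk backwards to find the longest suffix that stays inside the band.
    settle = len(losses)
    for i in range(len(losses) - 1, -1, -1):
        if abs(losses[i] - plateau) > band:
            break
        settle = i
    return plateau, settle
```

You could run this once per value of 'd' and compare the results. That would show whether every curve settles near the same level (the description reports roughly 0.015-0.02) and whether the settling steps cluster around 1000 updates.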

### Key Observations

* The lines for different 'd' values start from different loss levels (about 0.038 to 0.055) but converge as the number of gradient updates increases.

* Higher values of 'd' (160, 180) seem to show a slightly faster initial decrease in loss than lower values (60, 80), although they also start from a lower loss.

* The theoretical curve e<sup>opt</sup> (solid black) stays below all other curves, consistent with its role as the optimal benchmark.

* The theoretical curve e<sup>uni</sup> (dotted black) is consistently higher than e<sup>opt</sup>, and 2 * e<sup>uni</sup> (dashed black) is the highest of the three theoretical curves.
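The curves start at different levels (about 0.038 for d = 180 up to about 0.055 for d = 60). Because of this, a "faster initial decrease" can mean either a larger absolute drop or a larger relative drop, and the two measures can rank curves differently. The following is a minimal sketch that reports both over an early window. It assumes one logged loss per update; the name `initial_drop` and the default `window` of 100 updates are hypothetical.

```python
def initial_drop(losses, window=100):
    """Absolute and relative loss reduction over the first `window` updates.

    Hypothetical helper: assumes losses[i] is the loss after i gradient
    updates and that losses[0] > 0.
    """
    if window <= 0 or window >= len(losses):
        raise ValueError("window must be in [1, len(losses) - 1]")
    start, end = losses[0], losses[window]
    absolute = start - end           # raw loss reduction
    relative = absolute / start      # reduction as a fraction of the start
    return absolute, relative
```

The relative measure normalizes for the starting point. A curve that starts lower, as the higher-'d' curves do here, can therefore show a smaller absolute drop but a larger relative one.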

### Interpretation

The chart shows how the parameter 'd' affects the convergence of the loss during gradient updates. The curves for all values of 'd' converge to a similar level, so the choice of 'd' appears to matter less once enough updates have been made. 'd' may influence the speed of learning, but not necessarily the final outcome.

The theoretical curves provide baselines for evaluating the algorithm. The solid black line (e<sup>opt</sup>) represents the optimal loss, while e<sup>uni</sup> and 2 * e<sup>uni</sup> represent less favorable scenarios. Judging by the approximate values read from the chart, the 'd' curves settle at about 0.015-0.02. That is close to e<sup>uni</sup> and below 2 * e<sup>uni</sup>, but still above e<sup>opt</sup> (about 0.008 or lower). The algorithm therefore appears to reach a stable loss regardless of 'd'. That loss improves on the 2 * e<sup>uni</sup> benchmark but remains noticeably above the optimal curve.
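Placing a final loss relative to the three reference curves takes only a few comparisons. The sketch below assumes the benchmark values are read at the same gradient update as the loss. The function name `benchmark_band` and its band labels are hypothetical and do not come from the source.

```python
def benchmark_band(final_loss, e_opt, e_uni):
    """Place a loss value relative to e_opt, e_uni and 2 * e_uni.

    Hypothetical helper: e_opt and e_uni are the benchmark values taken at
    the same gradient update as final_loss; the third reference is 2 * e_uni.
    """
    if not (0 <= e_opt <= e_uni):
        raise ValueError("expected 0 <= e_opt <= e_uni")
    if final_loss <= e_opt:
        return "at or below e_opt"
    if final_loss <= e_uni:
        return "between e_opt and e_uni"
    if final_loss <= 2 * e_uni:
        return "between e_uni and 2*e_uni"
    return "above 2*e_uni"
```

The benchmark curves themselves change with the number of updates. The comparison is therefore only meaningful when all three values come from the same point on the x-axis.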