# Technical Document Extraction: Comparison of Neural Network Architectures

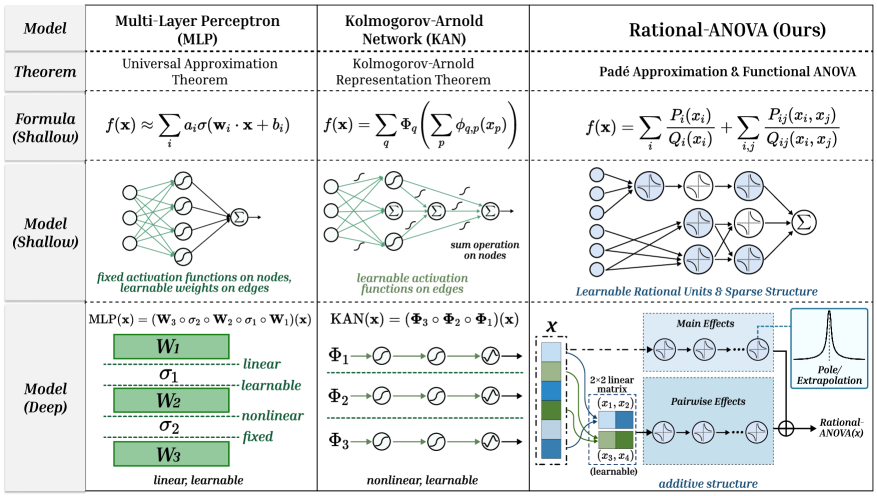

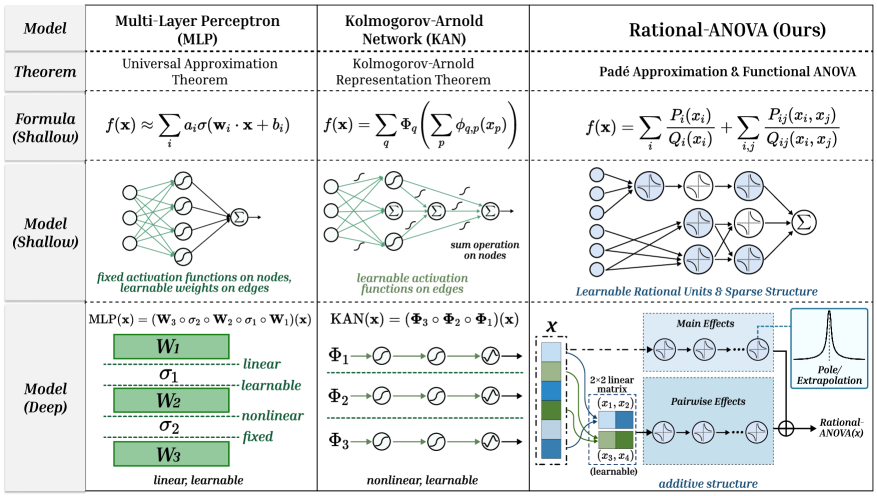

This document extracts the data and structural comparisons presented in the image above. The image is a technical table comparing three neural network models: **Multi-Layer Perceptron (MLP)**, **Kolmogorov-Arnold Network (KAN)**, and the proposed **Rational-ANOVA**.

---

## 1. High-Level Table Structure

The information is organized into a grid with five functional rows and three model columns.

| Row Category | Column 1: Multi-Layer Perceptron (MLP) | Column 2: Kolmogorov-Arnold Network (KAN) | Column 3: Rational-ANOVA (Ours) |
| :--- | :--- | :--- | :--- |
| **Model** | Multi-Layer Perceptron (MLP) | Kolmogorov-Arnold Network (KAN) | Rational-ANOVA (Ours) |
| **Theorem** | Universal Approximation Theorem | Kolmogorov-Arnold Representation Theorem | Padé Approximation & Functional ANOVA |
| **Formula (Shallow)** | $f(\mathbf{x}) \approx \sum_{i} a_i \sigma(\mathbf{w}_i \cdot \mathbf{x} + b_i)$ | $f(\mathbf{x}) = \sum_{q} \Phi_q \left( \sum_{p} \phi_{q,p}(x_p) \right)$ | $f(\mathbf{x}) = \sum_{i} \frac{P_i(x_i)}{Q_i(x_i)} + \sum_{i,j} \frac{P_{ij}(x_i, x_j)}{Q_{ij}(x_i, x_j)}$ |
| **Model (Shallow)** | Diagram of fixed activations on nodes | Diagram of learnable activations on edges | Diagram of learnable rational units |
| **Model (Deep)** | Stacked linear/nonlinear layers | Composition of nonlinear functions | Additive structure with Main/Pairwise effects |
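
The Theorem row cites Padé approximation for Rational-ANOVA. As a textbook illustration (not taken from the figure), the [1/1] Padé approximant of $e^x$ about $0$ is $(1 + x/2)/(1 - x/2)$: it agrees with the Taylor series through the $x^2$ term while using only degree-one numerator and denominator polynomials. The short sketch below compares it with the degree-2 Taylor polynomial, which uses the same number of coefficients (three); the function names are illustrative.

```python
import math


def pade_exp_1_1(x: float) -> float:
    """[1/1] Pade approximant of exp(x) about 0: (1 + x/2) / (1 - x/2).

    The denominator vanishes at x = 2, so this approximant has a pole there.
    """
    return (1.0 + x / 2.0) / (1.0 - x / 2.0)


def taylor_exp_2(x: float) -> float:
    """Degree-2 Taylor polynomial of exp(x) about 0: 1 + x + x^2/2."""
    return 1.0 + x + x * x / 2.0


for x in (-1.0, -0.5, 0.5):
    exact = math.exp(x)
    print(f"x={x:+.1f}  pade_err={abs(pade_exp_1_1(x) - exact):.4f}  "
          f"taylor_err={abs(taylor_exp_2(x) - exact):.4f}")
```

At these three points the rational approximant is closer to $e^x$ than the polynomial. It also introduces a pole at $x = 2$ that $e^x$ does not have, so it is only useful away from that point.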

---

## 2. Detailed Component Analysis

### Column 1: Multi-Layer Perceptron (MLP)

* **Theorem:** Relies on the **Universal Approximation Theorem**.

* **Formula (Shallow):** Approximates a function as a weighted sum of nonlinear activations of linear combinations of inputs.

* **Model (Shallow) Diagram:**

    * **Structure:** Input nodes connect to a hidden layer of four nodes, which then converge to a single summation ($\Sigma$) output node.

    * **Key Characteristic:** Text states: *"fixed activation functions on nodes, learnable weights on edges"*.

* **Model (Deep) Diagram:**

    * **Logic:** Represented as a composition of matrices and activations: $\text{MLP}(\mathbf{x}) = (\mathbf{W}_3 \circ \sigma_2 \circ \mathbf{W}_2 \circ \sigma_1 \circ \mathbf{W}_1)(\mathbf{x})$.

    * **Visual Stack:** Alternating green blocks ($W_1, W_2, W_3$) and dashed lines ($\sigma_1, \sigma_2$).

    * **Labels:** $W$ layers are labeled as **linear** and **learnable**. $\sigma$ layers are labeled as **nonlinear** and **fixed**.
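
The shallow MLP formula $f(\mathbf{x}) \approx \sum_i a_i \sigma(\mathbf{w}_i \cdot \mathbf{x} + b_i)$ can be sketched in a few lines of pure Python. This is a minimal illustration, assuming a sigmoid for the fixed activation $\sigma$; the weights are arbitrary values rather than trained parameters, and the helper names are hypothetical.

```python
import math
from typing import Sequence


def sigmoid(z: float) -> float:
    """Fixed activation: the same function at every hidden node, never trained."""
    return 1.0 / (1.0 + math.exp(-z))


def shallow_mlp(x: Sequence[float],
                w: Sequence[Sequence[float]],
                b: Sequence[float],
                a: Sequence[float]) -> float:
    """Evaluate f(x) = sum_i a_i * sigma(w_i . x + b_i).

    w (input-to-hidden weights), b (biases) and a (hidden-to-output weights)
    are the learnable parameters; sigma is fixed.
    """
    total = 0.0
    for w_i, b_i, a_i in zip(w, b, a):
        pre_activation = sum(w_ij * x_j for w_ij, x_j in zip(w_i, x)) + b_i
        total += a_i * sigmoid(pre_activation)
    return total


# Four hidden nodes, as in the shallow diagram; the numbers are arbitrary.
W = [[1.0, -1.0], [0.5, 0.5], [-2.0, 1.0], [0.0, 3.0]]
B = [0.0, -0.5, 0.1, 0.0]
A = [1.0, -1.0, 0.5, 2.0]
print(shallow_mlp([0.2, 0.4], W, B, A))
```

Training would adjust `W`, `B`, and `A` only; `sigmoid` stays the same, which is the "fixed activations on nodes, learnable weights on edges" split described above.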

### Column 2: Kolmogorov-Arnold Network (KAN)

* **Theorem:** Relies on the **Kolmogorov-Arnold Representation Theorem**.

* **Formula (Shallow):** A nested summation where the inner functions $\phi_{q,p}$ and outer functions $\Phi_q$ are univariate.

* **Model (Shallow) Diagram:**

    * **Structure:** Input nodes connect to summation nodes ($\Sigma$) through edges that each carry an activation-function symbol.

    * **Key Characteristic:** Text states: *"learnable activation functions on edges"* and *"sum operation on nodes"*.

* **Model (Deep) Diagram:**

    * **Logic:** Represented as a composition of function layers: $\text{KAN}(\mathbf{x}) = (\mathbf{\Phi}_3 \circ \mathbf{\Phi}_2 \circ \mathbf{\Phi}_1)(\mathbf{x})$.

    * **Visual Flow:** Three parallel horizontal tracks ($\Phi_1, \Phi_2, \Phi_3$), each showing a sequence of three learnable activation nodes.

    * **Labels:** The layers are described as **nonlinear** and **learnable**.
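
The shallow KAN formula nests univariate functions: inner $\phi_{q,p}$ on each input and outer $\Phi_q$ on each sum. The original KAN formulation parameterizes these as B-splines; to keep the sketch short, it swaps in a hypothetical polynomial helper `poly`, so each "learnable" function is just a coefficient list. The example hand-picks coefficients that express $x_0 x_1 = (x_0 + x_1)^2/4 - (x_0 - x_1)^2/4$ in this nested form.

```python
from typing import Callable, List, Sequence

Univariate = Callable[[float], float]


def poly(coeffs: Sequence[float]) -> Univariate:
    """Hypothetical stand-in for a learnable univariate function: a polynomial
    whose coefficients c_0, c_1, ... would be the trainable parameters."""
    def f(t: float) -> float:
        result = 0.0
        for c in reversed(coeffs):  # Horner's rule
            result = result * t + c
        return result
    return f


def shallow_kan(x: Sequence[float],
                inner: List[List[Univariate]],
                outer: List[Univariate]) -> float:
    """Evaluate f(x) = sum_q Phi_q( sum_p phi_{q,p}(x_p) ).

    inner[q][p] is phi_{q,p} (one learnable function per edge) and outer[q]
    is Phi_q; the nodes themselves only add.
    """
    return sum(
        Phi_q(sum(phi_qp(x_p) for phi_qp, x_p in zip(inner_q, x)))
        for inner_q, Phi_q in zip(inner, outer)
    )


# Multiplication written in this form: x0*x1 = (x0 + x1)^2/4 - (x0 - x1)^2/4.
inner = [[poly([0.0, 1.0]), poly([0.0, 1.0])],    # q = 0: x0 + x1
         [poly([0.0, 1.0]), poly([0.0, -1.0])]]   # q = 1: x0 - x1
outer = [poly([0.0, 0.0, 0.25]),                  # Phi_0(s) = s^2/4
         poly([0.0, 0.0, -0.25])]                 # Phi_1(s) = -s^2/4
print(shallow_kan([3.0, 4.0], inner, outer))  # 12.0
```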

### Column 3: Rational-ANOVA (Ours)

* **Theorem:** Combines **Padé Approximation** and **Functional ANOVA**.

* **Formula (Shallow):** Expresses the function as a sum of univariate rational functions (Main Effects) and bivariate rational functions (Pairwise Effects).

* **Model (Shallow) Diagram:**

    * **Structure:** Input nodes connect to blue-shaded "Rational Units" (depicting rational function curves). The structure is sparse, with some inputs skipping directly to specific units.

    * **Key Characteristic:** Text states: *"Learnable Rational Units & Sparse Structure"*.

* **Model (Deep) Diagram:**

    * **Input $\mathcal{X}$:** A vertical vector of features.

    * **Main Effects Block:** Features pass into a sequence of rational units.

    * **Pairwise Effects Block:** Features are grouped via a "$2 \times 2$ linear matrix (learnable)" to create pairs like $(x_1, x_2)$ and $(x_3, x_4)$, which then pass through rational units.

    * **Pole/Extrapolation:** A call-out box shows a graph with a sharp peak, labeled *"Pole/Extrapolation"*, indicating the model's ability to represent poles, the points where a rational function's denominator vanishes.

    * **Output:** The Main and Pairwise effects are combined at a summation node ($\oplus$) to produce **Rational-ANOVA(x)**.

    * **Labels:** The overall architecture is described as an **additive structure**.
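
The shallow Rational-ANOVA formula can be read as code in the same way. This is an illustrative reading of the formula only, not the authors' implementation: the polynomial representation (coefficient lists for univariate $P_i, Q_i$, and dicts from exponent pairs to coefficients for bivariate $P_{ij}, Q_{ij}$), the explicit `pairs` dict standing in for the sparse structure, and all helper names are assumptions.

```python
from typing import Dict, List, Sequence, Tuple

Poly1 = Sequence[float]               # univariate: coefficients c_0, c_1, ..., c_m
Poly2 = Dict[Tuple[int, int], float]  # bivariate: {(p, q): c} means c * u**p * v**q


def eval_poly1(coeffs: Poly1, t: float) -> float:
    return sum(c * t ** k for k, c in enumerate(coeffs))


def eval_poly2(terms: Poly2, u: float, v: float) -> float:
    return sum(c * u ** p * v ** q for (p, q), c in terms.items())


def rational_anova(x: Sequence[float],
                   main: List[Tuple[Poly1, Poly1]],
                   pairs: Dict[Tuple[int, int], Tuple[Poly2, Poly2]]) -> float:
    """f(x) = sum_i P_i(x_i)/Q_i(x_i) + sum_(i,j) P_ij(x_i, x_j)/Q_ij(x_i, x_j).

    main[i] holds (P_i, Q_i). Only index pairs listed in `pairs` contribute,
    a hypothetical stand-in for the sparse structure. Poles are not guarded:
    a zero denominator raises ZeroDivisionError.
    """
    main_effects = sum(eval_poly1(P, x_i) / eval_poly1(Q, x_i)
                       for (P, Q), x_i in zip(main, x))
    pairwise_effects = sum(eval_poly2(P, x[i], x[j]) / eval_poly2(Q, x[i], x[j])
                           for (i, j), (P, Q) in pairs.items())
    return main_effects + pairwise_effects


# Three features: main effects x0/(1 + x0^2), 1, 2*x2; one pair (0, 2): x0*x2/(1 + x0^2).
main = [([0.0, 1.0], [1.0, 0.0, 1.0]), ([1.0], [1.0]), ([0.0, 2.0], [1.0])]
pairs = {(0, 2): ({(1, 1): 1.0}, {(0, 0): 1.0, (2, 0): 1.0})}
print(rational_anova([1.0, 5.0, 3.0], main, pairs))  # 0.5 + 1 + 6 + 1.5 = 9.0
```

Because each term is a separate entry in `main` or `pairs`, every main and pairwise effect can be evaluated and plotted on its own, which is the interpretability argument made in the summary below.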

---

## 3. Summary of Comparative Trends

1. **Parameter Location:** MLP and KAN both place learnable parameters on edges, as scalar weights in MLP and as univariate functions in KAN; Rational-ANOVA places them in learnable rational units arranged in a structured additive format.

2. **Function Type:** MLP uses fixed nonlinearities; KAN uses learnable univariate functions; Rational-ANOVA uses learnable rational functions (ratios of polynomials, $P/Q$).

3. **Interpretability:** Rational-ANOVA explicitly separates "Main Effects" and "Pairwise Effects," following the Functional ANOVA decomposition, which is typically more interpretable than the dense connectivity of MLPs.
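
To make the Functional ANOVA separation concrete, here is a standard textbook case (not from the source): take $f(x_1, x_2) = x_1 x_2$ with $x_1, x_2$ independent and uniform on $[0, 1]$. Averaging out variables one at a time gives the constant, main, and pairwise terms:

$$
\begin{aligned}
f_0 &= \int_0^1\!\!\int_0^1 x_1 x_2 \, dx_1 \, dx_2 = \tfrac{1}{4}, \\
f_1(x_1) &= \int_0^1 x_1 x_2 \, dx_2 - f_0 = \tfrac{x_1}{2} - \tfrac{1}{4}, \\
f_2(x_2) &= \int_0^1 x_1 x_2 \, dx_1 - f_0 = \tfrac{x_2}{2} - \tfrac{1}{4}, \\
f_{12}(x_1, x_2) &= f - f_0 - f_1 - f_2 = \left(x_1 - \tfrac{1}{2}\right)\left(x_2 - \tfrac{1}{2}\right).
\end{aligned}
$$

Each main and pairwise term averages to zero over any one of its own variables, so $f_1$ and $f_2$ capture only what each input contributes alone and $f_{12}$ captures only the interaction.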


# Technical Document Extraction: Model Comparison Table

## Structure Overview

The image presents a comparative analysis of three computational models across five rows:

1. **Model** (Header)

2. **Theorem** (Second row)

3. **Formula (Shallow)** (Third row)

4. **Model (Shallow)** (Fourth row)

5. **Model (Deep)** (Fifth row)

---

## Model-Specific Details

### 1. Multi-Layer Perceptron (MLP)

#### Theorem

- **Universal Approximation Theorem**

#### Formula (Shallow)

- \( f(\mathbf{x}) \approx \sum_i a_i\sigma(\mathbf{w}_i \cdot \mathbf{x} + b_i) \)

#### Model (Shallow)

- **Components**:

    - Fixed activation functions on nodes

    - Learnable weights on edges

- **Diagram**:

    - Fully connected network with summation node

    - Activation functions represented by σ symbols

#### Model (Deep)

- **Architecture**:

    - Three learnable linear layers (W₁, W₂, W₃) interleaved with two fixed activations (σ₁, σ₂)

- **Layer Properties**:

    - W₁: Linear, learnable

    - σ₁: Nonlinear, fixed

    - W₂: Linear, learnable

    - σ₂: Nonlinear, fixed

    - W₃: Linear, learnable

- **Formula**:

    - \( \text{MLP}(\mathbf{x}) = (\mathbf{W}_3 \circ \sigma_2 \circ \mathbf{W}_2 \circ \sigma_1 \circ \mathbf{W}_1)(\mathbf{x}) \)
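
The deep composition \( (\mathbf{W}_3 \circ \sigma_2 \circ \mathbf{W}_2 \circ \sigma_1 \circ \mathbf{W}_1)(\mathbf{x}) \) is applied right to left: first \( \mathbf{W}_1 \), last \( \mathbf{W}_3 \). A minimal sketch, assuming tanh for both fixed activations, no bias terms (matching the formula as written), and arbitrary illustrative weight matrices:

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]


def linear(W: Matrix, x: Sequence[float]) -> List[float]:
    """Learnable linear layer (no bias, matching the formula): returns W x."""
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in W]


def fixed_activation(h: Sequence[float]) -> List[float]:
    """Fixed elementwise nonlinearity; tanh is an assumed choice."""
    return [math.tanh(v) for v in h]


def deep_mlp(x: Sequence[float], W1: Matrix, W2: Matrix, W3: Matrix) -> List[float]:
    """MLP(x) = (W3 o sigma2 o W2 o sigma1 o W1)(x), applied right to left."""
    h = fixed_activation(linear(W1, x))  # sigma1(W1 x)
    h = fixed_activation(linear(W2, h))  # sigma2(W2 h)
    return linear(W3, h)                 # W3 h: the final layer is linear


# 2 inputs -> 3 hidden -> 3 hidden -> 1 output; the weights are arbitrary.
W1 = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]
W2 = [[0.5, 0.5, 0.0], [0.0, 1.0, 1.0], [1.0, 0.0, -1.0]]
W3 = [[1.0, -1.0, 2.0]]
print(deep_mlp([0.3, -0.2], W1, W2, W3))
```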

---

### 2. Kolmogorov-Arnold Network (KAN)

#### Theorem

- **Kolmogorov-Arnold Representation Theorem**

#### Formula (Shallow)

- \( f(\mathbf{x}) = \sum_q \Phi_q \left( \sum_p \phi_{q,p}(x_p) \right) \)

#### Model (Shallow)

- **Components**:

    - Learnable activation functions on edges

    - Sum operation on nodes

- **Diagram**:

    - Nodes with Φ symbols

    - Edge-based activation functions

#### Model (Deep)

- **Architecture**:

    - Three sequential functions (Φ₁, Φ₂, Φ₃)

- **Function Properties**:

    - Φ₁: Nonlinear, learnable

    - Φ₂: Nonlinear, learnable

    - Φ₃: Nonlinear, learnable

- **Formula**:

    - \( \text{KAN}(\mathbf{x}) = (\Phi_3 \circ \Phi_2 \circ \Phi_1)(\mathbf{x}) \)
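
Each \( \Phi_l \) in the deep formula can be viewed as a layer: a matrix of univariate functions, one per edge, whose outputs are summed at each node. The sketch below assumes that reading. The functions are hand-picked Python callables standing in for learned ones, and the 2 → 2 → 1 → 1 layer widths are illustrative, chosen so that the network computes \( \exp(\sin x_0 + x_1^2) \).

```python
import math
from typing import Callable, List, Sequence

Univariate = Callable[[float], float]
KANLayer = List[List[Univariate]]  # layer[j][i] is phi_{j,i}: from input i to output node j


def apply_layer(layer: KANLayer, h: Sequence[float]) -> List[float]:
    """Each output node j sums phi_{j,i}(h_i) over its inputs (sum operation on nodes)."""
    return [sum(phi(h_i) for phi, h_i in zip(row, h)) for row in layer]


def kan(x: Sequence[float], layers: Sequence[KANLayer]) -> List[float]:
    """KAN(x) = (Phi_3 o Phi_2 o Phi_1)(x) for layers = [Phi_1, Phi_2, Phi_3]."""
    h = list(x)
    for layer in layers:  # Phi_1 is applied first
        h = apply_layer(layer, h)
    return h


def zero(t: float) -> float:
    return 0.0


def identity(t: float) -> float:
    return t


def square(t: float) -> float:
    return t * t


Phi_1: KANLayer = [[math.sin, zero], [zero, square]]  # 2 -> 2
Phi_2: KANLayer = [[identity, identity]]              # 2 -> 1
Phi_3: KANLayer = [[math.exp]]                        # 1 -> 1
print(kan([0.3, 0.7], [Phi_1, Phi_2, Phi_3]))
```

In a trained KAN every entry of every layer would be a learnable function; here only the shape of the computation is shown.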

---

### 3. Rational-ANOVA (Ours)

#### Theorem

- **Padé Approximation & Functional ANOVA**

#### Formula (Shallow)

- \( f(\mathbf{x}) = \sum_i \frac{P_i(x_i)}{Q_i(x_i)} + \sum_{i,j} \frac{P_{ij}(x_i, x_j)}{Q_{ij}(x_i, x_j)} \)

#### Model (Shallow)

- **Components**:

    - Learnable rational units

    - Sparse structure

- **Diagram**:

    - Nodes with rational function symbols

    - Additive structure representation

#### Model (Deep)

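- **Architecture**:

    - **Main Effects**:

        - Each feature passes through a univariate rational unit \( P_i(x_i)/Q_i(x_i) \)

    - **Pairwise Effects**:

        - Features are grouped into pairs such as (x₁, x₂) and (x₃, x₄) by a learnable 2×2 linear matrix, and the pairs pass through rational units

    - **Additive Structure**:

        - Main and pairwise effects are summed to produce Rational-ANOVA(x)

- **Key Features**:

    - Pole/Extrapolation handling

    - Sparse connectivity pattern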
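
The "Pole/Extrapolation" feature refers to two properties of rational functions that are easy to check numerically. A rational function blows up near a root of its denominator (a pole), which no polynomial can do, and when numerator and denominator have equal degree it levels off at the ratio of their leading coefficients instead of diverging. The unit \( r(x) = (2x + 1)/(x - 1) \) below is a hypothetical example, not one taken from the source.

```python
def r(x: float) -> float:
    """Hypothetical rational unit P(x)/Q(x) with P(x) = 2x + 1 and Q(x) = x - 1."""
    return (2.0 * x + 1.0) / (x - 1.0)


# Pole: Q vanishes at x = 1, and r(1 + eps) = 2 + 3/eps grows without bound.
for eps in (1e-1, 1e-2, 1e-3):
    print(f"r(1 + {eps:g}) = {r(1.0 + eps):.1f}")

# Extrapolation: r(x) - 2 = 3/(x - 1), so r levels off at 2 (the ratio of
# leading coefficients) instead of diverging like any nonconstant polynomial.
for x in (1e2, 1e4, 1e6):
    print(f"r({x:g}) = {r(x):.6f}")
```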

---

## Diagram Component Analysis

### Spatial Grounding of Elements

1. **Legend**: No legend is visible in the table.

2. **Color Coding**:

    - MLP: Blue tones (W₁, W₂, W₃ blocks)

    - KAN: Green tones (Φ₁, Φ₂, Φ₃ symbols)

    - Rational-ANOVA: Red tones (rational function symbols)

### Trend Verification

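- **MLP**: The deep model repeats the shallow model's two ingredients, learnable linear maps and fixed activations, as alternating layers

- **KAN**: Layers of learnable univariate functions composed in sequence

- **Rational-ANOVA**: Additive decomposition into main (univariate) and pairwise (bivariate) effects, with pole behavior supporting extrapolation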

---

## Language Analysis

- **Primary Language**: English

- **Secondary Elements**:

    - Mathematical notation (LaTeX)

    - Technical terminology (ANOVA, perceptron, etc.)

---

## Data Table Reconstruction

| Model | Theorem | Formula (Shallow) | Model (Shallow) Components | Model (Deep) Architecture |
| --- | --- | --- | --- | --- |
| Multi-Layer Perceptron (MLP) | Universal Approximation Theorem | \( f(\mathbf{x}) \approx \sum_i a_i\sigma(\mathbf{w}_i \cdot \mathbf{x} + b_i) \) | Fixed activations, learnable weights | Three learnable linear layers interleaved with two fixed nonlinear activations |
| Kolmogorov-Arnold Network (KAN) | Kolmogorov-Arnold Representation Theorem | \( f(\mathbf{x}) = \sum_q \Phi_q \left( \sum_p \phi_{q,p}(x_p) \right) \) | Learnable edge activations, node summation | Three sequential nonlinear, learnable function layers |
| Rational-ANOVA (Ours) | Padé Approximation & Functional ANOVA | \( f(\mathbf{x}) = \sum_i \frac{P_i(x_i)}{Q_i(x_i)} + \sum_{i,j} \frac{P_{ij}(x_i, x_j)}{Q_{ij}(x_i, x_j)} \) | Learnable rational units, sparse structure | Sum of univariate main effects and pairwise effects, with pole/extrapolation handling |

---

## Critical Observations

1. **Functional Complexity**:

    - MLP: Fixed nonlinearities with learnable weights

    - KAN: Entirely learnable nonlinear functions

    - Rational-ANOVA: Learnable rational functions with an explicit main/pairwise effect decomposition

2. **Structural Differences**:

    - MLP: Dense connectivity

    - KAN: Sequential function composition

    - Rational-ANOVA: Sparse additive structure with rational basis functions

3. **Theoretical Foundations**:

    - MLP: Universal approximation guarantees

    - KAN: Kolmogorov-Arnold representation

    - Rational-ANOVA: Padé approximation theory combined with functional ANOVA decomposition
