
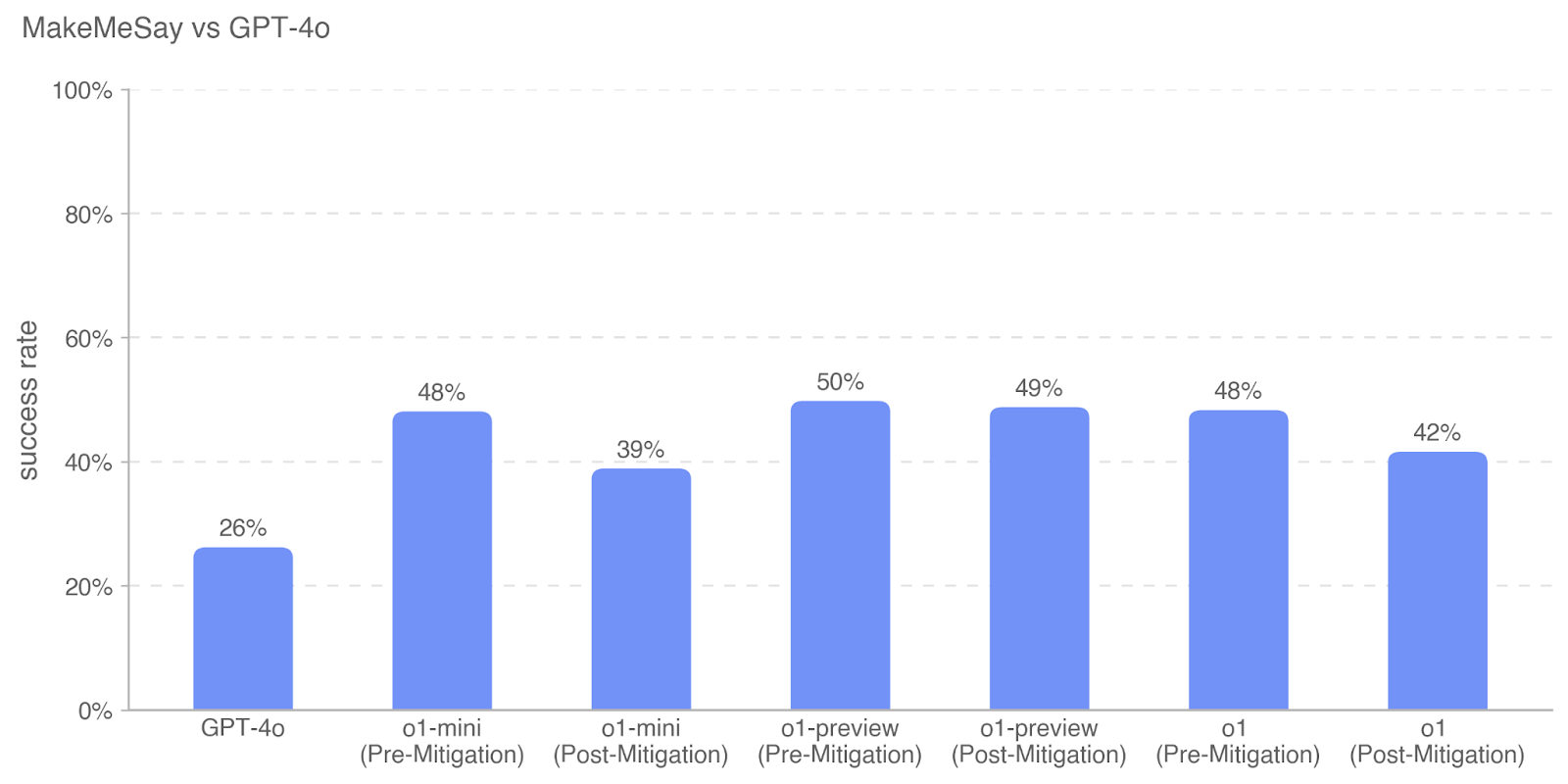

## Bar Chart: MakeMeSay vs GPT-4o

### Overview

The image is a vertical bar chart titled "MakeMeSay vs GPT-4o". It compares the "success rate" (as a percentage) of several OpenAI models on an evaluation called "MakeMeSay". The chart contrasts a baseline model (GPT-4o) with variants of the "o1" model family, each measured both before ("Pre-Mitigation") and after ("Post-Mitigation") safety mitigations were applied.

### Components/Axes

* **Chart Title:** "MakeMeSay vs GPT-4o" (located at the top-left).

* **Y-Axis:**

  * **Label:** "success rate" (rotated vertically on the left side).

  * **Scale:** Linear, from 0% to 100%, with major gridlines and labels at 0%, 20%, 40%, 60%, 80%, and 100%.

* **X-Axis:**

  * **Categories (from left to right):**

    1. GPT-4o

    2. o1-mini (Pre-Mitigation)

    3. o1-mini (Post-Mitigation)

    4. o1-preview (Pre-Mitigation)

    5. o1-preview (Post-Mitigation)

    6. o1 (Pre-Mitigation)

    7. o1 (Post-Mitigation)

* **Data Series:** A single series represented by solid blue bars. There is no separate legend, as the categories are directly labeled on the x-axis.

* **Data Labels:** The exact percentage value is displayed above each bar.

### Detailed Analysis

The chart presents the following specific data points, read from left to right:

1. **GPT-4o:** Success rate of **26%**. This is the lowest value on the chart.

2. **o1-mini (Pre-Mitigation):** Success rate of **48%**.

3. **o1-mini (Post-Mitigation):** Success rate of **39%**. This represents a **9 percentage point decrease** from its pre-mitigation state.

4. **o1-preview (Pre-Mitigation):** Success rate of **50%**. This is the highest value on the chart.

5. **o1-preview (Post-Mitigation):** Success rate of **49%**. This represents a **1 percentage point decrease** from its pre-mitigation state.

6. **o1 (Pre-Mitigation):** Success rate of **48%**.

7. **o1 (Post-Mitigation):** Success rate of **42%**. This represents a **6 percentage point decrease** from its pre-mitigation state.
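Each percentage-point decrease above is the post-mitigation value subtracted from the pre-mitigation value. A minimal Python sketch that reproduces them from the data labels; the dictionary layout and the helper name `pp_change` are illustrative choices, not part of the source:

```python
# Success rates (%) as read from the chart's data labels: (pre, post).
pre_post = {
    "o1-mini": (48, 39),
    "o1-preview": (50, 49),
    "o1": (48, 42),
}

def pp_change(pre: int, post: int) -> int:
    """Percentage-point change from pre- to post-mitigation (negative = decrease)."""
    return post - pre

changes = {model: pp_change(pre, post) for model, (pre, post) in pre_post.items()}

for model, delta in changes.items():
    # prints "o1-mini: -9 pp", "o1-preview: -1 pp", "o1: -6 pp"
    print(f"{model}: {delta:+d} pp")
```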

### Key Observations

* **Baseline Comparison:** All "o1" family models, in both pre- and post-mitigation states, show a substantially higher success rate on "MakeMeSay" (39% to 50%) than the baseline GPT-4o model (26%).

* **Impact of Mitigation:** For every "o1" model variant, the "Post-Mitigation" success rate is lower than its corresponding "Pre-Mitigation" rate. This indicates the mitigation measures consistently reduce the measured success rate.

* **Variability in Mitigation Effect:** The magnitude of the reduction varies:

  * **o1-mini:** Large reduction (9 points).

  * **o1:** Moderate reduction (6 points).

  * **o1-preview:** Very small reduction (1 point), suggesting its high performance was largely retained after mitigation.

* **Highest Performer:** The "o1-preview (Pre-Mitigation)" model achieved the highest success rate at 50%.
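The observations above reduce to a few comparisons over the seven data labels. The sketch below checks them in Python; it also adds a relative reduction (the drop as a share of the pre-mitigation rate), which the chart itself does not report and is included here only as an extra normalization. Variable names are illustrative.

```python
# Success rates (%) read from the chart's data labels.
BASELINE = 26  # GPT-4o
rates = {
    ("o1-mini", "pre"): 48,
    ("o1-mini", "post"): 39,
    ("o1-preview", "pre"): 50,
    ("o1-preview", "post"): 49,
    ("o1", "pre"): 48,
    ("o1", "post"): 42,
}

# Baseline comparison: percentage-point gap of every o1 bar above GPT-4o.
gaps = {key: rate - BASELINE for key, rate in rates.items()}

# Relative mitigation effect: reduction as a percentage of the pre-mitigation rate.
models = ["o1-mini", "o1-preview", "o1"]
relative = {
    m: 100 * (rates[(m, "pre")] - rates[(m, "post")]) / rates[(m, "pre")]
    for m in models
}

# Rank models from largest to smallest relative reduction.
ranking = sorted(models, key=relative.get, reverse=True)

# Highest bar among the o1 variants (GPT-4o, at 26%, is below all of them).
best = max(rates, key=rates.get)

print("gap over GPT-4o:", min(gaps.values()), "to", max(gaps.values()), "pp")
print("relative reductions (%):", relative)
print("ranking:", ranking)
print("highest:", best, rates[best])
```

Here the relative ranking (o1-mini 18.75%, o1 12.5%, o1-preview 2%) matches the absolute one; a relative view matters more when pre-mitigation rates differ widely.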

### Interpretation

This chart likely comes from a dangerous-capability evaluation of persuasion. In MakeMeSay, the evaluated model acts as a "manipulator" and tries to get a second model, the "manipulatee" (here GPT-4o, hence the title "vs GPT-4o"), to say a secret codeword. The manipulator loses if it says the codeword itself or if the manipulatee correctly guesses the codeword afterwards. The success rate is the fraction of games the manipulator wins, so a higher bar indicates a stronger ability to covertly steer another party's output: a riskier capability, not a vulnerability of the model under test.

The data suggests two main findings:

1. **Increased Capability/Risk:** The newer "o1" model family wins this game far more often than GPT-4o (39% to 50% versus 26%). Because the evaluated model is the one doing the persuading, the higher scores point to greater manipulative capability rather than to a weakness that the test probes.

2. **Effectiveness of Safety Measures:** Every o1 variant scores lower after mitigation, so the applied safety techniques reduced success on this test across the board. The effect is not uniform, however. The 9-point drop for "o1-mini" indicates the mitigation was comparatively effective there, "o1" fell a moderate 6 points, and "o1-preview" barely moved (1 point), meaning the mitigation did little to curb this capability in that model. Without sample sizes or error bars, a 1-point difference may also fall within run-to-run noise.

In essence, the chart illustrates a common tension in AI development: increased model capability (as seen in the high pre-mitigation scores) can come with increased risk on safety benchmarks, which targeted mitigations can then reduce, though with varying degrees of success.