## Bar Chart: MakeMeSay vs GPT-4o

### Overview

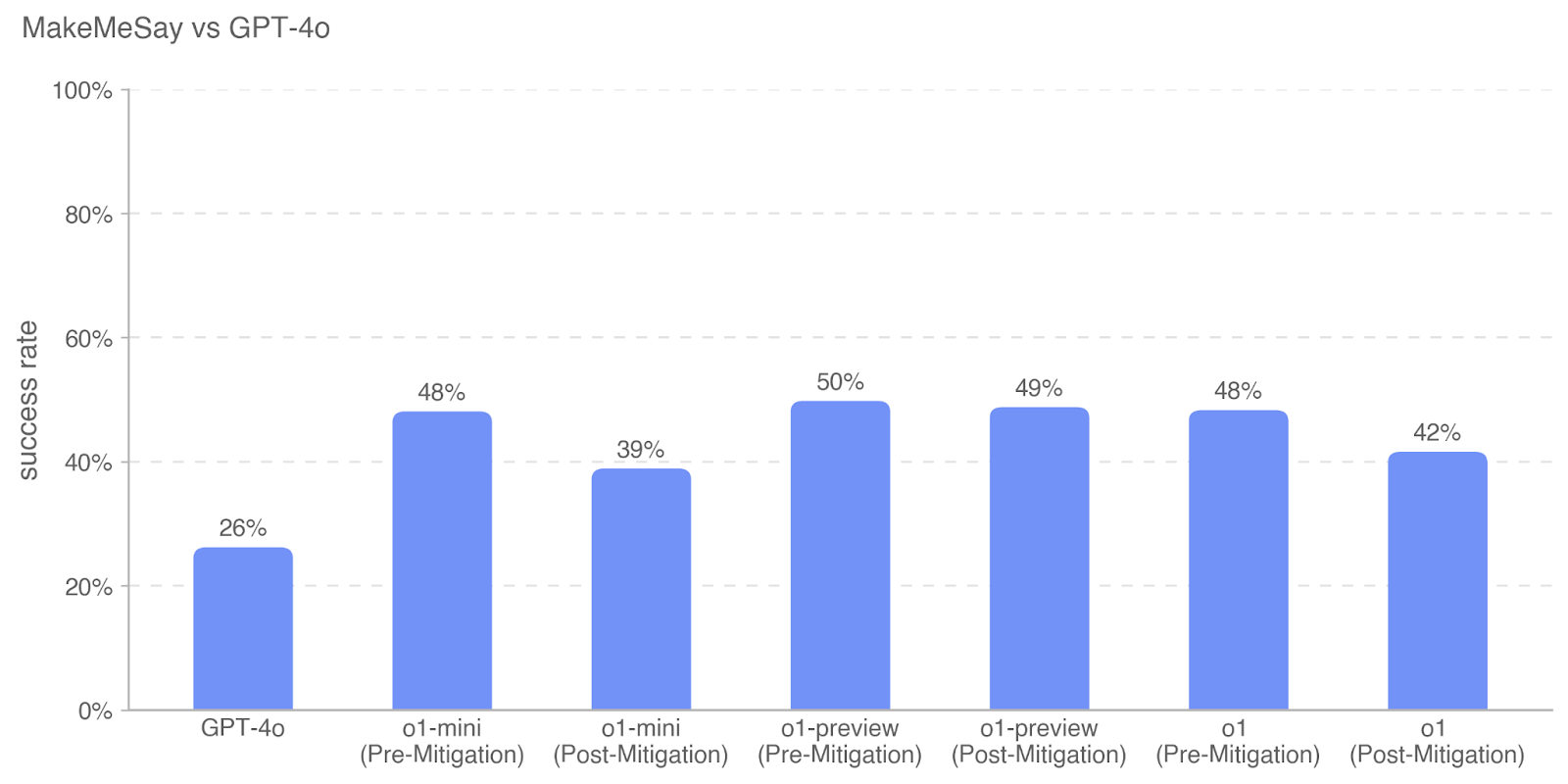

The chart compares the success rates of different AI models (GPT-4o, o1-mini, o1-preview, o1) under two conditions: "Pre-Mitigation" and "Post-Mitigation." Success rates are represented as percentages on the y-axis, with categories listed on the x-axis.

### Components/Axes

- **X-Axis (Categories)**:

  - GPT-4o

  - o1-mini (Pre-Mitigation)

  - o1-mini (Post-Mitigation)

  - o1-preview (Pre-Mitigation)

  - o1-preview (Post-Mitigation)

  - o1 (Pre-Mitigation)

  - o1 (Post-Mitigation)

- **Y-Axis (Values)**: Success rate (0% to 100%, increments of 20%).

- **Legend**: No explicit legend is visible. All bars are blue, suggesting a single data series.

- **Axis Titles**:

  - X-Axis: Unlabeled (categories inferred from labels).

  - Y-Axis: "success rate."

### Detailed Analysis

- **GPT-4o**: 26% success rate (lowest among all categories).

- **o1-mini**:

  - Pre-Mitigation: 48%

  - Post-Mitigation: 39% (drop of 9 percentage points).

- **o1-preview**:

  - Pre-Mitigation: 50% (highest overall).

  - Post-Mitigation: 49% (drop of 1 percentage point).

- **o1**:

  - Pre-Mitigation: 48%

  - Post-Mitigation: 42% (drop of 6 percentage points).
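The pre/post differences above are differences between percentages, so they are most safely read as percentage points rather than relative changes. The following minimal sketch shows both calculations side by side, using only the values listed in this section; the names `rates` and `mitigation_drop` are illustrative and not part of the source.

```python
# Success rates (%) as listed in the Detailed Analysis: (pre, post) per model.
# GPT-4o is omitted because the chart shows a single bar for it.
rates = {
    "o1-mini": (48, 39),
    "o1-preview": (50, 49),
    "o1": (48, 42),
}


def mitigation_drop(pre, post):
    """Return (absolute drop in percentage points, relative drop in percent)."""
    absolute = pre - post
    relative = 100 * absolute / pre
    return absolute, relative


# Hypothetical helper structure: model name -> (absolute, relative) drop.
drops = {model: mitigation_drop(pre, post) for model, (pre, post) in rates.items()}

for model, (absolute, relative) in drops.items():
    print(f"{model}: {absolute} pp drop ({relative:.1f}% relative)")
```

The absolute figures match the drops quoted in the list; the relative figures are larger because they are expressed as a share of each pre-mitigation rate.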

### Key Observations

1. **Highest Performance**: o1-preview (Pre-Mitigation) achieves the highest success rate (50%).

2. **Mitigation Impact**:

   - o1-mini and o1 show the largest drops post-mitigation (9 and 6 percentage points, respectively).

   - o1-preview’s success rate remains nearly unchanged (1 percentage point drop).

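3. **Lowest Performer**: GPT-4o (26%) has the lowest success rate on the chart, below every o1-family bar in either condition. It appears as a single bar with no pre-/post-mitigation split.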

4. **Consistency**: o1-preview maintains the highest post-mitigation rate (49%), suggesting resilience to mitigation.

### Interpretation

The data indicates that **pre-mitigation models outperform their post-mitigation counterparts** in all three pairs shown, with o1-preview demonstrating the most stability (a drop of 1 percentage point). The larger declines for o1-mini (9 points) and o1 (6 points) suggest that mitigation may reduce their success rate on this task. GPT-4o’s 26% is the lowest value on the chart, well below the 39% to 50% range of the o1-family bars, although it is a single data point with no mitigation comparison. The near-identical pre- and post-mitigation rates for o1-preview imply that mitigation has minimal impact on its measured success rate; the chart itself does not show why, though robust design or inherent stability are possible explanations. These differences could inform prioritization of mitigation efforts for models like o1-mini and o1, where mitigation appears to lower success rates the most.