## Diagram and Chart: LLM Task Formulation and Performance Comparison

### Overview

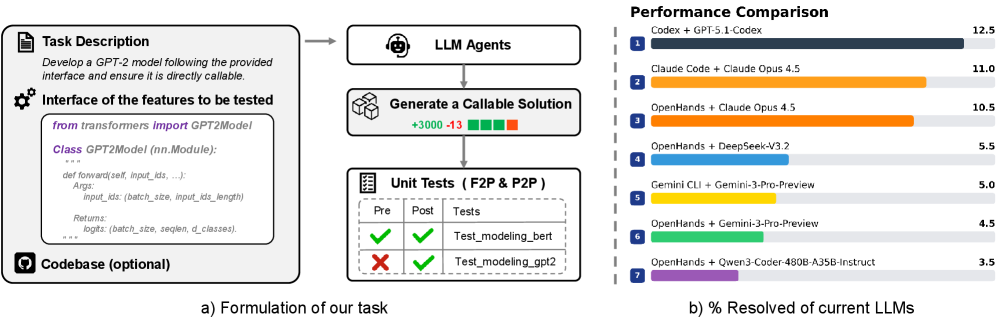

The image is a composite figure containing two distinct parts. On the left (labeled **a)**) is a flowchart diagram titled "Formulation of our task," which outlines a process for testing Large Language Model (LLM) agents on a code generation task. On the right (labeled **b)**) is a horizontal bar chart titled "Performance Comparison," which displays the performance scores of various LLM agent combinations on the described task.

### Components/Axes

**Left Diagram (a): Formulation of our task**

* **Structure:** A flowchart with boxes and arrows indicating a process flow.

* **Components (from top to bottom, left to right):**

1. **Task Description Box:** Contains the text: "Develop a GPT-2 model following the provided interface and ensure it is directly callable."

2. **Interface of the features to be tested Box:** Contains a Python code snippet:

```python
from transformers import GPT2Model

class GPT2Model(nn.Module):
    ...
    def forward(self, input_ids, ...):
        Args:
            input_ids: (batch_size, input_ids_length)
            ...
        Returns:
            logits: (batch_size, seqlen, d_classes)
```

3. **Codebase (optional) Icon:** A GitHub logo icon with the label "Codebase (optional)" at the bottom left.

4. **LLM Agents Box:** A central box with a robot head icon, labeled "LLM Agents." An arrow points from the "Task Description" to this box.

5. **Generate a Callable Solution Box:** Below "LLM Agents," with a box icon. It contains the text "+3000 -13" followed by four small colored squares (green, green, green, red). An arrow points from "LLM Agents" to this box.

6. **Unit Tests (F2P & P2P) Box:** At the bottom of the flow, with a checklist icon. It contains a table with headers "Pre", "Post", and "Tests". The table rows are:

* Row 1: Green checkmark (Pre), Green checkmark (Post), "Test_modeling_bert"

* Row 2: Red X (Pre), Green checkmark (Post), "Test_modeling_gpt2"

* **Flow:** The overall flow is: Task Description -> LLM Agents -> Generate a Callable Solution -> Unit Tests.

**Right Chart (b): Performance Comparison**

* **Chart Type:** Horizontal bar chart.

* **Title:** "Performance Comparison"

* **Y-axis (Categories):** Lists 7 different LLM agent combinations, each preceded by a numbered blue square (1-7).

* **X-axis (Values):** Numerical scale starting at 0; the largest bar value is 12.5. The axis has no title of its own, but the chart's subtitle, "% Resolved of current LLMs," indicates that the values are the percentage of tasks resolved.

* **Legend/Labels:** Each bar is a different color and has a text label to its right, followed by a numerical value.

### Detailed Analysis

**Left Diagram (a) - Process Details:**

The diagram formalizes an evaluation pipeline. The core task is to have an LLM agent generate a callable Python solution (a GPT-2 model class) that adheres to a specified interface. The "Generate a Callable Solution" step shows a change summary (+3000 -13) and a status indicator (3 green squares, 1 red square), likely representing lines of code added and removed. The final step evaluates the generated solution using two types of unit tests. The figure does not expand the abbreviations, but the Pre/Post table matches the common benchmark convention: "F2P" (fail-to-pass) tests fail before the change and pass after it, and "P2P" (pass-to-pass) tests pass both before and after. Accordingly, "Test_modeling_bert" passes both before and after generation (P2P), while "Test_modeling_gpt2" fails initially but passes after generation (F2P).
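The Pre/Post logic of the unit-test table can be sketched in a few lines. This is a minimal illustration, not the benchmark's actual harness: the `Outcome` class, the `classify` and `is_resolved` helpers, and the "every listed test passes afterwards" criterion are all assumptions, based on reading F2P and P2P as fail-to-pass and pass-to-pass.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """One row of the Pre/Post table (hypothetical structure)."""
    name: str
    passed_pre: bool   # status before the agent's change
    passed_post: bool  # status after the agent's change


def classify(outcome: Outcome) -> str:
    """Label a test by its pre/post status (assumed convention)."""
    if not outcome.passed_pre and outcome.passed_post:
        return "F2P"  # fail-to-pass: the new feature now works
    if outcome.passed_pre and outcome.passed_post:
        return "P2P"  # pass-to-pass: existing behavior is preserved
    return "FAILED"   # the test does not pass after the change


def is_resolved(outcomes: list[Outcome]) -> bool:
    """Assumed criterion: every listed test passes after the change."""
    return all(o.passed_post for o in outcomes)


# The two rows shown in the figure's table.
rows = [
    Outcome("Test_modeling_bert", passed_pre=True, passed_post=True),
    Outcome("Test_modeling_gpt2", passed_pre=False, passed_post=True),
]
labels = {o.name: classify(o) for o in rows}
print(labels)
print(is_resolved(rows))
```

Under these assumptions, a single regression in a previously passing test would be enough to mark a solution as unresolved, which is why the P2P row matters even though it is unrelated to GPT-2.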

**Right Chart (b) - Performance Data:**

The chart ranks 7 LLM combinations. The data points, extracted by matching the colored bar to its label and reading the value at the bar's end, are:

1. **Codex + GPT-5.1-Codex** (Dark Blue bar): **12.5**

2. **Claude Code + Claude Opus 4.5** (Orange bar): **11.0**

3. **OpenHands + Claude Opus 4.5** (Light Orange bar): **10.5**

4. **OpenHands + DeepSeek-V3.2** (Light Blue bar): **5.5**

5. **Gemini CLI + Gemini-3-Pro-Preview** (Yellow bar): **5.0**

6. **OpenHands + Gemini-3-Pro-Preview** (Green bar): **4.5**

7. **OpenHands + Qwen3-Coder-480B-A35B-Instruct** (Purple bar): **3.5**

**Trend Verification:** The bars are arranged in descending order of their numerical value, decreasing stepwise from the top-ranked combination (12.5) to the lowest (3.5). The largest drop, 5.0 points, separates the top three performers (all above 10.0) from the bottom four (all at 5.5 or below).
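The ordering and the size of the gap can be checked directly from the transcribed values. The snippet below is a small sketch; the variable names are illustrative, and the numbers are the bar values as read from the chart, so they inherit any reading error.

```python
# Scores transcribed from panel (b), in the order shown (% Resolved).
scores = [
    ("Codex + GPT-5.1-Codex", 12.5),
    ("Claude Code + Claude Opus 4.5", 11.0),
    ("OpenHands + Claude Opus 4.5", 10.5),
    ("OpenHands + DeepSeek-V3.2", 5.5),
    ("Gemini CLI + Gemini-3-Pro-Preview", 5.0),
    ("OpenHands + Gemini-3-Pro-Preview", 4.5),
    ("OpenHands + Qwen3-Coder-480B-A35B-Instruct", 3.5),
]
values = [value for _, value in scores]

# Check that each bar is at least as large as the one below it.
is_descending = all(a >= b for a, b in zip(values, values[1:]))

# Difference between each pair of adjacent bars.
drops = [a - b for a, b in zip(values, values[1:])]
largest_drop = max(drops)
# Number of entries that sit above the largest drop.
split_after = drops.index(largest_drop) + 1

# Ratio of the best score to the worst score.
top_to_bottom_ratio = values[0] / values[-1]

print(is_descending, largest_drop, split_after, round(top_to_bottom_ratio, 2))
```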

### Key Observations

1. **Performance Gap:** There is a substantial performance gap between the top three LLM combinations (scores 10.5-12.5) and the remaining four (scores 3.5-5.5). The top performer scores about 3.6 times as high as the lowest performer (12.5 vs. 3.5).

2. **Agent Framework Impact:** The "OpenHands" framework appears in four of the seven entries (positions 3, 4, 6, 7). Its performance varies dramatically depending on the paired model, from a high of 10.5 (with Claude Opus 4.5) to a low of 3.5 (with Qwen3-Coder).

3. **Model Pairing:** The highest score is achieved by the combination labeled "Codex + GPT-5.1-Codex." The shared name hints that the model is a variant intended for the Codex agent, although the figure itself does not confirm this. The second and third places both use "Claude Opus 4.5," paired with different agent frameworks ("Claude Code" vs. "OpenHands").

4. **Task Specificity:** The left diagram specifies a very concrete, low-level programming task (implementing a PyTorch module for a GPT-2 model). The performance chart likely measures success on this or similar code generation/resolution tasks.
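The framework and model-pairing observations above can be made concrete by grouping the seven entries by framework and by model and measuring the score spread within each group. This is a rough sketch over the transcribed values; the `spread_by` helper is hypothetical, and with only seven data points the result is indicative at best.

```python
from collections import defaultdict

# (framework, model, % Resolved) as transcribed from panel (b).
entries = [
    ("Codex", "GPT-5.1-Codex", 12.5),
    ("Claude Code", "Claude Opus 4.5", 11.0),
    ("OpenHands", "Claude Opus 4.5", 10.5),
    ("OpenHands", "DeepSeek-V3.2", 5.5),
    ("Gemini CLI", "Gemini-3-Pro-Preview", 5.0),
    ("OpenHands", "Gemini-3-Pro-Preview", 4.5),
    ("OpenHands", "Qwen3-Coder-480B-A35B-Instruct", 3.5),
]


def spread_by(key_index: int) -> dict[str, float]:
    """Return max - min score for each group that has more than one entry."""
    groups = defaultdict(list)
    for entry in entries:
        groups[entry[key_index]].append(entry[2])
    return {key: max(vals) - min(vals) for key, vals in groups.items() if len(vals) > 1}


framework_spread = spread_by(0)  # same framework, different models
model_spread = spread_by(1)      # same model, different frameworks

print(framework_spread)
print(model_spread)
```

In this data, swapping the model under a fixed framework moves the score far more than swapping the framework under a fixed model.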

### Interpretation

This figure presents a two-part analysis of LLM capabilities in a specific software engineering context. The left side defines a rigorous, automated evaluation framework: it moves from a natural language task description, through LLM code generation, to verification via unit tests. This setup measures not just code generation, but the creation of *functionally correct and callable* solutions.

The right side's performance data shows that current LLM agents vary widely in their ability to solve this type of concrete programming problem, and that absolute scores are low: the best combination resolves only 12.5%. With the agent framework held fixed at "OpenHands," scores range from 3.5 to 10.5 depending on the model, whereas "Claude Opus 4.5" scores nearly the same under "Claude Code" (11.0) and "OpenHands" (10.5). This suggests that, within this figure, the underlying model's capability matters more than the agent scaffolding, although seven data points cannot establish this firmly.

The divide between scores above 10 and scores at 5.5 or below could reflect a capability threshold, but even the top group resolves only roughly one task in eight to ten, so no combination handles the task reliably. Interpreting the numbers further requires the exact definition of the "% Resolved" metric and the specific test suite used.