## Diagram: COMET Commonsense Transformers Architecture

### Overview

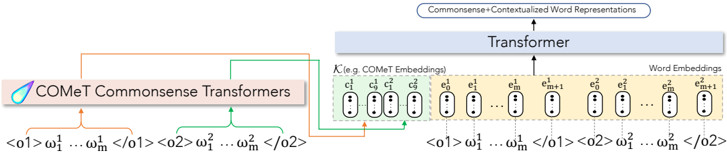

The image is a diagram of an architecture built around COMET (Commonsense Transformers). Two input sequences pass through a "COMET Commonsense Transformers" block; the resulting COMET embeddings, together with word embeddings, feed a Transformer that outputs commonsense and contextualized word representations.

### Components

* **Top:** "Commonsense+Contextualized Word Representations" (output of the entire process).

* **Middle:**

  * "Transformer" (a model that processes embeddings).

  * "K (e.g. COMET Embeddings)" (input to the Transformer, consisting of COMET embeddings and word embeddings).

* **Left:** "COMET Commonsense Transformers" (initial processing stage).

* **Bottom:** Input sequences represented as `<o1> ω₁¹ ... ωₘ¹ </o1>` and `<o2> ω₁² ... ωₘ² </o2>`.

### Detailed Analysis

1. **Input Sequences:**

   * Two input sequences are shown: `<o1> ω₁¹ ... ωₘ¹ </o1>` and `<o2> ω₁² ... ωₘ² </o2>`.

   * Both sequences are fed into the "COMET Commonsense Transformers" block.

2. **COMET Commonsense Transformers:**

   * This block processes the two input sequences.

   * Its output is used to create "K (e.g. COMET Embeddings)".

3. **K (e.g. COMET Embeddings):**

   * This block contains two types of embeddings: COMET embeddings (`c`) and word embeddings (`e`).

   * COMET embeddings are represented as `c₀¹`, `c₁¹`, `c₀²`, `c₁²`, with vertical ellipses indicating more embeddings.

   * Word embeddings are represented as `e₀¹`, `e₁¹`, `eₘ¹`, `eₘ₊₁¹`, `e₀²`, `e₁²`, `eₘ²`, `eₘ₊₁²`, with vertical ellipses indicating more embeddings.

4. **Transformer:**

   * The "Transformer" block receives "K (e.g. COMET Embeddings)" as input.

   * The output of the "Transformer" is "Commonsense+Contextualized Word Representations".

5. **Connections:**

   * An orange arrow connects the first input sequence `<o1> ω₁¹ ... ωₘ¹ </o1>` to the "COMET Commonsense Transformers" block.

   * A green arrow connects the second input sequence `<o2> ω₁² ... ωₘ² </o2>` to the "COMET Commonsense Transformers" block.

   * An orange arrow connects the "COMET Commonsense Transformers" block to the COMET embeddings `c` within "K (e.g. COMET Embeddings)".

   * A green arrow connects the "COMET Commonsense Transformers" block to the COMET embeddings `c` within "K (e.g. COMET Embeddings)".

   * An arrow points upwards from "Transformer" to "Commonsense+Contextualized Word Representations".

   * Dotted lines connect the input sequences `<o1> ω₁¹ ... ωₘ¹ </o1>` and `<o2> ω₁² ... ωₘ² </o2>` to the word embeddings `e` within "K (e.g. COMET Embeddings)".
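The flow from the input sequences into "K" can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the diagram authors' implementation: `comet_embeddings`, `word_embeddings`, `build_k`, and `DIM` are hypothetical names, the vectors are deterministic placeholders rather than real COMET or word embeddings, and placing each sequence's COMET rows before its word rows is a guess about ordering. The sketch also assumes that the indices `e₀` through `eₘ₊₁` cover the `m` words plus the two delimiter tokens.

```python
import hashlib
from typing import List

Vector = List[float]
DIM = 4  # hypothetical embedding width, chosen only for the example


def _toy_vector(key: str, dim: int = DIM) -> Vector:
    """Deterministic placeholder vector derived from a string (not a real embedding)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return [byte / 255.0 for byte in digest[:dim]]


def word_embeddings(tokens: List[str], tag: str) -> List[Vector]:
    """e_0 .. e_{m+1}: one vector per token, including the <tag> and </tag> delimiters."""
    wrapped = [f"<{tag}>"] + tokens + [f"</{tag}>"]
    return [_toy_vector("word:" + token) for token in wrapped]


def comet_embeddings(tokens: List[str], n_vectors: int = 2) -> List[Vector]:
    """c_0, c_1, ...: stand-in for whatever the COMET block emits for one sequence."""
    text = " ".join(tokens)
    return [_toy_vector(f"comet:{i}:{text}") for i in range(n_vectors)]


def build_k(sequences: List[List[str]]) -> List[Vector]:
    """Stack COMET rows and word rows for every sequence into one input matrix K."""
    rows: List[Vector] = []
    for index, tokens in enumerate(sequences, start=1):
        rows.extend(comet_embeddings(tokens))              # solid arrows via the COMET block
        rows.extend(word_embeddings(tokens, f"o{index}"))  # dotted lines from the input
    return rows


seqs = [["she", "dropped", "the", "glass"], ["it", "broke"]]
K = build_k(seqs)
print(len(K), len(K[0]))  # 14 rows (2 + 6 for the first sequence, 2 + 4 for the second), 4 columns
```

Keeping the COMET rows and the word rows in one matrix mirrors the single "K" block in the diagram, which holds both `c` and `e` vectors before they reach the Transformer.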

### Key Observations

* The diagram illustrates a two-stage process: initial processing by COMET Commonsense Transformers, followed by a Transformer model.

* The input sequences are processed to generate both COMET embeddings and word embeddings, which are then fed into the Transformer.

* The final output is labeled "Commonsense+Contextualized Word Representations": word representations that are both contextualized and informed by commonsense knowledge.

### Interpretation

The diagram depicts a system that injects commonsense knowledge into word representations. The "COMET Commonsense Transformers" block likely extracts commonsense information from each input sequence. That information, together with standard word embeddings, is passed to the Transformer, which produces word representations that are both contextualized and commonsense-aware. The design rests on the premise that commonsense knowledge improves word representations for downstream natural language processing tasks.
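One way to picture how a Transformer could mix the two kinds of embeddings is scaled dot-product self-attention over all rows of "K". The sketch below is a simplified illustration, not the depicted model: it uses a single head with identity projections, the toy vectors are made up, and keeping only the word positions as output is an assumption based on the label "Word Representations". The names `self_attention`, `comet_rows`, and `word_rows` are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [x / total for x in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def self_attention(rows: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product self-attention with identity Q/K/V projections."""
    scale = math.sqrt(len(rows[0]))
    outputs: List[Vector] = []
    for query in rows:
        weights = softmax([dot(query, key) / scale for key in rows])
        # Each output row is a weighted average of every input row.
        outputs.append(
            [sum(w * value[j] for w, value in zip(weights, rows)) for j in range(len(query))]
        )
    return outputs


# Toy stand-ins for the contents of K: two COMET rows followed by three word rows.
comet_rows = [[1.0, 0.0], [0.0, 1.0]]
word_rows = [[0.5, 0.5], [1.0, 1.0], [0.0, 0.2]]

contextual = self_attention(comet_rows + word_rows)
word_outputs = contextual[len(comet_rows):]  # keep only the word positions
```

Because every word position attends over both the COMET rows and the other word rows, each vector in `word_outputs` blends commonsense and contextual information, which is the effect the output label describes.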