## Diagram: LLM Agent System Architecture

### Overview

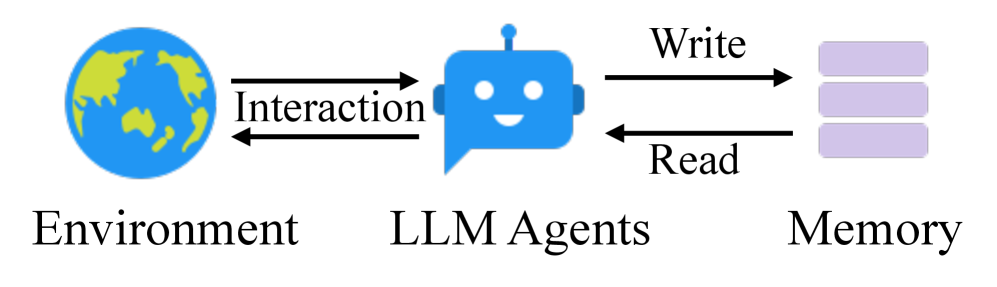

The image is a conceptual diagram illustrating the high-level architecture and information flow of a Large Language Model (LLM) agent system. It depicts three primary components and their bidirectional interactions.

### Components

The diagram consists of three main graphical components arranged horizontally from left to right, connected by labeled arrows.

1. **Component 1 (Left):**

* **Icon:** A stylized globe, colored blue (oceans) and green (landmasses).

* **Label:** "Environment" (text positioned directly below the icon).

* **Position:** Left side of the diagram.

2. **Component 2 (Center):**

* **Icon:** A blue robot head with a smiling face, resembling a chat bubble or agent avatar.

* **Label:** "LLM Agents" (text positioned directly below the icon).

* **Position:** Center of the diagram.

3. **Component 3 (Right):**

* **Icon:** A stack of three horizontal, light purple rectangles, representing a database or memory store.

* **Label:** "Memory" (text positioned directly below the icon).

* **Position:** Right side of the diagram.

4. **Interaction Arrows & Labels:**

* **Between Environment and LLM Agents:** A pair of horizontal, black arrows pointing in opposite directions. The label "Interaction" is placed centrally between these arrows.

* **Between LLM Agents and Memory:** A pair of horizontal, black arrows pointing in opposite directions. The top arrow points from LLM Agents to Memory and is labeled "Write"; the bottom arrow points from Memory to LLM Agents and is labeled "Read".

### Detailed Analysis

The diagram defines two bidirectional data flows, both centered on the LLM Agents:

1. The **LLM Agents** engage in a bidirectional **Interaction** with the external **Environment**. This implies the agents perceive information from the environment and can act upon it.

2. The **LLM Agents** have a bidirectional connection with **Memory**.

* **Write:** The agents can store information, experiences, or learned data into the memory component.

* **Read:** The agents can retrieve previously stored information from memory to inform their interactions with the environment.
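The Write and Read arrows can be pictured as a two-method interface on the memory component. The following is a minimal sketch, not something the diagram specifies: the `Memory` class, its method names, and the substring-matching retrieval are all assumptions chosen for illustration, and a real system might use embeddings or a database instead.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Memory:
    """Hypothetical in-process store standing in for the Memory component."""

    records: List[str] = field(default_factory=list)

    def write(self, item: str) -> None:
        # "Write" arrow: LLM Agents -> Memory.
        self.records.append(item)

    def read(self, query: str, limit: int = 3) -> List[str]:
        # "Read" arrow: Memory -> LLM Agents.
        # Naive case-insensitive substring match, most recent first.
        needle = query.lower()
        hits = [r for r in reversed(self.records) if needle in r.lower()]
        return hits[:limit]


memory = Memory()
memory.write("User prefers metric units")
memory.write("Door in room 2 is locked")
print(memory.read("door"))
```

Keeping the store behind `write` and `read` means the retrieval strategy can change without touching the agent code that calls it.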

### Key Observations

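* The layout is linear, with the LLM Agents in the center mediating between the external world (Environment) and the internal storage (Memory). No arrow connects the Environment and Memory directly, which emphasizes a separation of concerns between the three components.

* Both connections are drawn as pairs of opposing arrows, so information flows in both directions on each. Only the Memory connection labels its directions separately ("Write" and "Read"); the Environment connection carries the single label "Interaction".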

* The use of simple, universal icons (globe, robot, database stack) makes the diagram easily interpretable without specialized knowledge.

### Interpretation

This diagram presents a foundational model for autonomous or semi-autonomous AI agents powered by LLMs. It abstracts away the complexities of the LLM's internal workings to focus on its role as an interactive agent.

* **What it demonstrates:** The core loop of an intelligent agent: **Perceive** (Read from Memory/Interact with Environment) -> **Reason/Decide** (within LLM Agents) -> **Act** (Write to Memory/Interact with Environment).

* **Relationships:** The Environment is the source of tasks and context. The LLM Agent is the central reasoning engine. Memory provides persistence, allowing the agent to draw on past interactions and maintain state across steps, which is crucial for complex, multi-step tasks.

* **Notable Implication:** The separation of "Memory" from the "LLM Agents" suggests an architecture in which the agent's stored knowledge is managed outside the model, potentially allowing for larger, more persistent, or more structured memory than the model's parameters and context window alone provide. This is a common design pattern for building more capable and persistent AI systems.
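The Perceive, Reason/Decide, Act loop described above can be sketched in a few lines. This is an illustrative sketch under stated assumptions rather than an implementation taken from the diagram: `CounterEnvironment`, `stub_policy`, and `run_agent` are hypothetical names, the policy is a fixed stub standing in for an LLM call, and memory is a plain list.

```python
from typing import Callable, List


class CounterEnvironment:
    """Toy Environment (hypothetical): raise a counter to a target value."""

    def __init__(self, target: int) -> None:
        self.value = 0
        self.target = target

    def observe(self) -> str:
        return f"value={self.value} target={self.target}"

    def step(self, action: str) -> None:
        if action == "increment":
            self.value += 1

    def done(self) -> bool:
        return self.value >= self.target


def stub_policy(observation: str, recalled: List[str]) -> str:
    """Stand-in for the LLM call; a real agent would prompt a model here."""
    return "increment"


def run_agent(
    env: CounterEnvironment,
    policy: Callable[[str, List[str]], str],
    memory: List[str],
    max_steps: int = 10,
) -> int:
    """Run the loop until the task is done or max_steps is reached."""
    steps = 0
    while not env.done() and steps < max_steps:
        observation = env.observe()              # Perceive: Environment -> agent
        recalled = memory[-3:]                   # Perceive: Read from Memory
        action = policy(observation, recalled)   # Reason/Decide
        env.step(action)                         # Act: agent -> Environment
        memory.append(f"{observation} -> {action}")  # Act: Write to Memory
        steps += 1
    return steps


env = CounterEnvironment(target=3)
memory: List[str] = []
steps = run_agent(env, stub_policy, memory)
print(steps, memory)
```

Because the memory list is created outside `run_agent`, it outlives any single run, which mirrors the diagram's placement of Memory as a component separate from the agent.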