# Technical Document Extraction: Diagram Analysis

## Diagram Overview

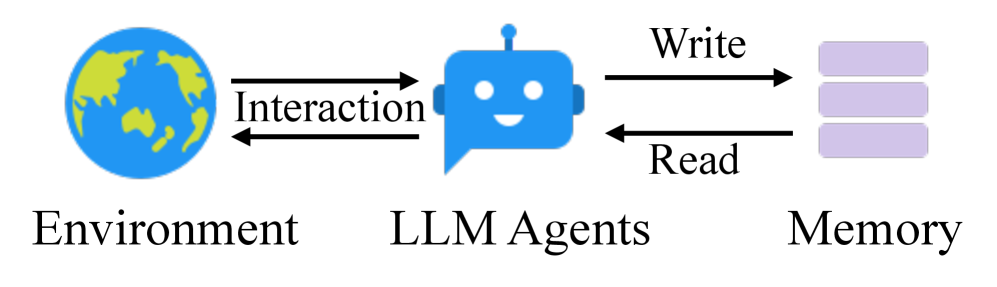

The image depicts a **component interaction diagram** illustrating the relationship between three core elements: **Environment**, **LLM Agents**, and **Memory**. The diagram uses directional arrows to represent data flow and interactions.

---

## Component Breakdown

### 1. Environment

- **Label**: "Environment" (bottom-left)

- **Visual Representation**: A globe icon (blue with green landmasses).

- **Function**: Represents external systems, users, or real-world data sources interacting with the LLM Agents.

### 2. LLM Agents

- **Label**: "LLM Agents" (center)

- **Visual Representation**: A blue robot icon with a speech bubble.

- **Function**: Acts as the central component of the diagram, exchanging inputs and outputs with the Environment and writing to and reading from Memory.

### 3. Memory

- **Label**: "Memory" (top-right)

- **Visual Representation**: Three stacked horizontal rectangles (purple).

- **Function**: Stores data written by LLM Agents and provides read access for subsequent operations.
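
The Memory role described above can be sketched as a small store with one write operation and one read operation. This is only an illustration: the diagram does not specify a storage layout or retrieval policy, so the `Memory` class, its `records` field, and the "most recent items" read strategy are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    """Hypothetical append-only store; the diagram does not define its layout."""

    records: list[str] = field(default_factory=list)

    def write(self, item: str) -> None:
        # "Write" arrow: the agent stores one record.
        self.records.append(item)

    def read(self, limit: int = 3) -> list[str]:
        # "Read" arrow: return up to `limit` most recent records, oldest first.
        # Recency-based retrieval is an assumption, not something the diagram shows.
        if limit <= 0:
            return []
        return self.records[-limit:]


memory = Memory()
for note in ["a", "b", "c", "d"]:
    memory.write(note)

recent = memory.read(limit=2)  # the two most recently written records
```

A real system might replace the recency rule with keyword or embedding-based retrieval; the two-method interface is the only part that mirrors the diagram.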

---

## Interaction Flow

1. **Environment ↔ LLM Agents**:

   - **Bidirectional Arrow**: Labeled "Interaction".

   - **Direction**:

     - Environment → LLM Agents: Input data or commands.

     - LLM Agents → Environment: Output responses or actions.

2. **LLM Agents → Memory**:

   - **Unidirectional Arrows**:

     - **Write**: LLM Agents store data in Memory.

     - **Read**: LLM Agents retrieve data from Memory. The agents initiate the operation, and the retrieved data flows back to them.
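
The two flows above can be combined into a single loop: the agent receives an input from the Environment, reads from Memory, sends an action back, and writes the new input to Memory. The sketch below is a minimal illustration under stated assumptions. `EchoEnvironment`, `run_agent`, and `stub_policy` are hypothetical names, a plain list stands in for Memory, and the policy is a placeholder for an actual LLM call; none of these details come from the diagram.

```python
from typing import Callable, Optional


class EchoEnvironment:
    """Hypothetical environment: emits queued inputs and records agent actions."""

    def __init__(self, observations: list[str]) -> None:
        self._pending = list(observations)
        self.received: list[str] = []

    def observe(self) -> Optional[str]:
        # Environment -> LLM Agents: next input, or None when none remain.
        return self._pending.pop(0) if self._pending else None

    def act(self, action: str) -> None:
        # LLM Agents -> Environment: the agent's output response or action.
        self.received.append(action)


def run_agent(
    env: EchoEnvironment,
    policy: Callable[[str, list[str]], str],
    memory: list[str],
) -> None:
    """Run the interaction loop until the environment has no more inputs."""
    while (observation := env.observe()) is not None:
        context = list(memory)                 # Read: retrieve stored data
        action = policy(observation, context)  # decide using input and context
        env.act(action)                        # Interaction: respond
        memory.append(observation)             # Write: store the new input


def stub_policy(observation: str, context: list[str]) -> str:
    # Placeholder for an LLM call; it only reports how much context was read.
    return f"{observation} (seen {len(context)} earlier)"


env = EchoEnvironment(["hello", "status"])
memory: list[str] = []
run_agent(env, stub_policy, memory)
```

The order of the read and write steps within one iteration is a design choice made for this sketch; the diagram shows that both operations exist but not when they occur.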

---

## Key Observations

- **No Data Trends or Numerical Values**: The diagram is conceptual, focusing on system architecture rather than quantitative analysis.

- **Symbolic Representation**:

  - The speech bubble on LLM Agents implies communication capabilities.

  - Stacked rectangles for Memory suggest layered or hierarchical storage.

- **No Legends or Axes**: The diagram lacks numerical scales, categories, or color-coded legends.

---

## Transcribed Text

- **Labels**:

  - Environment

  - LLM Agents

  - Memory

- **Arrow Labels**:

  - Interaction (bidirectional)

  - Write (LLM Agents → Memory)

  - Read (LLM Agents → Memory)

---

## Conclusion

This diagram outlines a high-level architecture in which LLM Agents sit between an external Environment and a Memory component. The agents exchange inputs and outputs with the Environment through a bidirectional "Interaction" link, and they access Memory through two separate agent-initiated operations, "Write" for storage and "Read" for retrieval.