## Diagram: TTA Prompt Template

### Overview

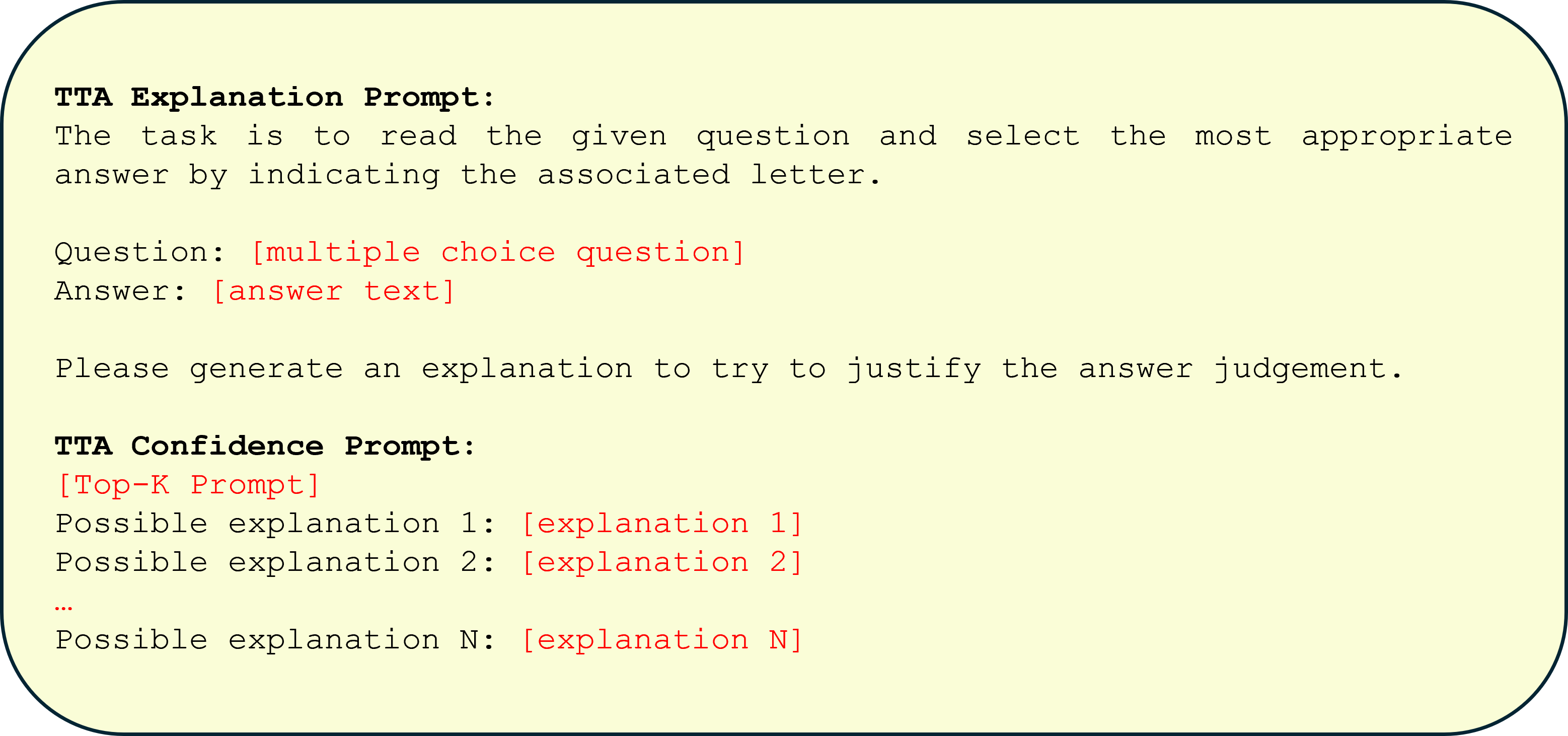

The image displays a structured text template for a two-stage prompting system, likely for an AI or machine learning evaluation task called "TTA." The template is presented within a rounded rectangular container with a light yellow background and a dark border. It consists of two distinct prompt sections: an "Explanation Prompt" and a "Confidence Prompt," each with specific placeholders for variable content.

### Components

The diagram is a textual layout, not a chart with axes. Its components are:

* **Container:** A rounded rectangle with a pale yellow fill (`#FFFACD` approximate) and a dark blue/black border.

* **Text Blocks:** Left-aligned text in a monospaced font.

* **Color Coding:** Certain placeholder text is rendered in red, while labels and static instructions are in black.

* **Spatial Layout:** The content is organized vertically. The "TTA Explanation Prompt" section occupies the top two-thirds, and the "TTA Confidence Prompt" section occupies the bottom third.

### Content Details

The image contains the following exact textual content, with red text indicated:

**TTA Explanation Prompt:**

The task is to read the given question and select the most appropriate answer by indicating the associated letter.

Question: `[multiple choice question]` *(in red)*

Answer: `[answer text]` *(in red)*

Please generate an explanation to try to justify the answer judgement.

**TTA Confidence Prompt:**

`[Top-K Prompt]` *(in red)*

Possible explanation 1: `[explanation 1]` *(in red)*

Possible explanation 2: `[explanation 2]` *(in red)*

`...`

Possible explanation N: `[explanation N]` *(in red)*
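As a minimal sketch of how the red placeholders might be filled programmatically, the Python below builds both prompts with plain string formatting. The function names `build_explanation_prompt` and `build_confidence_prompt` are hypothetical, and the line breaks between fields are an assumption, since the image fixes only their order. The content of `[Top-K Prompt]` is not shown in the image, so it is passed in as an opaque string.

```python
from typing import Sequence

# Static wording copied from the "TTA Explanation Prompt" section of the image.
# The newline placement between fields is an assumption.
EXPLANATION_TEMPLATE = (
    "The task is to read the given question and select the most appropriate "
    "answer by indicating the associated letter.\n"
    "Question: {question}\n"
    "Answer: {answer}\n"
    "Please generate an explanation to try to justify the answer judgement."
)


def build_explanation_prompt(question: str, answer: str) -> str:
    """Fill the [multiple choice question] and [answer text] placeholders."""
    return EXPLANATION_TEMPLATE.format(question=question, answer=answer)


def build_confidence_prompt(top_k_prompt: str, explanations: Sequence[str]) -> str:
    """Append numbered 'Possible explanation i' lines after the Top-K prompt.

    top_k_prompt is treated as opaque text because the image does not show it.
    """
    lines = [top_k_prompt]
    for i, explanation in enumerate(explanations, start=1):
        lines.append(f"Possible explanation {i}: {explanation}")
    return "\n".join(lines)
```

The number of explanation lines follows the length of `explanations`, which mirrors the variable `N` implied by the ellipsis in the template.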

### Key Observations

1. **Two-Stage Process:** The template implies a sequential workflow: first, generating an explanation for a chosen answer, and second, evaluating confidence using multiple potential explanations.

2. **Placeholder System:** Red text consistently marks dynamic fields where specific data (questions, answers, explanations) would be inserted. The ellipsis (`...`) indicates a variable number of explanation slots.

3. **Task Definition:** The first prompt frames a multiple-choice question-answering task, but the answer is supplied as an input (`[answer text]`). The model is asked only to generate an explanation that justifies that answer, not to select a letter itself.

4. **Confidence Mechanism:** The second prompt, labeled "Confidence Prompt," places a `[Top-K Prompt]` ahead of `N` possible explanations. This suggests a method for assessing confidence in light of those explanations, which could be used for ranking or uncertainty estimation.
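The sequential workflow noted above can be sketched as a small driver function. This is an illustration under stated assumptions, not the method behind the image: `run_two_stage` and the `generate` callable (any function mapping a prompt string to model text) are hypothetical, and producing one explanation per candidate answer is an assumed way of obtaining the `N` explanations, which the image does not specify.

```python
from typing import Callable, Sequence


def run_two_stage(
    question: str,
    candidate_answers: Sequence[str],
    top_k_prompt: str,
    generate: Callable[[str], str],
) -> str:
    """Run the explanation stage, then the confidence stage.

    Assumption: one explanation is generated per candidate answer.
    `generate` is a hypothetical stand-in for a model call.
    """
    # Stage 1: ask for an explanation that justifies each candidate answer.
    explanations = []
    for answer in candidate_answers:
        stage1_prompt = (
            "The task is to read the given question and select the most "
            "appropriate answer by indicating the associated letter.\n"
            f"Question: {question}\n"
            f"Answer: {answer}\n"
            "Please generate an explanation to try to justify the answer judgement."
        )
        explanations.append(generate(stage1_prompt))

    # Stage 2: append the numbered explanations to the (opaque) Top-K prompt.
    stage2_lines = [top_k_prompt] + [
        f"Possible explanation {i}: {text}"
        for i, text in enumerate(explanations, start=1)
    ]
    return generate("\n".join(stage2_lines))
```

Passing the model as a callable keeps the sketch independent of any particular client library and makes it easy to substitute a stub when testing the prompt assembly.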

### Interpretation

This image is not a data visualization but a **specification for a structured AI interaction protocol**. It outlines a method to elicit a rationale for a given answer and then a confidence assessment from a model.

* **Purpose:** The template appears designed to improve the interpretability and reliability of AI responses. The explanation prompt asks the model to justify a given answer, which encourages it to "show its work." The confidence prompt, which presents multiple explanations, suggests a technique for gauging certainty, perhaps by checking whether plausible explanations also exist for alternative answers.

* **Relationship Between Elements:** The "Explanation Prompt" takes a question and an answer as input and produces a justification as output. The "Confidence Prompt" then lists `N` such explanations after the `[Top-K Prompt]`, so the explanations act as input to a secondary evaluation stage. The content of `[Top-K Prompt]` is not shown in the image; given its name and position, it is presumably a separately defined prompt to which the explanations are appended.

* **Potential Application:** This structure is common in research on **Chain-of-Thought (CoT) reasoning**, **self-consistency checks**, and **uncertainty quantification** in large language models. It could be used for benchmarking model performance on tasks requiring justification, or in production systems where understanding the model's confidence is critical for decision-making.

* **Notable Design:** The clear separation of the "explanation" and "confidence" stages is a key architectural insight. It modularizes the process of reasoning and self-evaluation, making it easier to analyze and improve each component independently.