# Technical Document Extraction: Training and Checkpointing Timeline

## 1. Document Overview

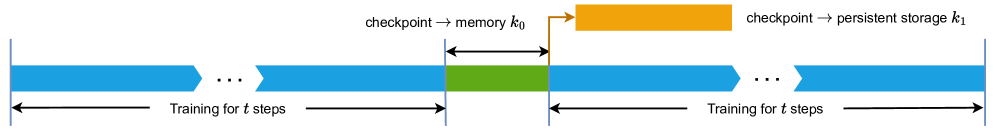

This image is a technical diagram illustrating a multi-stage machine learning training process with a two-tier checkpointing strategy. It uses a horizontal timeline to represent the flow of operations, distinguishing between active training, memory-based checkpointing, and persistent storage checkpointing.

## 2. Component Isolation

### Region A: Main Timeline (Horizontal Axis)

The timeline is composed of several colored blocks and markers:

* **Blue Blocks (Training):** Represent the active training phase. These blocks feature a chevron-style indentation on the right and a corresponding point on the left, suggesting a continuous, repeating process.

* **Green Block (Memory Checkpoint):** Represents the checkpoint to memory. It sits on the main timeline immediately after a training interval.

* **Vertical Blue Lines:** Act as temporal delimiters between major phases.

### Region B: Checkpointing Operations (Upper Layer)

* **Orange Block:** Positioned above the main timeline, representing the checkpoint to persistent storage, which runs alongside the timeline rather than on it.

* **Orange L-shaped Arrow:** Originates at the end of the green block and points to the start of the orange block, indicating a causal or sequential relationship.

## 3. Textual Transcription and Labels

| Label | Transcription | Context/Meaning |
| :--- | :--- | :--- |
| **Lower Label 1** | `Training for t steps` | Defines the duration of the first blue training segment. |
| **Lower Label 2** | `Training for t steps` | Defines the duration of the second blue training segment. |
| **Upper Label 1** | `checkpoint → memory k₀` | Describes the green block; saving state to volatile memory. |
| **Upper Label 2** | `checkpoint → persistent storage k₁` | Describes the orange block; saving state to non-volatile storage. |
| **Symbol** | `...` | Ellipses indicate that the training blocks represent a longer, ongoing sequence of steps. |

## 4. Process Flow and Logic Analysis

### Step-by-Step Execution:

1. **Training Phase ($t$ steps):** The system undergoes training for a duration defined as $t$. This is represented by the first set of blue blocks.

2. **Memory Checkpoint ($k_0$):** Immediately following the training interval, the system performs a checkpoint to memory. This is represented by the **Green Block**. The duration of this task is $k_0$.

3. **Persistent Storage Checkpoint ($k_1$):**

   * As soon as the memory checkpoint ($k_0$) is complete, an orange arrow indicates the start of a transfer to persistent storage.

   * This is represented by the **Orange Block** with duration $k_1$.

   * **Crucial Observation:** The orange block sits *above* the next training block. This indicates that while the state is being written to persistent storage ($k_1$), the next round of training begins immediately in parallel.

4. **Resumed Training ($t$ steps):** The second blue block begins exactly when the green block ends, showing that training is not blocked by the persistent storage write ($k_1$), only by the memory write ($k_0$).
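The four steps above can be sketched in Python. This is a minimal illustration, not the system the diagram documents: the function names (`train_step`, `checkpoint_to_memory`, `checkpoint_to_storage`, `run`), the dictionary used as model state, the list standing in for persistent storage, and the choice of a background thread are all assumptions made for the example. The sketch also assumes at most one persistent write is in flight at a time, which the diagram does not state.

```python
import copy
import threading
import time


def train_step(state):
    """Hypothetical stand-in for one training step (blue block)."""
    state["step"] += 1


def checkpoint_to_memory(state):
    """Blocking in-memory snapshot (green block, duration k0)."""
    return copy.deepcopy(state)


def checkpoint_to_storage(snapshot, store, write_delay=0.05):
    """Background write (orange block, duration k1).

    `store` is a plain list standing in for persistent storage, and
    `write_delay` simulates a slow write; both are assumptions.
    """
    time.sleep(write_delay)
    store.append(snapshot)


def run(num_rounds=2, t=3):
    state = {"step": 0}
    store = []      # hypothetical persistent storage
    events = []     # record of memory checkpoints, for inspection
    writer = None

    for _ in range(num_rounds):
        # Step 1 / Step 4: train for t steps.
        for _ in range(t):
            train_step(state)

        # Assumption: wait for any earlier persistent write before
        # taking the next snapshot, so only one write is in flight.
        if writer is not None:
            writer.join()

        # Step 2: training is paused while the snapshot is taken.
        snapshot = checkpoint_to_memory(state)
        events.append(("memory", snapshot["step"]))

        # Step 3: hand the snapshot to a background thread; the loop
        # returns to training without waiting for the write.
        writer = threading.Thread(
            target=checkpoint_to_storage, args=(snapshot, store)
        )
        writer.start()

    if writer is not None:
        writer.join()  # flush the final write before returning
    return state, store, events


if __name__ == "__main__":
    final_state, store, events = run()
    print(final_state, [s["step"] for s in store], events)
```

Because the snapshot is a deep copy, the background write sees the state as it was at the end of the memory checkpoint, even though training keeps mutating the live state in the meantime.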

## 5. Technical Summary of Data Points

* **Primary Task:** Training (Blue).

* **Synchronous Overhead:** Memory Checkpointing (Green, $k_0$). Training stops during this period.

* **Asynchronous Task:** Persistent Storage Checkpointing (Orange, $k_1$). Training continues while this task runs in the background.

* **Temporal Variables:**

  * $t$: Duration of training intervals.

  * $k_0$: Time taken to checkpoint to memory (blocking).

  * $k_1$: Time taken to checkpoint to persistent storage (non-blocking/asynchronous).
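The relationship between these variables can be made concrete with a small calculation. The sketch below assumes that $t$, $k_0$, and $k_1$ are all expressed in the same time unit (the diagram labels $t$ in steps), and that a persistent write counts as fully overlapped when it finishes before the next memory checkpoint starts, that is, when $k_1 \le t$. The function name `cycle_timing` and the example numbers are hypothetical and are not taken from the diagram.

```python
def cycle_timing(t, k0, k1):
    """Summarize one train-then-checkpoint cycle.

    Assumes t, k0 and k1 share one time unit. Only the memory
    checkpoint (k0) blocks training, so one cycle lasts t + k0.
    """
    cycle = t + k0                   # wall time of one cycle
    blocking_fraction = k0 / cycle   # share of the cycle spent not training
    # Assumption: the storage write starts when the memory checkpoint
    # ends, so it is fully overlapped if it fits in the next t.
    fully_overlapped = k1 <= t
    return cycle, blocking_fraction, fully_overlapped


if __name__ == "__main__":
    # Illustrative numbers only.
    print(cycle_timing(t=100, k0=5, k1=60))
```

Under these assumptions, $k_1$ affects the cycle time only if the write outlasts the following training interval; otherwise the blocking cost per cycle is $k_0$ alone.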