# Technical Diagram Analysis

## Overview

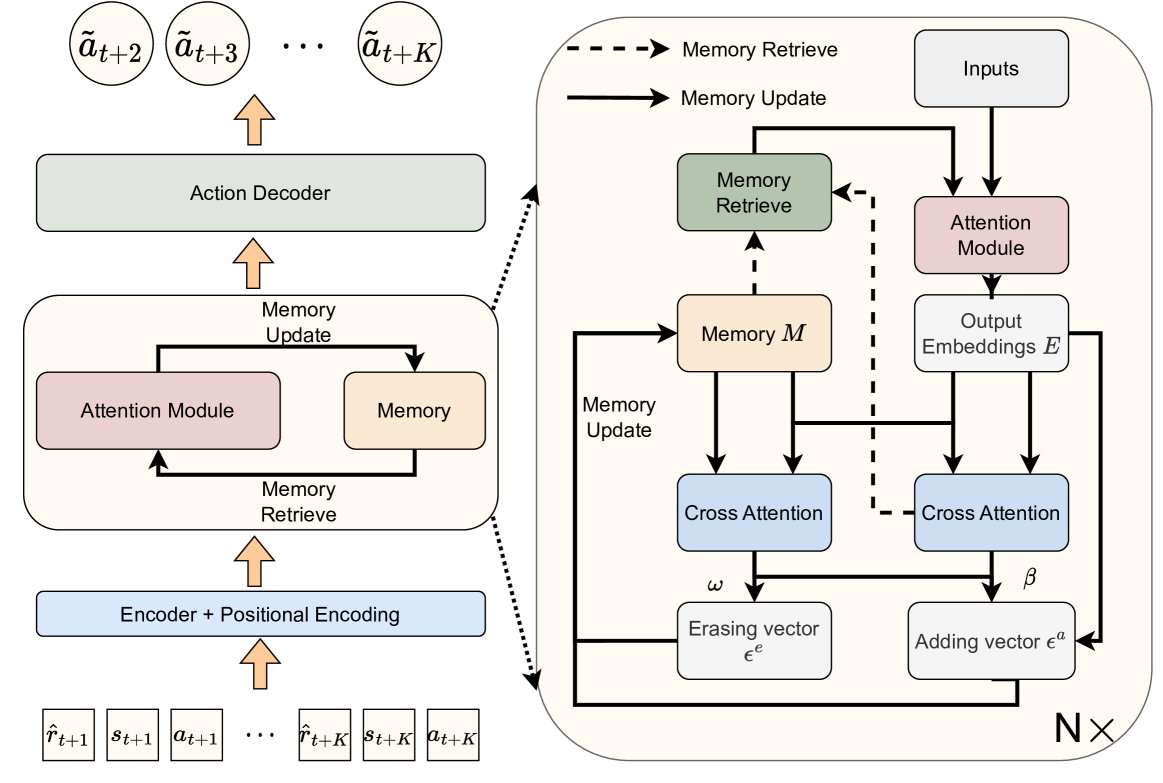

The diagram illustrates a neural architecture with two primary components:

1. **Left Section**: Sequential processing flow from encoder to action decoder

2. **Right Section**: Memory-augmented attention mechanism with cross-attention

## Left Section: Processing Flow

### Components (Bottom to Top)

1. **Encoder + Positional Encoding**

   - Inputs: `r̂_{t+1}`, `s_{t+1}`, `a_{t+1}`, ..., `r̂_{t+K}`, `s_{t+K}`, `a_{t+K}`
   - Output: Positionally encoded sequence

2. **Attention Module**

   - Functions:
     - Memory Retrieve
     - Memory Update
   - Connections:
     - Bidirectional arrows between Memory and Attention Module

3. **Memory Update**

   - Receives updates from Attention Module
   - Maintains memory state

4. **Action Decoder**

   - Receives memory updates
   - Generates action sequence: `ā_{t+2}`, `ā_{t+3}`, ..., `ā_{t+K}`
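The input stage listed above can be sketched in a few lines. This is a minimal illustration, not the diagram's actual implementation: it assumes the per-step `r̂`, `s`, `a` values are interleaved into a single token sequence and that the positional encoding is the standard sinusoidal one. The diagram specifies neither detail, and the function names `interleave_tokens`, `sinusoidal_encoding`, and `add_positions` are hypothetical.

```python
import math


def interleave_tokens(r_hats, states, actions):
    """Flatten per-step (r_hat, s, a) triples into one tagged token sequence.

    Assumption: the encoder consumes tokens in the order r_hat, s, a per step.
    """
    tokens = []
    for r, s, a in zip(r_hats, states, actions):
        tokens.extend([("r", r), ("s", s), ("a", a)])
    return tokens


def sinusoidal_encoding(position, dim):
    """Sinusoidal encoding for one position (sin on even, cos on odd indices)."""
    enc = []
    for i in range(dim):
        angle = position / (10000 ** (2 * (i // 2) / dim))
        enc.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return enc


def add_positions(embeddings):
    """Add the positional encoding to each embedding vector (list of floats)."""
    dim = len(embeddings[0])
    return [
        [x + p for x, p in zip(vec, sinusoidal_encoding(pos, dim))]
        for pos, vec in enumerate(embeddings)
    ]
```

With `K` steps, `interleave_tokens` yields `3 * K` tokens, which would then be embedded and passed through `add_positions` before reaching the Attention Module.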

## Right Section: Memory-Augmented Attention

### Key Components

1. **Memory System**

   - **Memory Retrieve** (Green)
     - Accesses stored information
   - **Memory Update** (Blue)
     - Modifies memory state
   - **Memory M** (Central)
     - Core memory repository

2. **Attention Mechanisms**

   - **Attention Module** (Pink)
     - Processes input embeddings
   - **Cross Attention** (Blue)
     - Two instances:
       - Left: Processes memory with erasing vector εᵉ
       - Right: Processes memory with adding vector εᵃ

3. **Input/Output**

   - **Inputs**: Raw data feed
   - **Output Embeddings E**: Processed output
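As a rough illustration of the Memory Retrieve path, the sketch below reads from a slot-based memory using scaled dot-product cross-attention. The diagram does not state the retrieval formula, so the scoring rule, the slot layout of `M`, and the helper names `softmax`, `dot`, and `retrieve` are all assumptions made for illustration.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def retrieve(query, memory):
    """Read from memory with one query vector.

    Assumption: memory is a list of slot vectors, and the read is the
    attention-weighted sum of slots (scaled dot-product scoring).
    """
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, slot) / scale for slot in memory])
    dim = len(memory[0])
    read = [sum(w * slot[j] for w, slot in zip(weights, memory)) for j in range(dim)]
    return read, weights
```

A query that scores all slots equally returns the mean of the slots; a query aligned with one slot shifts the weights, and therefore the read vector, toward that slot.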

### Flow Dynamics

1. **Memory Operations**

   - Memory Retrieve → Cross Attention (εᵉ)
   - Memory Update → Cross Attention (εᵃ)

2. **Attention Processing**

   - Inputs → Attention Module → Output Embeddings E
   - Cross Attention outputs modulate memory state
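One plausible reading of how the erasing vector εᵉ and adding vector εᵃ modulate the memory state is an erase-then-add write, as used in Neural Turing Machine-style memories. The diagram only names the two vectors, so the update rule below, the per-slot write weights, and the function name `update_memory` are assumptions rather than the documented mechanism.

```python
def update_memory(memory, weights, erase, add):
    """Erase-then-add write over a slot-based memory.

    For slot i and feature j (assumed rule, not taken from the diagram):
        M[i][j] <- M[i][j] * (1 - w[i] * erase[j]) + w[i] * add[j]
    where erase[j] is expected to lie in [0, 1].
    """
    return [
        [m * (1.0 - w * e) + w * a for m, e, a in zip(slot, erase, add)]
        for slot, w in zip(memory, weights)
    ]


# Usage: slot 0 is fully addressed, slot 1 is not addressed at all.
memory = [[1.0, 2.0], [3.0, 4.0]]
updated = update_memory(memory, weights=[1.0, 0.0], erase=[1.0, 0.0], add=[0.5, 0.5])
```

Under this rule a slot with zero write weight is left untouched, which is what lets the memory act as persistent state between processing steps.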

## Color-Coded Components

- **Pink**: Attention Module

- **Green**: Memory Retrieve

- **Blue**: Memory Update/Cross Attention

- **Gray**: Inputs/Output Embeddings

## Spatial Grounding

- Legend: Not explicitly present in diagram

- Component Colors:
  - Attention Module: Pink (#FFC0CB)
  - Memory Retrieve: Green (#90EE90)
  - Memory Update/Cross Attention: Blue (#ADD8E6)
  - Inputs/Output: Gray (#D3D3D3)

## Trend Verification

- No numerical trends present (diagram, not chart)

- Data flow directionality confirmed through arrow placement

## Component Isolation

1. **Header**: Diagram title not present

2. **Main Diagram**:

   - Left: Sequential processing flow
   - Right: Memory-attention interaction

3. **Footer**: No explicit footer elements

## Critical Observations

1. Bidirectional memory-attention interaction

2. Dual cross-attention mechanisms with different update vectors

3. Positional encoding integrated at input stage

4. Memory system acts as persistent state between processing steps

## Missing Elements

- No explicit legend present

- No numerical data points or quantitative metrics

- No temporal axis; sequence length appears only symbolically, as the index `K` in the input and output subscripts

This architecture combines sequential processing with memory-augmented attention. A persistent memory, read and written through cross-attention with erasing and adding vectors, carries information across processing steps and so supports long-term dependency modeling.