TECHNICAL ASSET FINGERPRINT

938105beee61de0ddd653cb7

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Diagram: Cognitive Brain vs. Chain-of-Thought

### Overview

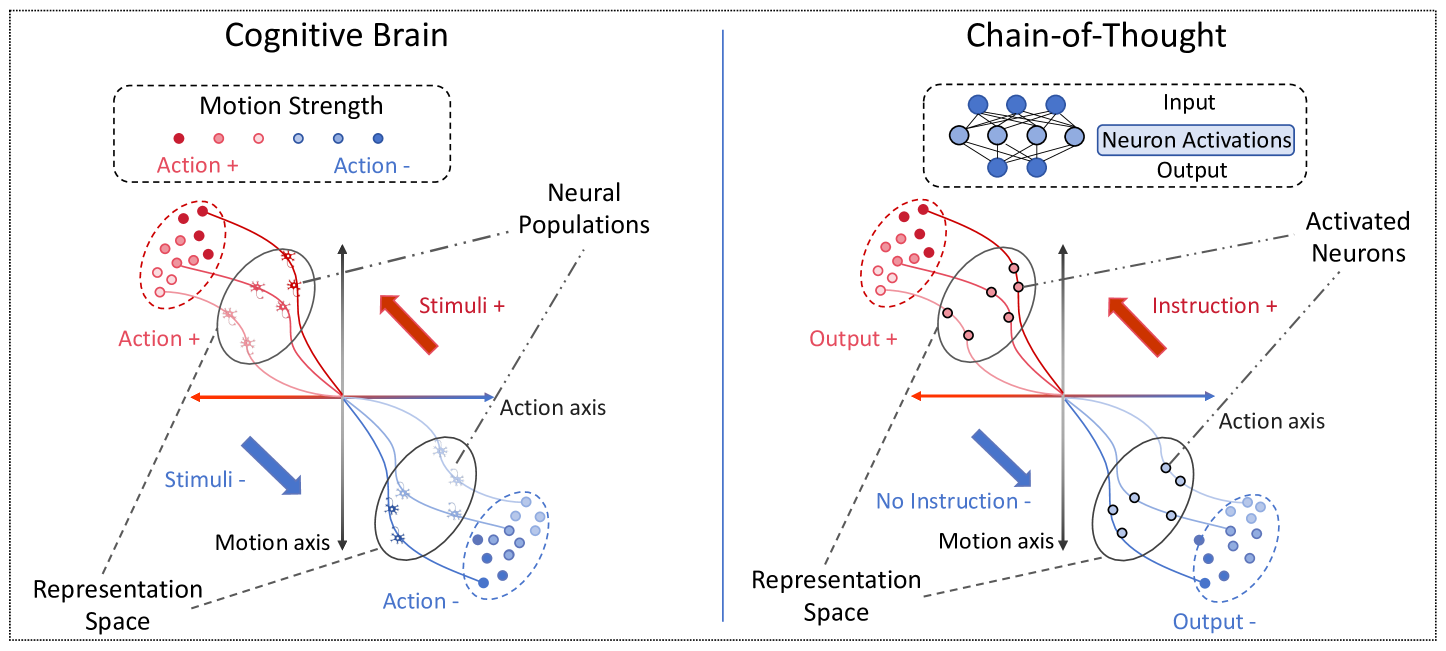

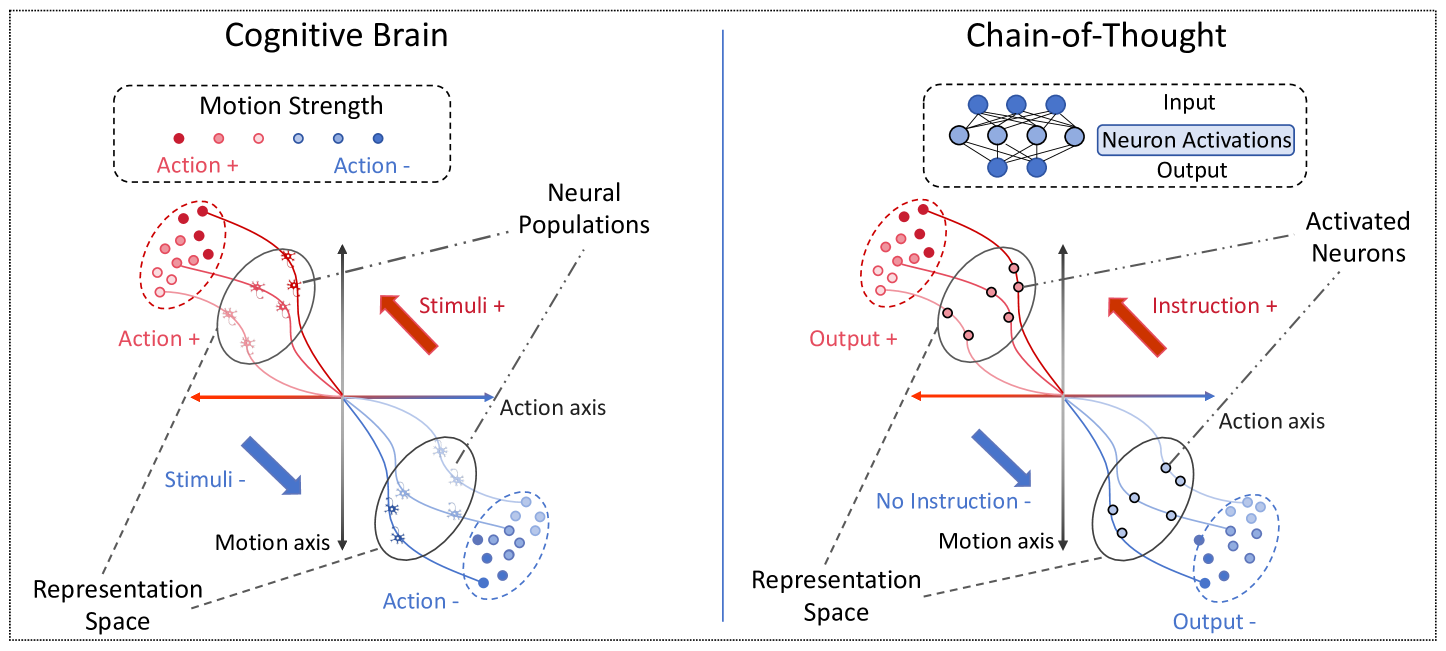

The image presents two side-by-side diagrams illustrating conceptual models of "Cognitive Brain" and "Chain-of-Thought." Both place neural populations in a representation space drawn in 3D, with axes labeled "Action axis" and "Motion axis," and show how those populations activate in response to stimuli or instructions. Red and blue color-coding distinguishes positive from negative actions/outputs.

### Components/Axes

**Left Diagram (Cognitive Brain):**

* **Title:** Cognitive Brain

* **Axes:**

* Action axis (horizontal)

* Motion axis (vertical)

* **Representation Space:** The 3D space defined by the axes.

* **Neural Populations:** Clusters of dots, color-coded.

* **Motion Strength Legend:** Located at the top-left.

* Red dots: Action +

* Blue dots: Action -

* **Stimuli Arrows:**

* Red arrow pointing up and to the right: Stimuli +

* Blue arrow pointing down and to the left: Stimuli -

* **Labels:**

* Action + (near the red neural population)

* Action - (near the blue neural population)

**Right Diagram (Chain-of-Thought):**

* **Title:** Chain-of-Thought

* **Axes:**

* Action axis (horizontal)

* Motion axis (vertical)

* **Representation Space:** The 3D space defined by the axes.

* **Neural Populations:** Clusters of dots, color-coded.

* **Neuron Activations:** A small neural network diagram at the top, labeled with "Input," "Neuron Activations" (in a blue box), and "Output."

* **Instruction Arrow:**

* Red arrow pointing up and to the right: Instruction +

* **Labels:**

* Output + (near the red neural population)

* Output - (near the blue neural population)

* No Instruction - (near the blue arrow)

* **Activated Neurons:** Label for the neural populations.

### Detailed Analysis

**Left Diagram (Cognitive Brain):**

* **Action + Neural Population:** Located in the top-left quadrant. The dots are red, indicating positive action. Their shade ranges from dark red to light red, encoding motion strength.

* **Action - Neural Population:** Located in the bottom-right quadrant. The dots are blue, indicating negative action. Their shade ranges from dark blue to light blue, encoding motion strength.

* **Stimuli + Arrow:** A red arrow pointing towards the top-right, indicating positive stimuli.

* **Stimuli - Arrow:** A blue arrow pointing towards the bottom-left, indicating negative stimuli.

**Right Diagram (Chain-of-Thought):**

* **Output + Neural Population:** Located in the top-left quadrant. The dots are red, indicating positive output.

* **Output - Neural Population:** Located in the bottom-right quadrant. The dots are blue, indicating negative output.

* **Instruction + Arrow:** A red arrow pointing towards the top-right, indicating positive instruction.

* **Neuron Activations Diagram:** Shows a simple neural network with interconnected nodes.

### Key Observations

* Both diagrams use a similar 3D representation space with "Action" and "Motion" axes.

* The Cognitive Brain diagram focuses on stimuli and actions, while the Chain-of-Thought diagram focuses on instructions and outputs.

* Color-coding (red and blue) is used consistently to represent positive and negative values.

* The Chain-of-Thought diagram includes a neural network representation, suggesting a more complex processing model.

### Interpretation

The diagrams illustrate two processing models placed side by side. The "Cognitive Brain" model emphasizes external stimuli driving actions, while the "Chain-of-Thought" model emphasizes internal processing of instructions to generate outputs. Its neural network component suggests a more multi-layered processing approach than the direct stimulus-response model of the "Cognitive Brain." In both, the placement of neural populations relative to the axes and the stimuli/instruction arrows suggests that neural activity is being mapped into the representation space. The color gradient labeled as motion strength could represent the intensity or confidence of the action/output.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Cognitive Brain vs. Chain-of-Thought

### Overview

The image presents a comparative diagram illustrating the difference between a "Cognitive Brain" and a "Chain-of-Thought" process. Both sides depict neural populations and representation spaces, but differ in how stimuli and instructions influence activity. The left side represents a more direct stimulus-response mechanism, while the right side shows a more complex, instruction-guided process.

### Components/Axes

The diagram is divided into two main sections: "Cognitive Brain" (left) and "Chain-of-Thought" (right). Each section contains:

* **Representation Space:** A circular area with scattered points representing neural activity.

* **Neural Populations:** Clusters of points within the Representation Space, differentiated by color and shape.

* **Axes:** Two axes are present in each section: "Motion axis" and "Action axis".

* **Stimuli/Instruction:** Red arrows indicating the influence of stimuli (left) or instructions (right).

* **Input/Output:** Labels indicating the flow of information.

* **Top-Left Inset (Chain-of-Thought side only):** A small diagram showing a network of interconnected nodes labeled "Neuron Activations" with "Input" and "Output" labels.

### Detailed Analysis

**Cognitive Brain (Left Side):**

* **Motion Strength:** A horizontal row of dots at the top, labeled "Motion Strength", ranging from "Action +" to "Action -".

* **Stimuli +:** A red arrow pointing downwards from the top-center, labeled "Stimuli +". This arrow influences the neural populations in the Representation Space.

* **Stimuli -:** A red arrow pointing downwards from the top-left, labeled "Stimuli -". This arrow also influences the neural populations.

* **Action axis:** A vertical line bisecting the Representation Space.

* **Motion axis:** A horizontal line bisecting the Representation Space.

* **Neural Populations:**

* Top-right: A cluster of red asterisk-shaped points labeled "Action +".

* Bottom-left: A cluster of blue circle-shaped points labeled "Action -".

* Other scattered points of various colors and shapes.

* The arrows "Stimuli +" and "Stimuli -" appear to activate the "Action +" and "Action -" populations respectively.

**Chain-of-Thought (Right Side):**

* **Instruction +:** A red arrow pointing downwards from the top-center, labeled "Instruction +".

* **No Instruction -:** A label indicating the absence of a negative instruction.

* **Action axis:** A vertical line bisecting the Representation Space.

* **Motion axis:** A horizontal line bisecting the Representation Space.

* **Output +:** A label at the bottom-right.

* **Output -:** A label at the bottom-left.

* **Neural Populations:**

* Top-right: A cluster of red circle-shaped points labeled "Output +".

* Bottom-left: A cluster of blue asterisk-shaped points labeled "Output -".

* Other scattered points of various colors and shapes.

* The "Instruction +" arrow influences the neural populations, but the effect appears more nuanced than the direct stimulus in the Cognitive Brain.

* **Top-Left Inset:** A diagram of interconnected nodes, representing a neural network. The nodes are connected by lines, and labeled "Neuron Activations".

### Key Observations

* The "Cognitive Brain" side shows a more direct and immediate response to stimuli.

* The "Chain-of-Thought" side demonstrates a more modulated response, influenced by instructions.

* The neural populations are represented differently in each section, suggesting different types of activity.

* The inset diagram on the right side highlights the complexity of the neural network involved in the Chain-of-Thought process.

* The axes are consistent across both sides, providing a common frame of reference.

### Interpretation

The diagram illustrates a conceptual difference between a basic cognitive response (Cognitive Brain) and a more sophisticated, instruction-driven thought process (Chain-of-Thought). The Cognitive Brain operates on a more direct stimulus-response basis, while the Chain-of-Thought involves a more complex internal representation and modulation of activity based on instructions. The inset diagram suggests that the Chain-of-Thought relies on a network of interconnected neurons, allowing for more flexible and nuanced processing.

The use of arrows to represent stimuli and instructions emphasizes the directional influence on neural activity. The different shapes and colors of the neural populations likely represent different types of neurons or activity patterns. The diagram suggests that the Chain-of-Thought process may be more computationally intensive and may require a more complex neural infrastructure than the Cognitive Brain. The labels "Output +" and "Output -" indicate that the Chain-of-Thought process can generate both positive and negative responses, while the Cognitive Brain's "Action +" and "Action -" labels frame the same polarity as immediate actions.

The diagram is a high-level conceptual illustration and does not provide specific quantitative data. However, it effectively conveys the qualitative differences between the two cognitive processes. It suggests that Chain-of-Thought is a more advanced form of cognition that builds upon the basic stimulus-response mechanisms of the Cognitive Brain.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Comparison of Cognitive Brain and Chain-of-Thought Processes

### Overview

The image is a comparative diagram split into two vertical panels. The left panel is titled "Cognitive Brain" and illustrates a biological or cognitive model of action selection. The right panel is titled "Chain-of-Thought" and illustrates an analogous process in an artificial neural network or AI system. Both panels depict a process within a defined "Representation Space" using a coordinate system and clustered data points.

### Components/Axes

**Shared Structural Elements (Both Panels):**

* **Representation Space:** A 2D coordinate system defined by two perpendicular axes.

* **Horizontal Axis:** Labeled "Action axis". A blue arrow points right, and a red arrow points left from the origin.

* **Vertical Axis:** Labeled "Motion axis". A black arrow points up, and a black arrow points down from the origin.

* **Legend (Top-Left of each panel):** A dashed box titled "Motion Strength". It contains a series of colored dots:

* **Red Dots (Left side):** Labeled "Action +". The dots vary in shade from dark red to light pink.

* **Blue Dots (Right side):** Labeled "Action -". The dots vary in shade from dark blue to light blue.

* **Data Clusters:** Two main clusters of colored dots (red and blue) are present in each panel, positioned in opposite quadrants of the Representation Space.

* **Directional Arrows:** Large, colored arrows indicate the driving force or input.

* A **red arrow** points towards the upper-left quadrant.

* A **blue arrow** points towards the lower-right quadrant.

**Panel-Specific Elements:**

**Left Panel: Cognitive Brain**

* **Title:** "Cognitive Brain"

* **Cluster Labels:**

* The red dot cluster in the upper-left is labeled "Action +".

* The blue dot cluster in the lower-right is labeled "Action -".

* **Driving Force Labels:**

* The red arrow is labeled "Stimuli +".

* The blue arrow is labeled "Stimuli -".

* **Additional Label:** "Neural Populations" points to the general area of the data clusters.

**Right Panel: Chain-of-Thought**

* **Title:** "Chain-of-Thought"

* **Additional Diagram (Top):** A small schematic of a neural network with three layers of nodes (blue circles) connected by lines. It is labeled:

* "Input" (top layer)

* "Neuron Activations" (middle layer, highlighted with a blue box)

* "Output" (bottom layer)

* **Cluster Labels:**

* The red dot cluster in the upper-left is labeled "Output +".

* The blue dot cluster in the lower-right is labeled "Output -".

* **Driving Force Labels:**

* The red arrow is labeled "Instruction +".

* The blue arrow is labeled "No Instruction -".

* **Additional Label:** "Activated Neurons" points to the general area of the data clusters.

### Detailed Analysis

The diagram establishes a direct visual analogy between two systems:

1. **Cognitive Brain Process:**

* **Input:** External "Stimuli" (positive/red and negative/blue).

* **Mechanism:** These stimuli influence "Neural Populations" within a "Representation Space".

* **Output:** The system settles into a state corresponding to an "Action +" (approach/positive) or "Action -" (avoid/negative) decision. The strength of the action is encoded by the shade of the dot (darker = stronger).

2. **Chain-of-Thought Process:**

* **Input:** An "Instruction" (positive/present) or "No Instruction" (negative/absent).

* **Mechanism:** This input modulates "Activated Neurons" within an analogous "Representation Space". The small neural network diagram abstractly represents the underlying computational mechanism.

* **Output:** The system produces an "Output +" or "Output -". The strength of the output is similarly encoded by dot shade.

**Spatial Grounding & Color Cross-Reference:**

* In both panels, the **red cluster** (Action+/Output+) sits in the **upper-left quadrant** of the Representation Space, the direction in which the red driving arrow points.

* The **blue cluster** (Action-/Output-) sits in the **lower-right quadrant**, the direction in which the blue driving arrow points.

* The color of the driving arrow (red/blue) matches the color of the cluster it influences, confirming the legend's mapping.
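
The quadrant-to-valence mapping described above can be written as a small lookup. This is only a sketch: the function name `valence_for_point`, the sign convention (x along the Action axis increasing to the right, y along the Motion axis increasing upward), and the choice to treat the other two quadrants as unlabeled are illustrative assumptions, not something the figure specifies.

```python
from typing import Optional


def valence_for_point(x: float, y: float) -> Optional[str]:
    """Map a point in the representation space to a valence label.

    Hypothetical convention: upper-left (x < 0, y > 0) is '+',
    lower-right (x > 0, y < 0) is '-', anything else is unlabeled.
    """
    if x < 0 and y > 0:
        return "+"
    if x > 0 and y < 0:
        return "-"
    return None


# Made-up example points, one per case.
labels = [valence_for_point(-1.0, 2.0),
          valence_for_point(3.0, -0.5),
          valence_for_point(1.0, 1.0)]
```

Points on an axis or in the remaining quadrants return `None`, since the description only assigns a meaning to the two quadrants that hold clusters.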

### Key Observations

* **Symmetrical Analogy:** The diagram is meticulously structured to show a one-to-one mapping between biological and artificial concepts: Stimuli ↔ Instruction, Neural Populations ↔ Activated Neurons, Action ↔ Output.

* **Directional Coding:** Positive valence (Action+, Stimuli+, Instruction+, Output+) is consistently associated with the **upper-left** direction in the representation space. Negative valence (Action-, Stimuli-, No Instruction-, Output-) is associated with the **lower-right**.

* **Strength Gradient:** The use of color saturation (dark to light) within each cluster provides a secondary dimension of information, representing the magnitude or confidence of the response.

* **Abstraction Level:** The "Chain-of-Thought" panel includes an extra layer of abstraction with the neural network schematic, explicitly linking the conceptual diagram to a common AI architecture.

### Interpretation

This diagram argues for a functional equivalence between the cognitive process of action selection in a brain and the reasoning process in a Chain-of-Thought AI model.

* **Core Thesis:** It suggests that both systems operate by mapping inputs (stimuli or instructions) onto a latent "representation space," where the direction of movement within that space corresponds to a decision or output. The "Chain-of-Thought" process in AI is framed not just as information processing, but as a form of *directed navigation* through a conceptual space, guided by the prompt or instruction.

* **Underlying Mechanism:** The "Representation Space" is the key shared construct. In neuroscience, this could be a population code in the motor cortex. In AI, it would correspond to the activation space of a network's hidden layers (for example, those of a transformer). The diagram implies that "thinking" or "reasoning" (the chain) is the trajectory through this space from an initial state to a final output state.

* **Notable Implication:** The label "No Instruction -" is particularly insightful. It posits that the *absence* of a guiding instruction is itself a powerful input that drives the system toward a default or negative output state, just as a negative stimulus drives avoidance behavior. This highlights the critical role of prompts in steering AI cognition.

* **Purpose:** The visual serves to demystify AI reasoning by grounding it in a familiar biological metaphor, while also elevating the discussion of AI cognition by giving it a structured, spatial, and dynamic interpretation akin to brain function.
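
To make the "direction of movement within a representation space" idea concrete, the sketch below estimates a direction from two groups of activation vectors and scores each vector by its projection onto that direction. The difference-of-means estimate, the helper names (`mean_vector`, `direction_between`, `project`), and the toy 2D vectors are all assumptions chosen for illustration. The figure does not state how its axes were derived.

```python
from typing import List, Sequence


def mean_vector(vectors: Sequence[Sequence[float]]) -> List[float]:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def direction_between(pos: Sequence[Sequence[float]],
                      neg: Sequence[Sequence[float]]) -> List[float]:
    """Unit vector pointing from the mean of `neg` to the mean of `pos`."""
    diff = [p - q for p, q in zip(mean_vector(pos), mean_vector(neg))]
    norm = sum(d * d for d in diff) ** 0.5
    if norm == 0.0:
        raise ValueError("group means coincide; direction is undefined")
    return [d / norm for d in diff]


def project(vector: Sequence[float], direction: Sequence[float]) -> float:
    """Scalar projection of `vector` onto a unit `direction`."""
    return sum(v * d for v, d in zip(vector, direction))


# Toy activations (made up): "instructed" points sit upper-left,
# "uninstructed" points sit lower-right, mirroring the two clusters.
instructed = [[-1.0, 1.0], [-2.0, 2.0]]
uninstructed = [[1.0, -1.0], [2.0, -2.0]]

axis = direction_between(instructed, uninstructed)
instructed_scores = [project(v, axis) for v in instructed]
uninstructed_scores = [project(v, axis) for v in uninstructed]
```

With these toy inputs the instructed vectors score positive and the uninstructed ones negative, which is the sign pattern the red and blue clusters are described as having.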

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Cognitive Brain and Chain-of-Thought Processing

### Overview

The image presents two interconnected diagrams illustrating neural processing mechanisms. The left diagram ("Cognitive Brain") shows how neural populations are modulated by stimuli and actions, while the right diagram ("Chain-of-Thought") demonstrates how input processing leads to output through activated neurons. Both diagrams use orthogonal axes (Representation Space vs. Action axis) and color-coded neural populations to visualize decision-making dynamics.

### Components/Axes

**Cognitive Brain Diagram:**

- **Axes:**

- Vertical: Representation Space (top to bottom)

- Horizontal: Action axis (left to right)

- **Legend:**

- Motion Strength:

- Red dots = Action +

- Blue dots = Action -

- **Key Elements:**

- Neural Populations (circles with dots)

- Arrows indicating:

- Stimuli + (red arrow)

- Stimuli - (blue arrow)

- Instruction + (red arrow)

- Instruction - (blue arrow)

- Dotted lines marking quadrants

**Chain-of-Thought Diagram:**

- **Axes:**

- Vertical: Representation Space (top to bottom)

- Horizontal: Action axis (left to right)

- **Legend:**

- Neuron Activations: Blue dots

- **Key Elements:**

- Neural Populations (circles with dots)

- Arrows indicating:

- Output + (red arrow)

- Output - (blue arrow)

- Instruction + (red arrow)

- No Instruction - (blue arrow)

- Dotted lines marking quadrants

### Detailed Analysis

**Cognitive Brain Diagram:**

1. **Neural Populations:**

- Top-left: High Motion Strength (red dots) with Stimuli + influence

- Bottom-left: Low Motion Strength (blue dots) with Stimuli - influence

- Top-right: Action + modulation

- Bottom-right: Action - modulation

2. **Directional Influences:**

- Red arrows (Stimuli +/Instruction +) point toward Action +

- Blue arrows (Stimuli -/Instruction -) point toward Action -

**Chain-of-Thought Diagram:**

1. **Input-Output Relationship:**

- Input (blue dots) → Neuron Activations → Output

- Output populations split into:

- Output + (red dots) with Instruction +

- Output - (blue dots) with No Instruction -

2. **Activation Patterns:**

- Activated Neurons cluster in top-right quadrant

- No Instruction - pathway shows lateral inhibition

### Key Observations

1. **Motion Strength Correlation:**

- Red dots (Action +) consistently appear in quadrants with positive directional influences

- Blue dots (Action -) cluster in quadrants with negative influences

2. **Instruction Dependency:**

- Output + requires explicit Instruction + input

- Output - occurs in absence of instruction (default state)

3. **Neural Population Dynamics:**

- Cognitive Brain shows bidirectional modulation (stimuli ↔ actions)

- Chain-of-Thought demonstrates unidirectional processing (input → output)

### Interpretation

The diagrams collectively model a hierarchical neural processing system:

1. **Cognitive Brain** demonstrates how sensory stimuli and motor actions interact through neural populations, with motion strength determining response directionality. The orthogonal axes suggest independent processing streams converging in decision space.

2. **Chain-of-Thought** reveals a feedforward architecture where input processing through activated neurons produces output based on explicit instructions. The absence of instruction creates a baseline output state, implying default cognitive pathways.

3. **Integration Point:** The shared Representation Space and Action axis across both diagrams suggest a unified neural framework where sensory processing (Cognitive Brain) informs decision-making (Chain-of-Thought). The color-coded motion strength and instruction states give a visual, qualitative indication of neural activation patterns rather than measured values.

4. **Clinical Implications:** The clear demarcation between instructed and non-instructed outputs could inform studies on executive function disorders, while the motion strength modulation might relate to motor control pathologies.
