
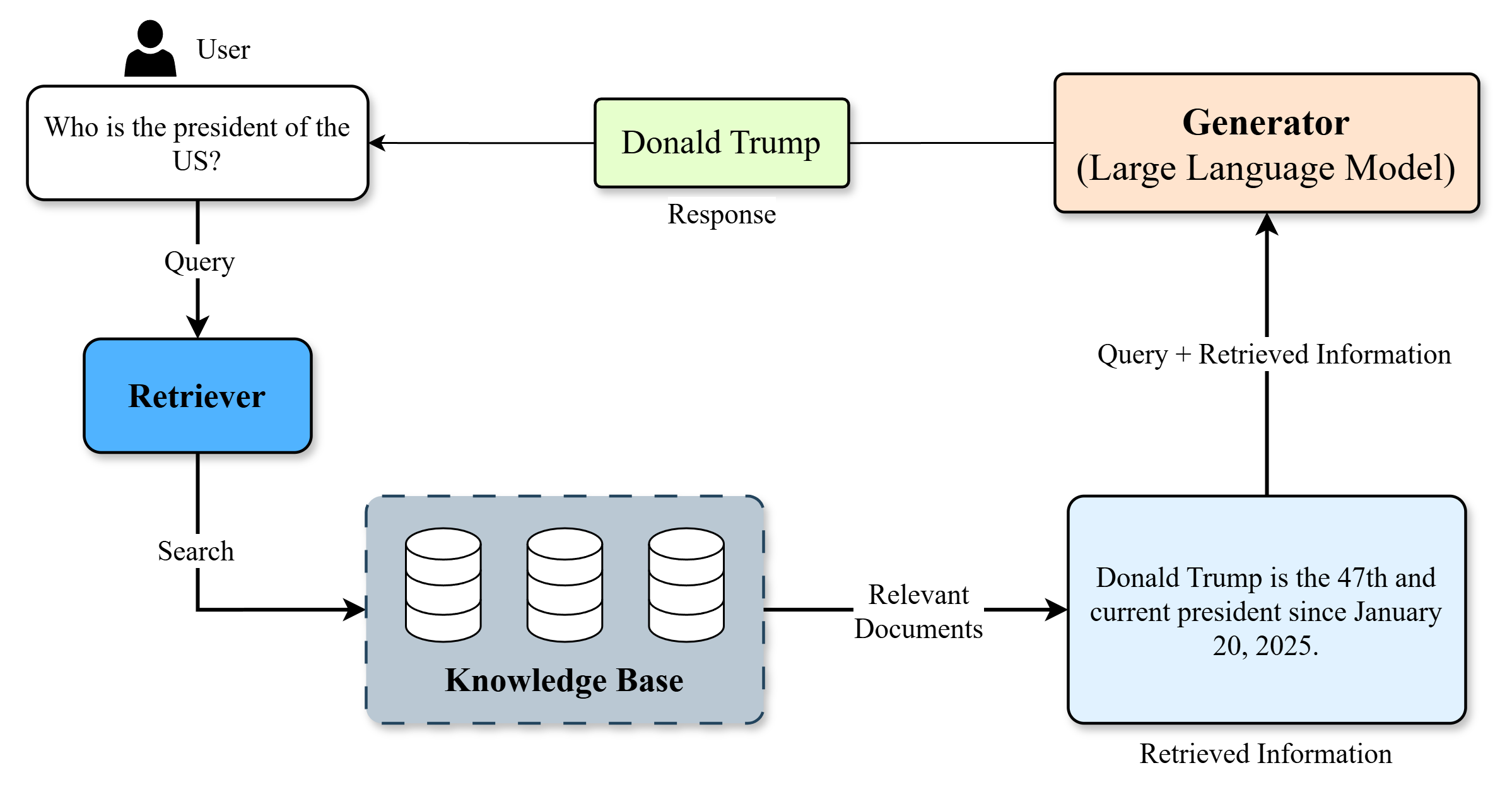

## Diagram: Retrieval-Augmented Generation (RAG) Process

### Overview

This diagram illustrates Retrieval-Augmented Generation (RAG), a technique used with Large Language Models (LLMs) to improve response accuracy and relevance by grounding the LLM's responses in an external knowledge base. It depicts the flow of information from a user query to a generated response, highlighting the roles of the Retriever, Knowledge Base, and Generator.

### Components

The diagram consists of the following components:

* **User:** Represented by a simple head icon.

* **Query:** A rounded rectangle containing the text "Who is the president of the US?".

* **Retriever:** A rounded rectangle labeled "Retriever".

* **Search:** A label indicating the action performed by the Retriever.

* **Knowledge Base:** A dashed rectangle labeled "Knowledge Base" containing four cylinder icons representing data storage.

* **Relevant Documents:** A label indicating the output of the Knowledge Base search.

* **Generator (Large Language Model):** A rectangle labeled "Generator (Large Language Model)".

* **Response:** A rectangle containing the text "Donald Trump".

* **Query + Retrieved Information:** A label indicating the input to the Generator.

* **Retrieved Information:** A rectangle containing the text "Donald Trump is the 47th and current president since January 20, 2025".

Arrows indicate the flow of information between these components.

### Detailed Analysis

The diagram shows the following flow:

1. A **User** poses a **Query**: "Who is the president of the US?".

2. The **Query** is sent to the **Retriever**.

3. The **Retriever** performs a **Search** on the **Knowledge Base**.

4. The **Knowledge Base** returns **Relevant Documents**. Specifically, one document is shown containing the text: "Donald Trump is the 47th and current president since January 20, 2025".

5. The **Retriever** passes the **Query** combined with the **Retrieved Information** to the **Generator (Large Language Model)**.

6. The **Generator** produces a **Response**: "Donald Trump".
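The six steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation behind the diagram: the knowledge base contents (other than the one passage shown), the stopword list, the token-overlap scoring, the prompt layout, and every function name (`retrieve`, `build_prompt`, `rag_answer`, `echo_generator`) are hypothetical. The generator is a stand-in callable where a real LLM call would go.

```python
import re
from typing import Callable, List, Set

# Hypothetical in-memory knowledge base. Only the first passage appears in
# the diagram; the other two are filler so the retriever has something to rank.
KNOWLEDGE_BASE: List[str] = [
    "Donald Trump is the 47th and current president since January 20, 2025",
    "Paris is the capital of France",
    "The Pacific is the largest ocean on Earth",
]

# Assumed stopword list so that common words do not dominate the score.
STOPWORDS: Set[str] = {"who", "is", "the", "of", "a", "an", "and", "on"}


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its non-stopword tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(query: str, documents: List[str], top_k: int = 1) -> List[str]:
    """Steps 2-4: rank documents by token overlap with the query (toy scoring)."""
    query_tokens = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query: str, retrieved: List[str]) -> str:
    """Step 5: combine the query with the retrieved information."""
    context = "\n".join(f"- {passage}" for passage in retrieved)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


def rag_answer(query: str, generator: Callable[[str], str],
               documents: List[str] = KNOWLEDGE_BASE) -> str:
    """Steps 1-6: retrieve, augment the prompt, then generate."""
    retrieved = retrieve(query, documents)
    prompt = build_prompt(query, retrieved)
    return generator(prompt)


def echo_generator(prompt: str) -> str:
    """Stand-in for an LLM call: returns the prompt it was given."""
    return prompt


if __name__ == "__main__":
    print(rag_answer("Who is the president of the US?", echo_generator))
```

Swapping `echo_generator` for a function that calls an actual model leaves the rest of the pipeline unchanged, which mirrors the separation between Retriever and Generator in the diagram. A production retriever would more likely use embedding similarity than token overlap; the overlap score is used here only to keep the sketch self-contained.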

### Key Observations

* The diagram separates RAG into two stages: retrieval (Query to Retriever to Knowledge Base) and generation (Query plus Retrieved Information to Generator).

* The Knowledge Base is depicted as a group of four data stores (cylinder icons), suggesting it can draw on multiple sources and hold a variety of information.

* The retrieved passage gives "January 20, 2025" as the start date of Donald Trump's presidency. A dated, time-sensitive fact like this may postdate an LLM's training data, which is the kind of gap the Knowledge Base is meant to fill.

* The diagram emphasizes the importance of grounding the LLM's response in external knowledge.

### Interpretation

The diagram demonstrates how RAG improves LLM responses by giving the model access to a specific knowledge source. Instead of relying solely on its pre-trained knowledge, the LLM uses the retrieved information to formulate a more accurate and contextually relevant response. The dated passage ("January 20, 2025") shows why this matters: a fact that may postdate the Generator's training data can still be answered correctly when the Knowledge Base supplies it. RAG is therefore most useful where up-to-date or specialized knowledge is required. The flow of information is unidirectional, indicating a simple RAG pipeline without feedback loops or iterative refinement.