## Flowchart: Information Retrieval and Response Generation System

### Overview

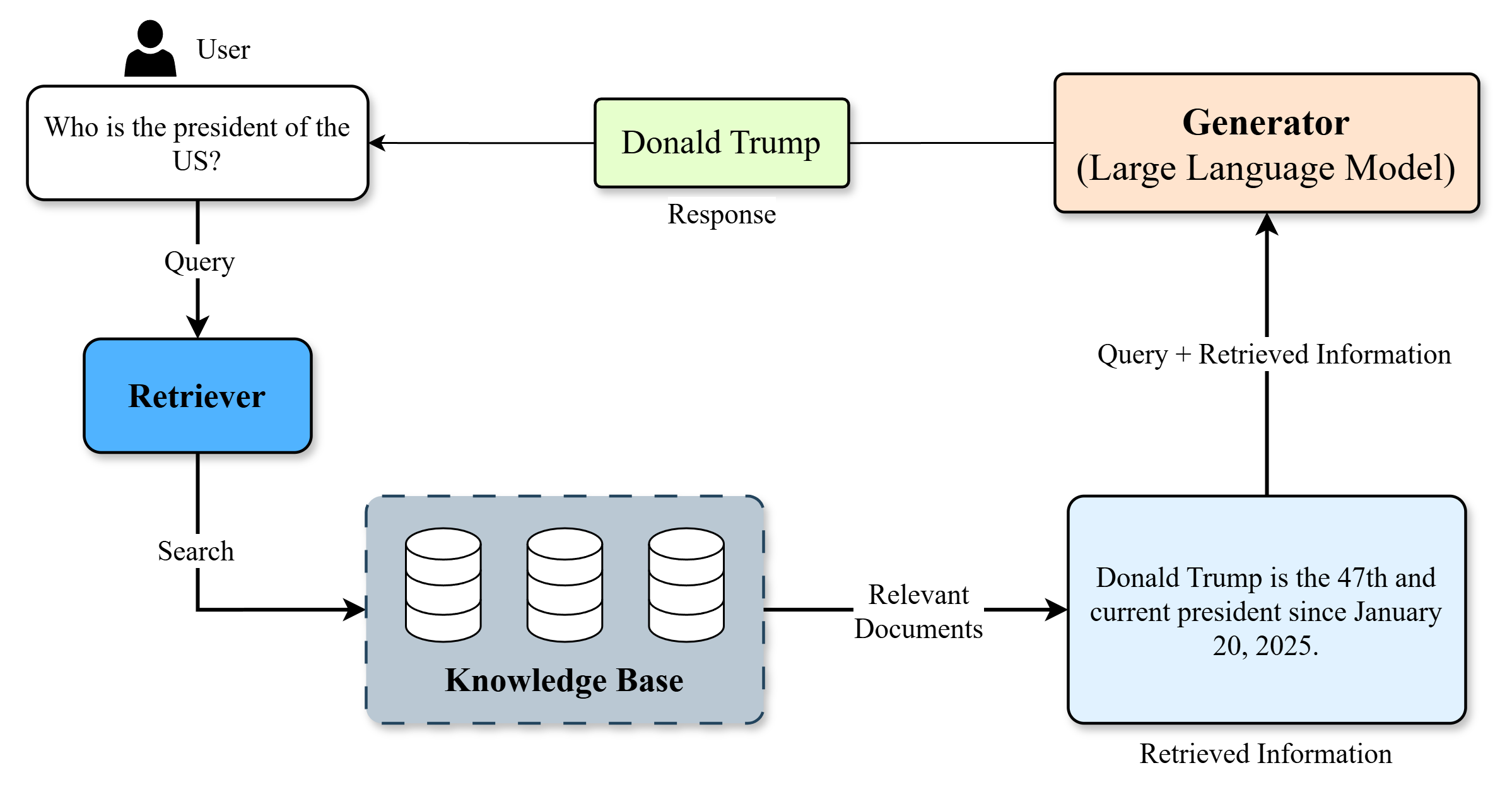

The flowchart illustrates how a system answers a user query by retrieving information and then generating a response. The process is linear: the user's query goes to a retriever, which searches a knowledge base, and the retrieved documents are passed to a generator that produces the response. The example shown asks who the U.S. president is.

### Components

1. **User**: Initiates the process by asking a query ("Who is the president of the US?").

2. **Query**: The user's question, passed to the Retriever.

3. **Retriever**: Processes the query and performs a "Search" on the Knowledge Base.

4. **Knowledge Base**: A repository of information represented by three stacked cylinders (symbolizing data storage).

5. **Relevant Documents**: Output from the Knowledge Base search, fed into the Generator.

6. **Generator (Large Language Model)**: Synthesizes the query and retrieved information to produce a response.

7. **Response**: Final output ("Donald Trump") with supporting retrieved information ("Donald Trump is the 47th and current president since January 20, 2025.").
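The Retriever and Knowledge Base components listed above can be sketched as follows. This is a minimal illustration, not the system in the diagram: the `Document` and `Retriever` names, the stopword list, and the token-overlap scoring are all assumptions made for the example, and a production retriever would more likely use embeddings or an inverted index.

```python
import re
from dataclasses import dataclass

# Assumed stopword list, chosen only so common words do not dominate the score.
STOPWORDS = {"who", "is", "the", "of", "a", "an", "and"}


@dataclass
class Document:
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its alphanumeric tokens, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


class Retriever:
    """Hypothetical retriever: ranks documents by token overlap with the query."""

    def __init__(self, knowledge_base: list[Document]):
        self.knowledge_base = knowledge_base

    def search(self, query: str, top_k: int = 1) -> list[Document]:
        query_tokens = tokenize(query)
        scored = [
            (len(query_tokens & tokenize(doc.text)), doc)
            for doc in self.knowledge_base
        ]
        # Drop documents that share no tokens with the query, best match first.
        scored = [pair for pair in scored if pair[0] > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [doc for _, doc in scored[:top_k]]


knowledge_base = [
    Document("Donald Trump is the 47th and current president since January 20, 2025."),
    Document("Paris is the capital of France."),
]
retriever = Retriever(knowledge_base)
relevant = retriever.search("Who is the president of the US?")
```

Here `relevant` plays the role of the "Relevant Documents" box: it is what the Generator would receive alongside the original query.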

### Detailed Analysis

- **Flow Direction**:

  - User → Query → Retriever → Search → Knowledge Base → Relevant Documents → Generator → Response.

- **Key Textual Elements**:

  - **User Query**: "Who is the president of the US?"

  - **Response**: "Donald Trump"

  - **Generator Input**: Combines the original query with retrieved documents.

  - **Retrieved Information**: "Donald Trump is the 47th and current president since January 20, 2025."

- **Visual Symbols**:

  - Knowledge Base: Three stacked cylinders (standardized representation for data storage).

  - Arrows: Indicate sequential processing steps.
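The "Generator Input" step, where the query is combined with the retrieved documents, might look like the sketch below. The prompt wording, the `build_prompt` and `respond` helpers, and the `fake_llm` stand-in are all hypothetical; the diagram does not specify a prompt format or a particular model API.

```python
from typing import Callable


def build_prompt(query: str, documents: list[str]) -> str:
    """Combine the user query and the retrieved documents into one prompt."""
    context = "\n".join(f"- {doc}" for doc in documents)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}\n"
        "Answer:"
    )


def respond(query: str, documents: list[str], llm: Callable[[str], str]) -> str:
    """Run the Generator step: build the prompt, then hand it to the model."""
    return llm(build_prompt(query, documents))


def fake_llm(prompt: str) -> str:
    # Placeholder for a real model call (assumption): returns a fixed answer
    # only when the supporting text is present in the prompt.
    return "Donald Trump" if "Donald Trump" in prompt else "I don't know"


query = "Who is the president of the US?"
docs = ["Donald Trump is the 47th and current president since January 20, 2025."]
prompt = build_prompt(query, docs)
response = respond(query, docs, fake_llm)
```

Passing the model in as a callable keeps the retrieval and generation stages separable, so a real LLM client could replace `fake_llm` without touching the prompt-building code.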

### Key Observations

1. The system emphasizes **modularity**, separating retrieval (Retriever/Knowledge Base) from response generation (Generator).

2. The example output pairs the short answer ("Donald Trump") with supporting context (the ordinal "47th" and the date "January 20, 2025"), which comes from the retrieved document rather than from the Generator alone.

3. No feedback loops or error-handling mechanisms are depicted, implying a linear, single-pass workflow.

### Interpretation

This flowchart represents a simplified version of a **Retrieval-Augmented Generation (RAG)** system, where external knowledge bases inform LLM responses. The example highlights:

- **Temporal Accuracy**: The response includes a specific date (January 20, 2025), suggesting that retrieval lets the system reflect information as recent as the knowledge base itself.

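- **Factual Precision**: The retrieved statement that Donald Trump is the "47th and current president since January 20, 2025" is accurate as of that inauguration date. A model relying only on older training data (for example, data from 2023, when Joe Biden was the 46th president) could answer differently, so the example shows two things:

  - Retrieval can supply facts that postdate the Generator's training data.

  - The answer is only as reliable as the knowledge base: an outdated or erroneous document would propagate into the Generator's output.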

- **Workflow Efficiency**: The system prioritizes direct retrieval and synthesis without iterative refinement, which may limit nuance in complex queries.

### Limitations

- **Static Knowledge Base**: No indication of real-time updates or dynamic data sources.

- **Oversimplification**: Real-world systems often include validation steps, ambiguity resolution, and confidence scoring, which are absent here.
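As one illustration of the kind of validation step the diagram omits, the sketch below gates generation on a retrieval confidence score. The score scale, the `0.5` threshold, the fallback message, and the function names are assumptions made for this example only; real systems differ in how they score and validate retrieved documents.

```python
from typing import Callable

# Assumed fallback text, returned when no document is trusted enough.
FALLBACK = "I could not find a reliable source for that."


def select_confident(
    scored_docs: list[tuple[float, str]], min_score: float = 0.5
) -> list[str]:
    """Keep documents at or above the threshold, highest score first."""
    ranked = sorted(scored_docs, key=lambda pair: pair[0], reverse=True)
    return [text for score, text in ranked if score >= min_score]


def guarded_response(
    scored_docs: list[tuple[float, str]],
    generate: Callable[[list[str]], str],
    min_score: float = 0.5,
) -> str:
    """Call the generator only when at least one document passes the threshold."""
    docs = select_confident(scored_docs, min_score)
    if not docs:
        return FALLBACK
    return generate(docs)


# Toy generator (assumption): simply returns the best supporting document.
def echo_best(docs: list[str]) -> str:
    return docs[0]


scored = [
    (0.2, "Paris is the capital of France."),
    (0.9, "Donald Trump is the 47th and current president since January 20, 2025."),
]
confident_answer = guarded_response(scored, echo_best)
low_confidence_answer = guarded_response([(0.1, "Unrelated text.")], echo_best)
```

With this guard, a query whose best match falls below the threshold produces the fallback message instead of an answer built on weak evidence.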