## Line Chart: Loss vs. Model Size Comparison

### Overview

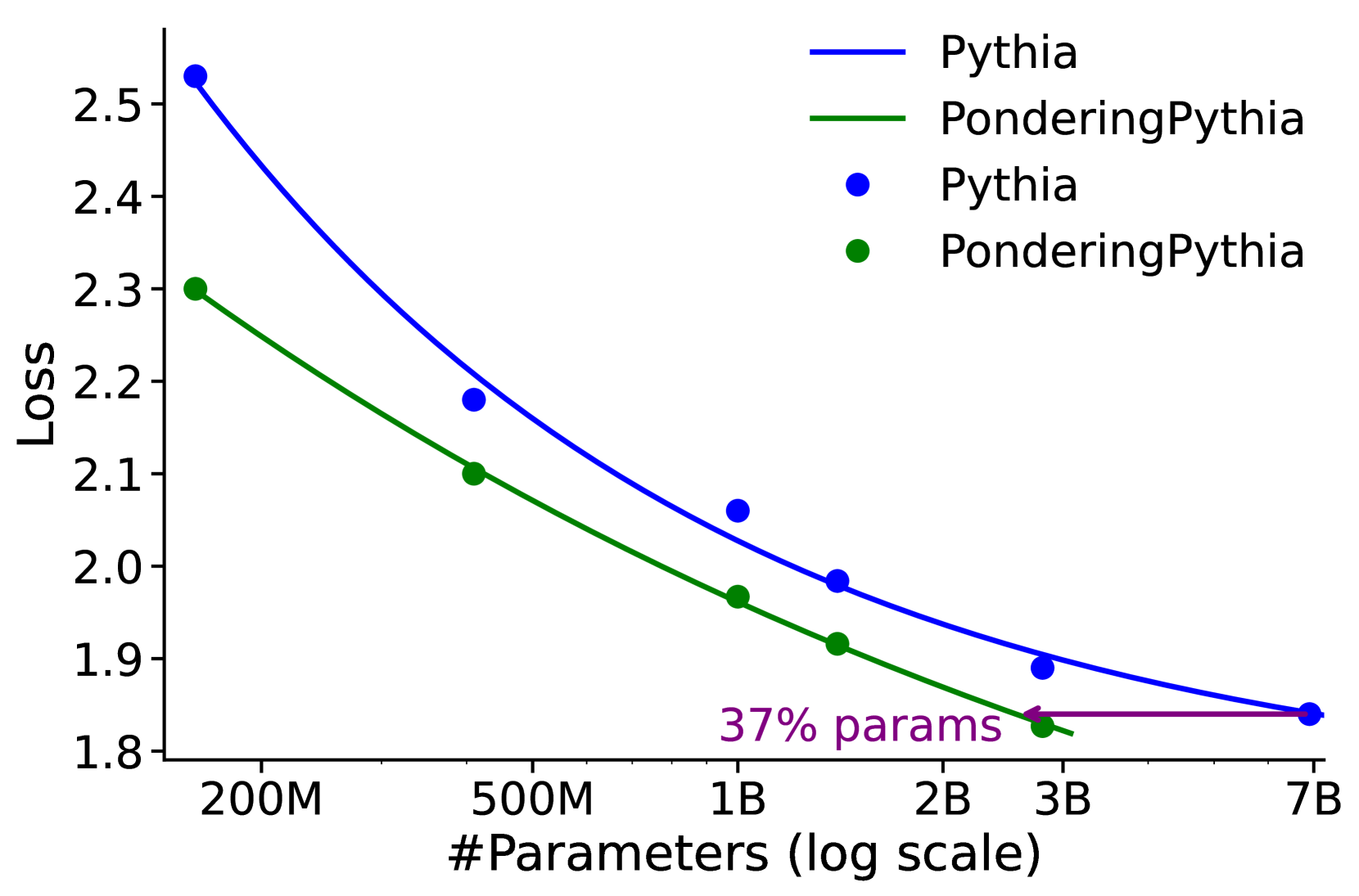

The image is a line chart comparing the loss of two language model families, "Pythia" and "PonderingPythia," across model sizes. PonderingPythia achieves a lower loss than the standard Pythia model at every plotted size except the largest (~7B), where the two converge, suggesting greater parameter efficiency over most of the range.

### Components/Axes

* **Chart Type:** Line chart with data points.

* **X-Axis:** Labeled "#Parameters (log scale)". It uses a logarithmic scale with major tick marks at 200M, 500M, 1B, 2B, 3B, and 7B (where M = Million, B = Billion).

* **Y-Axis:** Labeled "Loss". It uses a linear scale ranging from 1.8 to 2.5, with increments of 0.1.

* **Legend:** Located in the top-right corner of the chart area. It contains four entries:
  * A blue line labeled "Pythia"
  * A green line labeled "PonderingPythia"
  * A blue dot labeled "Pythia"
  * A green dot labeled "PonderingPythia"

* **Annotation:** A purple text label "37% params" with a left-pointing arrow is positioned near the bottom-right, between the 3B and 7B data points.

### Detailed Analysis

**Data Series & Trends:**

1. **Pythia (Blue Line & Dots):** The trend line slopes downward from left to right, indicating that loss decreases as the number of parameters increases.
   * Data Points (Approximate):
     * At ~200M params: Loss ≈ 2.53
     * At ~500M params: Loss ≈ 2.18
     * At ~1B params: Loss ≈ 2.06
     * At ~1.5B params: Loss ≈ 1.98
     * At ~3B params: Loss ≈ 1.89
     * At ~7B params: Loss ≈ 1.84

2. **PonderingPythia (Green Line & Dots):** This trend line also slopes downward and is positioned consistently below the Pythia line, indicating lower loss at each comparable model size.
   * Data Points (Approximate):
     * At ~200M params: Loss ≈ 2.30
     * At ~500M params: Loss ≈ 2.10
     * At ~1B params: Loss ≈ 1.97
     * At ~1.5B params: Loss ≈ 1.92
     * At ~3B params: Loss ≈ 1.83
     * At ~7B params: Loss ≈ 1.84 (This point converges with the Pythia point).
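The approximate readings above can be compared size by size. The following is a minimal sketch, assuming the eyeballed values listed in this section (they are not exact measurements); the names `SIZES`, `PYTHIA`, `PONDERING`, and `loss_gaps` are illustrative and do not come from the chart.

```python
# Approximate values read off the chart; treat them as estimates only.
SIZES = [200e6, 500e6, 1e9, 1.5e9, 3e9, 7e9]
PYTHIA = [2.53, 2.18, 2.06, 1.98, 1.89, 1.84]
PONDERING = [2.30, 2.10, 1.97, 1.92, 1.83, 1.84]


def loss_gaps(baseline, candidate):
    """Return baseline minus candidate loss per size, rounded to 2 decimals."""
    return [round(b - c, 2) for b, c in zip(baseline, candidate)]


gaps = loss_gaps(PYTHIA, PONDERING)
for size, gap in zip(SIZES, gaps):
    # A positive gap means PonderingPythia has the lower loss at that size.
    print(f"{size / 1e9:>4.1f}B  gap = {gap:+.2f}")
```

A positive gap at a given size means the PonderingPythia reading is below the Pythia reading; a gap of zero corresponds to the convergence noted at ~7B.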

**Annotation Analysis:** The purple annotation "37% params" with a leftward arrow is placed between the 3B and 7B marks on the x-axis. The arrow points from the 7B region back toward the 3B region. This suggests that PonderingPythia at ~3B parameters reaches a loss (≈1.83) comparable to that of standard Pythia at ~7B parameters (≈1.84) with a fraction of the parameters. The rounded tick values give 3B / 7B ≈ 0.429, or about 43%, so the "37%" figure presumably rests on exact parameter counts or a different baseline that the chart does not state.
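The arithmetic behind this comparison can be checked directly. This is a small sketch using the rounded axis values (3B and 7B), which are assumptions read off the chart rather than exact parameter counts; `param_ratio` is a hypothetical helper name.

```python
def param_ratio(small, large):
    """Fraction of the larger model's parameters used by the smaller one."""
    return small / large


# Ratio implied by the rounded tick labels on the x-axis.
ratio = param_ratio(3e9, 7e9)

# Size of the smaller model if the 37% figure is applied to a 7B baseline.
implied_size = 0.37 * 7e9

print(f"3B / 7B = {ratio:.3f}")
print(f"37% of 7B = {implied_size / 1e9:.2f}B")
```

Under these rounded values, the 37% figure would correspond to a model of roughly 2.6B parameters rather than 3B, which is consistent with the annotation being computed from counts that differ from the tick labels.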

### Key Observations

1. **Consistent Efficiency Gap:** The green PonderingPythia line is below the blue Pythia line at every measured point except the final 7B convergence. Based on the approximate readings, the gap narrows from about 0.23 at ~200M to about 0.06 at ~1.5B and ~3B.

2. **Diminishing Returns:** Both curves flatten as model size increases, even on the logarithmic x-axis. This illustrates diminishing returns in scaling: each doubling of parameters yields a smaller improvement in loss at larger scales.

3. **Performance Convergence:** At the largest measured size (7B parameters), the loss values for both model types converge to approximately 1.84.

4. **Parameter Efficiency Claim:** The annotation explicitly highlights the core finding: PonderingPythia can match the performance of a much larger Pythia model with significantly fewer parameters.
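The diminishing-returns observation can be made concrete by computing the loss reduction per doubling of parameters between consecutive points. The sketch below assumes the approximate Pythia readings from the Detailed Analysis section; `loss_drop_per_doubling` is an illustrative helper, not something defined by the chart or its source.

```python
import math

# Approximate Pythia readings from the chart; estimates, not exact values.
SIZES = [200e6, 500e6, 1e9, 1.5e9, 3e9, 7e9]
PYTHIA = [2.53, 2.18, 2.06, 1.98, 1.89, 1.84]


def loss_drop_per_doubling(sizes, losses):
    """Loss decrease per doubling of parameters for each consecutive pair."""
    drops = []
    for i in range(len(sizes) - 1):
        doublings = math.log2(sizes[i + 1] / sizes[i])
        drops.append((losses[i] - losses[i + 1]) / doublings)
    return drops


drops = loss_drop_per_doubling(SIZES, PYTHIA)
for i, drop in enumerate(drops):
    print(f"{SIZES[i] / 1e9:.1f}B -> {SIZES[i + 1] / 1e9:.1f}B: {drop:.3f} per doubling")
```

With readings this coarse the per-doubling drop is not strictly monotonic between neighboring intervals, but the first interval shows a much larger drop than the last, which matches the flattening visible in the chart.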

### Interpretation

This chart presents evidence for a more parameter-efficient model architecture ("PonderingPythia"). The data suggests that the modifications in PonderingPythia allow it to achieve lower loss than Pythia at small and mid-range scales. The most significant insight is the annotated 37% figure at the low-loss end of the chart: a PonderingPythia model could potentially reach the same loss as a Pythia model while using roughly 37% of the parameters, a little over one-third. If that holds, it would reduce memory requirements and potentially compute and energy costs, although the chart itself reports only loss against parameter count.

From a Peircean investigative perspective, the chart provides the *iconic* representation (the lines and points) and the *indexical* link (the annotation arrow) to support an *abductive* inference: the observed superior performance of PonderingPythia is likely due to an architectural innovation that improves learning efficiency per parameter. The convergence at 7B might indicate a fundamental limit or that the efficiency advantage is most pronounced in the mid-range of model sizes tested.