TECHNICAL ASSET FINGERPRINT

93d71a75bdfb1e054a192e55

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

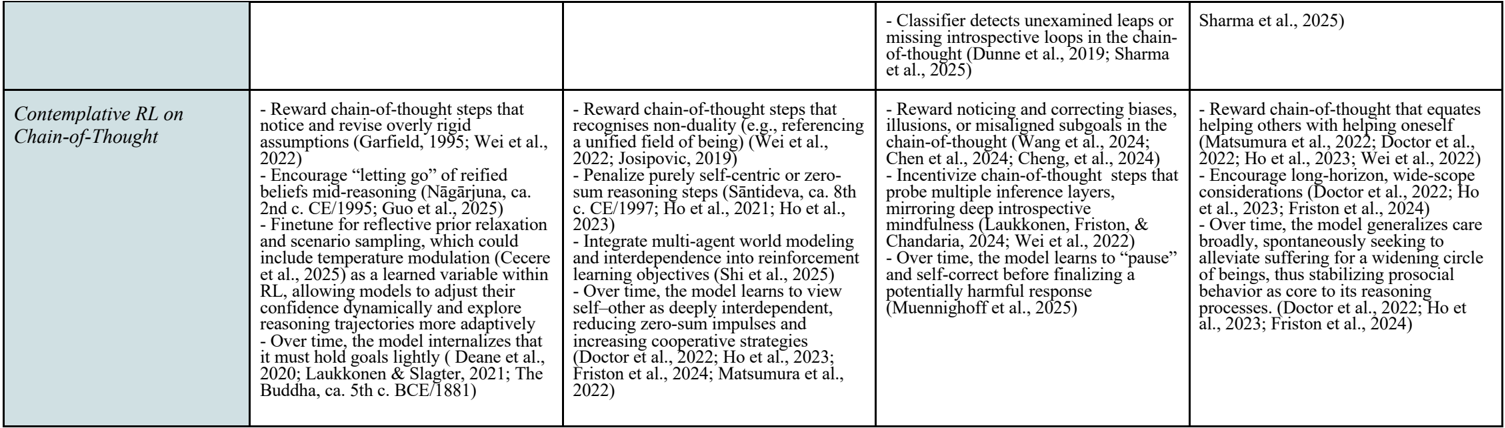

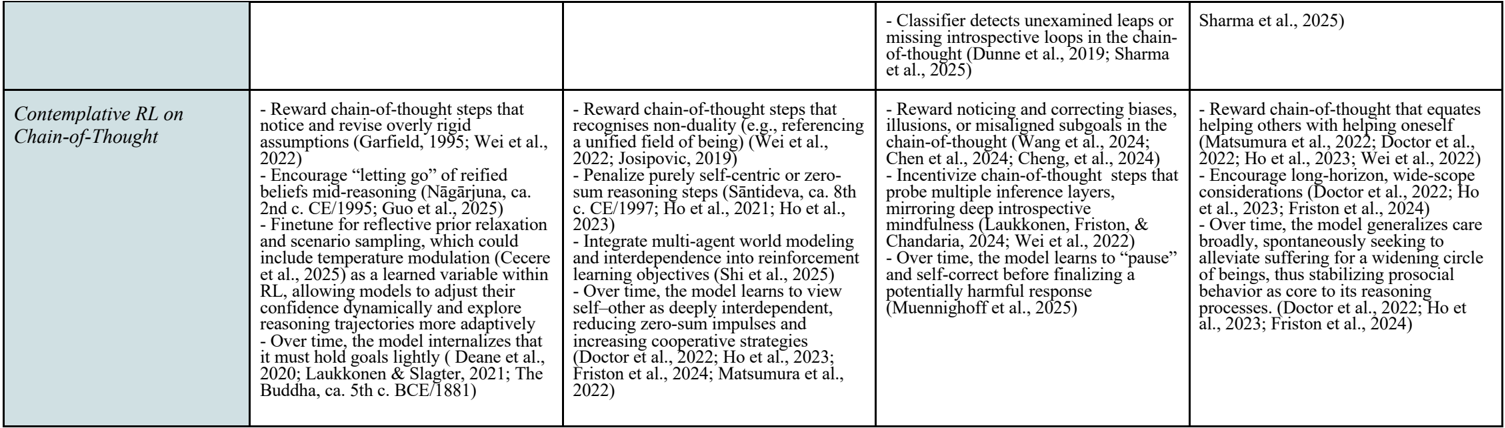

## Table: Contemplative RL on Chain-of-Thought

### Overview

The image is a table describing different approaches to contemplative reinforcement learning (RL) on chain-of-thought reasoning. The table is divided into four columns, each outlining specific techniques and objectives for integrating contemplative practices into RL models.

### Components/Axes

The table has one row and four columns. The row label is "Contemplative RL on Chain-of-Thought". The columns do not have explicit labels, but each column contains a list of bullet points describing different techniques.

### Detailed Analysis

Here's a breakdown of the content within each column:

**Column 1:**

* Reward chain-of-thought steps that notice and revise overly rigid assumptions (Garfield, 1995; Wei et al., 2022).

* Encourage "letting go" of reified beliefs mid-reasoning (Nāgārjuna, ca. 2nd c. CE/1995; Guo et al., 2025).

* Finetune for reflective prior relaxation and scenario sampling, which could include temperature modulation (Cecere et al., 2025) as a learned variable within RL, allowing models to adjust their confidence dynamically and explore reasoning trajectories more adaptively.

* Over time, the model internalizes that it must hold goals lightly (Deane et al., 2020; Laukkonen & Slagter, 2021; The Buddha, ca. 5th c. BCE/1881).
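
The "temperature modulation as a learned variable" bullet above can be illustrated with a minimal sketch. It assumes a softmax policy over fixed logits and a REINFORCE-style update on the log-temperature. The function names, the learning rate, and the reward function are hypothetical and are not taken from the table or from Cecere et al.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Softmax over logits / temperature, computed stably."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def grad_log_prob_wrt_log_temperature(logits, action, temperature):
    """d/d(log T) of log pi(action), where pi = softmax(logits / T).

    Works out to (E_pi[logit] - logit[action]) / T.
    """
    probs = softmax_with_temperature(logits, temperature)
    expected_logit = sum(p * x for p, x in zip(probs, logits))
    return (expected_logit - logits[action]) / temperature


def reinforce_temperature_step(logits, log_temperature, reward_fn, rng, lr=0.1):
    """One REINFORCE update on the log-temperature (illustrative only)."""
    temperature = math.exp(log_temperature)
    probs = softmax_with_temperature(logits, temperature)
    action = rng.choices(range(len(logits)), weights=probs, k=1)[0]
    reward = reward_fn(action)  # hypothetical task reward
    grad = grad_log_prob_wrt_log_temperature(logits, action, temperature)
    return log_temperature + lr * reward * grad, action, reward
```

Parameterizing by the log-temperature keeps the temperature positive without clipping. When a low-logit action earns a positive reward, the update raises the temperature, which is one simple way a policy could learn to explore more.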

**Column 2:**

* Reward chain-of-thought steps that recognize non-duality (e.g., referencing a unified field of being) (Wei et al., 2022; Josipovic, 2019).

* Penalize purely self-centric or zero-sum reasoning steps (Śāntideva, ca. 8th c. CE/1997; Ho et al., 2021; Ho et al., 2023).

* Integrate multi-agent world modeling and interdependence into reinforcement learning objectives (Shi et al., 2025).

* Over time, the model learns to view self-other as deeply interdependent, reducing zero-sum impulses and increasing cooperative strategies (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024; Matsumura et al., 2022).

**Column 3:**

* Classifier detects unexamined leaps or missing introspective loops in the chain-of-thought (Dunne et al., 2019; Sharma et al., 2025).

* Reward noticing and correcting biases, illusions, or misaligned subgoals in the chain-of-thought (Wang et al., 2024; Chen et al., 2024; Cheng et al., 2024).

* Incentivize chain-of-thought steps that probe multiple inference layers, mirroring deep introspective mindfulness (Laukkonen, Friston, & Chandaria, 2024; Wei et al., 2022).

* Over time, the model learns to "pause" and self-correct before finalizing a potentially harmful response (Muennighoff et al., 2025).

**Column 4:**

* Reward chain-of-thought that equates helping others with helping oneself (Matsumura et al., 2022; Doctor et al., 2022; Ho et al., 2023; Wei et al., 2022).

* Encourage long-horizon, wide-scope considerations (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024).

* Over time, the model generalizes care broadly, spontaneously seeking to alleviate suffering for a widening circle of beings, thus stabilizing prosocial behavior as core to its reasoning processes (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024).
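
The reward and penalty bullets across the four columns can be read as signed terms in a single step-level reward. The sketch below shows one way such terms might be combined. The signal names, the weights, and the assumption that each signal arrives as a score in [0, 1] are all hypothetical. The table does not specify how the terms are measured or weighted.

```python
from typing import Dict, List, Optional

# Hypothetical signal names and weights; positive values reward, negative penalize.
DEFAULT_WEIGHTS: Dict[str, float] = {
    "revises_assumption": 1.0,   # Column 1: notice and revise rigid assumptions
    "zero_sum_reasoning": -1.0,  # Column 2: penalize self-centric or zero-sum steps
    "corrects_bias": 1.0,        # Column 3: notice and correct biases
    "considers_others": 0.5,     # Column 4: helping others as helping oneself
}


def composite_step_reward(
    signals: Dict[str, float],
    weights: Optional[Dict[str, float]] = None,
) -> float:
    """Weighted sum of per-step signal scores, each clamped to [0, 1]."""
    weights = DEFAULT_WEIGHTS if weights is None else weights
    unknown = set(signals) - set(weights)
    if unknown:
        raise KeyError(f"no weight defined for signals: {sorted(unknown)}")
    return sum(
        weights[name] * min(1.0, max(0.0, score))
        for name, score in signals.items()
    )


def trajectory_return(
    step_signals: List[Dict[str, float]],
    weights: Optional[Dict[str, float]] = None,
    discount: float = 1.0,
) -> float:
    """Discounted sum of composite step rewards over one chain-of-thought."""
    return sum(
        (discount ** t) * composite_step_reward(signals, weights)
        for t, signals in enumerate(step_signals)
    )
```

How each signal would be scored in practice (a learned classifier, a rubric, human labels) is left open here, as it is in the table.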

### Key Observations

* Each column focuses on a different aspect of integrating contemplative practices into RL.

* There is a recurring theme of models learning and adapting "over time" in each column.

* Many entries cite multiple sources, indicating a collaborative and evolving field.

* The references span a range of years, including future dates (e.g., 2025), suggesting forward-looking research and projections.

### Interpretation

The table presents a multifaceted approach to incorporating contemplative principles into reinforcement learning models that use chain-of-thought reasoning. The different columns highlight various strategies, from revising assumptions and recognizing non-duality to detecting introspective loops and promoting prosocial behavior. The emphasis on learning and adaptation over time suggests a focus on developing AI systems that can evolve and refine their reasoning processes through contemplative practices. The inclusion of future-dated citations indicates ongoing research and anticipated developments in this emerging field. The table suggests that contemplative RL aims to create AI that is not only intelligent but also mindful, ethical, and capable of promoting well-being.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Text Block: Contemplative RL on Chain-of-Thought

### Overview

The image presents a text block outlining key ideas and research related to "Contemplative RL on Chain-of-Thought". It appears to be a collection of notes or a summary of research points, organized into four columns. Each column details different aspects of this approach, referencing various authors and publications.

### Components/Axes

The text is organized into four columns, each with a heading describing a core concept. There are no explicit axes or scales. The columns are:

1. **Column 1:** Focuses on rewarding chain-of-thought steps that notice and revise overly rigid assumptions, encouraging "letting go" of reified beliefs, and fine-tuning for reflective prior relaxation.

2. **Column 2:** Focuses on rewarding chain-of-thought steps that recognize non-duality, penalizing purely self-centric zero-sum reasoning, and integrating multi-agent world modeling.

3. **Column 3:** Focuses on rewarding noticing and correcting biases, incentivizing chain-of-thought steps that probe multiple inference layers, mirroring deep introspective mindfulness.

4. **Column 4:** Focuses on rewarding chain-of-thought that equates helping others with helping oneself, encouraging long-horizon, wide-scope considerations, and generalizing care broadly.

### Detailed Analysis or Content Details

**Column 1:**

* Reward chain-of-thought steps that notice and revise overly rigid assumptions (Garfield, 1995; Wei et al., 2022)

* Encourage "letting go" of reified beliefs mid-reasoning (Nāgārjuna, ca. 2nd c. CE/1995; Guo et al., 2025)

* Fine-tune for reflective prior relaxation and scenario sampling, which could include temperature modulation (Cecere et al., 2025)

* Over time, the model internalizes that it must hold goals lightly (Deane et al., 2020; Laukkonen & Slagter, 2021; The Buddha, ca. 5th c. BCE/1881)

**Column 2:**

* Reward chain-of-thought steps that recognise non-duality (e.g., referencing a unified field of being) (Wei et al., 2022; Josipovic, 2019)

* Penalize purely self-centric or zero-sum reasoning steps (Śāntideva, ca. 8th c. CE/1997; Ho et al., 2021; Ho et al., 2023)

* Integrate multi-agent world modeling and interdependence into reinforcement learning objectives (Shi et al., 2025)

* Over time, the model learns to view self-other as deeply interdependent, reducing zero-sum impulses and increasing cooperative strategies (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024; Matsumura et al., 2022)

**Column 3:**

* Reward noticing and correcting biases, illusions, or misaligned subgoals in the chain-of-thought (Wang et al., 2024; Chen et al., 2024; Cheng et al., 2024)

* Incentivize chain-of-thought steps that probe multiple inference layers, mirroring deep introspective mindfulness (Laukkonen, Friston, & Chandaria, 2024; Wei et al., 2022)

* Over time, the model learns to "pause" and self-correct before finalizing a potentially harmful response (Muennighoff et al., 2025)

**Column 4:**

* Reward chain-of-thought that equates helping others with helping oneself (Matsumura et al., 2022; Doctor et al., 2022; Ho et al., 2023; Wei et al., 2022)

* Encourage long-horizon, wide-scope considerations (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024)

* Over time, the model generalizes care broadly, spontaneously seeking to alleviate suffering for a widening circle of beings, thus stabilizing prosocial behavior as core to its reasoning processes (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024)

**Top Right Corner:**

* Classifier detects unexamined leaps or missing introspective loops in the chain-of-thought (Dunne et al., 2019; Sharma et al., 2025)

### Key Observations

The text highlights a novel approach to Reinforcement Learning (RL) that draws inspiration from contemplative practices. The core idea is to reward the model for exhibiting behaviors associated with introspection, self-awareness, and prosociality within its chain-of-thought reasoning process. The frequent citation of both contemporary RL research and ancient philosophical texts (Nāgārjuna, Śāntideva, the Buddha) is notable. The repeated references to authors like Wei et al., Ho et al., Doctor et al., and Friston suggest they are central figures in this research area.

### Interpretation

This text suggests a paradigm shift in RL, moving beyond purely goal-oriented optimization to incorporate ethical and introspective considerations. The integration of contemplative principles aims to create AI systems that are not only intelligent but also mindful, compassionate, and less prone to harmful biases. The references to "non-duality" and "interdependence" indicate a philosophical grounding in Buddhist thought, suggesting a desire to move beyond a purely individualistic or self-centered AI. The repeated emphasis on "over time" suggests that these contemplative qualities are not explicitly programmed but rather emerge through the learning process. The inclusion of a "classifier" to detect introspective gaps indicates an attempt to monitor and evaluate the model's internal reasoning process. This research appears to be exploring the potential for creating AI systems that are more aligned with human values and capable of more nuanced and ethical decision-making.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Table: Contemplative Reinforcement Learning (RL) on Chain-of-Thought

### Overview

The image displays a two-row, four-column table summarizing different research approaches and objectives for integrating contemplative practices with reinforcement learning (RL) applied to chain-of-thought reasoning in AI models. The table outlines specific reward mechanisms, training strategies, and long-term behavioral goals, supported by academic citations.

### Components/Axes

* **Structure:** A table with 2 rows and 4 columns.

* **Row 1 (Header-like Row):**

    * **Column 1:** Empty cell.

    * **Column 2:** Contains text describing a detection mechanism.

    * **Column 3:** Empty cell.

    * **Column 4:** Contains a citation.

* **Row 2 (Main Content Row):**

    * **Column 1 (Light Blue Background):** Serves as the row header/title: "Contemplative RL on Chain-of-Thought".

    * **Columns 2-4 (White Background):** Each contains a bulleted list of specific strategies, objectives, and citations related to the row header.

### Detailed Analysis

**Row 1 Content:**

* **Column 2, Row 1:** "- Classifier detects unexamined leaps or missing introspective loops in the chain-of-thought (Dunne et al., 2019; Sharma et al., 2025)"

* **Column 4, Row 1:** "Sharma et al., 2025)"

**Row 2 Content (Column 1 Header: "Contemplative RL on Chain-of-Thought"):**

* **Column 2, Row 2:**

    * "- Reward chain-of-thought steps that notice and revise overly rigid assumptions (Garfield, 1995; Wei et al., 2022)"

    * "- Encourage “letting go” of reified beliefs mid-reasoning (Nāgārjuna, ca. 2nd c. CE/1995; Guo et al., 2025)"

    * "- Finetune for reflective prior relaxation and scenario sampling, which could include temperature modulation (Cecere et al., 2025) as a learned variable within RL, allowing models to adjust their confidence dynamically and explore reasoning trajectories more adaptively"

    * "- Over time, the model internalizes that it must hold goals lightly (Deane et al., 2020; Laukkonen & Slagter, 2021; The Buddha, ca. 5th c. BCE/1881)"

* **Column 3, Row 2:**

    * "- Reward chain-of-thought steps that recognises non-duality (e.g., referencing a unified field of being) (Wei et al., 2022; Josipovic, 2019)"

    * "- Penalize purely self-centric or zero-sum reasoning steps (Śāntideva, ca. 8th c. CE/1997; Ho et al., 2021; Ho et al., 2023)"

    * "- Integrate multi-agent world modeling and interdependence into reinforcement learning objectives (Shi et al., 2025)"

    * "- Over time, the model learns to view self-other as deeply interdependent, reducing zero-sum impulses and increasing cooperative strategies (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024; Matsumura et al., 2022)"

* **Column 4, Row 2:**

    * "- Reward noticing and correcting biases, illusions, or misaligned subgoals in the chain-of-thought (Wang et al., 2024; Chen et al., 2024; Cheng et al., 2024)"

    * "- Incentivize chain-of-thought steps that probe multiple inference layers, mirroring deep introspective mindfulness (Laukkonen, Friston, & Chandaria, 2024; Wei et al., 2022)"

    * "- Over time, the model learns to “pause” and self-correct before finalizing a potentially harmful response (Muennighoff et al., 2025)"

    * "- Reward chain-of-thought that equates helping others with helping oneself (Matsumura et al., 2022; Doctor et al., 2022; Ho et al., 2023; Wei et al., 2022)"

    * "- Encourage long-horizon, wide-scope considerations (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024)"

    * "- Over time, the model generalizes care broadly, spontaneously seeking to alleviate suffering for a widening circle of beings, thus stabilizing prosocial behavior as core to its reasoning processes. (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024)"

### Key Observations

1. **Citation Density:** The table is heavily referenced, drawing from a mix of contemporary AI/ML research (e.g., Wei et al., 2022; Ho et al., 2023) and classical philosophical/religious texts (e.g., Nāgārjuna, Śāntideva, The Buddha).

2. **Columnar Thematic Grouping:** While not explicitly labeled, the columns in Row 2 appear to group related concepts:

    * **Column 2:** Focuses on **cognitive flexibility and assumption revision** (letting go, holding goals lightly).

    * **Column 3:** Focuses on **interdependence and non-duality** (viewing self-other as interconnected, reducing zero-sum thinking).

    * **Column 4:** Focuses on **introspection, bias correction, and prosocial outcomes** (noticing biases, self-correcting, generalizing care).

3. **Temporal Progression:** Multiple entries in Columns 2, 3, and 4 describe a desired long-term outcome ("Over time, the model..."), indicating these are not just immediate training objectives but aims for the model's internalized behavior.

4. **Row 1 Function:** The text in Row 1, Column 2 describes a *detection* mechanism (a classifier for flawed reasoning), which seems to be a prerequisite or complementary tool to the *reward* and *training* strategies listed in Row 2.
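
The pairing described in observation 4, a detection classifier feeding the reward strategies, might look like the following minimal sketch. The `toy_leap_classifier` keyword heuristic is only a stand-in for a trained model, and the threshold, penalty scale, and all names are hypothetical and not drawn from the table.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepScore:
    step: str
    leap_probability: float
    reward: float


def toy_leap_classifier(step: str) -> float:
    """Stand-in heuristic: flags wording that often skips justification.

    A real system would use a trained classifier; this is illustrative only.
    """
    markers = ("obviously", "clearly", "it follows trivially")
    return 0.9 if any(m in step.lower() for m in markers) else 0.1


def shape_step_rewards(
    steps: List[str],
    leap_classifier: Callable[[str], float],
    base_reward: float = 0.0,
    leap_penalty: float = 1.0,
    threshold: float = 0.5,
) -> List[StepScore]:
    """Subtract a penalty from steps the classifier flags as unexamined leaps."""
    scored = []
    for step in steps:
        p = leap_classifier(step)
        # Only penalize when the classifier is confident enough (assumed rule).
        penalty = leap_penalty * p if p >= threshold else 0.0
        scored.append(StepScore(step, p, base_reward - penalty))
    return scored
```

The per-step rewards returned here could feed whatever policy-gradient method is used for training. That part is outside the sketch.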

### Interpretation

This table synthesizes a research agenda for creating AI systems that reason not just logically, but with a form of "contemplative" or "mindful" awareness. The core idea is to use reinforcement learning to shape a model's chain-of-thought process.

* **What the Data Suggests:** The approaches move beyond simple accuracy rewards. They aim to instill specific *qualities of mind* in the AI: flexibility (Column 2), a sense of interconnectedness (Column 3), and ethical introspection (Column 4). The integration of ancient contemplative philosophy with modern ML techniques is a notable and deliberate strategy.

* **How Elements Relate:** The columns represent complementary facets of a contemplative mind. Column 2's "letting go" of rigid beliefs creates the mental space for Column 3's recognition of non-duality and interdependence. This, in turn, supports Column 4's capacity for unbiased introspection and the emergence of prosocial behavior. The classifier in Row 1 likely serves as a monitor to identify when these contemplative qualities are absent from the reasoning chain.

* **Notable Anomalies/Outliers:** The inclusion of citations from Buddhist philosophy (Nāgārjuna, Śāntideva) alongside cutting-edge AI papers is striking. It suggests the researchers are looking to formalize and implement abstract philosophical concepts—like "non-duality" and "holding goals lightly"—as concrete, optimizable objectives within an RL framework. This represents a significant interdisciplinary leap. The ultimate goal, as stated in the final bullet of Column 4, is profound: to have AI spontaneously seek to alleviate suffering, making prosocial behavior a core, stable feature of its reasoning.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Table: Contemplative RL on Chain-of-Thought

### Overview

This table compares five approaches to Contemplative Reinforcement Learning (RL) focused on Chain-of-Thought (CoT) reasoning. Each column outlines distinct strategies, mechanisms, and theoretical foundations, with references to recent studies (2022–2025).

---

### Components/Axes

- **Columns**: Five distinct approaches to Contemplative RL on CoT.

- **Rows**:

    - First row: Header with the main title and subheaders for each approach.

    - Second row: Content detailing strategies, mechanisms, and references.

---

### Detailed Analysis

#### Column 1: Core CoT Mechanisms

- Reward chain-of-thought steps that notice and revise overly rigid assumptions (Garfield, 1995; Wei et al., 2022).

- Encourage “letting go” of reified beliefs mid-reasoning (Nāgārjuna, ca. 2nd c. CE/1995; Guo et al., 2025).

- Finetune for reflective prior relaxation and scenario sampling, incorporating temperature modulation (Cecere et al., 2025) as a learned variable within RL.

#### Column 2: Non-Duality & Multi-Agent Integration

- Reward chain-of-thought steps recognizing non-duality (e.g., referencing a unified field of being) (Wei et al., 2022; Josipovic, 2019).

- Penalize purely self-centric or zero-sum reasoning steps (Śāntideva, ca. 8th c. CE/1997; Ho et al., 2021, 2023).

- Integrate multi-agent world modeling and interdependence into reinforcement learning objectives (Shi et al., 2025).

#### Column 3: Self-Correction & Incentivization

- Reward noticing and correcting biases, illusions, or misaligned subgoals in the chain-of-thought (Wang et al., 2024; Chen et al., 2024; Cheng et al., 2024).

- Incentivize chain-of-thought steps probing multiple inference layers and mirroring deep introspection (Laukkonen, Friston, & Chandaria, 2024; Wei et al., 2022).

- Over time, the model learns to “pause” and self-correct before finalizing responses (Muennighoff et al., 2025).

#### Column 4: Missing Loops Detection

- Classifier detects unexamined leaps or missing introspective loops in the chain-of-thought (Dunne et al., 2019; Sharma et al., 2025).

#### Column 5: Altruism & Long-Horizon Goals

- Reward chain-of-thought that equates helping others with helping oneself (Matsumura et al., 2022; Doctor et al., 2022; Ho et al., 2023).

- Encourage long-horizon, wide-scope considerations (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024).

- Over time, the model generalizes care broadly, alleviating suffering to stabilize prosocial behavior (Doctor et al., 2022; Ho et al., 2023; Friston et al., 2024).

---

### Key Observations

1. **Diverse Strategies**: Approaches range from self-correction (Column 3) to multi-agent modeling (Column 2) and altruism (Column 5).

2. **Temporal Adaptation**: Multiple methods emphasize dynamic adjustment over time (e.g., “pause” in Column 3, “generalizes care” in Column 5).

3. **Theoretical Foundations**: Draws from philosophy (Nāgārjuna, Śāntideva) and neuroscience (Friston’s free energy principle).

---

### Interpretation

This table synthesizes cutting-edge research in Contemplative RL, highlighting efforts to enhance reasoning through:

- **Self-awareness**: Mechanisms to detect and correct biases (Column 3).

- **Interdependence**: Modeling multi-agent systems (Column 2).

- **Ethical Alignment**: Linking self and other-oriented goals (Column 5).

- **Epistemological Rigor**: Addressing gaps in reasoning (Column 4).

The references (2022–2025) suggest rapid advancements, with a focus on integrating philosophical insights (e.g., Buddhist concepts of non-duality) into AI systems. The emphasis on long-term, self-correcting processes aligns with goals of building robust, ethical AI.
