## Diagram: Retrieval-Augmented Generation (RAG) Process Flow

### Overview

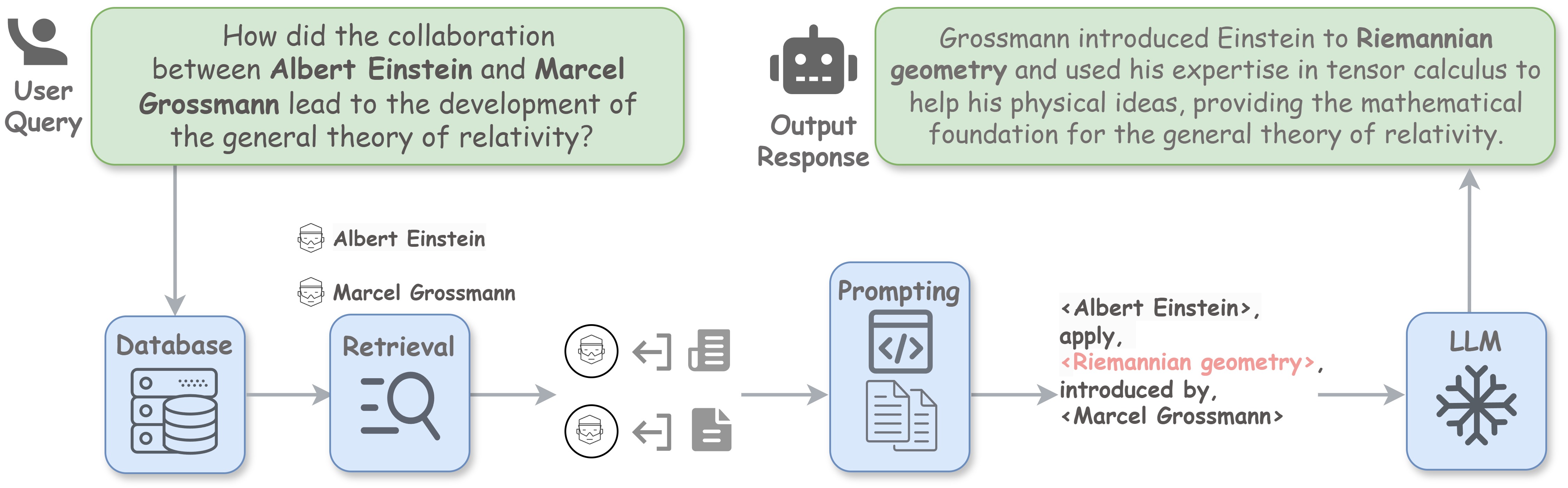

This diagram illustrates a Retrieval-Augmented Generation (RAG) process flow: a user's natural-language query is sent to a database, relevant information is retrieved, a prompt is constructed from it, and a Large Language Model (LLM) generates the response. The example query and response concern the collaboration between Albert Einstein and Marcel Grossmann.

### Components/Flow

The diagram is organized into two main horizontal sections:

1. **Top Section (User Interaction):** Contains the initial query and final output.

2. **Bottom Section (System Process):** Shows the internal workflow from query to response generation.

**Key Components and Their Placement:**

* **Top-Left:** A "User Query" icon (a person silhouette) next to a green speech bubble containing the query text.

* **Top-Right:** An "Output Response" icon (a robot head) next to a green speech bubble containing the generated answer.

* **Bottom Flow (Left to Right):**

    1. **Database (Left):** A blue box labeled "Database" with an icon of servers and disks. An arrow points from the User Query to this box.

    2. **Retrieval (Center-Left):** A blue box labeled "Retrieval" with a magnifying glass icon. An arrow points from the Database to this box.

    3. **Retrieved Entities (Center):** Two circular icons representing "Albert Einstein" and "Marcel Grossmann," each with a document icon and a bidirectional arrow, indicating information retrieval for these entities.

    4. **Prompting (Center-Right):** A blue box labeled "Prompting" with icons of code (`</>`) and documents. An arrow points from the retrieved entities to this box.

    5. **LLM (Right):** A blue box labeled "LLM" with a snowflake-like icon. An arrow points from the Prompting box to this box, and another arrow points from the LLM box up to the Output Response.
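The left-to-right flow described above can be sketched in a few lines of Python. This is a minimal illustration, not the system behind the diagram: the in-memory `DATABASE`, the name-matching `retrieve` function, the prompt layout in `build_prompt`, and the `echo_llm` stand-in are all hypothetical, and the record texts are placeholders written for this example.

```python
from typing import Callable

# Hypothetical in-memory "database": entity name -> short description.
DATABASE = {
    "Albert Einstein": "Physicist who developed the general theory of relativity.",
    "Marcel Grossmann": "Mathematician who introduced Einstein to Riemannian geometry.",
    "Niels Bohr": "Physicist known for the Bohr model of the atom.",
}


def retrieve(query: str, database: dict[str, str]) -> dict[str, str]:
    """Retrieval step: keep records whose entity name appears in the query."""
    lowered = query.lower()
    return {name: text for name, text in database.items() if name.lower() in lowered}


def build_prompt(query: str, records: dict[str, str]) -> str:
    """Prompting step: place the retrieved records ahead of the question."""
    context = "\n".join(f"- {name}: {text}" for name, text in records.items())
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


def run_pipeline(query: str, llm: Callable[[str], str],
                 database: dict[str, str] = DATABASE) -> str:
    """User Query -> Database -> Retrieval -> Prompting -> LLM -> Output Response."""
    records = retrieve(query, database)
    prompt = build_prompt(query, records)
    return llm(prompt)


def echo_llm(prompt: str) -> str:
    """Stand-in for a real model: reports how many context records it received."""
    count = sum(1 for line in prompt.splitlines() if line.startswith("- "))
    return f"(stub answer grounded in {count} retrieved records)"


QUERY = (
    "How did the collaboration between Albert Einstein and Marcel Grossmann "
    "lead to the development of the general theory of relativity?"
)
print(run_pipeline(QUERY, echo_llm))
```

Passing the model in as a plain callable keeps the sketch independent of any particular LLM client; a real system would replace `echo_llm` and the dictionary lookup with its own components.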

### Detailed Analysis

**Textual Content Transcription:**

1. **User Query (Top-Left Green Bubble):**

    * Text: "How did the collaboration between Albert Einstein and Marcel Grossmann lead to the development of the general theory of relativity?"

2. **Output Response (Top-Right Green Bubble):**

    * Text: "Grossmann introduced Einstein to Riemannian geometry and used his expertise in tensor calculus to help his physical ideas, providing the mathematical foundation for the general theory of relativity."

3. **Component Labels:**

    * "User Query"

    * "Database"

    * "Retrieval"

    * "Prompting"

    * "LLM"

    * "Output Response"

4. **Entity Labels (Center):**

    * "Albert Einstein"

    * "Marcel Grossmann"

5. **Prompt Construction Text (Between Prompting and LLM):**

    * This text appears to be a structured prompt template with retrieved entities inserted.

    * Line 1: `<Albert Einstein>,`

    * Line 2: `apply,`

    * Line 3: `<Riemannian geometry>,` (This text is highlighted in a pink/red color).

    * Line 4: `introduced by,`

    * Line 5: `<Marcel Grossmann>`
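The five transcribed lines alternate between bracketed entities and plain relation phrases. As a rough sketch of how such a string could be assembled, the snippet below renders a path of tagged items into that form. The `render_path` function and the `("entity" | "relation", text)` representation are assumptions made for illustration; the diagram does not show how the prompt is actually built.

```python
def render_path(path: list[tuple[str, str]]) -> str:
    """Wrap entities in angle brackets, leave relations bare, join with commas."""
    parts = []
    for kind, text in path:
        parts.append(f"<{text}>" if kind == "entity" else text)
    return ", ".join(parts)


# Hypothetical tagged path matching the text transcribed from the diagram.
PATH = [
    ("entity", "Albert Einstein"),
    ("relation", "apply"),
    ("entity", "Riemannian geometry"),
    ("relation", "introduced by"),
    ("entity", "Marcel Grossmann"),
]

print(render_path(PATH))
# <Albert Einstein>, apply, <Riemannian geometry>, introduced by, <Marcel Grossmann>
```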

### Key Observations

* **Process Flow:** The diagram depicts a linear process: User Query → Database → Retrieval → Prompting → LLM → Output Response.

* **Information Highlighting:** The term `<Riemannian geometry>` in the prompt construction is visually highlighted in a distinct color (pink/red), suggesting it is a key retrieved concept or a focus of the prompt.

* **Entity-Centric Retrieval:** The retrieval step targets information about the two named entities mentioned in the query (Einstein and Grossmann).

* **Structured Prompting:** The prompt sent to the LLM is not the raw query but a structured template that incorporates the retrieved entities and concepts.
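One possible reading of the highlighting, offered here as an assumption rather than something the diagram states, is that it marks the concept that retrieval contributed beyond what the query already named. Under that reading, the highlighted item can be found by comparing the prompt's entities with the query text. The function name and the simple substring match below are hypothetical.

```python
def concepts_added_by_retrieval(query: str, prompt_entities: list[str]) -> list[str]:
    """Return prompt entities that do not appear verbatim in the query."""
    lowered = query.lower()
    return [entity for entity in prompt_entities if entity.lower() not in lowered]


QUERY = (
    "How did the collaboration between Albert Einstein and Marcel Grossmann "
    "lead to the development of the general theory of relativity?"
)
PROMPT_ENTITIES = ["Albert Einstein", "Riemannian geometry", "Marcel Grossmann"]

print(concepts_added_by_retrieval(QUERY, PROMPT_ENTITIES))
# ['Riemannian geometry']
```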

### Interpretation

This diagram demonstrates the core mechanism of a Retrieval-Augmented Generation (RAG) system. It visually explains how such a system grounds its responses in factual data rather than relying solely on the LLM's parametric memory.

* **What it demonstrates:** The process shows how a complex, knowledge-intensive question is answered by first retrieving relevant facts (about Einstein, Grossmann, and Riemannian geometry) from an external database and then using those facts to construct a precise prompt for the LLM. The aim is a final response that is accurate and relevant to the specific query, although the diagram itself does not show how accuracy is verified.

* **Relationships:** The flow arrows define a strict dependency chain. The quality of the final "Output Response" is directly dependent on the accuracy of the "Retrieval" step and the effectiveness of the "Prompting" strategy in framing the retrieved information for the LLM.

* **Notable Pattern:** The diagram emphasizes the transformation of a natural language question into a structured, entity-aware prompt. This structured prompt (`<Albert Einstein>, apply, <Riemannian geometry>, introduced by, <Marcel Grossmann>`) acts as a focused instruction set for the LLM, guiding it to synthesize an answer based precisely on the retrieved relationships. The highlighting of "Riemannian geometry" underscores its role as the critical conceptual bridge in the retrieved information.