## Diagram: LLM Skill Mismatch Example

### Overview

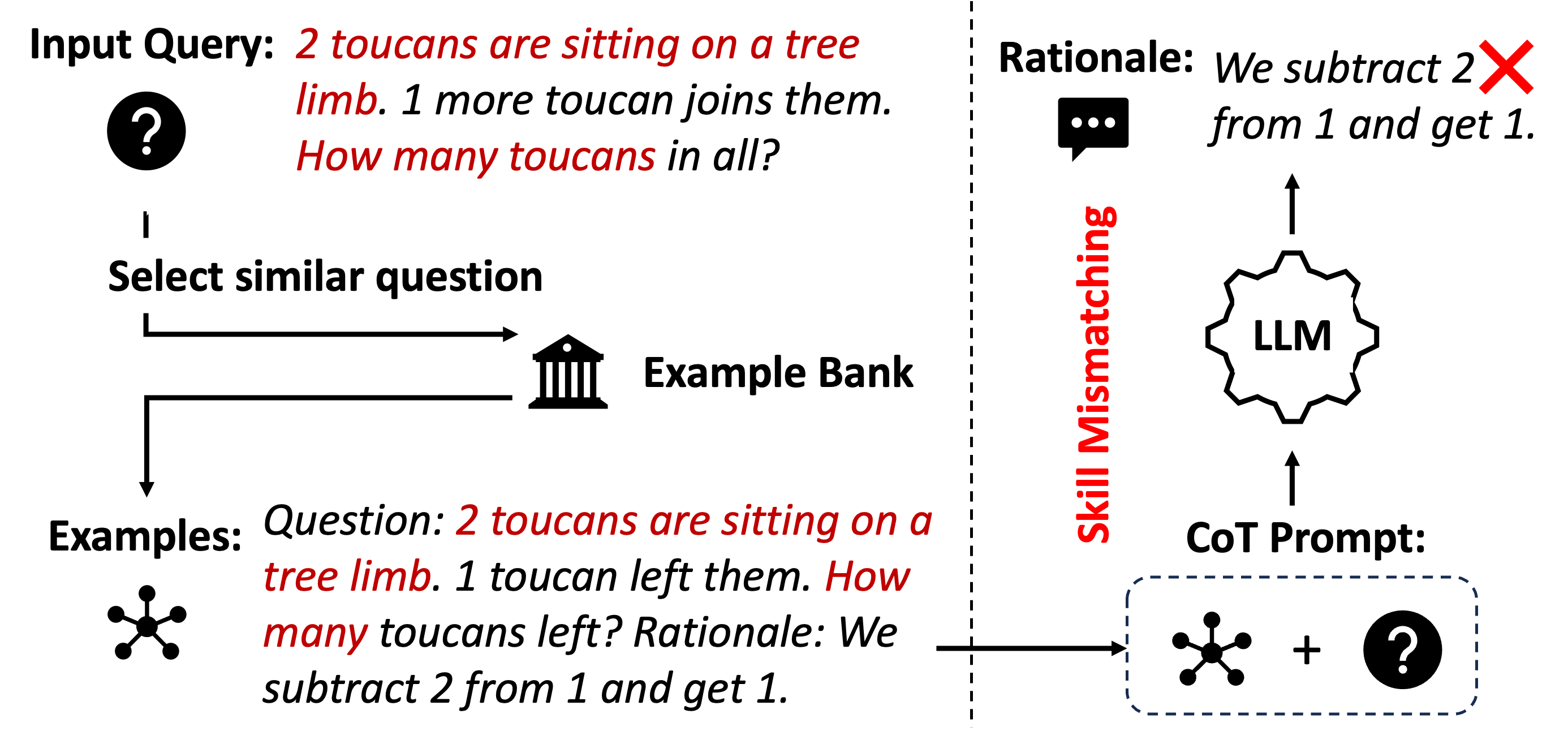

This diagram illustrates a scenario where a Large Language Model (LLM) exhibits a skill mismatch when attempting to solve a mathematical problem. It depicts the flow of information from an input query, through an example bank, to the LLM, and highlights the incorrect rationale generated. The diagram focuses on a simple addition problem framed as a word problem involving toucans.

### Components

The diagram consists of the following components:

* **Input Query:** A text box at the top-left labeled "Input Query: 2 toucans are sitting on a tree limb. 1 more toucan joins them. How many toucans in all?"

* **Select similar question:** An arrow pointing from the Input Query to an "Example Bank".

* **Example Bank:** A rectangular box labeled "Example Bank" containing an example question and rationale.

* **Examples:** A set of four intersecting circles labeled "Examples".

* **Question (within Example Bank):** "Question: 2 toucans are sitting on a tree limb. 1 toucan left them. How many toucans left? Rationale: We subtract 2 from 1 and get 1."

* **Rationale:** A speech bubble at the top-right labeled "Rationale: We subtract 2 from 1 and get 1." with a red "X" over it.

* **LLM:** A brain-shaped icon labeled "LLM".

* **CoT Prompt:** A dashed rectangular box labeled "CoT Prompt:" containing the intersecting circles from "Examples" and the question mark from "Input Query".

* **Skill Mismatch:** A vertical text label on the right side labeled "Skill Mismatch".

* **Arrows:** Arrows indicating the flow of information between components.

### Detailed Analysis

The diagram demonstrates a Chain-of-Thought (CoT) prompting approach. The process begins with an input query asking for the total number of toucans after one joins two existing ones. The system attempts to find a similar question in the "Example Bank". The example provided in the bank is a subtraction problem ("2 toucans are sitting on a tree limb. 1 toucan left them. How many toucans left?"). A correct rationale for the query would add 2 and 1 to get 3 toucans. Instead, the LLM, presented with the addition problem alongside this example, reuses the example's subtraction rationale and produces an incorrect answer.
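The selection step can be sketched in a few lines. The diagram does not say how "similar" is measured, so this minimal sketch assumes plain word-overlap (Jaccard) similarity; the function names, the `skill` field, and the second bank entry (the apples question) are hypothetical and added only for illustration. It shows how a surface-level measure can prefer the subtraction example for the addition query.

```python
import re

def tokens(text):
    """Lowercase word/number tokens of a question (assumed tokenization)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def jaccard(a, b):
    """Word-overlap similarity: |A & B| / |A | B|."""
    ta, tb = tokens(a), tokens(b)
    return len(ta & tb) / len(ta | tb)

# Hypothetical example bank. The first entry is quoted from the diagram;
# the second is invented here to give the selector an alternative.
EXAMPLE_BANK = [
    {
        "question": "2 toucans are sitting on a tree limb. 1 toucan left them. "
                    "How many toucans left?",
        "rationale": "We subtract 2 from 1 and get 1.",
        "skill": "subtraction",
    },
    {
        "question": "Sam has 3 apples and buys 4 more. "
                    "How many apples does Sam have in all?",
        "rationale": "We add 3 and 4 and get 7.",
        "skill": "addition",
    },
]

def select_similar(query, bank):
    """Return the bank entry whose question shares the most words with the query."""
    return max(bank, key=lambda ex: jaccard(query, ex["question"]))

query = ("2 toucans are sitting on a tree limb. 1 more toucan joins them. "
         "How many toucans in all?")
selected = select_similar(query, EXAMPLE_BANK)
print(selected["skill"])  # subtraction
```

The toucan example wins because it shares nearly every word with the query, even though the apples example demonstrates the skill the query actually needs.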

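The "Rationale" speech bubble states the incorrect calculation: "We subtract 2 from 1 and get 1." A red "X" overlaid on the bubble marks it as wrong. The wording is quoted as it appears in the diagram; the intended computation is presumably 2 - 1 = 1, copied from the example instead of the 2 + 1 = 3 that the query requires.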

The "CoT Prompt" box shows the combination of the "Examples" (intersecting circles) and the "Input Query" (question mark) as input to the LLM.
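As a rough illustration of what the "CoT Prompt" box represents, the sketch below concatenates the selected example with the input query and leaves the final rationale open for the LLM to complete. The `Question:` / `Rationale:` layout follows the labels in the diagram; the function name and the exact separators are assumptions.

```python
def build_cot_prompt(examples, query):
    """Join worked examples, then append the query with an empty rationale slot."""
    parts = [
        f"Question: {ex['question']}\nRationale: {ex['rationale']}"
        for ex in examples
    ]
    # The trailing "Rationale:" is where the LLM is expected to continue.
    parts.append(f"Question: {query}\nRationale:")
    return "\n\n".join(parts)

# The example and query as quoted in the diagram.
examples = [
    {
        "question": "2 toucans are sitting on a tree limb. 1 toucan left them. "
                    "How many toucans left?",
        "rationale": "We subtract 2 from 1 and get 1.",
    }
]
query = ("2 toucans are sitting on a tree limb. 1 more toucan joins them. "
         "How many toucans in all?")

prompt = build_cot_prompt(examples, query)
print(prompt)
```

With this prompt, the only demonstrated reasoning pattern is subtraction, which is the pattern the diagram shows the LLM repeating.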

### Key Observations

* The diagram highlights a failure mode of few-shot CoT prompting: the LLM copies the reasoning pattern of an in-context example even when the query calls for a different operation.

* The example in the "Example Bank" is a subtraction problem, while the "Input Query" is an addition problem. This mismatch leads to the incorrect rationale.

* The red "X" over the speech bubble marks the generated rationale as incorrect.

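* The arrows trace the flow from the Input Query through example selection and the CoT Prompt to the LLM, which places the origin of the error at the selection step: the retrieved example looks similar but demonstrates the wrong skill.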
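One way to make the mismatch concrete is to label the skill each question requires and compare the labels before building the prompt. The sketch below is not taken from the diagram: the cue-word lists and both function names are hypothetical, and a keyword heuristic like this is far too crude for real use. It only illustrates the idea of checking skills rather than surface wording.

```python
import re

# Hypothetical cue words; a real system would need a far more robust skill labeler.
ADDITION_CUES = {"joins", "more", "total", "altogether"}
SUBTRACTION_CUES = {"left", "fewer", "remain", "remaining"}

def infer_skill(question):
    """Guess the required operation from cue words; 'unknown' on a tie."""
    words = set(re.findall(r"[a-z]+", question.lower()))
    add_hits = len(words & ADDITION_CUES)
    sub_hits = len(words & SUBTRACTION_CUES)
    if add_hits > sub_hits:
        return "addition"
    if sub_hits > add_hits:
        return "subtraction"
    return "unknown"

def is_skill_mismatch(query, example_question):
    """True when both skills are recognized and they differ."""
    q_skill = infer_skill(query)
    e_skill = infer_skill(example_question)
    return "unknown" not in (q_skill, e_skill) and q_skill != e_skill

query = ("2 toucans are sitting on a tree limb. 1 more toucan joins them. "
         "How many toucans in all?")
example_question = ("2 toucans are sitting on a tree limb. 1 toucan left them. "
                    "How many toucans left?")

print(infer_skill(query))                          # addition
print(infer_skill(example_question))               # subtraction
print(is_skill_mismatch(query, example_question))  # True
```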

### Interpretation

The diagram shows that CoT prompting does not protect an LLM from a poorly chosen demonstration. The example was selected because its *form* matches the query (a word problem about toucans on a tree limb), not because it requires the same *operation* (addition vs. subtraction), and the LLM then reproduces the example's subtraction rationale instead of adding. The "Skill Mismatch" label names this core issue: the skill demonstrated in the prompt (subtraction) differs from the skill the query needs (addition). The failure suggests that LLMs can be swayed by superficial similarity between a demonstration and the query, which is why example selection and prompt evaluation deserve care. The diagram is a conceptual illustration of this failure case and contains no numerical data.