EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

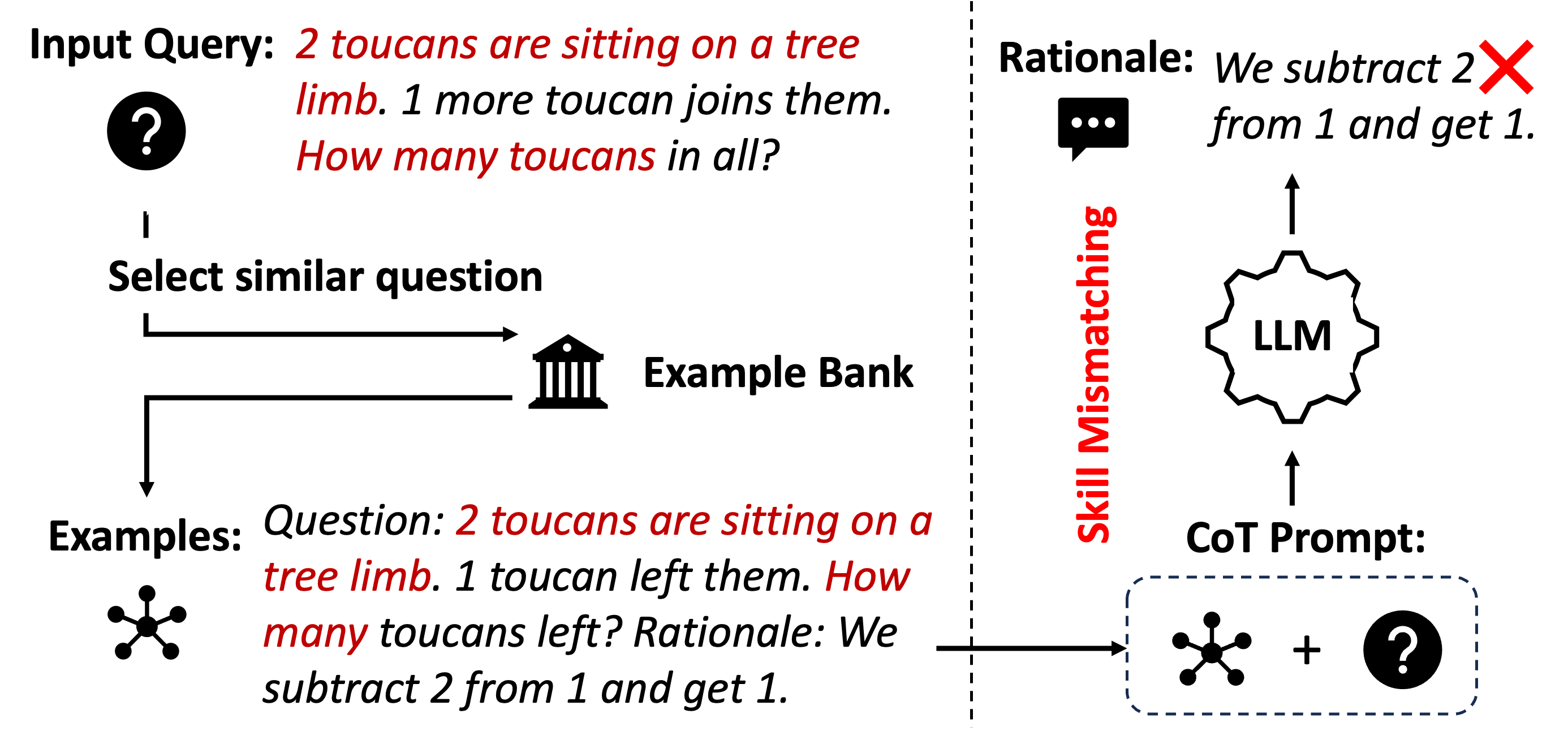

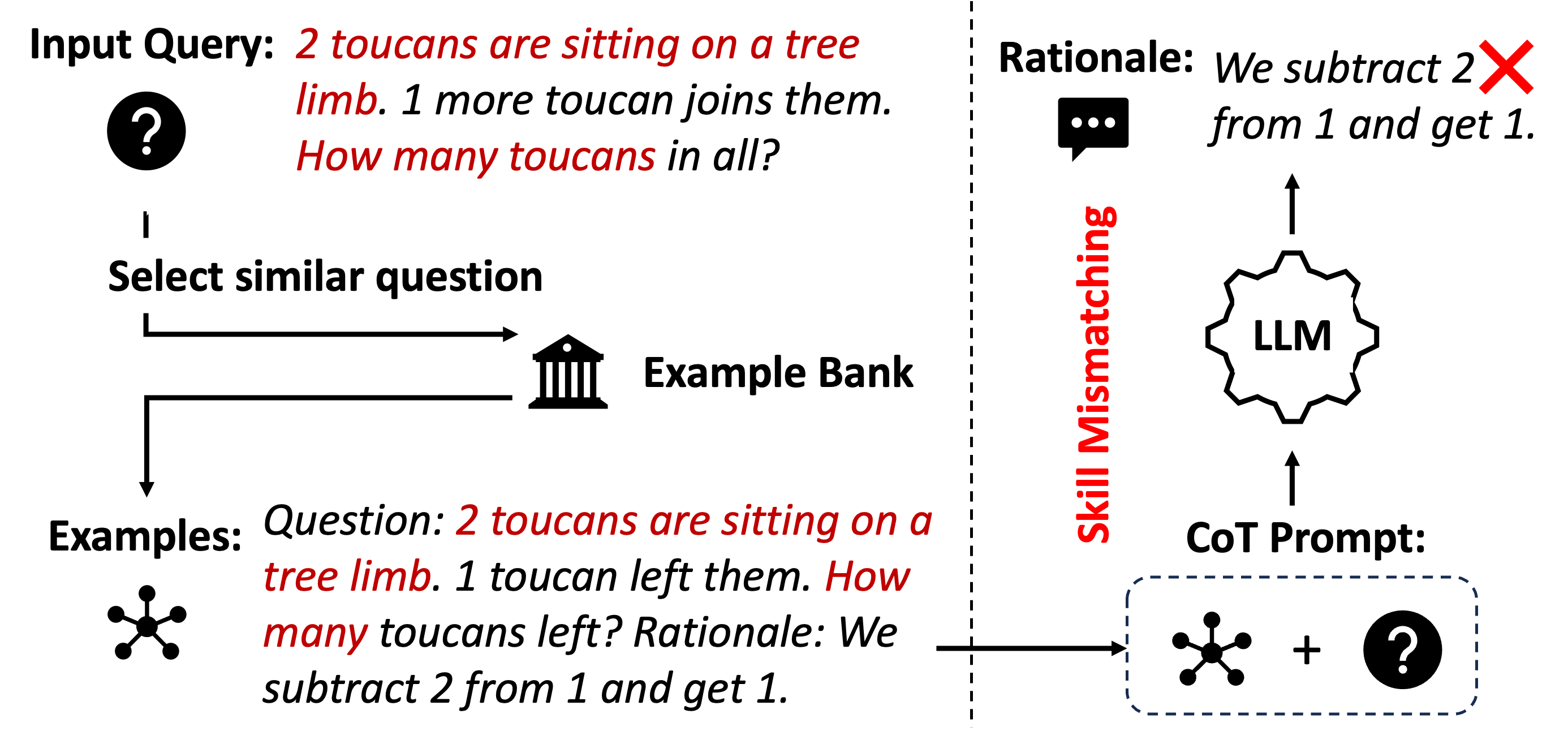

## Diagram: LLM Reasoning with Example Retrieval and Skill Mismatch

### Overview

This image is a conceptual diagram illustrating how a Large Language Model (LLM) attempts to solve a math word problem using a similar example retrieved from an example bank, and how the retrieved example leads to incorrect reasoning because of "Skill Mismatching." A vertical dashed line divides the diagram into two sections: the left side depicts the input and the example retrieval process, and the right side depicts the LLM's processing and its flawed output.

### Components/Axes

The diagram is not a chart with axes but a flow diagram with labeled components and directional arrows.

**Left Section (Input & Retrieval):**

* **Top-Left:** A text box labeled **"Input Query:"** followed by the problem text: *"2 toucans are sitting on a tree limb. 1 more toucan joins them. How many toucans in all?"* The phrases "2 toucans are sitting on a tree limb." and "How many toucans in all?" are in red font.

* **Below Input Query:** A black circle icon containing a white question mark.

* **Flow Arrow:** A black arrow points downward from the question mark icon to a text box.

* **Text Box:** Labeled **"Select similar question"**.

* **Flow Arrow:** A black arrow points from this text box to the right, towards an icon.

* **Icon:** A black silhouette of a classical building (like a bank or library).

* **Label:** Next to the building icon is the text **"Example Bank"**.

* **Flow Arrow:** A black arrow points downward from the "Select similar question" text box to another text box.

* **Text Box:** Labeled **"Examples:"**.

* **Icon:** To the left of the "Examples:" label is a black network/node icon (a central dot connected to five outer dots).

* **Example Text:** To the right of the "Examples:" label is the retrieved example: *"Question: 2 toucans are sitting on a tree limb. 1 toucan left them. How many toucans left? Rationale: We subtract 2 from 1 and get 1."* The phrases "2 toucans are sitting on a tree limb." and "How many toucans left?" are in red font.

**Right Section (LLM Processing & Output):**

* **Vertical Divider:** A black dashed line runs vertically down the center of the image.

* **Vertical Label:** To the right of the dashed line, the text **"Skill Mismatching"** is written vertically in red font.

* **Top-Right:** A text box labeled **"Rationale:"** followed by the LLM's output: *"We subtract 2 from 1 and get 1."* A large red "X" mark is superimposed over the word "subtract".

* **Icon:** Below the "Rationale:" label is a black speech bubble icon with three dots inside.

* **Flow Arrow:** A black arrow points upward from a central icon to the speech bubble.

* **Central Icon:** A black gear-shaped icon with the text **"LLM"** inside it.

* **Flow Arrow:** A black arrow points upward from a lower box to the "LLM" gear icon.

* **Lower Box:** A dashed-line box containing two icons connected by a plus sign: the network/node icon (from the Examples) and the question mark icon (from the Input Query).

* **Label:** Above this dashed box is the text **"CoT Prompt:"**.

* **Flow Arrow:** A black arrow points from the "Examples:" section on the left, across the dashed line, into the dashed "CoT Prompt" box on the right.

### Detailed Analysis

The diagram outlines a specific failure mode in an AI reasoning pipeline:

1. **Input:** The system receives an addition problem ("1 more joins them").

2. **Retrieval:** It searches an "Example Bank" for a similar question. It retrieves an example that is structurally similar (same setup with toucans on a limb) but involves a different operation (subtraction: "1 toucan left them").

3. **Prompt Construction:** The retrieved example (network icon) and the original query (question mark icon) are combined to form a Chain-of-Thought (CoT) Prompt.

4. **LLM Processing:** This combined prompt is fed into the LLM.

5. **Flawed Output:** The LLM, influenced by the retrieved example, reproduces that example's rationale verbatim for the original problem ("We subtract 2 from 1 and get 1."). It applies subtraction instead of the required addition (2 + 1 = 3), leading to the wrong answer (1 instead of 3). The red "X" explicitly marks this as an error.

6. **Diagnosis:** The vertical red label "Skill Mismatching" identifies the root cause: the retrieved example, while superficially similar, required a different mathematical skill (subtraction vs. addition), which misled the LLM.
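The six steps above can be sketched in a few lines of Python. This is a minimal illustration, not the method behind the diagram: the token-overlap (Jaccard) similarity, the `skill` annotation, the helper names, and the second bank entry about apples are all assumptions introduced here. Only the toucan question, its rationale, and the input query come from the figure.

```python
import re

def tokens(text):
    """Lowercased word and number tokens of a question."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def jaccard(a, b):
    """Surface similarity: shared tokens divided by all tokens."""
    ta, tb = tokens(a), tokens(b)
    return len(ta & tb) / len(ta | tb)

# Hypothetical example bank. The first entry is transcribed from the
# diagram; the second entry and the "skill" field are assumptions.
EXAMPLE_BANK = [
    {
        "question": "2 toucans are sitting on a tree limb. 1 toucan left them. "
                    "How many toucans left?",
        "rationale": "We subtract 2 from 1 and get 1.",
        "skill": "subtraction",
    },
    {
        "question": "3 apples are in a basket. 2 more apples are added. "
                    "How many apples in all?",
        "rationale": "We add 3 and 2 and get 5.",
        "skill": "addition",
    },
]

def select_similar(query, bank):
    """Naive retrieval: pick the example with the highest surface overlap."""
    return max(bank, key=lambda ex: jaccard(query, ex["question"]))

def build_cot_prompt(example, query):
    """Combine the retrieved example and the query into one CoT prompt."""
    return (
        f"Question: {example['question']}\n"
        f"Rationale: {example['rationale']}\n\n"
        f"Question: {query}\n"
        "Rationale:"
    )

QUERY = ("2 toucans are sitting on a tree limb. 1 more toucan joins them. "
         "How many toucans in all?")

retrieved = select_similar(QUERY, EXAMPLE_BANK)
prompt = build_cot_prompt(retrieved, QUERY)
```

Under these assumptions the retriever selects the subtraction example, because it shares far more tokens with the query than the addition example does, so the prompt handed to the LLM demonstrates the wrong operation.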

### Key Observations

* **Visual Emphasis:** Red font is used strategically to highlight key problem elements: the critical parts of the word problems ("2 toucans...", "How many...") and the core issue ("Skill Mismatching").

* **Error Highlighting:** The large red "X" over "subtract" is a clear visual marker of the logical error in the generated rationale.

* **Iconography:** Simple, universal icons (question mark, building, network, speech bubble, gear) are used to represent abstract concepts (query, bank, examples, output, model).

* **Flow Clarity:** Arrows clearly trace the path from input, through retrieval and prompt construction, to the LLM and its erroneous output.

* **Spatial Separation:** The dashed line cleanly separates the retrieval subsystem (left) from the model execution subsystem (right), with "Skill Mismatching" labeling the interface problem between them.

### Interpretation

This diagram serves as a critical case study in the limitations of example-based or retrieval-augmented generation for reasoning tasks. It demonstrates that **similarity in surface form (topic, sentence structure) does not guarantee similarity in underlying reasoning skill**.

The diagram suggests that a naive retrieval system can introduce harmful bias. By providing an example that uses subtraction, it "primes" the LLM to perform subtraction, even when the new problem context ("joins them") clearly calls for addition. This is a form of **negative transfer** or **distractor interference**.

The diagram argues that for robust AI reasoning, systems need more than just similar examples; they require:

1. **Skill-aware retrieval:** Finding examples that match the *operation* or *reasoning pattern* needed, not just the topic.

2. **Meta-cognitive checks:** The ability of the model to recognize when a retrieved example's rationale conflicts with the problem's requirements.

3. **Disentanglement:** Separating the retrieval of relevant knowledge from the application of reasoning skills.
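One way to picture the first two requirements is the sketch below. It is a toy illustration under stated assumptions: the cue-word lists, the `infer_skill` heuristic, the `skill` annotation, and the apples example are hypothetical and do not come from the diagram. A real system would need a far more reliable way to identify the required operation than keyword matching.

```python
import re

# Hypothetical cue words; a keyword heuristic stands in for a real skill classifier.
ADD_CUES = {"more", "joins", "added", "all", "total"}
SUB_CUES = {"left", "remain", "fewer", "away"}

def tokens(text):
    """Lowercased word and number tokens of a question."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def jaccard(a, b):
    """Surface similarity: shared tokens divided by all tokens."""
    ta, tb = tokens(a), tokens(b)
    return len(ta & tb) / len(ta | tb)

def infer_skill(question):
    """Guess the required operation from cue words; None if undecided."""
    t = tokens(question)
    add, sub = len(t & ADD_CUES), len(t & SUB_CUES)
    if add == sub:
        return None
    return "addition" if add > sub else "subtraction"

def skill_aware_select(query, bank):
    """Filter the bank by inferred skill first, then rank by surface similarity."""
    needed = infer_skill(query)
    candidates = [ex for ex in bank if ex["skill"] == needed] or bank
    return max(candidates, key=lambda ex: jaccard(query, ex["question"]))

def conflicts(example, query):
    """Meta-cognitive check: flag an example whose skill differs from the query's."""
    needed = infer_skill(query)
    return needed is not None and example["skill"] != needed

# Hypothetical bank: the toucan entry is from the diagram, the apples entry is not.
BANK = [
    {"question": "2 toucans are sitting on a tree limb. 1 toucan left them. "
                 "How many toucans left?",
     "skill": "subtraction"},
    {"question": "3 apples are in a basket. 2 more apples are added. "
                 "How many apples in all?",
     "skill": "addition"},
]

QUERY = ("2 toucans are sitting on a tree limb. 1 more toucan joins them. "
         "How many toucans in all?")

chosen = skill_aware_select(QUERY, BANK)
```

Here the skill filter runs before the similarity ranking, so the superficially closer toucan example is excluded and the addition example is chosen. The `conflicts` helper shows how the same signal could be used as a check after retrieval rather than as a filter.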

In essence, the image warns that without careful design, the very mechanisms meant to help AI reason (like providing examples) can become the source of its failure, highlighting the importance of precision in the "skill" being matched during the retrieval process.
