
## Diagram: Taxonomy of SCA attacks on DNN implementations

### Overview

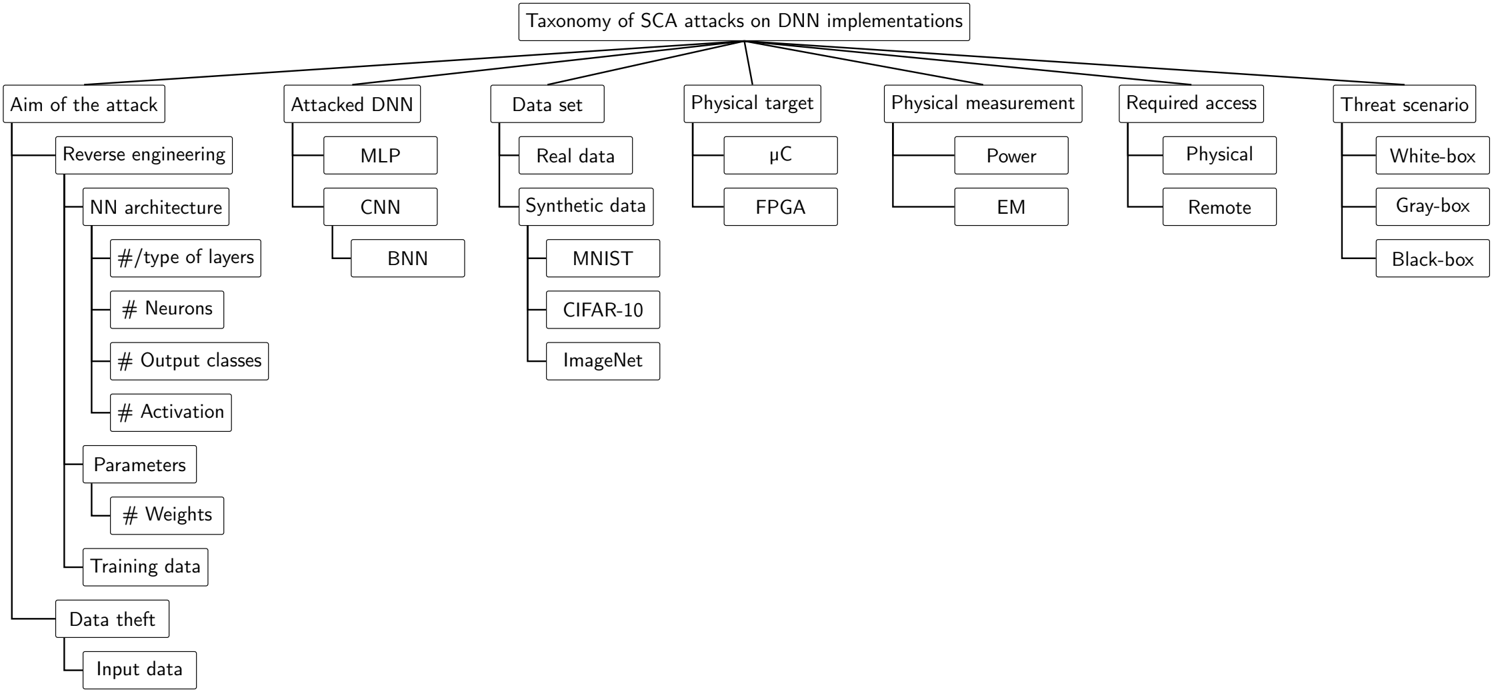

This diagram presents a taxonomy of Side-Channel Attacks (SCA) targeting Deep Neural Network (DNN) implementations. It categorizes attacks based on their aim, the type of DNN attacked, the dataset used, the physical target, the physical measurement taken, the required access level, and the resulting threat scenario. The diagram uses a flow chart structure, branching out from central categories to more specific sub-categories.

### Components/Axes

The diagram is structured around seven main categories, arranged horizontally from left to right:

1. **Aim of the attack** (leftmost)

2. **Attacked DNN**

3. **Data set**

4. **Physical target**

5. **Physical measurement**

6. **Required access**

7. **Threat scenario** (rightmost)

Each category branches out into several sub-categories.

### Detailed Analysis

Here's a breakdown of each category and its sub-categories:

**1. Aim of the attack:**

* Reverse engineering
* NN architecture
    * \#/type of layers
    * \# Neurons
    * \# Output classes
    * \# Activation
* Parameters
    * \# Weights
* Training data
* Data theft
* Input data

**2. Attacked DNN:**

* MLP (Multilayer Perceptron)

* CNN (Convolutional Neural Network)

* BNN (Binary Neural Network)

**3. Data set:**

* Real data

* Synthetic data

* MNIST

* CIFAR-10

* ImageNet

**4. Physical target:**

* µC (Microcontroller)

* FPGA (Field-Programmable Gate Array)

**5. Physical measurement:**

* Power

* EM (Electromagnetic)

**6. Required access:**

* Physical

* Remote

**7. Threat scenario:**

* White-box

* Gray-box

* Black-box

The diagram shows connections between these categories. For example, an attack aiming for "Reverse engineering" can target any of the "Attacked DNN" types (MLP, CNN, BNN) and utilize any of the listed "Data sets". Similarly, a physical target of "µC" can be measured using "Power" or "EM" measurements. The "Required access" dictates the "Threat scenario".
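As a rough illustration of how the categories combine, the taxonomy can be sketched as a plain Python dictionary. The layout, the identifiers (`TAXONOMY`, `validate_profile`, `target_measurement_pairs`), and the example profile are assumptions made for this sketch and do not come from the diagram; only the axis values are taken from the lists above, and the "aim" axis is reduced to two of its entries for brevity.

```python
from itertools import product

# Axis values copied from the lists above. The dictionary layout and all
# identifiers are illustrative assumptions, not part of the diagram.
TAXONOMY = {
    "aim": ["reverse engineering", "data theft"],  # sub-aims omitted
    "attacked_dnn": ["MLP", "CNN", "BNN"],
    "data_set": ["real data", "synthetic data", "MNIST", "CIFAR-10", "ImageNet"],
    "physical_target": ["µC", "FPGA"],
    "physical_measurement": ["power", "EM"],
    "required_access": ["physical", "remote"],
    "threat_scenario": ["white-box", "gray-box", "black-box"],
}


def validate_profile(profile):
    """Return a list of problems found in a (hypothetical) attack profile."""
    problems = []
    for axis, value in profile.items():
        if axis not in TAXONOMY:
            problems.append(f"unknown axis: {axis}")
        elif value not in TAXONOMY[axis]:
            problems.append(f"unknown value for {axis}: {value}")
    return problems


def target_measurement_pairs():
    """Enumerate every physical target / physical measurement combination."""
    return list(
        product(TAXONOMY["physical_target"], TAXONOMY["physical_measurement"])
    )


if __name__ == "__main__":
    # A made-up profile, used only to exercise the validator.
    example = {
        "aim": "reverse engineering",
        "attacked_dnn": "MLP",
        "physical_target": "µC",
        "physical_measurement": "EM",
    }
    print(validate_profile(example))
    print(target_measurement_pairs())
```

Keeping each axis as an independent list mirrors the text's reading that values from different categories can be combined freely. A stricter model would need the actual edges drawn in the diagram, which this description does not enumerate.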

### Key Observations

* The diagram organizes side-channel attacks on DNN implementations along seven dimensions, from the attacker's aim to the resulting threat scenario.

* The links between categories show how the characteristics of an attack depend on one another, for example which physical measurements apply to which physical target.

* The diagram is qualitative: it categorizes attack possibilities and reports no quantitative data.

* The connections between categories are not exhaustive, implying that combinations not explicitly shown are also possible.

### Interpretation

The diagram is aimed at security researchers and practitioners who design and deploy DNN-based systems. It lays out the range of side-channel attacks and the factors that influence their feasibility and impact, which gives a systematic basis for analyzing and mitigating these threats.

The diagram suggests that the threat model for DNN implementations is complex, with a wide range of attack possibilities depending on the specific implementation details, the available resources, and the attacker's capabilities. The categorization into "White-box," "Gray-box," and "Black-box" threat scenarios is particularly useful for understanding the level of knowledge and access an attacker might have.

The inclusion of different DNN architectures (MLP, CNN, BNN) and datasets (MNIST, CIFAR-10, ImageNet) suggests that vulnerability to side-channel attacks can vary with the DNN model and the data it processes. The diagram does not address the effectiveness of countermeasures, but it provides a framework for evaluating and comparing them across attack scenarios.