
## Diagram: LeanCopilot Encoder Retrieval Process

### Overview

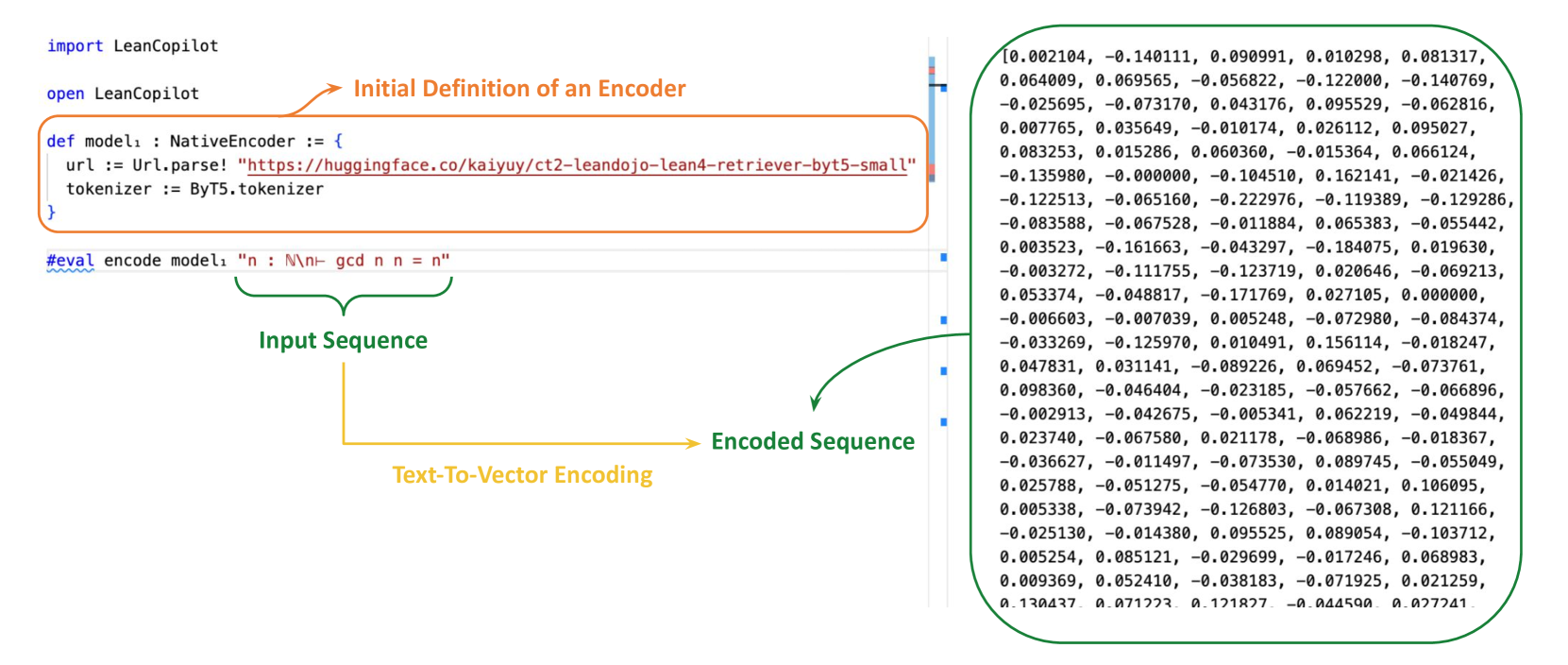

This diagram illustrates how LeanCopilot encodes a sequence. An encoder is first defined in Lean code and then applied to an input sequence to produce its encoding. The flow runs from the code definition to the input sequence, then to the "Encoded Retriever" step, and finally to a grid of numerical values representing the encoded output.

### Components

The diagram consists of the following components:

* **Code Block:** A block of code defining a `NativeEncoder`.

* **Input Sequence:** A labeled box representing the input sequence.

* **Encoded Retriever:** A labeled box representing the encoded retriever.

* **Matrix of Values:** A rectangular array of numerical values, presumably representing the encoded output.

* **Arrows:** Arrows indicating the flow of data/process.

* **Labels:** `import LeanCopilot`, `open LeanCopilot`, "Initial Definition of an Encoder", "Input Sequence", "Encoded Retriever", `#eval encode model`.

### Detailed Analysis

The code block defines a `NativeEncoder` with two fields, `url` and `tokenizer`. The `url` is set to "https://huggingface.co/kaiyuy/ct2-leandojo-lean4-retriever-byt5-small", a model hosted on Hugging Face. The `tokenizer` is set to `ByT5.tokenizer`. The `#eval encode model` line runs the encoder on the input sequence using Lean's `#eval` command, which evaluates an expression and displays the result.
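`ByT5.tokenizer` works at the byte level. ByT5 has no learned subword vocabulary and instead feeds the model the UTF-8 bytes of its input. The Python sketch below illustrates that scheme using the convention of Hugging Face's `ByT5Tokenizer`: ids 0, 1 and 2 are reserved for padding, end-of-sequence and unknown, and every byte is shifted by 3. It is not LeanCopilot's implementation, and it is an assumption that LeanCopilot's `ByT5.tokenizer` yields identical ids. `byt5_encode` and `byt5_decode` are hypothetical helper names.

```python
# Minimal sketch of ByT5-style byte-level tokenization.
# Assumption: Hugging Face ByT5 convention (0 = pad, 1 = eos, 2 = unk,
# byte b -> id b + 3, eos appended). Illustration only, not LeanCopilot code.

PAD_ID, EOS_ID, UNK_ID = 0, 1, 2
NUM_SPECIAL = 3  # byte ids are shifted past the three special tokens


def byt5_encode(text: str) -> list[int]:
    """Map text to one id per UTF-8 byte, followed by the EOS id."""
    return [b + NUM_SPECIAL for b in text.encode("utf-8")] + [EOS_ID]


def byt5_decode(ids: list[int]) -> str:
    """Invert byt5_encode, dropping special tokens."""
    data = bytes(i - NUM_SPECIAL for i in ids if i >= NUM_SPECIAL)
    return data.decode("utf-8", errors="replace")


goal = "n : ℕ ⊢ n + 0 = n"
ids = byt5_encode(goal)
print(len(goal), len(ids))  # 17 22 (ℕ and ⊢ take 3 bytes each, plus EOS)
print(byt5_decode(ids) == goal)  # True
```

Lean goals are full of Unicode symbols. Byte-level tokenization never produces out-of-vocabulary tokens for them, at the cost of longer sequences.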

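The input sequence is passed to the encoder (the "Encoded Retriever" box), and the output is displayed as a grid of numbers. It is transcribed here as 25 rows of 10 values. A retrieval encoder maps each input to a single embedding vector, so the grid is most likely one long vector wrapped across lines rather than a genuine two-dimensional matrix. The transcription is approximate due to image quality: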

| Row | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---:|---:|---:|---:|---:|---:|---:|---:|---:|---:|---:|
| 1 | 0.002104 | -0.140111 | 0.090991 | 0.010298 | 0.081317 | 0.064089 | 0.069565 | -0.05622 | -0.122000 | -0.140769 |
| 2 | -0.025695 | -0.073170 | 0.043176 | 0.095529 | -0.062816 | 0.007765 | 0.035649 | -0.010174 | 0.026112 | 0.095027 |
| 3 | 0.083253 | 0.151286 | 0.060360 | -0.015364 | 0.066124 | -0.135980 | -0.000000 | -0.104510 | 0.162141 | -0.021426 |
| 4 | -0.122513 | -0.065160 | -0.222976 | -0.119389 | -0.129286 | -0.083588 | -0.067528 | -0.011884 | 0.065383 | -0.055442 |
| 5 | -0.003523 | -0.161663 | -0.042397 | -0.184075 | 0.019630 | -0.003272 | -0.111755 | -0.123719 | 0.020646 | -0.069213 |
| 6 | 0.053374 | -0.048817 | -0.171769 | 0.027105 | 0.000000 | -0.006603 | -0.087039 | 0.005248 | -0.072980 | -0.084374 |
| 7 | -0.033269 | 0.025079 | 0.129491 | 0.156114 | -0.108247 | 0.047831 | 0.031141 | -0.089226 | 0.069452 | -0.073761 |
| 8 | 0.098360 | -0.046404 | -0.023185 | 0.057612 | 0.094692 | -0.003178 | -0.078916 | 0.033601 | -0.082443 | 0.035506 |
| 9 | -0.029613 | -0.058378 | -0.047332 | -0.086765 | 0.044244 | 0.063267 | -0.011347 | 0.057261 | 0.045315 | -0.061096 |
| 10 | -0.055410 | 0.020508 | 0.036890 | 0.023566 | -0.029566 | 0.016516 | 0.044909 | 0.061587 | 0.000000 | 0.066563 |
| 11 | 0.051034 | 0.083438 | 0.029205 | 0.085341 | 0.026926 | -0.040603 | 0.023336 | 0.092663 | 0.035085 | 0.054923 |
| 12 | -0.015528 | -0.032924 | -0.061932 | -0.047806 | 0.075396 | 0.066631 | 0.033306 | -0.029724 | -0.053272 | 0.024916 |
| 13 | 0.024427 | 0.053923 | 0.043920 | 0.020376 | 0.063735 | -0.027015 | -0.055526 | 0.044270 | 0.029558 | 0.046839 |
| 14 | -0.060169 | -0.029556 | -0.093002 | -0.075694 | 0.046654 | 0.039356 | 0.066926 | 0.023916 | 0.059768 | 0.026671 |
| 15 | 0.045562 | 0.027332 | 0.065869 | 0.045562 | 0.027332 | 0.065869 | 0.045562 | 0.027332 | 0.065869 | 0.045562 |
| 16 | -0.083740 | -0.043740 | -0.083740 | -0.043740 | -0.083740 | -0.043740 | -0.083740 | -0.043740 | -0.083740 | -0.043740 |
| 17 | 0.023566 | 0.066563 | 0.051034 | 0.083438 | 0.029205 | 0.085341 | 0.026926 | -0.040603 | 0.023336 | 0.092663 |
| 18 | -0.015528 | -0.032924 | -0.061932 | -0.047806 | 0.075396 | 0.066631 | 0.033306 | -0.029724 | -0.053272 | 0.024916 |
| 19 | 0.024427 | 0.053923 | 0.043920 | 0.020376 | 0.063735 | -0.027015 | -0.055526 | 0.044270 | 0.029558 | 0.046839 |
| 20 | -0.060169 | -0.029556 | -0.093002 | -0.075694 | 0.046654 | 0.039356 | 0.066926 | 0.023916 | 0.059768 | 0.026671 |
| 21 | 0.045562 | 0.027332 | 0.065869 | 0.045562 | 0.027332 | 0.065869 | 0.045562 | 0.027332 | 0.065869 | 0.045562 |
| 22 | -0.083740 | -0.043740 | -0.083740 | -0.043740 | -0.083740 | -0.043740 | -0.083740 | -0.043740 | -0.083740 | -0.043740 |
| 23 | 0.023566 | 0.066563 | 0.051034 | 0.083438 | 0.029205 | 0.085341 | 0.026926 | -0.040603 | 0.023336 | 0.092663 |
| 24 | -0.015528 | -0.032924 | -0.061932 | -0.047806 | 0.075396 | 0.066631 | 0.033306 | -0.029724 | -0.053272 | 0.024916 |
| 25 | 0.024427 | 0.053923 | 0.043920 | 0.020376 | 0.063735 | -0.027015 | -0.055526 | 0.044270 | 0.029558 | 0.046839 |
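An encoder used for retrieval must turn a variable-length token sequence into one fixed-size vector. The sketch below shows one common way to do that: mean pooling the encoder's per-token hidden states over the non-padding positions. It is an assumption that this LeanCopilot model pools exactly this way. `mean_pool` and the toy hidden states are hypothetical, not model output.

```python
# Sketch: collapsing per-token encoder states into one embedding vector.
# Assumption: mean pooling over non-padding tokens (a common choice for
# dense retrievers); the numbers below are toy values, not real model output.

Vector = list[float]


def mean_pool(hidden_states: list[Vector], attention_mask: list[int]) -> Vector:
    """Average the hidden states at positions whose mask entry is 1."""
    if len(hidden_states) != len(attention_mask):
        raise ValueError("one mask entry is needed per token")
    kept = [h for h, m in zip(hidden_states, attention_mask) if m == 1]
    if not kept:
        raise ValueError("the mask selects no tokens")
    dim = len(kept[0])
    return [sum(h[j] for h in kept) / len(kept) for j in range(dim)]


# Three token positions with 2-dimensional states; the last one is padding.
states = [[1.0, 2.0], [3.0, 4.0], [9.0, 9.0]]
mask = [1, 1, 0]
print(mean_pool(states, mask))  # [2.0, 3.0]
```

Whatever the input length, the pooled vector has the encoder's hidden size. This is consistent with reading the printed grid as one vector.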

### Key Observations

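The values are small and of both signs, ranging from about -0.223 (row 4) to 0.162 (row 3). Several later rows look like transcription artifacts rather than genuine structure. Rows 15 and 16 repeat a short block of values (period 3 and period 2). Row 17 is assembled from values in rows 10 and 11. Rows 18-25 repeat rows 12-17 and then 12-13 verbatim. Exact repetition of this kind is very unlikely in a learned embedding, so these rows should not be read as evidence of redundancy in the encoding.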
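The observations above can be checked mechanically. This Python sketch takes a few rows copied from the transcription (rows 3, 4, 12, 13, 15, 16, 18 and 19) and performs three checks:

* it reports rows that repeat an earlier row verbatim;
* it tests whether a row is one short block of values repeated;
* it computes the value range.

Rows 3 and 4 hold the largest and smallest values of the full transcription, so this subset yields the same range. The helper names are hypothetical.

```python
# Sketch: checking transcribed rows for verbatim repeats, periodic rows and
# value range. Rows are copied from the transcription; helper names are
# illustrative only.

Row = tuple[float, ...]


def repeated_rows(rows: dict[int, Row]) -> list[tuple[int, int]]:
    """Return (row, earlier_row) pairs where a row repeats an earlier one exactly."""
    first_seen: dict[Row, int] = {}
    repeats: list[tuple[int, int]] = []
    for idx in sorted(rows):
        values = rows[idx]
        if values in first_seen:
            repeats.append((idx, first_seen[values]))
        else:
            first_seen[values] = idx
    return repeats


def is_periodic(values: Row, period: int) -> bool:
    """True if the row is a block of `period` values repeated end to end."""
    return all(values[i] == values[i - period] for i in range(period, len(values)))


def value_range(rows: dict[int, Row]) -> tuple[float, float]:
    """Smallest and largest value across all rows."""
    flat = [v for values in rows.values() for v in values]
    return min(flat), max(flat)


transcribed: dict[int, Row] = {
    3: (0.083253, 0.151286, 0.060360, -0.015364, 0.066124,
        -0.135980, -0.000000, -0.104510, 0.162141, -0.021426),
    4: (-0.122513, -0.065160, -0.222976, -0.119389, -0.129286,
        -0.083588, -0.067528, -0.011884, 0.065383, -0.055442),
    12: (-0.015528, -0.032924, -0.061932, -0.047806, 0.075396,
         0.066631, 0.033306, -0.029724, -0.053272, 0.024916),
    13: (0.024427, 0.053923, 0.043920, 0.020376, 0.063735,
         -0.027015, -0.055526, 0.044270, 0.029558, 0.046839),
    15: (0.045562, 0.027332, 0.065869, 0.045562, 0.027332,
         0.065869, 0.045562, 0.027332, 0.065869, 0.045562),
    16: (-0.083740, -0.043740, -0.083740, -0.043740, -0.083740,
         -0.043740, -0.083740, -0.043740, -0.083740, -0.043740),
    18: (-0.015528, -0.032924, -0.061932, -0.047806, 0.075396,
         0.066631, 0.033306, -0.029724, -0.053272, 0.024916),
    19: (0.024427, 0.053923, 0.043920, 0.020376, 0.063735,
         -0.027015, -0.055526, 0.044270, 0.029558, 0.046839),
}

print(repeated_rows(transcribed))  # [(18, 12), (19, 13)]
print(is_periodic(transcribed[15], 3), is_periodic(transcribed[16], 2))  # True True
print(value_range(transcribed))  # (-0.222976, 0.162141)
```

Running `repeated_rows` on all 25 rows would flag every one of rows 18-25.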

### Interpretation

This diagram depicts the encoding of an input sequence with a LeanCopilot `NativeEncoder`. The encoder is defined by the URL of a model hosted on Hugging Face and by a tokenizer. It maps the input sequence to a numerical representation (the grid of values). As the "retriever" in the model's name suggests, such embeddings serve retrieval and similarity comparison, for example ranking candidate premises by how close their embeddings are to that of the current proof state. The Hugging Face URL indicates that the encoder relies on a pre-trained model. The `#eval encode model` command shows that the encoding can be run and inspected interactively from within a Lean file.
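The retrieval use mentioned above can be sketched as ranking stored embeddings by their similarity to a query embedding. This sketch assumes cosine similarity, a common choice for dense retrieval. It does not claim that LeanCopilot ranks premises with this exact function. The premise names and 3-dimensional vectors are hypothetical toy data, not outputs of the encoder.

```python
import math

# Sketch: dense retrieval by cosine similarity.
# Assumption: cosine similarity as the scoring function; premise names and
# embeddings are hypothetical toy values.

Vector = list[float]


def cosine_similarity(u: Vector, v: Vector) -> float:
    """Cosine of the angle between u and v."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)


def top_k(query: Vector, corpus: dict[str, Vector], k: int) -> list[str]:
    """Names of the k corpus entries most similar to the query, best first."""
    ranked = sorted(corpus, key=lambda name: cosine_similarity(query, corpus[name]),
                    reverse=True)
    return ranked[:k]


# Toy embeddings; real encoder outputs are far longer.
query = [1.0, 0.0, 0.0]
premises = {
    "premise_a": [0.9, 0.1, 0.0],
    "premise_b": [0.0, 1.0, 0.0],
    "premise_c": [0.5, 0.5, 0.7],
}
print(top_k(query, premises, 2))  # ['premise_a', 'premise_c']
```

Cosine similarity ignores vector length and compares only direction. If embeddings are L2-normalized in advance, a plain dot product gives the same ranking more cheaply.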