
## Technical Diagram: LeanCopilot NativeEncoder Workflow

### Overview

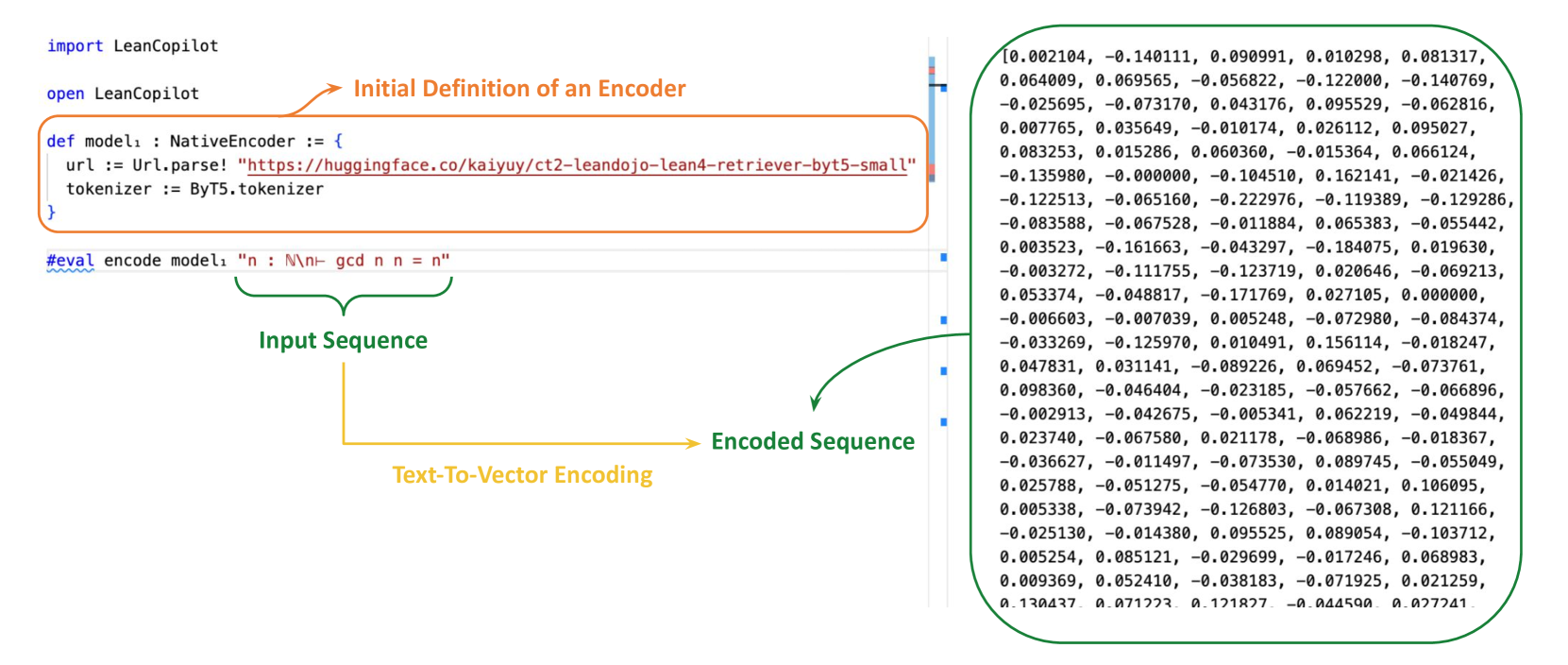

The diagram shows how the `LeanCopilot` library for Lean 4 encodes a text sequence into a numerical vector. An annotated code snippet traces the flow from the model definition to the text-to-vector encoding.

### Components/Axes

The diagram is composed of three main regions:

1. **Header/Code Definition (Top-Left):** Contains Lean 4 code for importing the library and defining an encoder model.

2. **Input/Process Flow (Center):** Shows the evaluation command and uses annotated arrows to explain the transformation.

3. **Output Data (Right):** Displays the resulting encoded vector as a long list of floating-point numbers.

**Annotations & Labels:**

* **Orange Arrow & Label:** Points from the code block defining `model₁` to the text "Initial Definition of an Encoder".

* **Green Brace & Label:** Underlines the input string `"n : ℕ\n- gcd n n = n"` and is labeled "Input Sequence".

* **Yellow Arrow & Label:** Connects the "Input Sequence" to the "Encoded Sequence" and is labeled "Text-To-Vector Encoding".

* **Green Arrow & Label:** Points from the yellow arrow to the numerical output block and is labeled "Encoded Sequence".

### Detailed Analysis

**1. Code Block (Top-Left):**

The code is written in Lean 4.

```lean
import LeanCopilot

open LeanCopilot

def model₁ : NativeEncoder := {
  url := Url.parse! "https://huggingface.co/kaiyuy/ct2-leandojo-lean4-retriever-byt5-small"
  tokenizer := ByT5.tokenizer
}

#eval encode model₁ "n : ℕ\n- gcd n n = n"
```

* **Language:** Lean 4.

* **Key Elements:**

    * `import LeanCopilot` and `open LeanCopilot`: Imports the library and opens its namespace.

    * `def model₁ : NativeEncoder := { ... }`: Defines a model named `model₁` of type `NativeEncoder`.

    * `url := Url.parse! "https://huggingface.co/kaiyuy/ct2-leandojo-lean4-retriever-byt5-small"`: Specifies the model's location on the Hugging Face Hub.

    * `tokenizer := ByT5.tokenizer`: Specifies the tokenizer to use.

    * `#eval encode model₁ "n : ℕ\n- gcd n n = n"`: An evaluation command that encodes the given input string using the defined model.
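The `tokenizer := ByT5.tokenizer` field names ByT5, a model family that splits text into UTF-8 bytes rather than subwords. As a rough sketch of that idea, the snippet below maps each byte to an id by adding an offset of 3, the convention the reference ByT5 tokenizer uses to reserve ids 0 to 2 for special tokens. The helper `byteIds` is hypothetical and is not part of `LeanCopilot`, whose own tokenizer may differ in detail.

```lean
/-- Hypothetical helper (not a LeanCopilot API): ByT5-style ids,
    i.e. each UTF-8 byte value shifted by 3 to skip the special tokens. -/
def byteIds (s : String) : List Nat :=
  s.toUTF8.toList.map (fun b => b.toNat + 3)

-- `ℕ` occupies three UTF-8 bytes, so five characters yield seven ids.
#eval byteIds "n : ℕ"  -- [113, 35, 61, 35, 229, 135, 152]
```

The sketch omits the end-of-sequence token that a full tokenizer would typically append.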

**2. Input Sequence:**

The input text to be encoded is: `"n : ℕ\n- gcd n n = n"`.

* The text has the shape of a Lean proof state: the first line declares a natural number `n`, and the second line states the goal that the greatest common divisor of `n` with itself is `n`.

**3. Encoded Sequence (Output):**

The output is a dense vector of floating-point numbers. The image shows a truncated list, beginning with:

`[0.002104, -0.140111, 0.090991, 0.010298, 0.081317, 0.064009, 0.069565, -0.056822, -0.122000, -0.140769, ...]`

* The list is extensive and continues beyond the visible frame, indicated by the ellipsis `...` at the end of the visible portion.

* The values are a mix of positive and negative decimals, typical of a neural network's embedding output.

### Key Observations

* **Process Clarity:** The diagram effectively uses color-coded annotations (orange, green, yellow) to isolate and explain each stage of the process: definition, input, transformation, and output.

* **Model Specificity:** The encoder points to a specific hosted checkpoint (`ct2-leandojo-lean4-retriever-byt5-small`), whose name suggests a ByT5-small retrieval model for Lean 4.

* **Input Context:** The input sequence is not arbitrary text but a formal mathematical statement, suggesting the encoder's purpose is for processing formal language or proof scripts.

* **Output Nature:** The encoded sequence is a high-dimensional vector, which is the standard numerical representation used for tasks like semantic search, similarity comparison, or as input to other machine learning models.

### Interpretation

This diagram serves as a concrete example of **text-to-vector encoding** within a specialized domain (formal mathematics in Lean 4). It demonstrates the practical application of the `LeanCopilot` library to convert symbolic, human-readable code into a machine-interpretable format.

The workflow implies a larger system where such encoded vectors could be used for:

1. **Retrieval:** Finding similar theorems or proof steps in a large database (as suggested by the model name "retriever").

2. **Semantic Analysis:** Understanding the meaning of code snippets beyond syntax.

3. **Machine Learning Integration:** Feeding formal code representations into neural networks for tasks like automated theorem proving or code suggestion.
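The retrieval use case above can be sketched as a nearest-neighbor search over encoded vectors. The diagram does not say which similarity measure is used, so cosine similarity is an assumption here, as are the hypothetical helper names `dot`, `cosine`, and `nearest` and the choice of `Array Float` to hold the vectors.

```lean
/-- Dot product; extra entries of the longer vector are ignored. -/
def dot (a b : Array Float) : Float :=
  (a.zip b).foldl (fun (acc : Float) (p : Float × Float) => acc + p.1 * p.2) 0.0

/-- Cosine similarity; assumes neither vector is all zeros. -/
def cosine (a b : Array Float) : Float :=
  dot a b / (Float.sqrt (dot a a) * Float.sqrt (dot b b))

/-- Index of the candidate most similar to `query` (0 if there are none). -/
def nearest (query : Array Float) (candidates : Array (Array Float)) : Nat := Id.run do
  let mut bestIdx : Nat := 0
  let mut bestSim : Float := -2.0  -- below the minimum cosine value of -1
  let mut i : Nat := 0
  for c in candidates do
    let s := cosine query c
    if s > bestSim then
      bestIdx := i
      bestSim := s
    i := i + 1
  return bestIdx

-- The second candidate points in nearly the same direction as the query.
#eval nearest #[1.0, 0.0] #[#[0.0, 1.0], #[0.9, 0.1]]  -- 1
```

In a real pipeline the candidate vectors would come from encoding a library of premises ahead of time, and the query vector from encoding the current proof state.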

The ByT5 tokenizer and the Hugging Face-hosted checkpoint indicate a transformer-based encoder: ByT5 is a variant of T5 that operates directly on UTF-8 bytes instead of a learned subword vocabulary. By tracing a single line of Lean code to its output vector, the diagram makes the encoding step concrete.