TECHNICAL ASSET FINGERPRINT

94ee865140eda2af73da7fd0

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

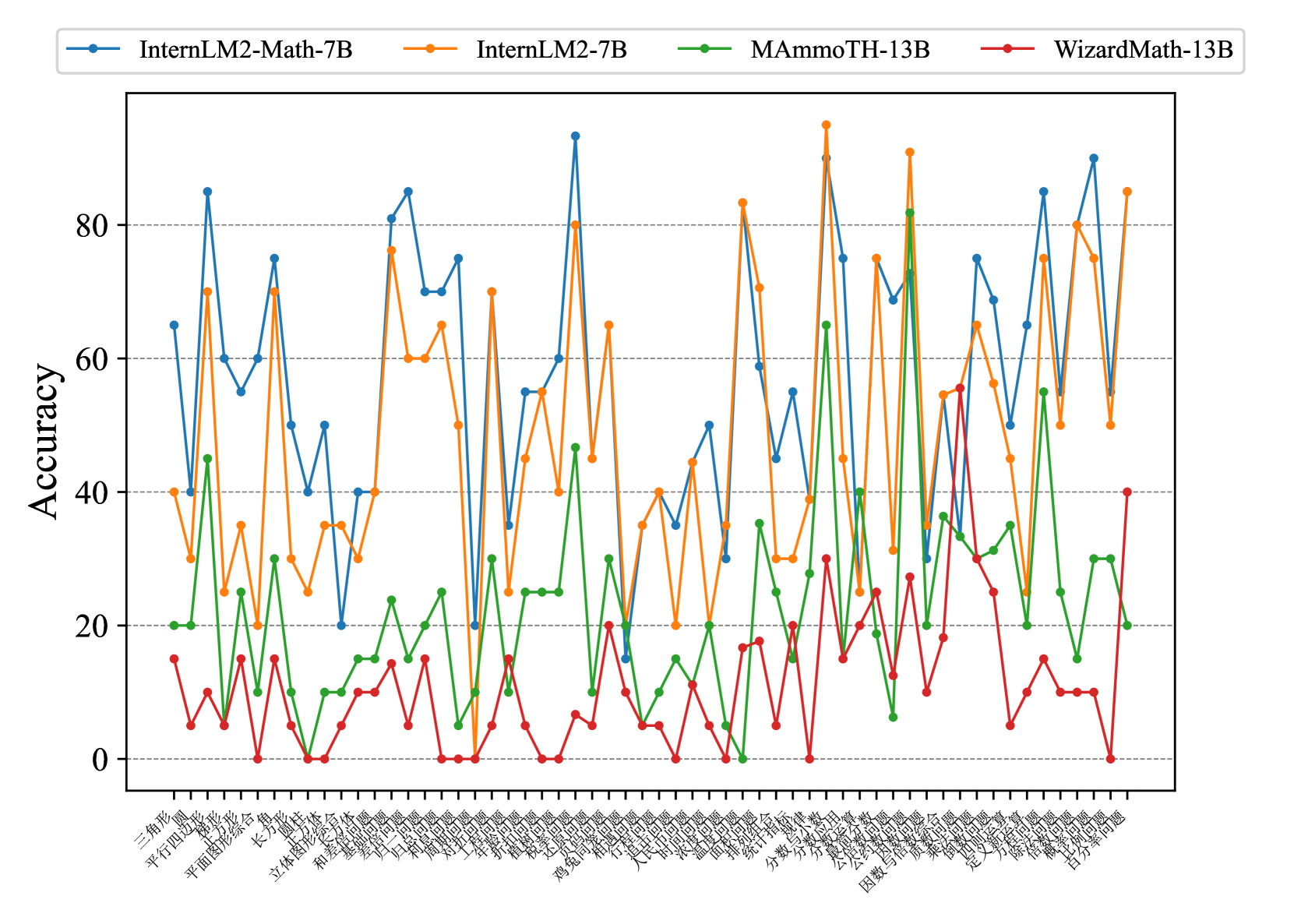

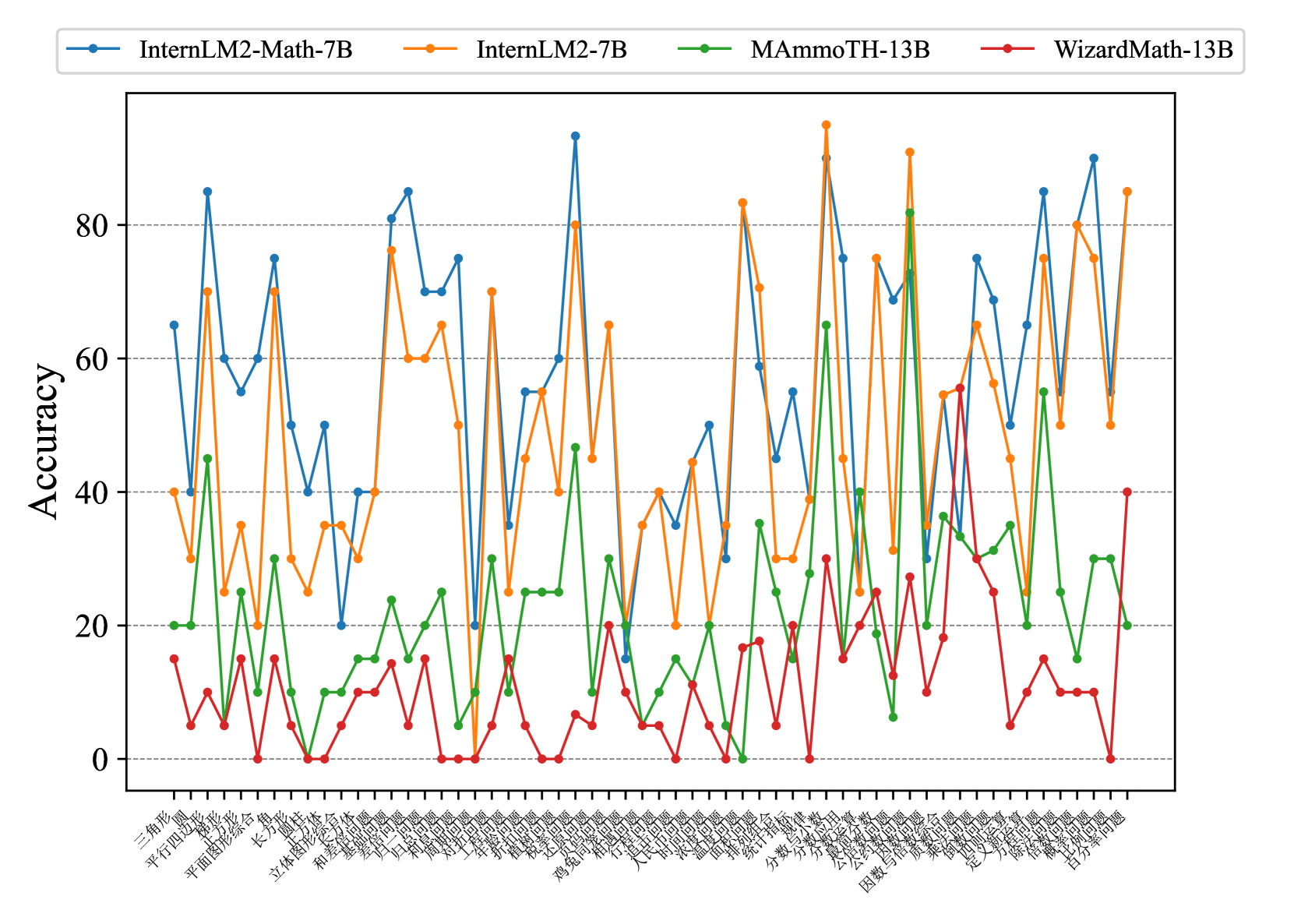

## Line Chart: Model Accuracy on Math Problems

### Overview

The image is a line chart comparing the accuracy of four different language models (InternLM2-Math-7B, InternLM2-7B, MAmmoTH-13B, and WizardMath-13B) on a variety of math problem types. The x-axis represents different types of math problems (in Chinese), and the y-axis represents the accuracy score, ranging from 0 to 80.

### Components/Axes

* **Title:** There is no explicit title on the chart.

* **X-axis:** Represents different types of math problems, labeled in Chinese. The labels are rotated for readability.

    * The labels are: 三角形 (Triangle), 平行四边形 (Parallelogram), 平面图形综合 (Plane figure synthesis), 立体 (Solid), 长方形 (Rectangle), 正方形 (Square), 圆柱 (Cylinder), 圆锥 (Cone), 立体图形综合 (Solid figure synthesis), 和差倍问题 (Sum difference multiple problem), 基础问题 (Basic problem), 平均数问题 (Average problem), 工作问题 (Work problem), 年龄问题 (Age problem), 植树问题 (Tree planting problem), 行程问题 (Travel problem), 鸡兔同笼问题 (Chicken and rabbit in the same cage problem), 浓度问题 (Concentration problem), 面积问题 (Area problem), 统计指标 (Statistical indicators), 分数与小数 (Fractions and decimals), 分数应用题 (Fraction application problems), 公差与公倍数 (Common difference and common multiple), 因数与倍数 (Factors and multiples), 定义新运算 (Define new operation), 比例问题 (Proportion problem), 整数问题 (Integer problem), 除法问题 (Division problem), 概率问题 (Probability problem), 百分数问题 (Percentage problem).

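* **Y-axis:** Represents accuracy, with tick marks from 0 to 80 in increments of 20. Some of the readings listed under "Detailed Analysis" (for example ~84 and ~92) lie above the top tick.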

* **Legend:** Located at the top of the chart.

    * Blue: InternLM2-Math-7B

    * Orange: InternLM2-7B

    * Green: MAmmoTH-13B

    * Red: WizardMath-13B

### Detailed Analysis

Here's a breakdown of each model's performance across the different problem types:

* **InternLM2-Math-7B (Blue):** This model generally performs the best, with accuracy scores frequently above 60. It shows peaks in accuracy for problems like "Triangle" (~64), "Plane figure synthesis" (~84), "Rectangle" (~76), "Solid figure synthesis" (~64), "Average problem" (~76), "Travel problem" (~76), "Area problem" (~76), "Fractions and decimals" (~76), "Percentage problem" (~84). It dips to ~40 for "Cylinder" and "Common difference and common multiple".

* **InternLM2-7B (Orange):** This model's performance is generally lower than InternLM2-Math-7B, but still competitive. It shows peaks in accuracy for problems like "Triangle" (~40), "Plane figure synthesis" (~72), "Rectangle" (~60), "Solid figure synthesis" (~40), "Average problem" (~60), "Travel problem" (~92), "Area problem" (~60), "Fractions and decimals" (~60), "Percentage problem" (~80). It dips to ~32 for "Cylinder" and "Common difference and common multiple".

* **MAmmoTH-13B (Green):** This model's performance is generally lower than that of the two InternLM2 models, with accuracy scores mostly below 40. It shows peaks in accuracy for problems like "Triangle" (~24), "Plane figure synthesis" (~20), "Rectangle" (~24), "Solid figure synthesis" (~24), "Average problem" (~24), "Travel problem" (~44), "Area problem" (~32), "Fractions and decimals" (~32), "Percentage problem" (~28). It dips to ~0 for "Cylinder" and "Common difference and common multiple".

* **WizardMath-13B (Red):** This model generally has the lowest accuracy scores, often near or at 0, although its "Percentage problem" reading (~32) is slightly above MAmmoTH-13B's (~28). It shows peaks in accuracy for problems like "Triangle" (~12), "Plane figure synthesis" (~16), "Rectangle" (~12), "Solid figure synthesis" (~8), "Average problem" (~12), "Travel problem" (~20), "Area problem" (~16), "Fractions and decimals" (~24), "Percentage problem" (~32). It dips to ~0 for "Cylinder" and "Common difference and common multiple".
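The comparison in these bullets can be checked with a few lines of Python. The sketch below is only illustrative: it uses the nine approximate peak readings quoted above (not the chart's underlying data, and not the "Cylinder" or "Common difference and common multiple" dips), and the names `CATEGORIES`, `READINGS`, `mean_accuracy`, `rank_models`, and `best_model_per_category` are hypothetical helpers introduced here.

```python
from statistics import mean

# Approximate peak readings copied from the bullets above.
# These are eyeballed values for nine categories, not exact chart data.
CATEGORIES = [
    "Triangle", "Plane figure synthesis", "Rectangle",
    "Solid figure synthesis", "Average problem", "Travel problem",
    "Area problem", "Fractions and decimals", "Percentage problem",
]

READINGS = {
    "InternLM2-Math-7B": [64, 84, 76, 64, 76, 76, 76, 76, 84],
    "InternLM2-7B": [40, 72, 60, 40, 60, 92, 60, 60, 80],
    "MAmmoTH-13B": [24, 20, 24, 24, 24, 44, 32, 32, 28],
    "WizardMath-13B": [12, 16, 12, 8, 12, 20, 16, 24, 32],
}


def mean_accuracy(readings):
    """Average each model's readings over the listed categories."""
    return {model: mean(values) for model, values in readings.items()}


def rank_models(readings):
    """Return model names ordered from highest to lowest mean reading."""
    means = mean_accuracy(readings)
    return sorted(means, key=means.get, reverse=True)


def best_model_per_category(categories, readings):
    """Map each category to the model with the highest reading for it."""
    return {
        category: max(readings, key=lambda model: readings[model][i])
        for i, category in enumerate(categories)
    }


if __name__ == "__main__":
    means = mean_accuracy(READINGS)
    for model in rank_models(READINGS):
        print(f"{model}: {means[model]:.1f}")
    print(best_model_per_category(CATEGORIES, READINGS))
```

On these readings the per-category winner is not always the same model, which is why the ranking by mean and the per-category view are kept as separate helpers.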

### Key Observations

* InternLM2-Math-7B generally outperforms the other models.

* WizardMath-13B shows the lowest accuracy on most problem types.

* All models struggle with certain problem types, particularly those related to "Cylinder" and "Common difference and common multiple".

* There is significant variance in performance across different problem types for all models.

### Interpretation

The chart suggests that the InternLM2-Math-7B model is the most effective at solving a variety of math problems compared to the other models tested. The significant performance differences across problem types indicate that the models may have varying strengths and weaknesses depending on the specific mathematical concepts involved. The consistently low performance of WizardMath-13B suggests it may require further training or optimization for these types of problems. The common difficulty across all models with "Cylinder" and "Common difference and common multiple" problems could indicate a need for improved training data or model architecture to handle these specific mathematical concepts.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Math Problem Accuracy by Model

### Overview

This image presents a line chart comparing the accuracy of four different language models – InternLM2-Math-7B, InternLM2-7B, MAmmoTH-13B, and WizardMath-13B – across a series of math problems. The x-axis represents the math problems (in Chinese characters), and the y-axis represents the accuracy, ranging from 0 to 80.

### Components/Axes

* **Y-axis Title:** Accuracy

* **X-axis Title:** (Chinese characters representing math problem types - see "Detailed Analysis" for approximate translations)

* **Legend:** Located at the top-center of the chart.

    * InternLM2-Math-7B (Blue line with circle markers)

    * InternLM2-7B (Orange line with circle markers)

    * MAmmoTH-13B (Green line with circle markers)

    * WizardMath-13B (Red line with circle markers)

* **Scale:** Y-axis is scaled from 0 to 80, with increments of 10.

* **X-axis Markers:** Numerous Chinese characters representing different math problem types.

### Detailed Analysis

The chart displays accuracy scores for each model across a range of math problems. Due to the Chinese characters on the x-axis, precise problem names are difficult to determine without translation. However, based on visual grouping and common math topics, approximate translations are:

1. 三角函数 (Trigonometric Functions)

2. 平均问题 (Average Problems)

3. 平面向量 (Plane Vectors)

4. 立体几何 (Solid Geometry)

5. 长方体 (Rectangular Prism)

6. 和差倍问题 (Sum-Difference-Multiple Problems)

7. 方程组 (System of Equations)

8. 不等式 (Inequalities)

9. 数列 (Sequences)

10. 极限 (Limits)

11. 导数 (Derivatives)

12. 函数 (Functions)

13. 概率 (Probability)

14. 统计 (Statistics)

15. 组合 (Combinations)

16. 计数 (Counting)

17. 逻辑 (Logic)

18. 几何 (Geometry)

19. 面积 (Area)

20. 体积 (Volume)

21. 角度 (Angles)

22. 比例 (Proportions)

23. 百分数 (Percentages)

24. 混合 (Mixed)

Here's a breakdown of the trends and approximate accuracy values for each model:

* **InternLM2-Math-7B (Blue):** Starts around 65, dips to ~40, rises to a peak of ~85, fluctuates between 50-80 for the majority of the problems, and ends around 70.

* **InternLM2-7B (Orange):** Starts around 40, generally stays between 20-50, with a peak around 85 at problem 16 (计数/Counting). Ends around 30.

* **MAmmoTH-13B (Green):** Starts around 20, fluctuates between 10-30, with occasional spikes up to ~40. Ends around 20.

* **WizardMath-13B (Red):** Starts around 0, generally stays between 0-20, with a few peaks around 30-40. Ends around 10.

### Key Observations

* InternLM2-Math-7B consistently outperforms the other models across most problem types, achieving the highest accuracy scores.

* InternLM2-7B shows a significant peak in accuracy for the "Counting" problem (problem 16).

* MAmmoTH-13B and WizardMath-13B exhibit relatively low and stable accuracy scores throughout the chart.

* There is considerable fluctuation in accuracy for all models, suggesting sensitivity to the specific problem type.

### Interpretation

The data suggests that InternLM2-Math-7B is the most capable model for solving a diverse range of math problems, as indicated by its consistently higher accuracy scores. The peak in InternLM2-7B's accuracy for "Counting" problems might indicate a specific strength in combinatorial reasoning. The lower performance of MAmmoTH-13B and WizardMath-13B could be due to their architecture or training data. The fluctuations in accuracy across all models highlight the challenges of math problem-solving and the importance of model robustness. The chart provides a comparative performance assessment of these models, which can inform model selection for specific math-related tasks. The use of Chinese characters for the problem types suggests the models were likely evaluated on a dataset tailored to a Chinese-speaking audience or focused on mathematical concepts commonly taught in Chinese education systems.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Accuracy Comparison of Four AI Models Across Math Problem Categories

### Overview

This image is a line chart comparing the performance (accuracy) of four different large language models on a wide variety of math problem categories. The chart displays accuracy percentages on the y-axis against a dense list of specific math topics on the x-axis. The data reveals significant variability in model performance across different types of mathematical problems.

### Components/Axes

* **Chart Type:** Multi-series line chart.

* **Y-Axis:**

    * **Label:** "Accuracy"

    * **Scale:** Linear, ranging from 0 to approximately 95 (implied by the highest data point).

    * **Major Gridlines:** Horizontal dashed lines at 0, 20, 40, 60, and 80.

* **X-Axis:**

    * **Label:** Not explicitly labeled, but contains a dense list of math problem categories.

    * **Categories (Transcribed from Chinese, left to right):**

        1. 三角形 (Triangle)

        2. 圆 (Circle)

        3. 平行四边形 (Parallelogram)

        4. 梯形 (Trapezoid)

        5. 长方形 (Rectangle)

        6. 平面图形综合 (Plane Figure Synthesis)

        7. 角 (Angle)

        8. 长方体 (Cuboid)

        9. 正方体 (Cube)

        10. 立体图形综合 (Solid Figure Synthesis)

        11. 和差倍问题 (Sum-Difference-Multiple Problem)

        12. 盈亏问题 (Surplus-Deficit Problem)

        13. 归一问题 (Unitary Method Problem)

        14. 归总问题 (Total Method Problem)

        15. 和倍问题 (Sum-Multiple Problem)

        16. 差倍问题 (Difference-Multiple Problem)

        17. 植树问题 (Tree Planting Problem)

        18. 年龄问题 (Age Problem)

        19. 鸡兔同笼 (Chicken and Rabbit in the Same Cage)

        20. 行程问题 (Travel Problem)

        21. 工程问题 (Work Problem)

        22. 浓度问题 (Concentration Problem)

        23. 经济问题 (Economic Problem)

        24. 数列与数表 (Sequence and Number Table)

        25. 排列组合 (Permutation and Combination)

        26. 统计与概率 (Statistics and Probability)

        27. 分数应用题 (Fraction Application Problem)

        28. 分数百分数 (Fraction Percentage)

        29. 公式计算 (Formula Calculation)

        30. 公因数与公倍数 (Common Factor and Common Multiple)

        31. 因数与倍数 (Factor and Multiple)

        32. 质数合数 (Prime and Composite Numbers)

        33. 定义新运算 (New Operation Definition)

        34. 方程与方程组 (Equation and System of Equations)

        35. 除法与减法 (Division and Subtraction)

        36. 模糊问题 (Fuzzy Problem)

        37. 百分率问题 (Percentage Rate Problem)

* **Legend:** Located at the top center of the chart.

    * **Blue line with circle markers:** InternLM2-Math-7B

    * **Orange line with circle markers:** InternLM2-7B

    * **Green line with circle markers:** MAmmoTH-13B

    * **Red line with circle markers:** WizardMath-13B

### Detailed Analysis

**Trend Verification & Data Points (Approximate):**

* **InternLM2-Math-7B (Blue):** Exhibits a highly volatile, "spiky" trend with frequent, sharp peaks and troughs. It achieves the highest overall accuracy on the chart.

    * **Notable Peaks:** ~85% (三角形/Triangle), ~85% (和差倍问题/Sum-Difference-Multiple), ~92% (植树问题/Tree Planting), ~88% (排列组合/Permutation and Combination), ~85% (因数与倍数/Factor and Multiple).

    * **Notable Troughs:** ~20% (长方体/Cuboid), ~15% (鸡兔同笼/Chicken and Rabbit), ~30% (行程问题/Travel Problem), ~20% (分数百分数/Fraction Percentage).

* **InternLM2-7B (Orange):** Also shows high volatility, often tracking closely with the blue line but generally at a slightly lower accuracy level, with some exceptions.

    * **Notable Peaks:** ~95% (植树问题/Tree Planting - highest point on chart), ~83% (经济问题/Economic Problem), ~90% (排列组合/Permutation and Combination), ~85% (百分率问题/Percentage Rate Problem).

    * **Notable Troughs:** ~20% (梯形/Trapezoid), ~25% (归一问题/Unitary Method), ~20% (鸡兔同笼/Chicken and Rabbit), ~25% (方程与方程组/Equation).

* **MAmmoTH-13B (Green):** Shows a less volatile but consistently lower performance trend compared to the blue and orange lines. Its accuracy rarely exceeds 60%.

    * **Notable Peaks:** ~65% (排列组合/Permutation and Combination), ~55% (因数与倍数/Factor and Multiple), ~45% (圆/Circle).

    * **Notable Troughs:** ~0% (长方形/Rectangle, 鸡兔同笼/Chicken and Rabbit, 统计与概率/Statistics and Probability), ~5% (和差倍问题/Sum-Difference-Multiple).

* **WizardMath-13B (Red):** Demonstrates the lowest overall performance, with accuracy frequently at or near 0% for many categories. Its highest points are modest.

    * **Notable Peaks:** ~40% (百分率问题/Percentage Rate Problem), ~30% (排列组合/Permutation and Combination), ~25% (公式计算/Formula Calculation).

    * **Notable Troughs:** 0% (observed for at least 10 categories including 长方形/Rectangle, 盈亏问题/Surplus-Deficit, 鸡兔同笼/Chicken and Rabbit, 统计与概率/Statistics and Probability).

### Key Observations

1. **Performance Hierarchy:** A clear performance hierarchy is visible: InternLM2-Math-7B (Blue) and InternLM2-7B (Orange) are the top performers, followed by MAmmoTH-13B (Green), with WizardMath-13B (Red) performing the worst.

2. **Problem-Specific Strengths/Weaknesses:** All models show extreme variability. No single model dominates all categories. For example, the "Tree Planting" (植树问题) category sees peak performance for both top models, while "Chicken and Rabbit in the Same Cage" (鸡兔同笼) is a major weakness for all.

3. **Correlation:** The blue and orange lines (both InternLM2 variants) show a strong positive correlation in their peaks and troughs, suggesting similar underlying strengths and weaknesses despite the "Math" fine-tuning of the blue model.

4. **Zero-Performance Categories:** WizardMath-13B (Red) and MAmmoTH-13B (Green) hit 0% accuracy on multiple categories, indicating a complete failure to solve those problem types in this evaluation.
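The "strong positive correlation" noted in observation 3 can be put on a rough numerical footing with a Pearson coefficient. This expert's own readings list peaks and troughs for different categories per model, so the sketch below instead pairs the nine approximate readings that the gemini-2.0-flash description gives for both InternLM2 variants. That pairing is an assumption made for illustration, and `MATH_7B`, `BASE_7B`, and `pearson` are hypothetical names.

```python
from math import sqrt

# Paired approximate readings for the same nine categories, taken from the
# gemini-2.0-flash description earlier in this document (eyeballed values).
MATH_7B = [64, 84, 76, 64, 76, 76, 76, 76, 84]   # InternLM2-Math-7B (blue)
BASE_7B = [40, 72, 60, 40, 60, 92, 60, 60, 80]   # InternLM2-7B (orange)


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of cross-products and squared deviations about the means.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / sqrt(sxx * syy)


if __name__ == "__main__":
    print(f"Pearson r (blue vs. orange): {pearson(MATH_7B, BASE_7B):.2f}")
```

Because the sample is small and consists only of categories picked out as peaks, the coefficient should be read as a sanity check on the visual impression rather than as a measurement of the full chart.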

### Interpretation

This chart provides a granular diagnostic of model capabilities across the spectrum of elementary to middle school mathematics. The data suggests that:

* **Specialization Matters:** The "InternLM2-Math-7B" model, presumably fine-tuned for math, does not consistently outperform its base "InternLM2-7B" counterpart. Their performance is intertwined, indicating that general capability may be as important as specialized tuning for this broad set of tasks.

* **The "Long Tail" of Math Problems:** The extreme volatility highlights that AI math proficiency is not monolithic. Models have "blind spots" (e.g., classic puzzles like "Chicken and Rabbit") and areas of relative strength (e.g., combinatorics, percentage problems). This implies that benchmarking on a narrow set of problems can be misleading.

* **Model Robustness:** The consistent underperformance and frequent 0% scores of WizardMath-13B and MAmmoTH-13B suggest they may lack the robustness or foundational reasoning skills required for this diverse problem set compared to the InternLM2 family.

* **Investigative Insight (Peircean):** The chart acts as a **diagnostic index**. The shared troughs (like "Chicken and Rabbit") point to a class of problems that remain a fundamental challenge for current LLMs, possibly due to their reliance on specific logical framing or algebraic translation that models struggle with. The shared peaks suggest problem types that are more amenable to pattern recognition or have been well-represented in training data. The divergence between the two top models on specific categories could be a fruitful area for investigating the effects of math-specific fine-tuning.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart: Model Accuracy Across Question Categories

### Overview

The image is a line chart comparing the accuracy of four AI models across more than 30 question categories (x-axis), with accuracy in percent on the y-axis. Each model is drawn as its own line, and performance varies significantly across categories.

### Components/Axes

- **X-axis**: Labeled with Chinese characters representing question categories (e.g., "三角形面积" (triangle area), "平行四边形性质" (parallelogram properties), "长方形周长" (rectangle perimeter)). Categories are densely packed and have no English labels.

- **Y-axis**: Labeled "Accuracy" with a scale from 0 to 100, marked at 20-unit intervals.

- **Legend**: Positioned at the top-right, mapping colors to models:

  - **Blue**: InternLM2-Math-7B

  - **Orange**: InternLM2-7B

  - **Green**: MAmmoTH-13B

  - **Red**: WizardMath-13B

### Detailed Analysis

1. **InternLM2-Math-7B (Blue Line)**:

   - **Trend**: Dominates with the highest peaks (up to ~90%) and most consistent performance.

   - **Key Data Points**:

     - Peaks at ~90% for categories like "三角形面积" (triangle area) and "长方形周长" (rectangle perimeter).

     - Dips below 60% for categories like "平行四边形性质" (parallelogram properties) and "立体图形体积" (solid figure volume).

2. **InternLM2-7B (Orange Line)**:

   - **Trend**: Second-highest performance, peaking ~85% but with sharper fluctuations.

   - **Key Data Points**:

     - Peaks at ~85% for "平行四边形性质" (parallelogram properties) and "立体图形体积" (solid figure volume).

     - Drops to ~30% for "平行四边形性质" (parallelogram properties) and "长方形周长" (rectangle perimeter).

3. **MAmmoTH-13B (Green Line)**:

   - **Trend**: Moderate performance, peaking ~80% but with significant dips.

   - **Key Data Points**:

     - Peaks at ~80% for "平行四边形性质" (parallelogram properties) and "立体图形体积" (solid figure volume).

     - Drops to ~20% for "平行四边形性质" (parallelogram properties) and "长方形周长" (rectangle perimeter).

4. **WizardMath-13B (Red Line)**:

   - **Trend**: Lowest performance, peaking ~40% with erratic fluctuations.

   - **Key Data Points**:

     - Peaks at ~40% for "平行四边形性质" (parallelogram properties) and "立体图形体积" (solid figure volume).

     - Drops to ~0% for "平行四边形性质" (parallelogram properties) and "长方形周长" (rectangle perimeter).

### Key Observations

- **Performance Variability**: All models show category-specific strengths/weaknesses. For example:

  - InternLM2-Math-7B excels in geometry-related categories (e.g., "三角形面积" (triangle area)).

  - WizardMath-13B struggles with most categories, suggesting limited training in these areas.

- **Model Specialization**: InternLM2-Math-7B’s consistent high performance implies optimization for mathematical reasoning, while others may lack specialization.

- **Outliers**: The red line (WizardMath-13B) has the most erratic pattern, with sharp drops to 0% in multiple categories.

### Interpretation

The chart highlights that model performance is highly dependent on question category. InternLM2-Math-7B’s dominance suggests it was specifically trained for mathematical tasks, while other models (e.g., WizardMath-13B) may have been fine-tuned for narrower domains. The variability underscores the importance of domain-specific training in AI systems. The red line’s extreme fluctuations could indicate overfitting or insufficient data for certain categories.
