## Line Chart: Math Problem Accuracy by Model

### Overview

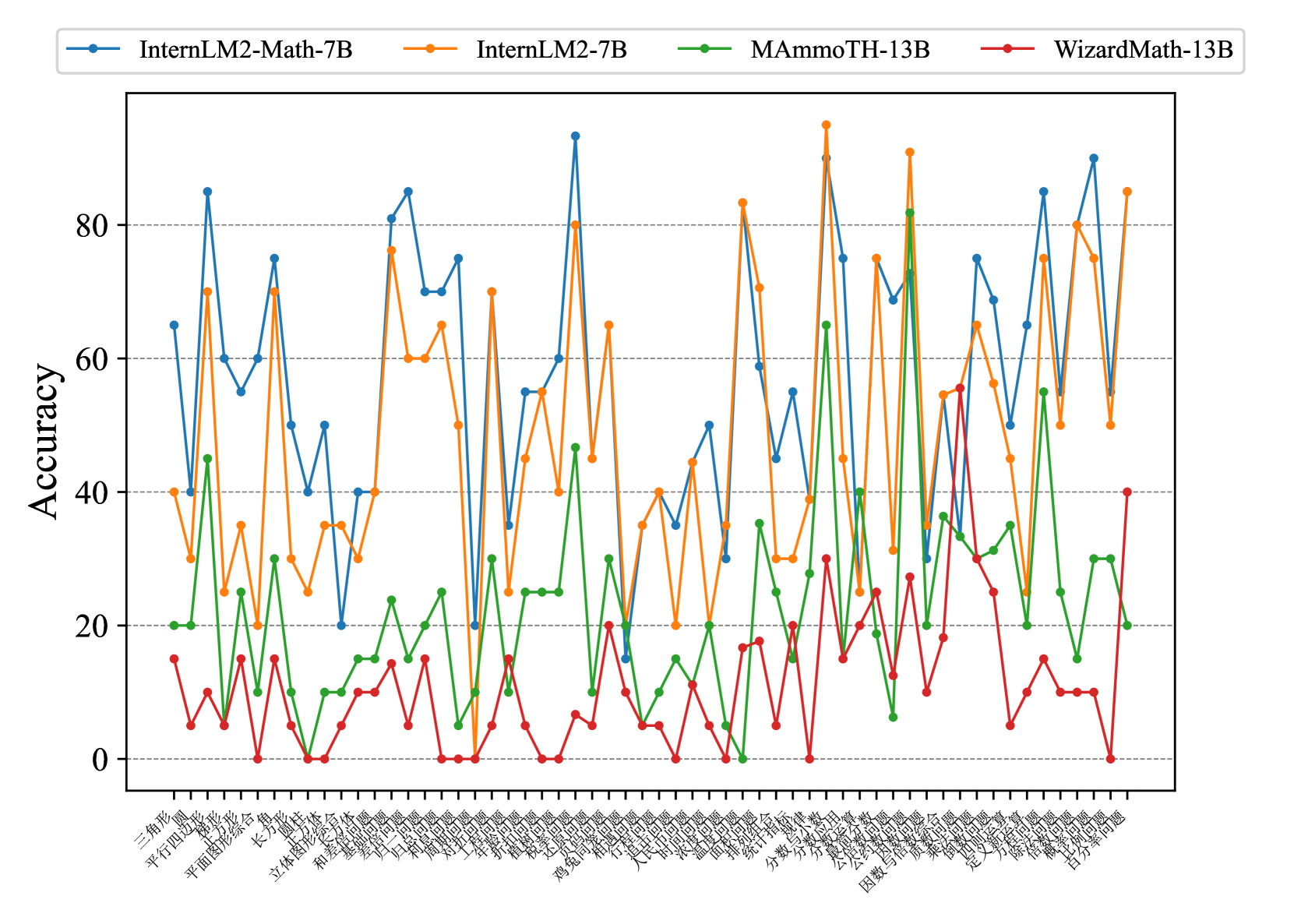

This image presents a line chart comparing the accuracy of four language models (InternLM2-Math-7B, InternLM2-7B, MAmmoTH-13B, and WizardMath-13B) across a series of math problem types. The x-axis lists the problem types, labeled in Chinese, and the y-axis shows accuracy, with tick labels from 0 to 80.

### Components/Axes

* **Y-axis Title:** Accuracy

* **X-axis Title:** Chinese labels naming math problem types (approximate translations are listed under "Detailed Analysis")

* **Legend:** Located at the top-center of the chart.

  * InternLM2-Math-7B (Blue line with circle markers)

  * InternLM2-7B (Orange line with circle markers)

  * MAmmoTH-13B (Green line with circle markers)

  * WizardMath-13B (Red line with circle markers)

* **Scale:** Y-axis is scaled from 0 to 80, with increments of 10.

* **X-axis Markers:** Numerous Chinese characters representing different math problem types.

### Detailed Analysis

The chart displays accuracy scores for each model across a range of math problem types. The x-axis labels are in Chinese, so the list below gives approximate readings of the labels with English translations. It is based on visual grouping and common math topics and should be treated as tentative:

1. 三角函数 (Trigonometric Functions)

2. 平均问题 (Average Problems)

3. 平面向量 (Plane Vectors)

4. 立体几何 (Solid Geometry)

5. 长方体 (Rectangular Prism)

6. 和差倍问题 (Sum-Difference-Multiple Problems)

7. 方程组 (System of Equations)

8. 不等式 (Inequalities)

9. 数列 (Sequences)

10. 极限 (Limits)

11. 导数 (Derivatives)

12. 函数 (Functions)

13. 概率 (Probability)

14. 统计 (Statistics)

15. 组合 (Combinations)

16. 计数 (Counting)

17. 逻辑 (Logic)

18. 几何 (Geometry)

19. 面积 (Area)

20. 体积 (Volume)

21. 角度 (Angles)

22. 比例 (Proportions)

23. 百分数 (Percentages)

24. 混合 (Mixed)

Here's a breakdown of the trends and approximate accuracy values for each model:

* **InternLM2-Math-7B (Blue):** Starts around 65, dips to ~40, rises to a peak of ~85, fluctuates between 50 and 80 for the majority of the problem types, and ends around 70.

* **InternLM2-7B (Orange):** Starts around 40 and generally stays between 20 and 50, with a peak of ~85 at problem 16 (计数/Counting). Ends around 30.

* **MAmmoTH-13B (Green):** Starts around 20 and fluctuates between 10 and 30, with occasional spikes up to ~40. Ends around 20.

* **WizardMath-13B (Red):** Starts around 0 and generally stays between 0 and 20, with a few peaks around 30-40. Ends around 10.
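The approximate readings above can be recorded in a small structure so that the comparisons in the following sections are easy to check. The sketch below is illustrative only: the numbers are the rough start, end, and typical-band values quoted in the bullets (visual estimates, not values extracted from the underlying data), and the `Reading` class and the `band_midpoint` and `rank_by_band_midpoint` helpers are names introduced here for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    """Rough values read off the chart for one model (all approximate)."""
    start: float      # accuracy at the first problem type
    end: float        # accuracy at the last problem type
    band_low: float   # lower edge of the band the line mostly stays in
    band_high: float  # upper edge of that band


# Values copied from the bullet list above; they are visual estimates only.
READINGS = {
    "InternLM2-Math-7B": Reading(start=65, end=70, band_low=50, band_high=80),
    "InternLM2-7B": Reading(start=40, end=30, band_low=20, band_high=50),
    "MAmmoTH-13B": Reading(start=20, end=20, band_low=10, band_high=30),
    "WizardMath-13B": Reading(start=0, end=10, band_low=0, band_high=20),
}


def band_midpoint(reading: Reading) -> float:
    """Midpoint of the typical band, used as a crude 'typical accuracy'."""
    return (reading.band_low + reading.band_high) / 2


def rank_by_band_midpoint(readings: dict) -> list:
    """Model names ordered from highest to lowest typical accuracy."""
    return sorted(readings, key=lambda name: band_midpoint(readings[name]), reverse=True)


ranking = rank_by_band_midpoint(READINGS)
```

With these estimates, `ranking` lists InternLM2-Math-7B first, followed by InternLM2-7B, MAmmoTH-13B, and WizardMath-13B. The band midpoint is a deliberately coarse summary; it ignores individual peaks such as the one at problem 16.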

### Key Observations

* InternLM2-Math-7B achieves the highest accuracy on most problem types. The clearest exception is "Counting" (problem 16), where InternLM2-7B peaks at about 85.

* InternLM2-7B shows a significant peak in accuracy for the "Counting" problem (problem 16).

* MAmmoTH-13B and WizardMath-13B remain in a low band for most problem types (roughly 10-30 and 0-20, respectively), with occasional spikes to about 30-40.

* Accuracy fluctuates across problem types for all four models, which suggests sensitivity to the specific problem type.
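One way to make "fluctuation" and "outperforms" concrete is to compute, for each model, the spread of its per-type accuracy and the problem types where another model beats the usual leader. The sketch below assumes per-type scores are available as a nested dictionary. The numbers in `scores` are hypothetical placeholders chosen only to exercise the functions (they are not read from the chart), and `spread` and `upsets` are names introduced here.

```python
from statistics import pstdev

# HYPOTHETICAL per-type accuracies for illustration; not values from the chart.
scores = {
    "InternLM2-Math-7B": {"Sequences": 60.0, "Counting": 70.0, "Area": 80.0},
    "InternLM2-7B": {"Sequences": 30.0, "Counting": 85.0, "Area": 35.0},
}


def spread(per_type: dict) -> float:
    """Population standard deviation of one model's accuracy across problem types."""
    return pstdev(list(per_type.values()))


def upsets(all_scores: dict, leader: str) -> list:
    """(problem type, model) pairs where a model scores above the leader."""
    found = []
    for model, per_type in all_scores.items():
        if model == leader:
            continue
        for problem_type, accuracy in per_type.items():
            if accuracy > all_scores[leader][problem_type]:
                found.append((problem_type, model))
    return found


leader_upsets = upsets(scores, leader="InternLM2-Math-7B")
```

A larger `spread` means accuracy depends more strongly on the problem type, and a non-empty `upsets` result marks the problem types where "highest on most types" does not hold.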

### Interpretation

The data suggests that InternLM2-Math-7B is the most capable of the four models across this range of math problems, since it scores highest on most problem types. InternLM2-7B's peak on "Counting" problems might indicate a specific strength in combinatorial reasoning, though a single spike is weak evidence. MAmmoTH-13B and WizardMath-13B are the two larger models in the comparison (13B versus 7B parameters), so their lower accuracy is not explained by model size. Differences in training data or architecture are possible causes, but the chart alone cannot distinguish between them. The variation in accuracy across problem types for every model shows that performance depends heavily on the topic, so a single aggregate score would hide substantial differences. The chart can therefore inform model selection for specific math-related tasks. The Chinese problem-type labels suggest the models were likely evaluated on a dataset tailored to a Chinese-speaking audience or focused on mathematical concepts commonly taught in Chinese education systems.