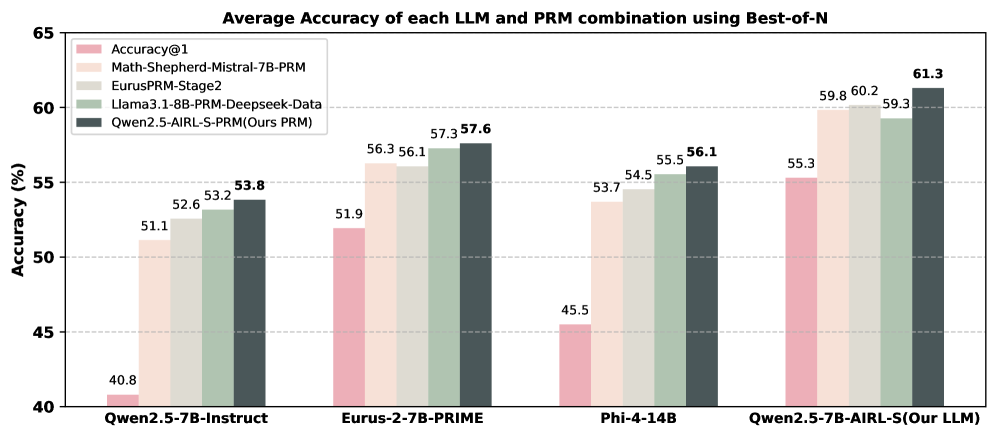

## Bar Chart: Average Accuracy of each LLM and PRM combination using Best-of-N

### Overview

This is a grouped bar chart comparing four Large Language Models (LLMs), each evaluated under five conditions: an `Accuracy@1` baseline and "Best-of-N" selection with four different Process Reward Models (PRMs). The performance metric is average accuracy in percent. The chart shows how much Best-of-N with a PRM improves each base LLM over its baseline, and how that improvement varies with the choice of PRM.

### Components/Axes

* **Chart Title:** "Average Accuracy of each LLM and PRM combination using Best-of-N"

* **Y-Axis:** Labeled "Accuracy (%)". The scale runs from 40 to 65, with major gridlines at intervals of 5% (40, 45, 50, 55, 60, 65).

* **X-Axis:** Lists four distinct LLM models, which form the primary groups:

    1. `Qwen2.5-7B-Instruct`

    2. `Eurus-2-7B-PRIME`

    3. `Phi-4-14B`

    4. `Qwen2.5-7B-AIRL-S(Our LLM)`

* **Legend:** Located in the top-left corner of the plot area. It defines five data series (one baseline and four PRMs), each associated with a specific color:

    * **Pink:** `Accuracy@1`

    * **Light Beige:** `Math-Shepherd-Mistral-7B-PRM`

    * **Light Gray:** `EurusPRM-Stage2`

    * **Light Green:** `Llama3.1-8B-PRM-Deepseek-Data`

    * **Dark Gray:** `Qwen2.5-AIRL-S-PRM(Ours PRM)`

### Detailed Analysis

The chart displays five bars for each of the four LLM groups. The values are annotated on top of each bar.

**1. Group: Qwen2.5-7B-Instruct**

* **Trend:** Accuracy increases progressively from the baseline `Accuracy@1` to the advanced PRMs.

* **Data Points:**

    * Accuracy@1 (Pink): **40.8%**

    * Math-Shepherd-Mistral-7B-PRM (Light Beige): **51.1%**

    * EurusPRM-Stage2 (Light Gray): **52.6%**

    * Llama3.1-8B-PRM-Deepseek-Data (Light Green): **53.2%**

    * Qwen2.5-AIRL-S-PRM (Dark Gray): **53.8%**

**2. Group: Eurus-2-7B-PRIME**

* **Trend:** Broadly upward, with one small dip at `EurusPRM-Stage2`. The gap between the baseline and the best PRM (5.7 points) is much smaller than in the first group (13.0 points).

* **Data Points:**

    * Accuracy@1 (Pink): **51.9%**

    * Math-Shepherd-Mistral-7B-PRM (Light Beige): **56.3%**

    * EurusPRM-Stage2 (Light Gray): **56.1%** *(Note: Slightly lower than the previous bar)*

    * Llama3.1-8B-PRM-Deepseek-Data (Light Green): **57.3%**

    * Qwen2.5-AIRL-S-PRM (Dark Gray): **57.6%**

**3. Group: Phi-4-14B**

* **Trend:** A clear, steady increase in accuracy across the PRM sequence.

* **Data Points:**

    * Accuracy@1 (Pink): **45.5%**

    * Math-Shepherd-Mistral-7B-PRM (Light Beige): **53.7%**

    * EurusPRM-Stage2 (Light Gray): **54.5%**

    * Llama3.1-8B-PRM-Deepseek-Data (Light Green): **55.5%**

    * Qwen2.5-AIRL-S-PRM (Dark Gray): **56.1%**

**4. Group: Qwen2.5-7B-AIRL-S(Our LLM)**

* **Trend:** This group shows the highest accuracy for every series. The trend is upward overall but not monotonic: `Llama3.1-8B-PRM-Deepseek-Data` dips below the two preceding PRMs, followed by a 2.0-point rise to the final PRM.

* **Data Points:**

    * Accuracy@1 (Pink): **55.3%**

    * Math-Shepherd-Mistral-7B-PRM (Light Beige): **59.8%**

    * EurusPRM-Stage2 (Light Gray): **60.2%**

    * Llama3.1-8B-PRM-Deepseek-Data (Light Green): **59.3%** *(Note: Slight dip compared to previous bar)*

    * Qwen2.5-AIRL-S-PRM (Dark Gray): **61.3%**
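
For readers who want to work with these numbers directly, the annotated values can be transcribed into a small table. The sketch below is illustrative only: the names `SERIES`, `ACCURACY`, and `extremes` are assumptions introduced here, and the values are copied from the data points listed above rather than from any released results file.

```python
# Bar annotations transcribed from the chart (accuracy in %).
# Column order follows the legend order.
SERIES = [
    "Accuracy@1",
    "Math-Shepherd-Mistral-7B-PRM",
    "EurusPRM-Stage2",
    "Llama3.1-8B-PRM-Deepseek-Data",
    "Qwen2.5-AIRL-S-PRM",
]
ACCURACY = {
    "Qwen2.5-7B-Instruct": [40.8, 51.1, 52.6, 53.2, 53.8],
    "Eurus-2-7B-PRIME": [51.9, 56.3, 56.1, 57.3, 57.6],
    "Phi-4-14B": [45.5, 53.7, 54.5, 55.5, 56.1],
    "Qwen2.5-7B-AIRL-S": [55.3, 59.8, 60.2, 59.3, 61.3],
}


def extremes(values):
    """Return (lowest series name, highest series name) for one LLM group."""
    lowest = min(range(len(values)), key=values.__getitem__)
    highest = max(range(len(values)), key=values.__getitem__)
    return SERIES[lowest], SERIES[highest]


summary = {llm: extremes(values) for llm, values in ACCURACY.items()}
for llm, (lowest, highest) in summary.items():
    print(f"{llm}: lowest={lowest}, highest={highest}")
```

With the transcribed values, every group reports `Accuracy@1` as its lowest bar and `Qwen2.5-AIRL-S-PRM` as its highest.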

### Key Observations

1. **Consistent PRM Hierarchy:** In all four LLM groups, the `Accuracy@1` (pink) bar is the lowest and the `Qwen2.5-AIRL-S-PRM` (dark gray) bar is the highest. The ordering of the three intermediate PRMs is less stable: it is strictly ascending in only two groups (`Qwen2.5-7B-Instruct` and `Phi-4-14B`).

2. **Performance of "Our" Models:** The chart highlights two "Ours" components: the LLM `Qwen2.5-7B-AIRL-S` and the PRM `Qwen2.5-AIRL-S-PRM`. Their combination yields the highest overall accuracy on the chart (**61.3%**).

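3. **Baseline vs. PRM Boost:** Every PRM improves on the `Accuracy@1` baseline. The gain ranges from +4.0 percentage points (`Llama3.1-8B-PRM-Deepseek-Data` on `Qwen2.5-7B-AIRL-S`) to +13.0 percentage points (`Qwen2.5-AIRL-S-PRM` on `Qwen2.5-7B-Instruct`), and it is largest for the two LLMs with the lowest baselines.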

4. **Minor Anomalies:** There are two instances where the strict ascending order is broken:

    * In the `Eurus-2-7B-PRIME` group, `EurusPRM-Stage2` (56.1%) is marginally lower than `Math-Shepherd-Mistral-7B-PRM` (56.3%).

    * In the `Qwen2.5-7B-AIRL-S` group, `Llama3.1-8B-PRM-Deepseek-Data` (59.3%) is lower than both `Math-Shepherd-Mistral-7B-PRM` (59.8%) and `EurusPRM-Stage2` (60.2%).
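
The gain and ordering observations above are simple arithmetic on the bar values, so they can be checked mechanically. The following is a minimal sketch under the assumption that the values are transcribed correctly from the chart; `prm_gains` and `order_breaks` are hypothetical helper names, not part of any published code.

```python
# Bar annotations transcribed from the chart (accuracy in %), in legend order.
SERIES = [
    "Accuracy@1",
    "Math-Shepherd-Mistral-7B-PRM",
    "EurusPRM-Stage2",
    "Llama3.1-8B-PRM-Deepseek-Data",
    "Qwen2.5-AIRL-S-PRM",
]
ACCURACY = {
    "Qwen2.5-7B-Instruct": [40.8, 51.1, 52.6, 53.2, 53.8],
    "Eurus-2-7B-PRIME": [51.9, 56.3, 56.1, 57.3, 57.6],
    "Phi-4-14B": [45.5, 53.7, 54.5, 55.5, 56.1],
    "Qwen2.5-7B-AIRL-S": [55.3, 59.8, 60.2, 59.3, 61.3],
}


def prm_gains(values):
    """Gain of each PRM bar over the Accuracy@1 baseline, in percentage points."""
    baseline = values[0]
    # Round to one decimal to hide floating-point noise in the subtraction.
    return [round(v - baseline, 1) for v in values[1:]]


def order_breaks(values):
    """Adjacent (earlier, later) series pairs where the later bar is lower."""
    return [
        (SERIES[i], SERIES[i + 1])
        for i in range(len(values) - 1)
        if values[i + 1] < values[i]
    ]


all_gains = [g for values in ACCURACY.values() for g in prm_gains(values)]
print(f"PRM gain over baseline: +{min(all_gains)} to +{max(all_gains)} points")

for llm, values in ACCURACY.items():
    for earlier, later in order_breaks(values):
        print(f"{llm}: {later} is below {earlier}")
```

Note that `order_breaks` compares adjacent bars only, so for `Qwen2.5-7B-AIRL-S` it reports the dip relative to `EurusPRM-Stage2` but not the separate comparison with `Math-Shepherd-Mistral-7B-PRM`.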

### Interpretation

This chart supports the efficacy of Process Reward Models (PRMs) in improving the mathematical reasoning accuracy of LLMs under a Best-of-N sampling strategy. Applying any PRM raises accuracy by 4.0 to 13.0 points over `Accuracy@1`, which for the weaker base LLMs is larger than the gap to some stronger base LLMs (for example, `Phi-4-14B` gains up to 10.6 points, while its `Accuracy@1` trails that of `Eurus-2-7B-PRIME` by 6.4 points). The choice among PRMs matters less: within any LLM group, the four PRMs differ by at most 2.7 points.
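
One way to put the PRM effect and the base-LLM differences on the same scale is to compare the spread among PRM bars within each group against the pairwise gaps between the `Accuracy@1` baselines. This is a sketch, assuming the values below are transcribed correctly from the chart; `prm_spread` and `baseline_gaps` are names introduced here for illustration.

```python
from itertools import combinations

# Bar annotations transcribed from the chart (accuracy in %).
# Index 0 is Accuracy@1; indices 1-4 are the four PRMs in legend order.
ACCURACY = {
    "Qwen2.5-7B-Instruct": [40.8, 51.1, 52.6, 53.2, 53.8],
    "Eurus-2-7B-PRIME": [51.9, 56.3, 56.1, 57.3, 57.6],
    "Phi-4-14B": [45.5, 53.7, 54.5, 55.5, 56.1],
    "Qwen2.5-7B-AIRL-S": [55.3, 59.8, 60.2, 59.3, 61.3],
}

# Difference between the best and worst PRM bar within each LLM group.
prm_spread = {
    llm: round(max(values[1:]) - min(values[1:]), 1)
    for llm, values in ACCURACY.items()
}

# Absolute gap between the Accuracy@1 baselines of every pair of LLMs.
baseline_gaps = {
    (a, b): round(abs(ACCURACY[a][0] - ACCURACY[b][0]), 1)
    for a, b in combinations(ACCURACY, 2)
}

print("Largest spread among PRMs within a group:", max(prm_spread.values()))
print("Smallest gap between two baselines:", min(baseline_gaps.values()))
```

Comparing the two printed numbers shows whether swapping one PRM for another ever moves accuracy by as much as swapping one base LLM for another.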

The consistent superiority of the `Qwen2.5-AIRL-S-PRM` across different LLM backbones indicates it is a robust and high-performing reward model. The fact that the authors' own LLM (`Qwen2.5-7B-AIRL-S`) paired with their own PRM achieves the top result suggests a successful co-design or fine-tuning strategy tailored for this task.

The minor dips in performance for certain PRMs within specific LLM groups (e.g., `EurusPRM-Stage2` on `Eurus-2-7B-PRIME`) are small, at 0.2 and 0.9 points, but they hint at potential compatibility issues or that a PRM's effectiveness may not be perfectly universal, possibly depending on the underlying data distribution or model architecture it was trained to evaluate. Overall, the chart makes a clear case for investing in specialized PRMs to unlock higher performance from LLMs in reasoning tasks.