TECHNICAL ASSET FINGERPRINT

95dde04919417db2852dcd79


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Data Pipeline and Factuality Alignment Diagram

### Overview

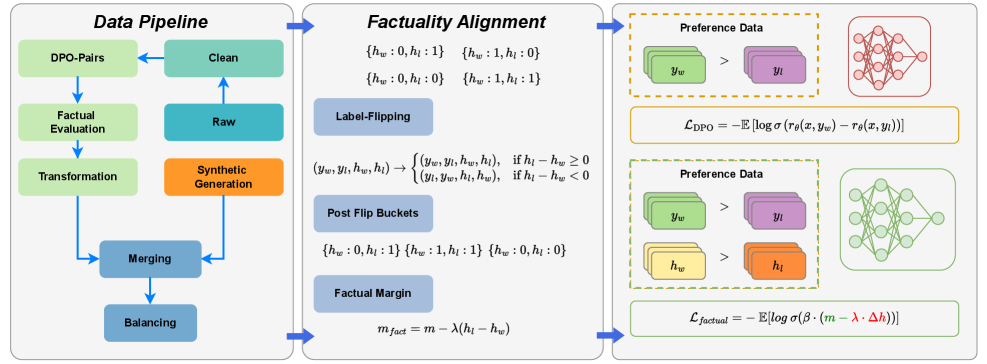

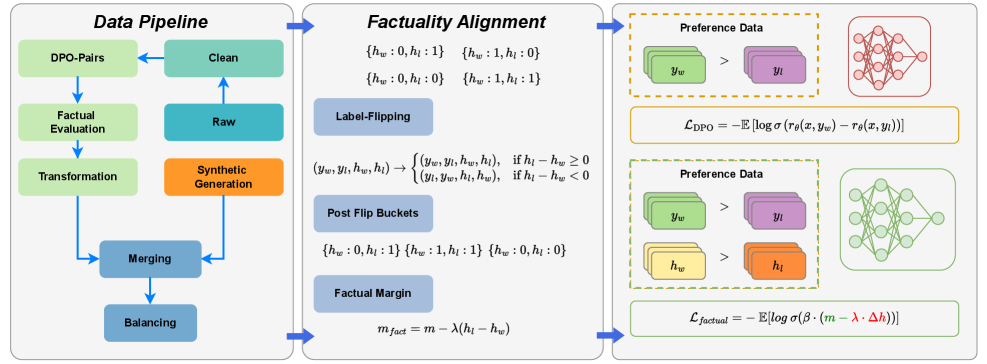

The image presents a diagram illustrating a data pipeline and a factuality alignment process. It outlines the steps involved in preparing data, aligning it with factual information, and using it for preference learning. The diagram is divided into three main sections: Data Pipeline, Factuality Alignment, and Preference Data with Loss Functions.

### Components/Axes

**Data Pipeline (Left Section):**

* **Title:** Data Pipeline

* **Components (Top to Bottom):**

* DPO-Pairs (light green rectangle)

* Clean (light green rectangle)

* Factual Evaluation (light green rectangle)

* Raw (light blue rectangle)

* Transformation (light green rectangle)

* Synthetic Generation (orange rectangle)

* Merging (light blue rectangle)

* Balancing (light blue rectangle)

* **Flow:** Arrows indicate the flow of data between components.

**Factuality Alignment (Middle Section):**

* **Title:** Factuality Alignment

* **Components:**

* Buckets:

* {hw: 0, hl: 1}

* {hw: 1, hl: 0}

* {hw: 0, hl: 0}

* {hw: 1, hl: 1}

* Label-Flipping (light blue rectangle)

* Transformation Rule:

* (yw, yl, hw, hl) -> { (yw, yl, hw, hl), if hl - hw >= 0; (yl, yw, hl, hw), if hl - hw < 0 }

* Post Flip Buckets (light blue rectangle)

* Buckets:

* {hw: 0, hl: 1}

* {hw: 1, hl: 1}

* {hw: 0, hl: 0}

* Factual Margin (light blue rectangle)

* Equation: m_fact = m - λ(hl - hw)

**Preference Data and Loss Functions (Right Section):**

* **Title:** Preference Data

* **Top Section:**

* Preference Data (dashed orange rectangle)

* yw (light green stacked rectangles) > yl (light purple stacked rectangles)

* Neural Network (red)

* Loss Function: L_DPO = -E [log σ(rθ(x, yw) - rθ(x, yl))]

* **Bottom Section:**

* Preference Data (dashed green rectangle)

* yw (light green stacked rectangles) > yl (light purple stacked rectangles)

* hw (light yellow stacked rectangles) > hl (orange stacked rectangles)

* Neural Network (green)

* Loss Function: L_factual = -E [log σ(β · (m - λ · Δh))]

### Detailed Analysis

**Data Pipeline:**

* The data pipeline starts with DPO-Pairs and proceeds through cleaning, factual evaluation, transformation, and merging.

* Synthetic generation is performed after the "Raw" data stage.

* The pipeline ends with a balancing step.

**Factuality Alignment:**

* The factuality alignment section involves label flipping based on a condition involving hl and hw.

* Post-flip buckets are used to categorize data.

* The factual margin is calculated using the formula m_fact = m - λ(hl - hw).
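The label-flipping rule transcribed above can be sketched in a few lines of Python. This is a minimal illustration, assuming binary `h` values as shown in the buckets; the function name `flip_pair` and the placeholder responses are hypothetical and do not come from the diagram.

```python
from itertools import product

def flip_pair(y_w, y_l, h_w, h_l):
    """Keep the pair if h_l - h_w >= 0, otherwise swap responses and h values."""
    if h_l - h_w >= 0:
        return (y_w, y_l, h_w, h_l)
    return (y_l, y_w, h_l, h_w)

# Enumerate the four pre-flip buckets as (h_w, h_l) with binary values.
pre_flip = list(product([0, 1], repeat=2))

# Apply the rule and collect the distinct (h_w, h_l) buckets that remain.
post_flip = sorted({flip_pair("resp_a", "resp_b", h_w, h_l)[2:] for h_w, h_l in pre_flip})
print(post_flip)
```

Only the `{hw: 1, hl: 0}` bucket is swapped, which is why four buckets reduce to the three listed under "Post Flip Buckets".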

**Preference Data and Loss Functions:**

* The top section shows a preference for yw over yl, associated with the L_DPO loss function and a red neural network.

* The bottom section shows preferences for yw over yl and hw over hl, associated with the L_factual loss function and a green neural network.

### Key Observations

* The diagram illustrates a process for aligning data with factual information and using it to train models based on preferences.

* The factuality alignment step involves label flipping and margin calculation.

* Two different loss functions (L_DPO and L_factual) are used in conjunction with different preference data scenarios.

### Interpretation

The diagram presents a methodology for incorporating factuality into preference learning. The data pipeline prepares the data, while the factuality alignment step ensures that the data is consistent with factual information. The preference data sections show how this aligned data can be used to train neural networks using different loss functions. The use of label flipping and factual margin calculation suggests an attempt to mitigate the impact of incorrect or biased labels. The two loss functions, L_DPO and L_factual, likely represent different approaches to incorporating factual information into the learning process. The diagram suggests a comprehensive approach to training models that are both aligned with factual information and capable of learning from preferences.


EXPERT: gemini-3-flash-free VERSION 1

RUNTIME: nugit/gemini/gemini-3-flash-preview

INTEL_VERIFIED

# Technical Document Extraction: Factuality Alignment and Data Pipeline

This image illustrates a three-stage technical workflow for enhancing the factuality of Large Language Models (LLMs) using Direct Preference Optimization (DPO) variants. The process is divided into three primary segments: **Data Pipeline**, **Factuality Alignment**, and the resulting **Preference Data/Loss Functions**.

---

## 1. Segment 1: Data Pipeline

This section describes the iterative process of preparing and refining data for model training.

### Components and Flow:

* **Raw (Teal Box):** The starting point of the data flow.

* **Clean (Green Box):** Data moves from Raw to Clean.

* **DPO-Pairs (Light Green Box):** Cleaned data is structured into pairs for preference learning.

* **Factual Evaluation (Light Green Box):** The pairs undergo a factuality check.

* **Transformation (Light Green Box):** Data is transformed based on evaluation results.

* **Synthetic Generation (Orange Box):** A parallel track where synthetic data is generated.

* **Merging (Blue Box):** The transformed factual data and synthetic data are combined.

* **Balancing (Blue Box):** The final step to ensure dataset distribution is optimized before moving to the next stage.
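The diagram does not say how "Merging" and "Balancing" are implemented. The sketch below is one plausible reading, assuming that merging concatenates the transformed and synthetic pairs and that balancing downsamples every `(h_w, h_l)` bucket to the size of the smallest one. The function names, the dict layout, and the balancing criterion are all assumptions made for illustration.

```python
from collections import defaultdict

def merge(transformed, synthetic):
    """Assumed merging step: concatenate transformed and synthetic pairs."""
    return list(transformed) + list(synthetic)

def balance(pairs):
    """Assumed balancing step: truncate each (h_w, h_l) bucket to the smallest bucket size."""
    buckets = defaultdict(list)
    for pair in pairs:
        buckets[(pair["h_w"], pair["h_l"])].append(pair)
    if not buckets:
        return []
    n = min(len(items) for items in buckets.values())
    # Keep the first n pairs of each bucket; a real pipeline might sample instead.
    return [pair for items in buckets.values() for pair in items[:n]]

transformed = [{"id": i, "h_w": 0, "h_l": 1} for i in range(3)]
synthetic = [{"id": 99, "h_w": 0, "h_l": 0}]
balanced = balance(merge(transformed, synthetic))
```

Here the bucket with three pairs is cut to one, so `balanced` holds one pair per bucket.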

---

## 2. Segment 2: Factuality Alignment

This section details the mathematical logic used to categorize and adjust data based on factual correctness ($h$).

### Key Variables:

* $y_w$: Winning (preferred) response.

* $y_l$: Losing (non-preferred) response.

* $h_w$: Factuality label of the winning response (0 or 1 in the buckets below).

* $h_l$: Factuality label of the losing response (0 or 1 in the buckets below).

### Logic Blocks:

* **Initial States:** Four possible factual pairings are identified:

* $\{h_w: 0, h_l: 1\}$

* $\{h_w: 1, h_l: 0\}$

* $\{h_w: 0, h_l: 0\}$

* $\{h_w: 1, h_l: 1\}$

* **Label-Flipping:** A conditional operation that swaps the pair whenever $h_l - h_w < 0$, so that $h_w \leq h_l$ holds for every pair afterwards:

$$(y_w, y_l, h_w, h_l) \rightarrow \begin{cases} (y_w, y_l, h_w, h_l), & \text{if } h_l - h_w \geq 0 \\ (y_l, y_w, h_l, h_w), & \text{if } h_l - h_w < 0 \end{cases}$$

* **Post Flip Buckets:** The resulting categories after the flipping logic is applied:

* $\{h_w: 0, h_l: 1\}$

* $\{h_w: 1, h_l: 1\}$

* $\{h_w: 0, h_l: 0\}$

* **Factual Margin:** A formula to calculate the margin for the loss function:

$$m_{fact} = m - \lambda(h_l - h_w)$$
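As a worked check, the margin formula can be evaluated on each of the three post-flip buckets. This assumes binary labels, writes $\Delta h = h_l - h_w$, and treats $m$ and $\lambda$ as given, since the diagram does not define them further:

$$m_{fact} = m - \lambda \, \Delta h = \begin{cases} m - \lambda, & \{h_w: 0, h_l: 1\} \ (\Delta h = 1) \\ m, & \{h_w: 1, h_l: 1\} \ (\Delta h = 0) \\ m, & \{h_w: 0, h_l: 0\} \ (\Delta h = 0) \end{cases}$$

Under these assumptions, only pairs whose two labels differ have their margin reduced. Pairs with equal labels keep the original margin $m$.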

---

## 3. Segment 3: Preference Data and Loss Functions

This section shows the application of the processed data into neural network training objectives.

### Standard DPO (Top Section):

* **Preference Data:** Visualized as green blocks ($y_w$) being preferred ($>$) over purple blocks ($y_l$).

* **Model:** Represented by a red neural network diagram.

* **Loss Function ($\mathcal{L}_{\text{DPO}}$):**

$$\mathcal{L}_{\text{DPO}} = -\mathbb{E} [\log \sigma (r_\theta(x, y_w) - r_\theta(x, y_l))]$$
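A minimal sketch of this loss over a small batch, in plain Python. The diagram does not define $r_\theta$, so the rewards are passed in as precomputed numbers; in standard DPO they would be implicit rewards derived from policy and reference log-probabilities. The names `log_sigmoid` and `dpo_loss` are illustrative.

```python
import math

def log_sigmoid(z):
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))

def dpo_loss(rewards_w, rewards_l):
    """Batch mean of -log sigmoid(r_w - r_l), with rewards given as floats."""
    terms = [-log_sigmoid(r_w - r_l) for r_w, r_l in zip(rewards_w, rewards_l)]
    return sum(terms) / len(terms)

# Equal rewards give log(2); a larger winner reward lowers the loss.
tie = dpo_loss([0.0], [0.0])
clear_win = dpo_loss([2.0], [0.0])
```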

### Factual DPO (Bottom Section):

* **Preference Data:** Includes standard preference ($y_w > y_l$) and factual preference where yellow blocks ($h_w$) are preferred over orange blocks ($h_l$).

* **Model:** Represented by a green neural network diagram.

* **Loss Function ($\mathcal{L}_{\text{factual}}$):**

$$\mathcal{L}_{\text{factual}} = -\mathbb{E} [\log \sigma (\beta \cdot (m - \lambda \cdot \Delta h))]$$

* *Note: The terms $(m - \lambda \cdot \Delta h)$ are highlighted in green and red respectively within the formula.*
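The factual loss can be sketched the same way. This assumes that $m$ is a per-pair preference margin, for example the reward difference used in $\mathcal{L}_{\text{DPO}}$, and that $\Delta h = h_l - h_w$ as in the Factual Margin formula. The default values for `beta` and `lam` are placeholders, not values taken from the diagram.

```python
import math

def factual_loss(margins, delta_h, beta=0.1, lam=1.0):
    """Batch mean of -log sigmoid(beta * (m - lam * dh))."""
    total = 0.0
    for m, dh in zip(margins, delta_h):
        z = beta * (m - lam * dh)
        # -log sigmoid(z) equals softplus(-z), written in a numerically stable form.
        total += max(-z, 0.0) + math.log1p(math.exp(-abs(z)))
    return total / len(margins)

# Same margin m, with and without a factuality gap between the two responses.
no_gap = factual_loss([1.0], [0])
with_gap = factual_loss([1.0], [1])
```

With `lam > 0`, the pair with a factuality gap (`dh = 1`) incurs the higher loss for the same `m`.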

---

## Summary of Visual Mappings

| Component | Color Code | Purpose |
| :--- | :--- | :--- |
| **Synthetic Data** | Orange | Artificially generated factual samples. |
| **Winning Response ($y_w$)** | Green | The response the model should prefer. |
| **Losing Response ($y_l$)** | Purple | The response the model should avoid. |
| **Factual Score ($h$)** | Yellow/Orange | Used to weight the margin of the loss function. |
| **Standard Model** | Red | Baseline DPO training. |
| **Factual Model** | Green | Training with the integrated factual margin. |


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Data Pipeline and Factuality Alignment

### Overview

The image presents a diagram illustrating a data pipeline for processing DPO-Pairs (Direct Preference Optimization Pairs) and a factuality alignment process. The diagram is divided into two main sections: "Data Pipeline" on the left and "Factuality Alignment" on the right. The "Data Pipeline" section shows a series of steps from raw data to clean data, while the "Factuality Alignment" section details a process involving label flipping and preference data.

### Components/Axes

The diagram consists of several rectangular blocks representing processing stages. Arrows indicate the flow of data between these stages. Mathematical equations are present within the "Factuality Alignment" section. The diagram also includes representations of neural networks, visually depicted as interconnected nodes.

### Detailed Analysis

**Data Pipeline (Left Side):**

* **DPO-Pairs:** The starting point of the pipeline.

* **Factual Evaluation:** A processing step following DPO-Pairs.

* **Transformation:** A processing step following Factual Evaluation.

* **Merging:** A processing step following Transformation.

* **Balancing:** A processing step following Merging.

* **Synthetic Generation:** A processing step that feeds into the "Clean" stage.

* **Raw:** A data source feeding into "Synthetic Generation".

* **Clean:** The output of the pipeline, receiving input from both "Synthetic Generation" and a feedback loop from "Balancing".

* Arrows indicate a flow from DPO-Pairs -> Factual Evaluation -> Transformation -> Merging -> Balancing -> Clean. A separate flow goes from Raw -> Synthetic Generation -> Clean. A feedback loop exists from Clean -> Balancing.

**Factuality Alignment (Right Side):**

* **Label-Flipping:** A central component with four pre-flip buckets and a transformation rule:

* `{h_w: 0, h_l: 1}`, `{h_w: 1, h_l: 0}`, `{h_w: 0, h_l: 0}`, `{h_w: 1, h_l: 1}`

* `(y_w, y_l, h_w, h_l)` -> `(y_w, y_l, h_w, h_l)` if h<sub>l</sub> - h<sub>w</sub> ≥ 0

* `(y_w, y_l, h_w, h_l)` -> `(y_l, y_w, h_l, h_w)` if h<sub>l</sub> - h<sub>w</sub> < 0

* **Post Flip Buckets:**

* `{h_w: 0, h_l: 1}`

* `{h_w: 1, h_l: 1}`

* `{h_w: 0, h_l: 0}`

* **Factual Margin:**

* m<sub>fact</sub> = m - λ(h<sub>l</sub> - h<sub>w</sub>)

* **Preference Data (Top):**

* y<sub>w</sub> > y<sub>l</sub>

* Neural network diagram with purple and orange nodes.

* Equation: L<sub>DPO</sub> = - E[log σ(r<sub>θ</sub>(x, y<sub>w</sub>) - r<sub>θ</sub>(x, y<sub>l</sub>))]

* **Preference Data (Bottom):**

* h<sub>w</sub> > h<sub>l</sub>

* Neural network diagram with purple and green nodes.

* Equation: L<sub>factual</sub> = - E[log σ(β * (m - λ * Δh))]

**Neural Networks:**

* Two neural network diagrams are present, representing the models used in the preference data processing. The top network has purple and orange nodes, while the bottom network has purple and green nodes.

### Key Observations

The diagram highlights a pipeline for refining data, specifically DPO-Pairs, through factual evaluation, transformation, merging, and balancing. The factuality alignment process focuses on adjusting labels and incorporating a factual margin to improve the accuracy and reliability of the data. The use of neural networks suggests a machine learning approach to preference modeling. The equations provided indicate loss functions used in the optimization process.

### Interpretation

This diagram illustrates a sophisticated data processing pipeline designed to enhance the quality and factual consistency of data used for training machine learning models, likely in the context of reinforcement learning from human feedback (RLHF) or direct preference optimization (DPO). The pipeline aims to address potential biases or inaccuracies in the initial data by incorporating factual evaluation and alignment techniques. The label-flipping mechanism and factual margin calculation suggest an attempt to mitigate the impact of incorrect or misleading labels. The use of preference data and neural networks indicates a focus on learning from human preferences and improving the model's ability to distinguish between desirable and undesirable outputs. The two preference data sections with different neural network node colors suggest potentially different stages or aspects of the preference learning process. The equations represent the loss functions used to train the models, guiding them towards better alignment with factual information and human preferences.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Data Pipeline and Factuality Alignment Process

### Overview

The image is a technical flowchart illustrating a multi-stage process for preparing and aligning data for training a machine learning model, specifically focusing on factuality. The diagram is divided into three main panels connected by blue arrows, indicating a sequential flow from left to right: **Data Pipeline**, **Factuality Alignment**, and **Preference Data** with associated loss functions.

### Components/Axes

The diagram is structured into three primary vertical panels:

1. **Left Panel: Data Pipeline**

* **Title:** "Data Pipeline" (top center).

* **Components:** A flowchart of interconnected rectangular boxes.

* **Flow Direction:** Arrows indicate a cyclical and sequential process. The main flow appears to start from "Raw" and "Synthetic Generation," moving through "Merging" and "Balancing," then to "Transformation," "Factual Evaluation," and finally to "DPO-Pairs" and "Clean."

2. **Middle Panel: Factuality Alignment**

* **Title:** "Factuality Alignment" (top center).

* **Components:** Contains mathematical notation and three labeled subsections: "Label-Flipping," "Post Flip Buckets," and "Factual Margin."

* **Flow Direction:** A blue arrow points from the "Data Pipeline" panel into this section, and another arrow points from this section to the "Preference Data" panel.

3. **Right Panel: Preference Data**

* **Components:** Two dashed-line boxes, each containing a diagram of stacked data cards, a neural network schematic, and a mathematical loss function formula.

* **Spatial Grounding:** The top box is outlined in orange, and the bottom box is outlined in green. Each box represents a different data configuration and its corresponding loss calculation.

### Detailed Analysis / Content Details

#### **1. Data Pipeline Panel**

* **Box Labels (transcribed):**

* `DPO-Pairs` (light green)

* `Clean` (light green)

* `Factual Evaluation` (light green)

* `Raw` (teal)

* `Transformation` (light green)

* `Synthetic Generation` (orange)

* `Merging` (blue-grey)

* `Balancing` (blue-grey)

* **Flow Description:** The process involves multiple steps. "Raw" data and "Synthetic Generation" feed into "Merging." The output of "Merging" goes to "Balancing." The "Balancing" step connects to "Transformation." "Transformation" leads to "Factual Evaluation." The output of "Factual Evaluation" splits into two paths: one to "DPO-Pairs" and another to "Clean." An arrow also points from "Clean" back to "DPO-Pairs," suggesting a refinement or pairing step.

#### **2. Factuality Alignment Panel**

* **Mathematical Notation (top):**

* `{h_w : 0, h_l : 1}`

* `{h_w : 1, h_l : 0}`

* `{h_w : 0, h_l : 0}`

* `{h_w : 1, h_l : 1}`

* *Interpretation:* These appear to be the four possible binary buckets for two variables, `h_w` and `h_l`. The subscripts match `y_w` and `y_l`, so they most likely denote the `h` values attached to the winning and losing responses.

* **Label-Flipping Subsection:**

* **Label:** "Label-Flipping" (blue box).

* **Formula:** `(y_w, y_l, h_w, h_l) → { (y_w, y_l, h_w, h_l), if h_l - h_w ≥ 0; (y_l, y_w, h_l, h_w), if h_l - h_w < 0 }`

* *Interpretation:* This defines a rule for swapping the labels `y_w` and `y_l` (likely "winning" and "losing" outputs) and their associated `h` values based on the difference `h_l - h_w`.

* **Post Flip Buckets Subsection:**

* **Label:** "Post Flip Buckets" (blue box).

* **Content:** `{h_w : 0, h_l : 1} {h_w : 1, h_l : 1} {h_w : 0, h_l : 0}`

* *Interpretation:* This lists the three possible states for `(h_w, h_l)` after the label-flipping operation has been applied.

* **Factual Margin Subsection:**

* **Label:** "Factual Margin" (blue box).

* **Formula:** `m_fact = m - λ(h_l - h_w)`

* *Interpretation:* This defines a "factual margin" (`m_fact`) as an original margin `m` adjusted by a factor `λ` multiplied by the difference `(h_l - h_w)`.

#### **3. Preference Data Panel**

* **Top (Orange) Box:**

* **Data Cards:** Shows two stacks. Left stack (green) labeled `y_w`. Right stack (purple) labeled `y_l`. A "greater than" symbol (`>`) is between them, indicating a preference: `y_w > y_l`.

* **Network Diagram:** A simple neural network schematic (red nodes and connections).

* **Loss Function:** `L_DPO = -E[log σ(r_θ(x, y_w) - r_θ(x, y_l))]`

* *Interpretation:* This is the standard Direct Preference Optimization (DPO) loss function, where `σ` is the sigmoid function, `r_θ` is the reward the model assigns to a response given the prompt `x` (in DPO, an implicit reward derived from the policy), and `E` denotes expectation.

* **Bottom (Green) Box:**

* **Data Cards:** Shows two pairs. Top pair: green `y_w` > purple `y_l`. Bottom pair: yellow `h_w` > orange `h_l`.

* **Network Diagram:** A more complex neural network schematic (green nodes and connections).

* **Loss Function:** `L_factual = -E[log σ(β · (m - λ · Δh))]`

* *Interpretation:* This is a modified loss function incorporating the factual margin. `β` is likely a temperature or scaling parameter, `m` is a margin, `λ` is a scaling factor, and `Δh` represents the difference `h_l - h_w` from the earlier formula.

### Key Observations

1. **Process Integration:** The diagram explicitly links a data preparation pipeline ("Data Pipeline") to a mathematical framework for adjusting model preferences based on factuality ("Factuality Alignment"), which then informs the final training objective ("Preference Data" loss functions).

2. **Label-Flipping Mechanism:** A core technical component is the "Label-Flipping" rule, which conditionally swaps winning and losing examples based on their associated `h` values. This seems designed to correct or balance the training signal.

3. **Two-Tiered Loss Structure:** The final output presents two distinct loss functions: a standard DPO loss (`L_DPO`) and a specialized factual loss (`L_factual`). The `L_factual` function directly incorporates the margin and difference terms defined in the middle panel.

4. **Visual Coding:** Colors distinguish the four quantities: green for `y_w`, purple for `y_l`, yellow for `h_w`, and orange for `h_l`. The network diagrams change color (red to green) between the two loss boxes, possibly indicating a shift from a base model to a factuality-aligned model.

### Interpretation

This diagram outlines a sophisticated methodology for training language models to be more factual. The process begins by curating and balancing a dataset that includes both raw and synthetic data. The core innovation lies in the "Factuality Alignment" stage, which introduces a mechanism to dynamically adjust the preference labels (`y_w`, `y_l`) based on auxiliary signals (`h_w`, `h_l`). These signals likely represent some measure of factual correctness or confidence.

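The "Label-Flipping" rule acts as a corrective filter: if the "winning" example has a higher `h` value than the "losing" one (`h_l - h_w < 0`), the two responses and their `h` values are swapped; otherwise the pair is kept as is. After the flip, `h_w ≤ h_l` always holds, which matches the three post-flip buckets and is consistent with `h` being a flag whose lower value marks the more factual response. This keeps the model from being trained on preferences where the labeled winner is flagged as less factual than the loser.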

The right panel shows two objectives. The standard DPO loss (`L_DPO`) depends only on the preference `y_w > y_l`, while the factual loss (`L_factual`) replaces the margin `m` with the factual margin `m_fact = m - λ · Δh`. With `Δh = h_l - h_w`, the factual margin shrinks as `Δh` grows, so for the same `m` the loss is higher on pairs where the two `h` values differ. Such pairs need a larger `m` to reach the same loss.
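The effect of `Δh` on training can be made concrete by differentiating the per-example factual loss with respect to the margin `m`. This is a short derivation assuming `β > 0` and `λ ≥ 0`, neither of which is stated in the diagram:

$$\ell(m) = -\log \sigma\big(\beta (m - \lambda \Delta h)\big), \qquad \frac{\partial \ell}{\partial m} = -\beta \, \sigma\big(-\beta (m - \lambda \Delta h)\big)$$

Because $\sigma$ is increasing, the gradient magnitude $\beta \, \sigma(-\beta(m - \lambda \Delta h))$ grows with $\Delta h$ for a fixed $m$. Under these assumptions, pairs with $\Delta h = 1$ push the margin up more strongly than pairs with $\Delta h = 0$.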

In essence, the system doesn't just learn from static preference data; it actively cleans and re-weights that data based on factuality signals before using a specialized loss function to enforce a factual margin during training. This represents a closed-loop approach to improving model factuality through data curation and objective engineering.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Multi-Stage Data Processing and Alignment System

### Overview

The diagram illustrates a three-stage technical pipeline for data processing and alignment, combining data engineering, factual verification, and preference modeling. The system uses color-coded components (green, blue, orange, purple) with directional arrows showing data flow and transformation relationships.

### Components/Axes

1. **Data Pipeline Section (Left)**

- **Green Boxes**: DPO-Pairs, Factual Evaluation, Transformation

- **Teal Boxes**: Clean, Raw

- **Orange Box**: Synthetic Generation

- **Blue Boxes**: Merging, Balancing

- **Arrows**: Connect components in sequential flow

- **Legend**: Color coding for data types (green=raw data, teal=processed data, orange=synthetic data, blue=aggregated data)

2. **Factuality Alignment Section (Center)**

- **Blue Boxes**: Label-Flipping, Post Flip Buckets

- **Equations**:

- `m_fact = m - λ(h_l - h_w)`

- Factual margin calculation with λ parameter

- **Arrows**: Connect label-flipping to post-flip buckets to factual margin

- **Legend**: Blue/green color coding for alignment stages

3. **Preference Data Section (Right)**

- **Green/Purple Boxes**: Preference data comparisons (y_w > y_l)

- **Equations**:

- `L_DPO = -E[logσ(r_θ(x,y_w) - r_θ(x,y_l))]`

- `L_factual = -E[logσ(β·(m - λ·Δh))]`

- **Neural Network Diagrams**:

- Red network (preference model)

- Green network (factual alignment model)

- **Legend**: Orange/green color coding for preference modeling

### Detailed Analysis

1. **Data Pipeline Flow**

- DPO-Pairs → Clean → Raw → Merging → Balancing

- Factual Evaluation → Transformation → Synthetic Generation

- Color progression: Green (raw) → Teal (cleaned) → Orange (synthetic) → Blue (balanced)

2. **Factuality Alignment Mechanics**

- Label-flipping maps the four pre-flip buckets onto three post-flip buckets:

- {h_w:1,h_l:0} → {h_w:0,h_l:1} (swapped, since h_l - h_w < 0)

- {h_w:0,h_l:1}, {h_w:0,h_l:0}, and {h_w:1,h_l:1} are left unchanged

- Factual margin calculation adjusts based on label difference (Δh = h_l - h_w)

3. **Preference Modeling**

- Two-stage neural network architecture:

- Red network processes preference data (y_w > y_l)

- Green network handles factual alignment (h_w > h_l)

- Loss functions combine preference (L_DPO) and factual (L_factual) components

### Key Observations

1. **Data Transformation Path**: Raw data undergoes multiple transformations (cleaning, synthetic generation) before balancing

2. **Factual Verification**: Explicit margin calculation ensures factual consistency between labels

3. **Preference Integration**: Neural networks model both user preferences and factual accuracy

4. **Color-Coded Logic**: Each color represents a distinct data type or processing stage

5. **Mathematical Rigor**: Equations show probabilistic modeling (σ=sigmoid function) and margin adjustments

### Interpretation

This system appears designed for training AI models with dual objectives: factual accuracy and user preference alignment. The data pipeline ensures high-quality input data through cleaning and balancing. Factuality alignment introduces a novel approach using label-flipping and margin calculations to verify factual consistency. The preference modeling combines direct comparison (y_w > y_l) with neural network processing to optimize both preference satisfaction and factual accuracy. The use of margin adjustments (λ parameter) suggests a tunable tradeoff between factual rigor and preference alignment. The neural network diagrams indicate a sophisticated approach to modeling complex preference patterns while maintaining factual constraints.
