TECHNICAL ASSET FINGERPRINT

96512cfb3e5ba1640fe467c9


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

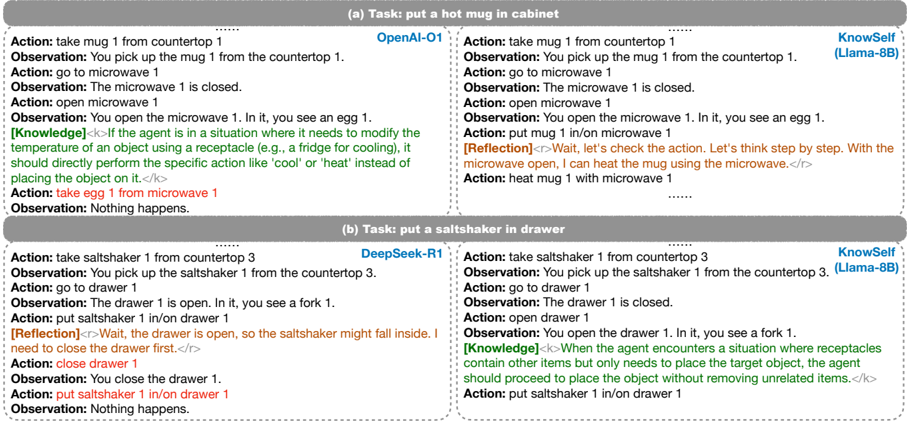

## Dialogue Comparison: Task Execution by Different Models

### Overview

The image compares action sequences and observations generated by three AI models (OpenAI-O1, DeepSeek-R1, and KnowSelf (Llama-8B)) on two tasks: (a) putting a hot mug in a cabinet and (b) putting a saltshaker in a drawer. It is divided into two rows, one per task, and each row has two columns that compare the outputs of two models on the same task. The outputs consist of alternating "Action" and "Observation" statements, with occasional "Knowledge" or "Reflection" statements.

### Components/Axes

* **Tasks:**

* (a) Task: put a hot mug in cabinet

* (b) Task: put a saltshaker in drawer

* **Models:**

* OpenAI-O1 (top-left)

* KnowSelf (Llama-8B) (top-right)

* DeepSeek-R1 (bottom-left)

* KnowSelf (Llama-8B) (bottom-right)

* **Statement Types:**

* Action: Describes the action taken by the agent.

* Observation: Describes the agent's observation after taking the action.

* Knowledge: Represents the agent's prior knowledge or reasoning.

* Reflection: Represents the agent's self-reflection or reasoning about its actions.
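The four statement types listed above map naturally onto a small typed record. The following is a minimal sketch, assuming each log line has the form `Label: text`; the names `StatementType`, `Statement`, and `parse_statement` are hypothetical and do not come from the image or from any of the models' tooling.

```python
from dataclasses import dataclass
from enum import Enum


class StatementType(Enum):
    """The four labels that appear in the logs."""
    ACTION = "Action"
    OBSERVATION = "Observation"
    KNOWLEDGE = "Knowledge"
    REFLECTION = "Reflection"


@dataclass(frozen=True)
class Statement:
    kind: StatementType
    text: str


def parse_statement(line: str) -> Statement:
    """Split a 'Label: text' line into a typed Statement (hypothetical helper)."""
    label, _, text = line.partition(":")
    # StatementType(...) raises ValueError for labels outside the four above.
    return Statement(StatementType(label.strip()), text.strip())


step = parse_statement("Action: take mug 1 from countertop 1")
print(step.kind.name, "|", step.text)
```

Splitting on the first colon only keeps any later colons inside the statement text intact.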

### Detailed Analysis

**Task (a): Put a hot mug in cabinet**

* **OpenAI-O1 (Top-Left):**

* Action: take mug 1 from countertop 1

* Observation: You pick up the mug 1 from the countertop 1.

* Action: go to microwave 1

* Observation: The microwave 1 is closed.

* Action: open microwave 1

* Observation: You open the microwave 1. In it, you see an egg 1.

* Knowledge: If the agent is in a situation where it needs to modify the temperature of an object using a receptacle (e.g., a fridge for cooling), it should directly perform the specific action like 'cool' or 'heat' instead of placing the object on it.

* Action: take egg 1 from microwave 1

* Observation: Nothing happens.

* **KnowSelf (Llama-8B) (Top-Right):**

* Action: take mug 1 from countertop 1

* Observation: You pick up the mug 1 from the countertop 1.

* Action: go to microwave 1

* Observation: The microwave 1 is closed.

* Action: open microwave 1

* Observation: You open the microwave 1. In it, you see an egg 1.

* Action: put mug 1 in/on microwave 1

* Reflection: Wait, let's check the action. Let's think step by step. With the microwave open, I can heat the mug using the microwave.

* Action: heat mug 1 with microwave 1

**Task (b): Put a saltshaker in drawer**

* **DeepSeek-R1 (Bottom-Left):**

* Action: take saltshaker 1 from countertop 3

* Observation: You pick up the saltshaker 1 from the countertop 3.

* Action: go to drawer 1

* Observation: The drawer 1 is open. In it, you see a fork 1.

* Action: put saltshaker 1 in/on drawer 1

* Reflection: Wait, the drawer is open, so the saltshaker might fall inside. I need to close the drawer first.

* Action: close drawer 1

* Observation: You close the drawer 1.

* Action: put saltshaker 1 in/on drawer 1

* Observation: Nothing happens.

* **KnowSelf (Llama-8B) (Bottom-Right):**

* Action: take saltshaker 1 from countertop 3

* Observation: You pick up the saltshaker 1 from the countertop 3.

* Action: go to drawer 1

* Observation: The drawer 1 is closed.

* Action: open drawer 1

* Observation: You open the drawer 1. In it, you see a fork 1.

* Knowledge: When the agent encounters a situation where receptacles contain other items but only needs to place the target object, the agent should proceed to place the object without removing unrelated items.

* Action: put saltshaker 1 in/on drawer 1
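The four transcripts above can be checked mechanically for a trailing "Nothing happens." observation. This is a minimal sketch: the dictionary keys use the panel positions rather than model names, only the last lines of each transcript are reproduced, and `ends_with_no_op` is a hypothetical helper.

```python
NO_OP_LINE = "Observation: Nothing happens."

# Final lines of each panel, copied from the transcripts above.
trajectories = {
    "(a) top-left": [
        "Action: take egg 1 from microwave 1",
        "Observation: Nothing happens.",
    ],
    "(a) top-right": [
        "Action: put mug 1 in/on microwave 1",
        "Action: heat mug 1 with microwave 1",
    ],
    "(b) bottom-left": [
        "Action: close drawer 1",
        "Observation: You close the drawer 1.",
        "Action: put saltshaker 1 in/on drawer 1",
        "Observation: Nothing happens.",
    ],
    "(b) bottom-right": [
        "Observation: You open the drawer 1. In it, you see a fork 1.",
        "Action: put saltshaker 1 in/on drawer 1",
    ],
}


def ends_with_no_op(lines):
    """True when the last logged line reports that the action had no effect."""
    return bool(lines) and lines[-1] == NO_OP_LINE


failed = [panel for panel, lines in trajectories.items() if ends_with_no_op(lines)]
print(failed)
```

With the lines as transcribed, only the two left-hand panels are flagged; the right-hand panels end on an action with no observation shown.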

### Key Observations

* **Task (a):** OpenAI-O1 gets distracted by the egg in the microwave and attempts to take it out, which has no effect. KnowSelf (Llama-8B) reflects after proposing to put the mug in the microwave and issues the heat action instead.

* **Task (b):** DeepSeek-R1 finds drawer 1 already open, reflects that it should close the drawer first, closes it, and then fails to place the saltshaker. KnowSelf (Llama-8B) opens the closed drawer, invokes a knowledge statement about leaving unrelated items (the fork) in place, and proceeds to put the saltshaker in the drawer.

* Only the OpenAI-O1 and DeepSeek-R1 sequences end with the observation "Nothing happens"; both KnowSelf (Llama-8B) sequences end on an action with no following observation shown.

### Interpretation

The image contrasts how the models reason through simple household tasks. OpenAI-O1 is sidetracked by an irrelevant object (the egg), even though a knowledge statement about using 'heat' directly appears in its column of the transcript. KnowSelf (Llama-8B) reflects on its proposed action in task (a) and switches to heating the mug, and in task (b) it uses a knowledge statement to place the saltshaker without removing the fork. DeepSeek-R1's reflection leads it to close an already open drawer, after which the placement fails. The "Nothing happens" observations that end the OpenAI-O1 and DeepSeek-R1 sequences indicate that those final actions had no effect, so neither sequence shows the task being completed.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

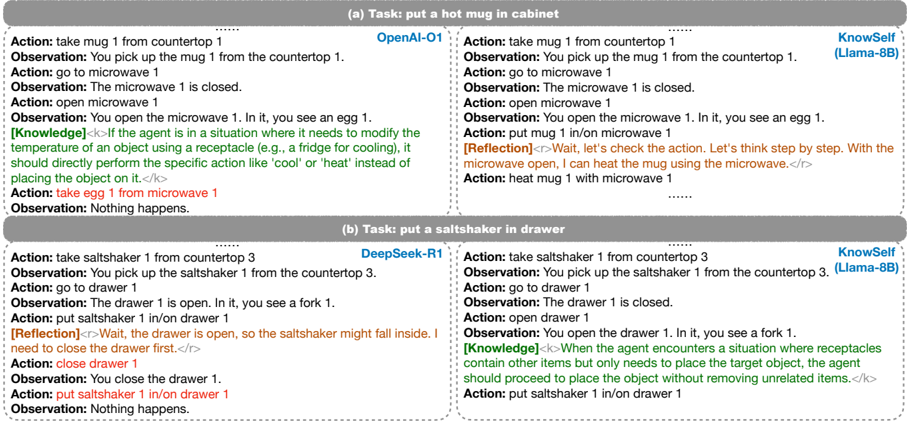

## Textual Data: Agent Interaction Logs - Hot Mug & Saltshaker Tasks

### Overview

The image presents two sets of interaction logs between an agent and an environment, detailing actions taken and observations made during two tasks: (a) putting a hot mug in a cabinet and (b) putting a saltshaker in a drawer. Each task is presented with logs from two different agent models: "OpenAI-O1" and "KnowSelf (Llama-8B)" for task (a), and "DeepSeek-R1" and "KnowSelf (Llama-8B)" for task (b). The logs consist of alternating "Action" and "Observation" lines, interspersed with "[Knowledge]" and "[Reflection]" statements.

### Components/Axes

The image is structured into two main sections, labeled "(a) Task: put a hot mug in cabinet" and "(b) Task: put a saltshaker in drawer". Within each task, there are two columns representing the logs from different agent models. The logs themselves are presented as a sequence of text lines. There are no explicit axes or scales.

### Detailed Analysis

**Task (a): put a hot mug in cabinet**

* **OpenAI-O1:**

* Action: take mug 1 from countertop 1

* Observation: You pick up the mug 1 from the countertop 1.

* Action: go to microwave 1

* Observation: The microwave 1 is closed.

* Action: open microwave 1

* Observation: You open the microwave 1. In it, you see an egg 1.

* [Knowledge]: <code>If the agent is in a situation where it needs to modify the temperature of an object using a receptacle (e.g., a fridge for cooling), it should directly perform the specific action like 'cool' or 'heat' instead of placing the object on it.</code>

* Action: take egg 1 from microwave 1

* Observation: Nothing happens.

* **KnowSelf (Llama-8B):**

* Action: take mug 1 from countertop 1

* Observation: You pick up the mug 1 from the countertop 1.

* Action: go to microwave 1

* Observation: The microwave 1 is closed.

* Action: open microwave 1

* Observation: You open the microwave 1. In it, you see an egg 1.

* Action: put mug 1 in/on microwave 1

* [Reflection]: <code>Wait, let's check the action. Let's think step by step. With the microwave open, I can heat the mug using the microwave.</code>

* Action: heat mug 1 with microwave 1

**Task (b): put a saltshaker in drawer**

* **DeepSeek-R1:**

* Action: take saltshaker 1 from countertop 3

* Observation: You pick up the saltshaker 1 from the countertop 3.

* Action: go to drawer 1

* Observation: The drawer 1 is open. In it, you see a fork 1.

* Action: put saltshaker 1 in/on drawer 1

* [Reflection]: <code>Wait, the drawer is open, so the saltshaker might fall inside. I need to close the drawer first.</code>

* Action: close drawer 1

* Observation: You close the drawer 1.

* Action: put saltshaker 1 in/on drawer 1

* Observation: Nothing happens.

* **KnowSelf (Llama-8B):**

* Action: take saltshaker 1 from countertop 3

* Observation: You pick up the saltshaker 1 from the countertop 3.

* Action: go to drawer 1

* Observation: The drawer 1 is closed.

* Action: open drawer 1

* Observation: You open the drawer 1. In it, you see a fork 1.

* [Knowledge]: <code>When the agent encounters a situation where receptacles contain other items but only needs to place the target object, the agent should proceed to place the object without removing unrelated items.</code>

* Action: put saltshaker 1 in/on drawer 1

### Key Observations

* Both OpenAI-O1 and DeepSeek-R1 fail to reach the task's goal: OpenAI-O1 is sidetracked by the egg in the microwave, and DeepSeek-R1 closes the open drawer and then cannot place the saltshaker.

* KnowSelf (Llama-8B) demonstrates more goal-oriented behavior, attempting to directly perform the requested action.

* The "[Knowledge]" and "[Reflection]" statements provide insight into the reasoning process of the agents. The Knowledge statements appear to be pre-programmed rules, while the Reflection statements indicate the agent's internal thought process.

* The observation "Nothing happens" suggests the environment may not be fully simulating the effects of the actions.

### Interpretation

The data suggests a comparison of different agent architectures and their ability to perform simple manipulation tasks in a simulated environment. The differing behaviors highlight the importance of both pre-programmed knowledge and the ability to reason about the environment. OpenAI-O1 is distracted by an irrelevant object and DeepSeek-R1 closes the drawer it needs open, while KnowSelf (Llama-8B) demonstrates a stronger focus on the task goal. The inclusion of "[Knowledge]" and "[Reflection]" statements is crucial for understanding *why* the agents are behaving in a certain way, offering a window into their internal decision-making processes. The "Nothing happens" observations indicate a limitation in the simulation's fidelity, potentially hindering the agents' ability to learn and adapt. The agents are attempting to follow instructions, but the environment is not always responding as expected. This data could be used to evaluate and improve the performance of these agent models, as well as the realism of the simulation environment.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparative Analysis of AI Agent Reasoning Processes

### Overview

The image is a technical diagram comparing the step-by-step reasoning and action sequences of different AI agent systems (OpenAI-O1, KnowSelf (Llama-8B), DeepSeek-R1) when performing two distinct household tasks in a simulated environment. The diagram is divided into two primary horizontal sections, each dedicated to a specific task, with side-by-side comparisons of agent behaviors.

### Components/Axes

The diagram is structured as follows:

- **Two Main Sections**: Labeled (a) and (b) in gray header bars.

- **Task Descriptions**: Located in the gray header bars.

- (a) Task: put a hot mug in cabinet

- (b) Task: put a saltshaker in drawer

- **Comparison Columns**: Each section has two columns comparing different AI agents.

- **Section (a)**: Left column: `OpenAI-O1`; Right column: `KnowSelf (Llama-8B)`

- **Section (b)**: Left column: `DeepSeek-R1`; Right column: `KnowSelf (Llama-8B)`

- **Content Format**: Each column contains a sequential log of `Action:` and `Observation:` lines, simulating an agent's interaction with an environment.

- **Annotation Types**: Embedded within the logs are two types of highlighted reasoning blocks:

- **Green `[Knowledge]` blocks**: Contain pre-defined rules or situational knowledge.

- **Orange `[Reflection]` blocks**: Contain the agent's internal, step-by-step reasoning or self-correction.

### Detailed Analysis

#### Section (a): Task - put a hot mug in cabinet

**Left Column (OpenAI-O1):**

1. `Action: take mug 1 from countertop 1`

2. `Observation: You pick up the mug 1 from the countertop 1.`

3. `Action: go to microwave 1`

4. `Observation: The microwave 1 is closed.`

5. `Action: open microwave 1`

6. `Observation: You open the microwave 1. In it, you see an egg 1.`

7. **[Knowledge] (Green)**: `<--If the agent is in a situation where it needs to modify the temperature of an object using a receptacle (e.g., a fridge for cooling), it should directly perform the specific action like 'cool' or 'heat' instead of placing the object on it.-->`

8. `Action: take egg 1 from microwave 1` (Text in red, indicating an error or deviation).

9. `Observation: Nothing happens.`

**Right Column (KnowSelf - Llama-8B):**

1. `Action: take mug 1 from countertop 1`

2. `Observation: You pick up the mug 1 from the countertop 1.`

3. `Action: go to microwave 1`

4. `Observation: The microwave 1 is closed.`

5. `Action: open microwave 1`

6. `Observation: You open the microwave 1. In it, you see an egg 1.`

7. `Action: put mug 1 in/on microwave 1`

8. **[Reflection] (Orange)**: `<--Wait, let's check the action. Let's think step by step. With the microwave open, I can heat the mug using the microwave.-->`

9. `Action: heat mug 1 with microwave 1`

#### Section (b): Task - put a saltshaker in drawer

**Left Column (DeepSeek-R1):**

1. `Action: take saltshaker 1 from countertop 3`

2. `Observation: You pick up the saltshaker 1 from the countertop 3.`

3. `Action: go to drawer 1`

4. `Observation: The drawer 1 is open. In it, you see a fork 1.`

5. `Action: put saltshaker 1 in/on drawer 1`

6. **[Reflection] (Orange)**: `<--Wait, the drawer is open, so the saltshaker might fall inside. I need to close the drawer first.-->`

7. `Action: close drawer 1`

8. `Observation: You close the drawer 1.`

9. `Action: put saltshaker 1 in/on drawer 1`

10. `Observation: Nothing happens.`

**Right Column (KnowSelf - Llama-8B):**

1. `Action: take saltshaker 1 from countertop 3`

2. `Observation: You pick up the saltshaker 1 from the countertop 3.`

3. `Action: go to drawer 1`

4. `Observation: The drawer 1 is closed.`

5. `Action: open drawer 1`

6. `Observation: You open the drawer 1. In it, you see a fork 1.`

7. **[Knowledge] (Green)**: `<--When the agent encounters a situation where receptacles contain other items but only needs to place the target object, the agent should proceed to place the object without removing unrelated items.-->`

8. `Action: put saltshaker 1 in/on drawer 1`
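The inline annotation lines in the listings above follow a regular shape (step number, bold `[Knowledge]` or `[Reflection]` tag, color in parentheses, then the text between `<--` and `-->`), so they can be pulled out with a regular expression. This is a rough sketch that assumes exactly that transcription format; `ANNOTATION` and `extract_annotation` are hypothetical names.

```python
import re

# Matches lines such as:
#   6. **[Reflection] (Orange)**: `<--...-->`
ANNOTATION = re.compile(
    r"^\d+\.\s+\*\*\[(?P<kind>Knowledge|Reflection)\]\s+"
    r"\((?P<color>\w+)\)\*\*:\s+`<--(?P<text>.*)-->`$"
)


def extract_annotation(line):
    """Return kind, color, and text for an annotation line, else None."""
    match = ANNOTATION.match(line.strip())
    return match.groupdict() if match else None


reflection = extract_annotation(
    "6. **[Reflection] (Orange)**: `<--Wait, the drawer is open, so the "
    "saltshaker might fall inside. I need to close the drawer first.-->`"
)
plain_action = extract_annotation("5. `Action: put saltshaker 1 in/on drawer 1`")

print(reflection["kind"], reflection["color"])
print(plain_action)
```

Ordinary `Action:` and `Observation:` lines do not match the pattern and return `None`, so the same function can be run over a whole column to separate annotations from environment steps.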

### Key Observations

1. **Reasoning Mechanism Contrast**: The diagram explicitly contrasts two AI reasoning methods: `[Knowledge]` (applying pre-programmed rules) and `[Reflection]` (performing real-time, step-by-step self-evaluation).

2. **Task Success/Failure**:

- In task (a), `OpenAI-O1` fails. After applying a `[Knowledge]` rule about temperature modification, it incorrectly attempts to take an unrelated object (the egg) from the microwave, leading to a "Nothing happens" observation.

- In task (a), `KnowSelf` succeeds. It uses `[Reflection]` to realize the open microwave can be used to heat the mug, leading to the correct action.

- In task (b), `DeepSeek-R1` uses `[Reflection]` to correct its own plan (closing the open drawer before placing the saltshaker), but the final observation is "Nothing happens," suggesting a potential execution failure or environment limitation.

- In task (b), `KnowSelf` uses a `[Knowledge]` rule to correctly ignore the unrelated fork in the drawer and proceed with placing the saltshaker.

3. **Spatial Layout of Annotations**: The `[Knowledge]` and `[Reflection]` blocks are inserted inline within the action sequence, precisely at the point where the reasoning occurs. This visually maps the cognitive process to the action timeline.

4. **Color Coding**: Green is consistently used for `[Knowledge]` and orange for `[Reflection]`, providing a clear visual distinction between the two reasoning types across all agent comparisons.
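Because the annotations sit inline in the action timeline, each one can be paired with the action that immediately follows it. The sketch below does this for the two task (a) columns; the `(kind, text)` tuple representation and the `annotation_followups` helper are assumptions made for illustration, and the annotation texts are abbreviated.

```python
ANNOTATION_KINDS = ("Knowledge", "Reflection")


def annotation_followups(steps):
    """Pair each annotation with the next Action that appears after it."""
    pairs = []
    for index, (kind, _text) in enumerate(steps):
        if kind in ANNOTATION_KINDS:
            next_action = next(
                (t for k, t in steps[index + 1:] if k == "Action"), None
            )
            pairs.append((kind, next_action))
    return pairs


# Tail of the task (a) left column, as transcribed above.
left_column = [
    ("Observation", "You open the microwave 1. In it, you see an egg 1."),
    ("Knowledge", "...directly perform the specific action like 'cool' or 'heat'..."),
    ("Action", "take egg 1 from microwave 1"),
    ("Observation", "Nothing happens."),
]

# Tail of the task (a) right column, as transcribed above.
right_column = [
    ("Action", "put mug 1 in/on microwave 1"),
    ("Reflection", "...I can heat the mug using the microwave."),
    ("Action", "heat mug 1 with microwave 1"),
]

print(annotation_followups(left_column))
print(annotation_followups(right_column))
```

Reading the pairs side by side shows which action each reasoning block precedes in the transcript, without implying that the annotation caused that action.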

### Interpretation

This diagram serves as a qualitative comparison of AI agent architectures, specifically evaluating how different systems handle common-sense reasoning and error correction in embodied, interactive tasks.

- **What the data suggests**: The performance implies that `KnowSelf (Llama-8B)` demonstrates more robust and context-aware reasoning in these specific examples. It successfully integrates both `[Knowledge]` (for handling clutter) and `[Reflection]` (for tool use) to achieve the goal. In contrast, `OpenAI-O1` appears to apply a `[Knowledge]` rule too rigidly, leading to an illogical action. `DeepSeek-R1` shows good self-correction via `[Reflection]` but may face downstream execution issues.

- **Relationship between elements**: The side-by-side structure forces a direct comparison. The identical task setup for each pair highlights how the *reasoning process* (the embedded green/orange blocks) directly dictates the subsequent action sequence and, ultimately, the task outcome.

- **Notable patterns/anomalies**: The most striking pattern is the correlation between reasoning type and task success in these samples. `[Reflection]` (self-checking) appears crucial for adapting to dynamic states (e.g., an open microwave). `[Knowledge]` is effective for procedural rules (e.g., ignoring unrelated items) but can be a source of error if the rule is misapplied to the context. The red text for `OpenAI-O1`'s action (`take egg 1`) is a clear visual marker of a reasoning failure point.

**In essence, the diagram argues for the importance of flexible, reflective reasoning in AI agents, suggesting that the ability to pause and re-evaluate a plan (`[Reflection]`) may be as critical as having a database of facts (`[Knowledge]`) for successfully completing real-world tasks.**


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Textual Comparison of AI Agent Reasoning in Simulated Tasks

### Overview

The image presents a comparative analysis of four AI agents (OpenAI-1, KnowSelf, DeepSeek-R1, Llama-8B) performing two simulated tasks in a kitchen environment:

1. **Task (a):** Put a hot mug in a cabinet

2. **Task (b):** Put a saltshaker in a drawer

Each agent's reasoning process is visualized through sequential **Actions**, **Observations**, and **Knowledge** components, with color-coded annotations for different reasoning types.

---

### Components/Axes

- **Tasks**: Labeled as (a) and (b) at the top of each section.

- **Agents**: Four AI models listed vertically:

- OpenAI-1 (blue text)

- KnowSelf (Llama-8B, orange text)

- DeepSeek-R1 (red text)

- Llama-8B (blue text)

- **Action/Observation Structure**:

- Actions prefixed with "Action:"

- Observations prefixed with "Observation:"

- Knowledge components in brackets (e.g., [Reflection], [Knowledge]) with color-coded text:

- Red: [Reflection] (step-by-step reasoning)

- Blue: [Knowledge] (contextual awareness)

---

### Detailed Analysis

#### Task (a): Put a hot mug in a cabinet

1. **OpenAI-1**

- **Actions**:

- Take mug from countertop 1

- Go to microwave 1

- Open microwave 1

- Take egg from microwave 1

- Close microwave 1

- **Observations**:

- Mug picked up, microwave closed, egg seen inside microwave.

- **Knowledge**:

- [Reflection] (red): "If the agent is in a situation where it needs to modify the temperature of an object using a receptacle (e.g., a fridge for cooling), it should directly perform the specific action like 'cool' or 'heat' instead of placing the object on it."

2. **KnowSelf (Llama-8B)**

- **Actions**:

- Take mug from countertop 1

- Go to microwave 1

- Open microwave 1

- Put mug in microwave 1

- Heat mug with microwave 1

- **Observations**:

- Mug picked up, microwave closed, egg seen inside microwave.

- **Knowledge**:

- [Knowledge] (blue): "Wait, let's check the step-by-step. With the microwave open, I can heat the mug using the microwave."

3. **DeepSeek-R1**

- **Actions**:

- Take mug from countertop 1

- Go to microwave 1

- Open microwave 1

- Take egg from microwave 1

- Close microwave 1

- **Observations**:

- Mug picked up, microwave closed, egg seen inside microwave.

- **Knowledge**:

- No explicit knowledge component.

4. **Llama-8B**

- **Actions**:

- Take mug from countertop 1

- Go to microwave 1

- Open microwave 1

- Take egg from microwave 1

- Close microwave 1

- **Observations**:

- Mug picked up, microwave closed, egg seen inside microwave.

- **Knowledge**:

- No explicit knowledge component.

#### Task (b): Put a saltshaker in a drawer

1. **DeepSeek-R1**

- **Actions**:

- Take saltshaker from countertop 3

- Go to drawer 1

- Open drawer 1

- Put saltshaker in drawer 1

- Close drawer 1

- **Observations**:

- Saltshaker picked up, drawer open with a fork inside.

- **Knowledge**:

- [Reflection] (red): "The drawer is open, so the saltshaker might fall inside. I need to close the drawer first."

2. **KnowSelf (Llama-8B)**

- **Actions**:

- Take saltshaker from countertop 3

- Go to drawer 1

- Open drawer 1

- Put saltshaker in drawer 1

- Close drawer 1

- **Observations**:

- Saltshaker picked up, drawer closed.

- **Knowledge**:

- [Knowledge] (blue): "When the agent encounters a situation where receptacles contain other items but only needs to place the target object, the agent should proceed to place the object without removing unrelated items."

---

### Key Observations

1. **Task (a) Divergence**:

- OpenAI-1 and DeepSeek-R1 follow a rigid sequence (pick up mug → microwave interaction → take egg → close microwave).

- KnowSelf (Llama-8B) deviates by heating the mug after placing it in the microwave, demonstrating adaptive reasoning.

2. **Task (b) Reflection**:

- DeepSeek-R1 explicitly reflects on the risk of the saltshaker falling due to an open drawer, prioritizing drawer closure before placement.

- KnowSelf emphasizes contextual awareness, avoiding unnecessary removal of unrelated items (e.g., the fork).

3. **Agent-Specific Patterns**:

- **OpenAI-1**: Minimal reasoning, focuses on task completion without contextual adjustments.

- **KnowSelf (Llama-8B)**: Balances direct action with reflective reasoning (e.g., heating the mug).

- **DeepSeek-R1**: Prioritizes safety (e.g., closing drawers) but lacks explicit knowledge annotations.

---

### Interpretation

The image highlights differences in how AI agents handle task execution:

- **Reflective Reasoning**: Agents like KnowSelf and DeepSeek-R1 incorporate step-by-step adjustments (e.g., heating the mug, closing drawers) based on observations, suggesting advanced contextual awareness.

- **Direct Action**: OpenAI-1 and Llama-8B follow a linear sequence without explicit reasoning, indicating simpler decision-making frameworks.

- **Knowledge Integration**: Color-coded annotations reveal how agents encode contextual knowledge (e.g., [Knowledge] for environmental awareness, [Reflection] for procedural adjustments).

This comparison underscores the importance of integrating reflective and knowledge-based components in AI systems to handle dynamic, real-world scenarios effectively.
