## Textual Data: Agent Interaction Logs - Hot Mug & Saltshaker Tasks

### Overview

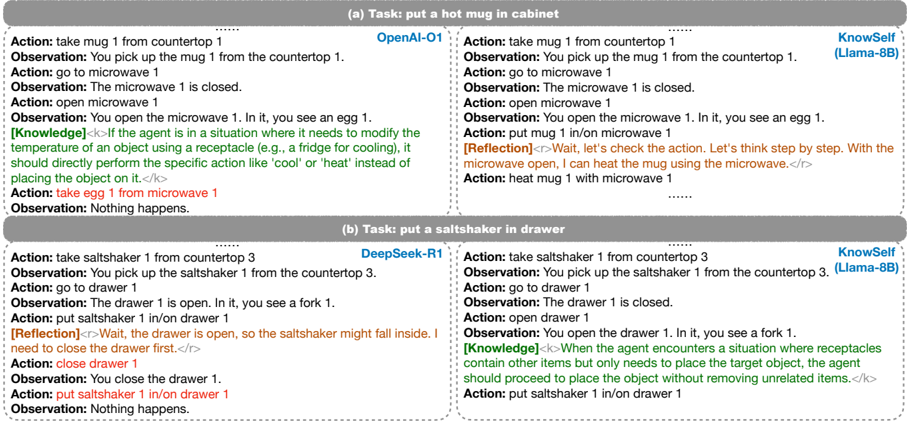

The image presents two sets of interaction logs between an agent and an environment, detailing actions taken and observations made during two tasks: (A) putting a hot mug in a cabinet and (B) putting a saltshaker in a drawer. Each task is presented with logs from two different agent models: "OpenAI-01" and "KnowSelf (Llama-8B)" for task A, and "DeepSeek-R1" and "KnowSelf (Llama-8B)" for task B. The logs consist of alternating "Action" and "Observation" lines, interspersed with "[Knowledge]" and "[Reflection]" statements.

### Components/Axes

The image is structured into two main sections, labeled "(a) Task: put a hot mug in cabinet" and "(b) Task: put a saltshaker in drawer". Within each task, there are two columns representing the logs from different agent models. The logs themselves are presented as a sequence of text lines. There are no explicit axes or scales.

### Detailed Analysis or Content Details

**Task (a): put a hot mug in cabinet**

* **OpenAI-01:**

* Action: take mug 1 from countertop 1

* Observation: You pick up the mug 1 from the countertop 1.

* Action: go to microwave 1

* Observation: The microwave 1 is closed.

* Action: open microwave 1

* Observation: You open the microwave 1. In it, you see an egg 1.

* [Knowledge]: <code>If the agent is in a situation where it needs to modify the temperature of an object using a receptacle (e.g., a fridge for cooling), it should directly perform the specific action like 'cool' or 'heat' instead of placing the object on it.</code>

* Action: take egg 1 from microwave 1

* Observation: Nothing happens.

* **KnowSelf (Llama-8B):**

* Action: take mug 1 from countertop 1

* Observation: You pick up the mug 1 from the countertop 1.

* Action: go to microwave 1

* Observation: The microwave 1 is closed.

* Action: open microwave 1

* Observation: You open the microwave 1. In it, you see an egg 1.

* Action: put mug 1 in/on microwave 1

* [Reflection]: <code>Wait, let's check the action. Let's think step by step. With the microwave open, I can heat the mug using the microwave.</code>

* Action: heat mug 1 with microwave 1

**Task (b): put a saltshaker in drawer**

* **DeepSeek-R1:**

* Action: take saltshaker 1 from countertop 3

* Observation: You pick up the saltshaker 1 from the countertop 3.

* Action: go to drawer 1

* Observation: The drawer 1 is open. In it, you see a fork 1.

* Action: put saltshaker 1 in/on drawer 1

* [Reflection]: <code>Wait, the drawer is open, so the saltshaker might fall inside. I need to close the drawer first.</code>

* Action: close drawer 1

* Observation: You close the drawer 1.

* Action: put saltshaker 1 in/on drawer 1

* Observation: Nothing happens.

* **KnowSelf (Llama-8B):**

* Action: take saltshaker 1 from countertop 3

* Observation: You pick up the saltshaker 1 from the countertop 3.

* Action: go to drawer 1

* Observation: The drawer 1 is closed.

* Action: open drawer 1

* Observation: You open the drawer 1. In it, you see a fork 1.

* [Knowledge]: <code>When the agent encounters a situation where receptacles contain other items but only need to place the target object, the agent should proceed to place the object without removing unrelated items.</code>

* Action: put saltshaker 1 in/on drawer 1

### Key Observations

* Both OpenAI-01 and DeepSeek-R1 exhibit a failure to directly address the task's goal, getting sidetracked by other objects in the environment (egg in the microwave, fork in the drawer).

* KnowSelf (Llama-8B) demonstrates more goal-oriented behavior, attempting to directly perform the requested action.

* The "[Knowledge]" and "[Reflection]" statements provide insight into the reasoning process of the agents. The Knowledge statements appear to be pre-programmed rules, while the Reflection statements indicate the agent's internal thought process.

* The observation "Nothing happens" suggests the environment may not be fully simulating the effects of the actions.

### Interpretation

The data suggests a comparison of different agent architectures and their ability to perform simple manipulation tasks in a simulated environment. The differing behaviors highlight the importance of both pre-programmed knowledge and the ability to reason about the environment. OpenAI-01 and DeepSeek-R1 seem to be more easily distracted by irrelevant objects, while KnowSelf (Llama-8B) demonstrates a stronger focus on the task goal. The inclusion of "[Knowledge]" and "[Reflection]" statements is crucial for understanding *why* the agents are behaving in a certain way, offering a window into their internal decision-making processes. The "Nothing happens" observations indicate a limitation in the simulation's fidelity, potentially hindering the agents' ability to learn and adapt. The agents are attempting to follow instructions, but the environment is not always responding as expected. This data could be used to evaluate and improve the performance of these agent models, as well as the realism of the simulation environment.