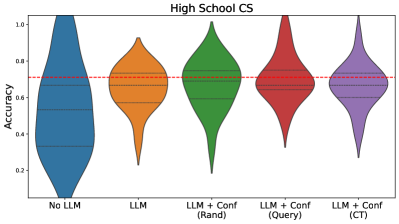

## Violin Plot: High School CS Accuracy Comparison

### Overview

The image is a violin plot titled "High School CS" that compares the distribution of "Accuracy" scores across five experimental conditions involving Large Language Models (LLMs). The width of each violin at a given accuracy level reflects the estimated density of data points at that level, so wider regions mark accuracy values that occur more often.
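To make the link between violin width and data density concrete, here is a minimal sketch of a Gaussian kernel density estimate, the usual way plotting libraries derive a violin's outline. The source does not say how the figure was produced, so the method, the `bandwidth` value, and the `scores` list are all assumptions; the scores are made up for illustration and are not read from the figure.

```python
import math


def gaussian_kde(samples, grid, bandwidth):
    """Estimate the density at each grid point with a Gaussian kernel."""
    n = len(samples)
    norm = 1.0 / (n * bandwidth * math.sqrt(2.0 * math.pi))
    return [
        norm * sum(math.exp(-0.5 * ((g - x) / bandwidth) ** 2) for x in samples)
        for g in grid
    ]


# Made-up accuracy scores for illustration only; NOT taken from the figure.
scores = [0.62, 0.68, 0.70, 0.71, 0.74, 0.75, 0.77, 0.80, 0.83, 0.90]

# Evaluate the density over the same range as the plot's y-axis (0.2 to 1.0).
grid = [0.2 + 0.05 * i for i in range(17)]
density = gaussian_kde(scores, grid, bandwidth=0.05)

# A violin's half-width at each accuracy level is proportional to the density;
# here it is scaled so that the widest point has half-width 1.0.
peak = max(density)
half_widths = [d / peak for d in density]
widest_at = grid[half_widths.index(1.0)]

print(f"widest near accuracy {widest_at:.2f}")
```

The accuracy level where `half_widths` peaks corresponds to what the descriptions below call the "widest" part of a violin.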

### Components/Axes

* **Chart Title:** "High School CS" (centered at the top).

* **Y-Axis:**

    * **Label:** "Accuracy" (rotated vertically on the left side).

    * **Scale:** Linear scale from 0.2 to 1.0, with major tick marks at 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Represents five categorical conditions. The labels are positioned below each corresponding violin plot.

    1. **No LLM** (far left, blue violin)

    2. **LLM** (orange violin)

    3. **LLM + Conf (Rand)** (green violin)

    4. **LLM + Conf (Query)** (red violin)

    5. **LLM + Conf (CT)** (far right, purple violin)

* **Reference Line:** A horizontal red dashed line is drawn across the entire chart at an accuracy value of approximately **0.7**. This likely serves as a benchmark or baseline for comparison.

### Detailed Analysis

Each violin plot shows the distribution of accuracy scores for its condition. The internal horizontal lines within each violin typically represent quartiles (e.g., median, 25th, 75th percentiles).

1. **No LLM (Blue):**

    * **Trend/Shape:** The distribution is heavily skewed towards lower accuracy. It is widest (most dense) between approximately 0.2 and 0.5, with a long, thin tail extending up to ~0.9.

    * **Key Values:** The median appears to be around **0.45**. The bulk of the data (interquartile range) lies between ~0.3 and ~0.6.

2. **LLM (Orange):**

    * **Trend/Shape:** The distribution is more symmetric and centered higher than "No LLM." It is widest around 0.6-0.7.

    * **Key Values:** The median is approximately **0.65**. The main density is concentrated between ~0.5 and ~0.8.

3. **LLM + Conf (Rand) (Green):**

    * **Trend/Shape:** The distribution is similar in shape to the "LLM" condition but shifted slightly upward. It is widest between 0.7 and 0.8.

    * **Key Values:** The median is near **0.72**, sitting just above the red reference line. The dense region spans from ~0.6 to ~0.85.

4. **LLM + Conf (Query) (Red):**

    * **Trend/Shape:** This distribution shows a clear upward shift. It is widest in the high-accuracy region between 0.8 and 0.9, indicating a high concentration of top scores.

    * **Key Values:** The median is the highest among all groups, at approximately **0.82**. The interquartile range is roughly 0.7 to 0.9.

5. **LLM + Conf (CT) (Purple):**

    * **Trend/Shape:** Very similar in profile to the "Query" condition, with a high-density peak between 0.8 and 0.9. It may be slightly narrower, suggesting marginally less variance.

    * **Key Values:** The median is also very high, around **0.81**. The distribution is concentrated between ~0.7 and ~0.9.

### Key Observations

* **Clear Performance Hierarchy:** There is a visible, stepwise improvement in accuracy distributions from left to right: `No LLM` < `LLM` < `LLM + Conf (Rand)` < `LLM + Conf (Query)` ≈ `LLM + Conf (CT)`.

* **Impact of Confidence Mechanisms:** All three conditions that pair the LLM with a confidence mechanism ("Conf") have higher medians than the plain "LLM" condition. The "Query" and "CT" methods show the largest gains.

* **Benchmark Comparison:** The red dashed line at ~0.7 accuracy is exceeded by the median of the top three conditions (`Rand`, `Query`, `CT`). The `No LLM` and plain `LLM` conditions have medians below this line.

* **Variability:** The "No LLM" condition shows the greatest spread (from ~0.2 to ~0.9), indicating highly inconsistent performance. The top-performing conditions (`Query`, `CT`) have a tighter spread in the high-accuracy range, indicating more reliable high performance.
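The medians, interquartile ranges, and reference-line comparisons above are visual estimates. With the underlying scores in hand, they could be computed directly, as in the following sketch. The two sample lists are placeholder values chosen only to resemble the description; they are not extracted from the figure, and `summarize` is a hypothetical helper name.

```python
import statistics

REFERENCE = 0.7  # approximate height of the dashed line, as read from the figure


def summarize(scores, reference=REFERENCE):
    """Return the median, interquartile range, and reference-line comparison."""
    q1, _, q3 = statistics.quantiles(scores, n=4)
    median = statistics.median(scores)
    return {
        "median": median,
        "iqr": (q1, q3),
        "range": (min(scores), max(scores)),
        "above_reference": median > reference,
    }


# Placeholder samples for illustration only; NOT data from the figure.
no_llm = [0.25, 0.30, 0.35, 0.40, 0.45, 0.50, 0.55, 0.65, 0.90]
with_conf = [0.70, 0.74, 0.78, 0.80, 0.82, 0.85, 0.88, 0.90, 0.92]

for name, scores in [("No LLM", no_llm), ("LLM + Conf", with_conf)]:
    print(name, summarize(scores))
```

Note that `statistics.quantiles` uses the "exclusive" method by default, so the quartiles it reports may differ slightly from those drawn by a given plotting library.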

### Interpretation

This chart suggests that using LLMs, particularly when augmented with confidence estimation strategies, improves accuracy on high school computer science tasks. The plot alone does not show sample sizes or significance tests, so the readings below are descriptive.

* **The Core Finding:** Relying on no LLM (`No LLM`) leads to low and highly variable accuracy. Introducing a base LLM (`LLM`) provides a substantial and consistent boost.

* **The Value of Confidence:** Not all LLM integrations perform equally. Adding a confidence mechanism (random `Rand`, query-based `Query`, or the method abbreviated as `CT`) raises the median accuracy beyond the plain `LLM` condition, and the `Query` and `CT` variants also concentrate scores more tightly in the high-accuracy range. `Query` and `CT` appear to be the most effective of the three.

* **Practical Implication:** For high school CS tasks of the kind measured here, pairing an LLM with the `Query` or `CT` confidence method is likely to yield the most accurate and reliable results of the five conditions, with medians well above the ~0.7 reference line.