
## Process Diagram: Machine Learning Training Pipeline with Temperature Scheduling and Distillation

### Overview

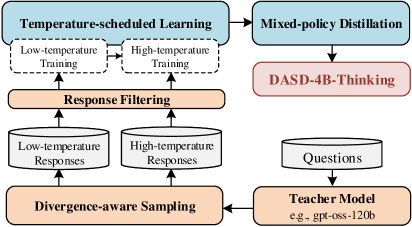

The image is a technical flowchart illustrating a machine learning training pipeline. It depicts a multi-stage process involving temperature-controlled training, response filtering, divergence-aware sampling, and knowledge distillation from a large teacher model. The diagram uses a combination of rectangular process blocks, cylindrical data stores, and directional arrows to show the flow of data and operations.

### Components/Axes

The diagram is organized into two primary vertical sections (left and right) with interconnected flows.

**Left Section (Training & Sampling Loop):**

1. **Top Block:** "Temperature-scheduled Learning"

* Contains two sub-blocks: "Low-temperature Training" and "High-temperature Training".

2. **Middle Block:** "Response Filtering"

* Receives input from the "Temperature-scheduled Learning" block.

3. **Data Stores (Cylinders):**

* "Low-temperature Responses" (left cylinder)

* "High-temperature Responses" (right cylinder)

* Both are connected to the "Response Filtering" block above and the "Divergence-aware Sampling" block below.

4. **Bottom Block:** "Divergence-aware Sampling"

* Receives input from the two response data stores.

* Sends output to the "Teacher Model" in the right section.

**Right Section (Distillation & Inference):**

1. **Top Block:** "Mixed-policy Distillation"

* Receives input from the "Temperature-scheduled Learning" block on the left.

2. **Middle Block:** "DASD-4B-Thinking"

* Receives input from the "Mixed-policy Distillation" block.

3. **Data Store (Cylinder):** "Questions"

* Positioned below "DASD-4B-Thinking".

4. **Bottom Block:** "Teacher Model"

* Contains the text "e.g., gpt-oss-120b".

* Receives input from the "Questions" data store and the "Divergence-aware Sampling" block from the left section.

* Sends output back to the "Divergence-aware Sampling" block, forming a feedback loop.

**Flow Arrows:**

* Arrows indicate the direction of data or process flow between all components.

* A key feedback loop exists from the "Teacher Model" back to "Divergence-aware Sampling".
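The connections listed above can be written down as a small directed graph, which makes the feedback loop easy to check mechanically. This is a minimal sketch: the node names are the labels from the diagram, while the direction of the edges from "Response Filtering" into the two response stores is an assumption based on the description (the text only says they are "connected"). The helper names `build_graph` and `two_node_loops` are hypothetical.

```python
from collections import defaultdict

# Edges (source -> target) as described in the component list above.
# Assumption: "Response Filtering" writes into the two response stores.
EDGES = [
    ("Temperature-scheduled Learning", "Response Filtering"),
    ("Temperature-scheduled Learning", "Mixed-policy Distillation"),
    ("Response Filtering", "Low-temperature Responses"),
    ("Response Filtering", "High-temperature Responses"),
    ("Low-temperature Responses", "Divergence-aware Sampling"),
    ("High-temperature Responses", "Divergence-aware Sampling"),
    ("Divergence-aware Sampling", "Teacher Model"),
    ("Teacher Model", "Divergence-aware Sampling"),
    ("Questions", "Teacher Model"),
    ("Mixed-policy Distillation", "DASD-4B-Thinking"),
]


def build_graph(edges):
    """Return an adjacency list mapping each source node to its targets."""
    graph = defaultdict(list)
    for src, dst in edges:
        graph[src].append(dst)
    return dict(graph)


def two_node_loops(edges):
    """Return sorted node pairs that are connected in both directions."""
    edge_set = set(edges)
    return sorted(
        {tuple(sorted((a, b))) for a, b in edge_set if (b, a) in edge_set}
    )


if __name__ == "__main__":
    for src, targets in build_graph(EDGES).items():
        print(f"{src} -> {', '.join(targets)}")
    print("Feedback loops:", two_node_loops(EDGES))
```

Under these assumptions, the only bidirectional pair is the one between "Divergence-aware Sampling" and "Teacher Model", matching the feedback loop noted above.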

### Detailed Analysis

The diagram outlines an iterative training methodology with three interacting parts:

1. **Core Training Loop (Left Side):** The process begins with "Temperature-scheduled Learning," which contains two training regimes: one at low temperature (likely producing more deterministic, confident outputs) and one at high temperature (producing more random, exploratory outputs). The word "scheduled" suggests the regimes may be applied in sequence, but the diagram does not show their ordering. The outputs from these training phases are filtered ("Response Filtering") and stored separately as "Low-temperature Responses" and "High-temperature Responses." These stored responses are then processed by "Divergence-aware Sampling," which presumably selects data points that are diverse or informative.

2. **Knowledge Distillation Pathway (Right Side):** The "Temperature-scheduled Learning" block also feeds into "Mixed-policy Distillation." This suggests that the knowledge or policies learned under different temperature regimes are combined and distilled. The output of this distillation is a model or component named "DASD-4B-Thinking."

3. **Teacher-Student Interaction:** The "Teacher Model" (exemplified as "gpt-oss-120b") is central to the pipeline. It receives "Questions" (from a dedicated store) and data selected by "Divergence-aware Sampling." The teacher model's outputs are fed back into the "Divergence-aware Sampling" block, creating a closed loop where the teacher's responses inform the selection of new data for training or evaluation.
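To make the low-temperature versus high-temperature distinction concrete, the following sketch shows the standard temperature-scaled softmax and how temperature changes the entropy of the resulting distribution. It illustrates the general technique only; the logits and temperature values are made up for the example and do not come from the diagram.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    """Shannon entropy in nats; higher means a more spread-out distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


# Illustrative values only (assumptions, not taken from the diagram).
logits = [2.0, 1.0, 0.1]
low_temp_probs = softmax_with_temperature(logits, 0.5)
high_temp_probs = softmax_with_temperature(logits, 1.5)

if __name__ == "__main__":
    print("low  T:", [round(p, 3) for p in low_temp_probs], entropy(low_temp_probs))
    print("high T:", [round(p, 3) for p in high_temp_probs], entropy(high_temp_probs))
```

A lower temperature concentrates probability on the highest-scoring option, while a higher temperature flattens the distribution, which is why high-temperature sampling yields more varied responses.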

### Key Observations

* **Explicit Temperature Control:** The pipeline explicitly separates and manages training under low and high temperature conditions, indicating a focus on controlling the entropy or randomness of the model's outputs during learning.

* **Diversity Emphasis:** The component named "Divergence-aware Sampling" highlights a core objective: to actively seek out and utilize diverse data points or model responses, likely to improve robustness and generalization.

* **Large Teacher Model:** The example "gpt-oss-120b" indicates that the teacher is a large language model (on the order of 120 billion parameters, going by its name). It guides the training of the student model "DASD-4B-Thinking," whose name suggests a much smaller model of roughly 4 billion parameters.

* **Iterative Refinement:** The feedback loop from the "Teacher Model" to "Divergence-aware Sampling" indicates an iterative process where the teacher's knowledge continuously refines the data selection for subsequent training cycles.

* **Policy Combination:** The "Mixed-policy Distillation" block implies that strategies or behaviors learned under different conditions (temperatures) are integrated into a single, cohesive policy.
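The diagram does not say how "Divergence-aware Sampling" scores candidates. One possible reading, shown here purely as an illustration, is to rank candidate responses by the KL divergence between a teacher distribution and a student distribution and keep the most divergent ones. Everything in this sketch is an assumption: the scoring rule, the hypothetical helper `select_most_divergent`, the record layout, and the toy probabilities.

```python
import math


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) in nats for two discrete distributions of equal length."""
    return sum(
        pi * math.log((pi + eps) / (qi + eps))
        for pi, qi in zip(p, q)
        if pi > 0
    )


def select_most_divergent(candidates, k):
    """Return the ids of the k candidates with the largest teacher/student KL.

    Assumption: each candidate is a dict with "id", "teacher" and "student"
    keys, where the last two hold probability distributions.
    """
    scored = [
        (kl_divergence(c["teacher"], c["student"]), c["id"]) for c in candidates
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [cid for _, cid in scored[:k]]


# Toy data (hypothetical): the same teacher distribution, three student ones.
candidates = [
    {"id": "a", "teacher": [0.7, 0.2, 0.1], "student": [0.7, 0.2, 0.1]},
    {"id": "b", "teacher": [0.7, 0.2, 0.1], "student": [0.1, 0.2, 0.7]},
    {"id": "c", "teacher": [0.7, 0.2, 0.1], "student": [0.5, 0.3, 0.2]},
]

if __name__ == "__main__":
    print(select_most_divergent(candidates, 2))
```

With this toy data, candidate "b" disagrees most with the teacher and "a" not at all, so a selection of two keeps "b" and "c". Other divergence measures, or diversity-based criteria, would fit the diagram's label equally well.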

### Interpretation

This diagram represents an advanced framework for training a language model (likely the "DASD-4B-Thinking" model) by leveraging a much larger teacher model. The core innovation appears to be the structured use of **temperature scheduling** to generate a spectrum of training data, from conservative to exploratory, and the **divergence-aware sampling** mechanism to select the most valuable data points from this spectrum for the teacher model to evaluate.

The process aims to create a student model that is not just a compressed version of the teacher, but one that has learned to balance confident reasoning (low-temperature behavior) with creative exploration (high-temperature behavior) in a controlled manner. The "Mixed-policy Distillation" step is crucial for fusing these different behavioral modes into the final model. This approach could lead to a model that is more robust, less prone to repetitive or generic outputs, and better at handling ambiguous or novel tasks by effectively managing its own uncertainty. The entire pipeline emphasizes **data quality and diversity** over sheer data quantity, using the teacher model as a guide to filter and refine the learning process.