## Diagram: Temperature-scheduled Learning and Distillation

### Overview

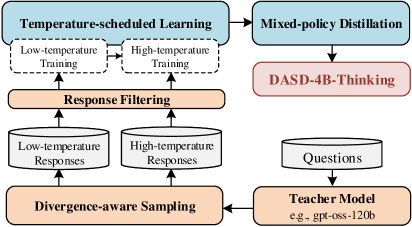

The image is a flowchart of a distillation pipeline. Data flows from a "Questions" dataset and a teacher model, through divergence-aware sampling, response filtering, temperature-scheduled learning, and mixed-policy distillation, to a final "DASD-4B-Thinking" stage.

### Components/Axes

* **Boxes:** Represent processes or modules.

* **Arrows:** Indicate the flow of data or operations.

* **Text Labels:** Describe the function or content of each component.

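* **Colors:** Blue boxes mark the training stages ("Temperature-scheduled Learning", "Mixed-policy Distillation"), orange boxes mark the data-generation stages ("Teacher Model", "Divergence-aware Sampling", "Response Filtering"), and the red box marks the final "DASD-4B-Thinking" stage. White cylinders represent datasets.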

### Detailed Analysis

1. **Temperature-scheduled Learning (Top-Left, Blue Box):**

* Contains two sub-processes: "Low-temperature Training" and "High-temperature Training".

* These sub-processes are represented by white boxes with dashed borders.

* An arrow connects "Low-temperature Training" to "High-temperature Training", indicating a sequential flow.

2. **Mixed-policy Distillation (Top-Right, Blue Box):**

* Receives input from "Temperature-scheduled Learning".

3. **DASD-4B-Thinking (Center-Right, Red Box):**

* Receives input from "Mixed-policy Distillation".

4. **Response Filtering (Center, Orange Box):**

* Receives input from "Low-temperature Responses" and "High-temperature Responses".

* Feeds into both "Low-temperature Training" and "High-temperature Training" within the "Temperature-scheduled Learning" module.

5. **Low-temperature Responses & High-temperature Responses (Center-Left, White Cylinders):**

* Represent data stores or datasets.

* Feed into "Response Filtering".

6. **Divergence-aware Sampling (Bottom-Left, Orange Box):**

* Feeds into both "Low-temperature Responses" and "High-temperature Responses".

* Receives input from the "Teacher Model".

7. **Teacher Model (Bottom-Right, Orange Box):**

* Labeled as "e.g., gpt-oss-120b".

* Receives input from "Questions" (White Cylinder).

* Feeds into "Divergence-aware Sampling".

8. **Questions (Center-Right, White Cylinder):**

* Represents a dataset of questions.

* Feeds into the "Teacher Model".
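
The connections listed above can be transcribed into a small dependency graph to read off the stages in data-flow order. This is only a sketch of the diagram's arrows, not an implementation of any stage. One assumption is labeled in the code: the output of the "Temperature-scheduled Learning" module is taken to be the output of its last sub-stage, "High-temperature Training".

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Each key maps a component to the set of components it receives input from,
# transcribed from the arrows described in the list above.
PREDECESSORS = {
    "Teacher Model": {"Questions"},
    "Divergence-aware Sampling": {"Teacher Model"},
    "Low-temperature Responses": {"Divergence-aware Sampling"},
    "High-temperature Responses": {"Divergence-aware Sampling"},
    "Response Filtering": {
        "Low-temperature Responses",
        "High-temperature Responses",
    },
    "Low-temperature Training": {"Response Filtering"},
    "High-temperature Training": {
        "Response Filtering",
        "Low-temperature Training",
    },
    # Assumption: the module's output is that of its last sub-stage.
    "Mixed-policy Distillation": {"High-temperature Training"},
    "DASD-4B-Thinking": {"Mixed-policy Distillation"},
}


def stage_order(predecessors):
    """Return the components in an order that respects every arrow."""
    return list(TopologicalSorter(predecessors).static_order())


order = stage_order(PREDECESSORS)
print(" -> ".join(order))
```

The two response datasets have no arrow between them, so their relative position in the printed order is arbitrary.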

### Key Observations

* The flow described here is a feed-forward pipeline rather than a cycle: "Response Filtering" feeds forward into the "Temperature-scheduled Learning" module, and none of the listed arrows returns to an earlier stage.

* The "Questions" dataset is the entry point. The "Teacher Model" receives the questions, and its output passes through divergence-aware sampling into the low- and high-temperature response sets, which are then screened by response filtering.

* Temperature-scheduled learning is divided into low and high temperature training stages.

### Interpretation

The diagram depicts a knowledge distillation pipeline in which a "Teacher Model" (e.g., gpt-oss-120b) generates responses to a set of questions, and those responses are used to train a student model. "Divergence-aware Sampling" likely determines which teacher responses are collected at low and high sampling temperatures, and "Response Filtering" likely removes unsuitable responses before training. Training then proceeds in two stages, low-temperature followed by high-temperature, and is followed by mixed-policy distillation. The final "DASD-4B-Thinking" box most plausibly denotes the resulting student model rather than a separate processing step, although the diagram does not say so explicitly. Since low-temperature sampling yields more deterministic outputs and high-temperature sampling more diverse ones, the two-stage order suggests a curriculum that moves from consistent teacher responses to more varied ones.
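
To make the low- versus high-temperature distinction concrete, the sketch below applies the standard temperature-scaled softmax to a set of logits. It assumes that "temperature" in the diagram refers to the usual sampling temperature, which the diagram itself does not confirm. The logits and the two temperature values are hypothetical and are not taken from the figure.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for three candidate tokens.
logits = [2.0, 1.0, 0.0]

low = softmax_with_temperature(logits, 0.5)   # sharper distribution
high = softmax_with_temperature(logits, 1.5)  # flatter distribution

print("low temperature: ", [round(p, 3) for p in low])
print("high temperature:", [round(p, 3) for p in high])
```

A lower temperature concentrates probability on the top-scoring token, so sampled responses vary less. A higher temperature spreads probability across alternatives, so sampled responses are more diverse.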