## CPU Pipeline Diagram: In-order Frontend with Out-of-order Execution

### Overview

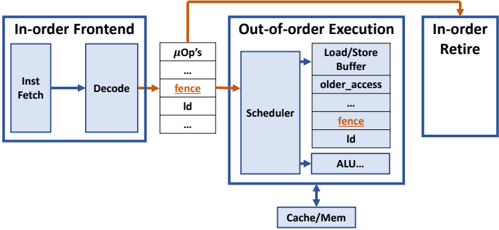

The image is a technical block diagram illustrating a modern CPU microarchitecture pipeline. It depicts the flow of instructions from fetch through execution to retirement, highlighting a hybrid design that combines an in-order frontend with an out-of-order execution core and an in-order retirement stage. The diagram uses blue-bordered boxes for major units, black text for labels, and orange highlights for specific control elements ("fence") and key data flow arrows.

### Components

The diagram is organized into three primary, horizontally-aligned blocks, with supporting components below and between them.

1. **Left Block: "In-order Frontend"**

    * Contains two sub-blocks: "Inst Fetch" (left) and "Decode" (right).

    * A blue arrow points from "Inst Fetch" to "Decode", indicating the initial in-order instruction flow.

2. **Central Block: "Out-of-order Execution"**

    * This is the largest block, containing several key components:

        * **Scheduler**: A large vertical box on the left side of this block.

        * **Functional Units/Queues**: A stack of boxes to the right of the Scheduler:

            * "Load/Store Buffer" (top)

            * "older_access"

            * "fence" (text in orange)

            * "ld"

            * "ALU..." (the ellipsis indicates additional, unspecified units like FPUs, Branch Units, etc.)

    * A double-headed blue arrow connects the bottom of this block to a separate "Cache/Mem" box below it, indicating load/store communication with the memory hierarchy.

3. **Right Block: "In-order Retire"**

    * A simple, empty box representing the final stage where instructions are committed in program order.

4. **Connecting Elements:**

    * **μOp's Queue**: Positioned between the "In-order Frontend" and "Out-of-order Execution" blocks. It is a vertical stack containing:

        * "μOp's" (at the top)

        * "..."

        * "fence" (text in orange)

        * "ld"

        * "..."

    * **Data Flow Arrows**:

        * A blue arrow flows from the "Decode" stage into the "μOp's" queue.

        * A prominent **orange arrow** flows from the "μOp's" queue (specifically from the "fence" entry) into the "Scheduler" within the Out-of-order Execution block.

        * Another **orange arrow** flows from the "Out-of-order Execution" block (originating near the "fence" entry within it) to the "In-order Retire" block.

### Detailed Analysis

The diagram explicitly labels the instruction flow and key micro-operations (μOps).

* **Instruction Flow Path**: `Inst Fetch` -> `Decode` -> `μOp's Queue` -> `Scheduler` -> (Various Execution Units: Load/Store, ALU, etc.) -> `In-order Retire`.

* **Memory Interaction**: The `Out-of-order Execution` unit has a dedicated, bidirectional link to `Cache/Mem`, crucial for load/store operations (`ld`, `Load/Store Buffer`).

* **Special Control Instruction - "fence"**: The "fence" instruction is highlighted in orange in two critical locations:

1. In the `μOp's` queue, indicating it has been decoded and is waiting for execution.

2. Within the `Out-of-order Execution` block's list of units/operations, indicating it is being processed by the scheduler or a dedicated unit to enforce memory ordering.

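* **Ambiguity/Placeholder Text**: The ellipses ("...") in the `μOp's` queue and after "ALU" indicate that the lists are not exhaustive. "older_access" is not defined in the diagram; given its position above "fence" and "ld" in the stack, it likely refers either to a memory access that is older than the fence in program order or to the mechanism that tracks such accesses to enforce memory ordering.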
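The ordering relationship among `older_access`, `fence`, and `ld` can be sketched as a toy model. This is only an illustration under stated assumptions: the diagram does not specify the fence semantics, so the sketch assumes a simple full-fence rule, treats `older_access` as a store, and uses hypothetical names (`MemOp`, `can_issue`) that do not appear in the diagram.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MemOp:
    name: str           # label from the diagram, e.g. "older_access"
    kind: str           # "load", "store", or "fence" (hypothetical tags)
    done: bool = False  # True once the operation has completed


def can_issue(buffer: List[MemOp], index: int) -> bool:
    """Apply a simple full-fence rule to buffer[index].

    `buffer` is ordered oldest first, i.e. in program order.
    """
    op = buffer[index]
    older = buffer[:index]
    if op.kind == "fence":
        # The fence waits until every older entry has completed.
        return all(o.done for o in older)
    # Loads and stores may not pass an older fence that is still pending.
    return all(o.done for o in older if o.kind == "fence")


buffer = [
    MemOp("older_access", "store"),  # assumed to be a store; the diagram does not say
    MemOp("fence", "fence"),
    MemOp("ld", "load"),
]
print([can_issue(buffer, i) for i in range(3)])  # [True, False, False]
```

In this model the `ld` becomes eligible only after `older_access` and then `fence` have both completed, which is one plausible reading of the stacked entries in the diagram.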

### Key Observations

1. **Hybrid Pipeline Design**: The diagram labels each stage with its ordering discipline. The frontend (fetch/decode) and the retire stage are **in-order**, while the execution core between them is **out-of-order**. This is the classic arrangement for exploiting instruction-level parallelism while preserving precise exceptions and architectural state.

2. **Fence Instruction Prominence**: "fence" and "ld" are the only μOps named in both the queue and the execution block, and only "fence" is color-coded. This emphasizes its role in serializing memory operations within an otherwise out-of-order engine.

3. **Scheduler-Centric Execution**: The `Scheduler` is the central hub within the out-of-order block, responsible for dispatching ready μOps to the appropriate functional units (`Load/Store Buffer`, `ALU`, etc.).

4. **Memory Hierarchy Integration**: The `Cache/Mem` block is drawn as a separate entity connected directly to the execution core, which suggests that memory accesses are issued from within the out-of-order core and that the `Load/Store Buffer` and `older_access` entries are where their ordering and latency are managed.
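The scheduler's role described in observation 3 can be illustrated with a minimal sketch of one dispatch cycle. The diagram does not describe the selection policy, so this assumes an oldest-first scan, one μOp per functional unit per cycle, and hypothetical names (`MicroOp`, `dispatch_cycle`, the `"LSU"` unit tag).

```python
from dataclasses import dataclass
from typing import Iterable, List, Set, Tuple


@dataclass(frozen=True)
class MicroOp:
    name: str
    unit: str                      # functional unit required, e.g. "ALU"
    sources: Tuple[str, ...] = ()  # names of μOps whose results this one reads


def dispatch_cycle(waiting: List[MicroOp], completed: Set[str],
                   free_units: Iterable[str]) -> List[MicroOp]:
    """Select the μOps to issue this cycle, scanning oldest first."""
    available = set(free_units)
    issued = []
    for uop in waiting:
        ready = all(src in completed for src in uop.sources)
        if ready and uop.unit in available:
            issued.append(uop)
            available.remove(uop.unit)  # one μOp per unit per cycle
    return issued


waiting = [
    MicroOp("ld_a", "LSU"),
    MicroOp("add_b", "ALU", sources=("ld_a",)),  # must wait for the load
    MicroOp("mul_c", "ALU"),                     # independent of the load
]
issued = dispatch_cycle(waiting, completed=set(), free_units=["LSU", "ALU"])
print([u.name for u in issued])  # ['ld_a', 'mul_c']
```

Here `mul_c` issues ahead of the older `add_b` because `add_b` is still waiting on the load. That reordering is the out-of-order behavior the central block represents.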

### Interpretation

This diagram is a conceptual model of a high-performance CPU core, likely from a modern superscalar processor. It visually explains how the CPU achieves high throughput:

* **The "Why" of the Design**: The in-order frontend fetches and decodes instructions in program order, which keeps that stage simple. The out-of-order execution engine hides memory latency and maximizes utilization of execution units by running independent instructions ahead of stalled ones. The in-order retire unit ensures the architectural state is updated in program order and exceptions are handled precisely.

* **The Role of the "Fence"**: The highlighted "fence" represents a memory barrier instruction. Its placement shows it must be scheduled and executed to order memory accesses (`ld`, `Load/Store Buffer` operations) around it, preventing incorrect results in multi-threaded or DMA scenarios. The orange arrows trace its special control path.

* **Implied Complexity**: The ellipses ("...") and labels like "older_access" hint at substantial underlying complexity that is not shown, including branch prediction, register renaming, reorder buffers, and the dependency-checking logic that makes out-of-order execution possible.

* **Overall Message**: The diagram communicates that modern performance relies on a careful balance: breaking program order for speed (out-of-order execution) while meticulously restoring it for correctness (in-order retirement and fences).
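To make the "restore program order at retirement" idea concrete, here is a small sketch of in-order retirement over out-of-order completion. The diagram shows the retire stage only as an empty box, so the queue-based mechanism below (similar in spirit to a reorder buffer) and the name `retire_in_order` are assumptions made for illustration.

```python
from collections import deque
from typing import List, Sequence


def retire_in_order(program_order: Sequence[str],
                    completion_order: Sequence[str]) -> List[List[str]]:
    """Return what retires after each completion event.

    An instruction retires only when it has finished AND every older
    instruction has already retired.
    """
    pending = deque(program_order)  # oldest instruction at the left
    finished = set()
    batches = []
    for name in completion_order:
        finished.add(name)
        batch = []
        # Drain from the head while the oldest pending instruction is finished.
        while pending and pending[0] in finished:
            batch.append(pending.popleft())
        batches.append(batch)
    return batches


program = ["older_access", "fence", "ld", "add"]
completed = ["add", "older_access", "fence", "ld"]  # "add" finishes first
print(retire_in_order(program, completed))
# [[], ['older_access'], ['fence'], ['ld', 'add']]
```

Although `add` completes first, it retires last. Concatenating the batches reproduces the original program order, which is the property the "In-order Retire" block stands for.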