## Diagram: AI Explainability Enhancement Flowchart

### Overview

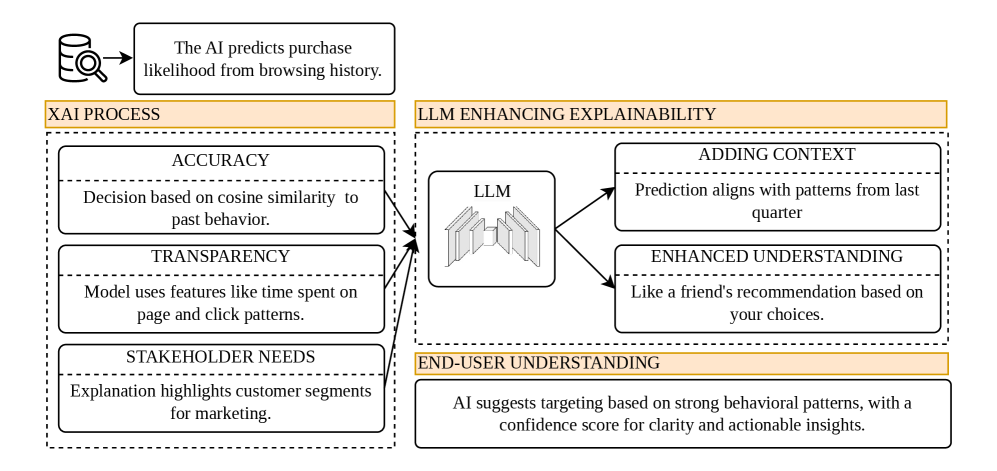

This image is a flowchart diagram illustrating a process for enhancing the explainability of an AI system that predicts purchase likelihood. The diagram shows how a core "XAI (Explainable AI) Process" is augmented by a Large Language Model (LLM) to produce outputs that are understandable to end-users. The flow moves from left to right, starting with an AI prediction task and culminating in actionable insights for a user.

### Components

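The diagram is organized into four labeled regions: an initial input, two dashed-line sections side by side ("XAI PROCESS" and "LLM ENHANCING EXPLAINABILITY"), and a final output box. Arrows indicate a left-to-right flow that ends at the output box in the bottom right.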

1. **Initial Input (Top-Left):**

   * **Icon:** A database cylinder with a magnifying glass.

   * **Text Box:** "The AI predicts purchase likelihood from browsing history."

2. **Left Section - "XAI PROCESS" (Orange Header):**

   * This section is enclosed in a dashed-line box and contains three stacked components.

   * **Component 1 (Top):**

     * **Title:** ACCURACY

     * **Description:** "Decision based on cosine similarity to past behavior."

   * **Component 2 (Middle):**

     * **Title:** TRANSPARENCY

     * **Description:** "Model uses features like time spent on page and click patterns."

   * **Component 3 (Bottom):**

     * **Title:** STAKEHOLDER NEEDS

     * **Description:** "Explanation highlights customer segments for marketing."

3. **Right Section - "LLM ENHANCING EXPLAINABILITY" (Orange Header):**

   * This section is also enclosed in a dashed-line box and shows the LLM's role.

   * **Central Component:** A box labeled "LLM" containing a stylized icon of stacked books or documents.

   * **Arrows:** Two arrows point from the "LLM" box to two output boxes on its right.

   * **Output Box 1 (Top):**

     * **Title:** ADDING CONTEXT

     * **Description:** "Prediction aligns with patterns from last quarter"

   * **Output Box 2 (Bottom):**

     * **Title:** ENHANCED UNDERSTANDING

     * **Description:** "Like a friend's recommendation based on your choices."

4. **Final Output (Bottom-Right) - "END-USER UNDERSTANDING" (Orange Header):**

   * A single, wide text box at the bottom of the diagram.

   * **Text:** "AI suggests targeting based on strong behavioral patterns, with a confidence score for clarity and actionable insights."

5. **Flow Connections:**

   * Three arrows originate from the right side of the "XAI PROCESS" box (one from each of its three components) and converge, pointing into the left side of the "LLM" box.

   * Two arrows originate from the right side of the "LLM" box, pointing to the "ADDING CONTEXT" and "ENHANCED UNDERSTANDING" boxes.

   * A final arrow flows from the "LLM ENHANCING EXPLAINABILITY" section down to the "END-USER UNDERSTANDING" box.
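The ACCURACY box attributes the model's decision to "cosine similarity to past behavior," and the TRANSPARENCY box names time spent on page and click patterns as features. The diagram does not show how these are computed, so the following is only a minimal sketch of the cosine similarity measure itself. The feature layout and the sample vectors are hypothetical, chosen purely for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length numeric vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        # Convention chosen for this sketch: a zero vector matches nothing.
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical features: [minutes on product page, clicks, items viewed]
past_buyer_profile = [4.0, 12.0, 3.0]
current_session = [2.0, 6.0, 1.5]    # same proportions, half the magnitude
unrelated_session = [0.0, 0.0, 5.0]  # activity in only one feature

similar = cosine_similarity(past_buyer_profile, current_session)
dissimilar = cosine_similarity(past_buyer_profile, unrelated_session)
```

Because cosine similarity compares direction rather than magnitude, the shorter session that follows the same browsing proportions as the past-buyer profile scores higher than the session whose activity is concentrated in a single feature.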

### Detailed Analysis

The diagram presents a conceptual pipeline, not a data chart. The information is entirely textual and relational.

* **Process Flow:** The core XAI process (focused on Accuracy, Transparency, and Stakeholder Needs) provides the foundational explainability. This output is then processed by an LLM.

* **LLM Function:** The LLM acts as an enhancer and translator. It takes the technical explanations from the XAI process and adds two key layers:

  1. **Contextualization:** It frames the prediction within recent historical patterns ("last quarter").

  2. **Analogy/Relatability:** It translates the logic into a human-understandable analogy ("like a friend's recommendation").

* **Final Transformation:** The combined output is synthesized into a final, user-centric form: a targeting suggestion that includes a confidence score, making it both clear and actionable.
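The hand-off described above, from the three XAI components to the LLM and on to its two outputs, can be sketched in code. This is an illustrative outline under stated assumptions, not something the diagram specifies: the class names, the prompt wording, the `CONTEXT:`/`ANALOGY:` reply format, and `stub_llm` (a stand-in for a real model call) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class XAIExplanation:
    """The three components of the XAI PROCESS section."""
    accuracy: str
    transparency: str
    stakeholder_needs: str


@dataclass
class EnhancedExplanation:
    """The two outputs of the LLM section."""
    context: str
    analogy: str


def build_prompt(x: XAIExplanation) -> str:
    # Hypothetical prompt wording; the diagram does not define one.
    return (
        "Rewrite this model explanation for a non-technical reader.\n"
        f"- Accuracy: {x.accuracy}\n"
        f"- Transparency: {x.transparency}\n"
        f"- Stakeholder needs: {x.stakeholder_needs}\n"
        "Reply with two lines: 'CONTEXT: ...' and 'ANALOGY: ...'"
    )


def enhance(x: XAIExplanation, llm: Callable[[str], str]) -> EnhancedExplanation:
    """Send the technical explanation to an LLM and parse its two-line reply."""
    fields = {}
    for line in llm(build_prompt(x)).splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip().upper()] = value.strip()
    return EnhancedExplanation(fields.get("CONTEXT", ""), fields.get("ANALOGY", ""))


def stub_llm(prompt: str) -> str:
    # Stand-in for a real model call; returns the diagram's example texts.
    return (
        "CONTEXT: Prediction aligns with patterns from last quarter\n"
        "ANALOGY: Like a friend's recommendation based on your choices."
    )


xai = XAIExplanation(
    accuracy="Decision based on cosine similarity to past behavior.",
    transparency="Model uses features like time spent on page and click patterns.",
    stakeholder_needs="Explanation highlights customer segments for marketing.",
)
enhanced = enhance(xai, stub_llm)
```

Passing the model in as a plain callable keeps the sketch independent of any particular LLM provider; a real client would replace `stub_llm`.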

### Key Observations

* **Dual-Layer Explanation:** The diagram explicitly separates the technical, model-centric explanation (XAI Process) from the user-centric, contextualized explanation (LLM Enhancement).

* **Stakeholder Focus:** The "STAKEHOLDER NEEDS" component within the XAI process indicates the initial explanation is already tailored for a specific audience (marketing), which the LLM then further refines for an end-user.

* **Action-Oriented Output:** The final box emphasizes "actionable insights," moving beyond mere explanation to prescribed action.

### Interpretation

This diagram argues that raw explainability from an AI model (the XAI Process) is insufficient for effective human understanding and decision-making. It posits that a Large Language Model is a crucial intermediary layer that can bridge the gap between technical model behavior and practical, business-ready insight.

The flow suggests a value chain: **Data → Prediction → Technical Explanation → Contextualized & Analogical Explanation → Actionable Insight.** The LLM's role is not to explain the model's inner workings more accurately, but to make the existing explanation more **comprehensible, relevant, and useful** for a non-technical end-user. The inclusion of a "confidence score" in the final output is a key detail because it communicates uncertainty: it conveys not just *what* the AI recommends but *how sure* it is, which matters for trust and decision-making. The overall message is that effective AI deployment requires not just predictive accuracy, but a deliberate pipeline for transforming model outputs into human-centric understanding.
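As a small illustration of the final step, the sketch below attaches a confidence score to a recommendation. The diagram does not say how the score is computed or displayed, so the percentage format, the 0.8 and 0.5 thresholds, the labels, and the sample recommendation text are all assumptions made for this example.

```python
def format_insight(recommendation: str, confidence: float) -> str:
    """Render a recommendation together with a human-readable confidence."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # Hypothetical thresholds; a real system would calibrate these.
    if confidence >= 0.8:
        label = "high"
    elif confidence >= 0.5:
        label = "moderate"
    else:
        label = "low"
    return f"{recommendation} (confidence: {confidence:.0%}, {label})"


message = format_insight("Target customers with strong behavioral patterns", 0.87)
```

Pairing the number with a coarse label is one way to keep the score readable for a non-technical audience.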