
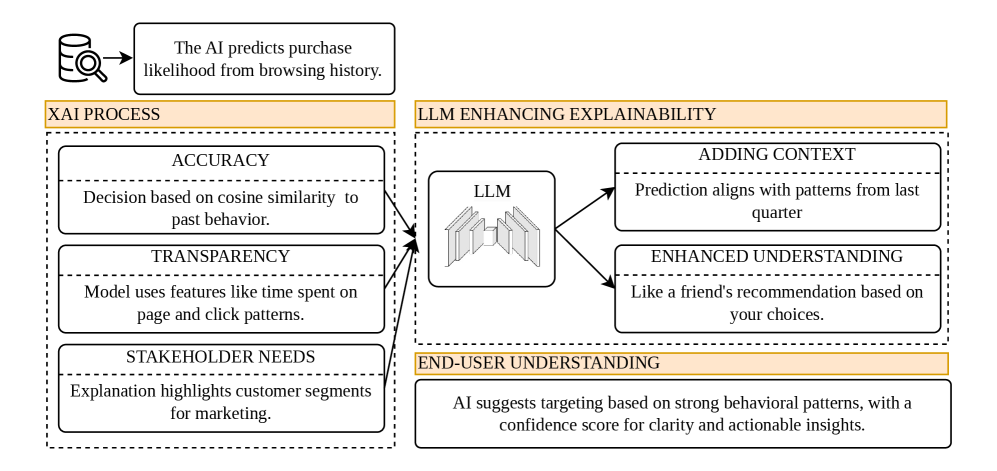

## Diagram: XAI Process with LLM Enhancement

### Overview

This diagram illustrates a process for Explainable AI (XAI) enhanced by a Large Language Model (LLM). The diagram is divided into two main sections: "XAI PROCESS" on the left and "LLM ENHANCING EXPLAINABILITY" on the right. Arrows indicate the flow of information from the XAI process to the LLM, and then to enhanced understanding and context.

### Components/Axes

The diagram consists of rectangular blocks representing different stages or aspects of the process. Key labels include:

* **XAI PROCESS:** The overall process of making AI decisions explainable.

* **LLM ENHANCING EXPLAINABILITY:** The role of the LLM in improving the clarity of AI explanations.

* **ACCURACY:** Described as "Decision based on cosine similarity to past behavior."

* **TRANSPARENCY:** Described as "Model uses features like time spent on page and click patterns."

* **STAKEHOLDER NEEDS:** Described as "Explanation highlights customer segments for marketing."

* **ADDING CONTEXT:** Described as "Prediction aligns with patterns from last quarter."

* **ENHANCED UNDERSTANDING:** Described as "Like a friend's recommendation based on your choices."

* **END-USER UNDERSTANDING:** Described as "AI suggests targeting based on strong behavioral patterns, with a confidence score for clarity and actionable insights."

* **LLM:** Represented by a stacked block diagram.

* An icon of a magnifying glass over a data point, with the text "The AI predicts purchase likelihood from browsing history."

### Detailed Analysis or Content Details

Information flows from the XAI process to the LLM, and from the LLM to the end user.

* **XAI PROCESS:** This section contains three blocks:

  * **ACCURACY:** The decision-making process relies on cosine similarity to past behavior.

  * **TRANSPARENCY:** The model utilizes features such as time spent on a page and click patterns.

  * **STAKEHOLDER NEEDS:** The explanation focuses on customer segments for marketing purposes.

* **LLM ENHANCING EXPLAINABILITY:** This section contains:

  * An LLM block, visually represented as a stacked structure.

  * **ADDING CONTEXT:** The LLM adds context by aligning predictions with patterns from the last quarter.

  * **ENHANCED UNDERSTANDING:** The LLM provides understanding similar to a friend's recommendation.

  * **END-USER UNDERSTANDING:** The LLM delivers insights for targeting based on behavioral patterns, including a confidence score.

* Arrows connect the XAI process blocks to the LLM block, and then from the LLM block to the "ADDING CONTEXT" and "ENHANCED UNDERSTANDING" blocks. A final arrow connects these to "END-USER UNDERSTANDING".
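The ACCURACY block refers to cosine similarity against past behavior but the diagram gives no formula or data. The following is a minimal sketch of that measure, not the diagram's actual model: the function name, the three-element feature layout, and the example values are all hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        # Convention chosen for this sketch: a zero vector matches nothing.
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical feature layout: [minutes on page, clicks, pages viewed].
current_session = [4.0, 12.0, 3.0]
past_buyer_profile = [5.0, 10.0, 4.0]

score = cosine_similarity(current_session, past_buyer_profile)
print(f"similarity to past behavior: {score:.2f}")
```

A score near 1 means the current session points in nearly the same direction as the stored profile. Cosine similarity ignores vector magnitude, so a short session and a long one with the same proportions score alike.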

### Key Observations

The diagram emphasizes the importance of not only making AI decisions accurate and transparent but also tailoring explanations to stakeholder needs and providing end-user understanding. The LLM acts as a bridge between the technical details of the AI model and the practical application of its insights. The use of phrases like "like a friend's recommendation" suggests a focus on making AI explanations more relatable and intuitive.

### Interpretation

The diagram illustrates a workflow for improving the interpretability of AI predictions. The XAI process focuses on the technical aspects of explainability (accuracy, transparency), while the LLM enhances this by adding context and making the explanations more understandable for stakeholders and end users. The LLM doesn't just *explain* the AI's decision; it *translates* it into a form that is more meaningful and actionable. The inclusion of a "confidence score" in the end-user understanding suggests a desire to quantify the reliability of the AI's insights. Overall, the diagram suggests a shift from simply building accurate AI models to building AI systems that can effectively communicate their reasoning to humans. The diagram is conceptual: it describes a process and contains no numerical data.
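One way to picture the hand-off from the XAI blocks to the LLM is as a prompt assembled from the XAI outputs. The sketch below is an assumption about how that could look, not something the diagram specifies: the `XaiOutput` fields, the `build_explanation_prompt` helper, and the prompt wording are hypothetical, and no LLM is actually called.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class XaiOutput:
    """Hypothetical container for what the XAI process hands to the LLM."""
    prediction: str           # what the model predicted
    similarity: float         # e.g. cosine similarity to past behavior
    top_features: List[str]   # features the model relied on
    audience: str             # who the explanation is for


def build_explanation_prompt(x: XaiOutput) -> str:
    """Turn technical XAI output into a plain-language request for an LLM."""
    features = ", ".join(x.top_features)
    return (
        f"Prediction: {x.prediction}\n"
        f"Similarity to past behavior: {x.similarity:.2f}\n"
        f"Features used: {features}\n"
        f"Audience: {x.audience}\n"
        "Explain this prediction in plain language, relate it to recent "
        "patterns, and state the confidence score."
    )


# Example values echo the diagram's labels; the number is made up.
example = XaiOutput(
    prediction="likely to purchase",
    similarity=0.98,
    top_features=["time spent on page", "click patterns"],
    audience="marketing team",
)
prompt = build_explanation_prompt(example)
print(prompt)
```

Keeping the technical values in the prompt, instead of asking the LLM to infer them, is what lets the final explanation quote a confidence score that traces back to the model.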