## Flowchart: AI Purchase Likelihood Prediction and Explainability Enhancement System

### Overview

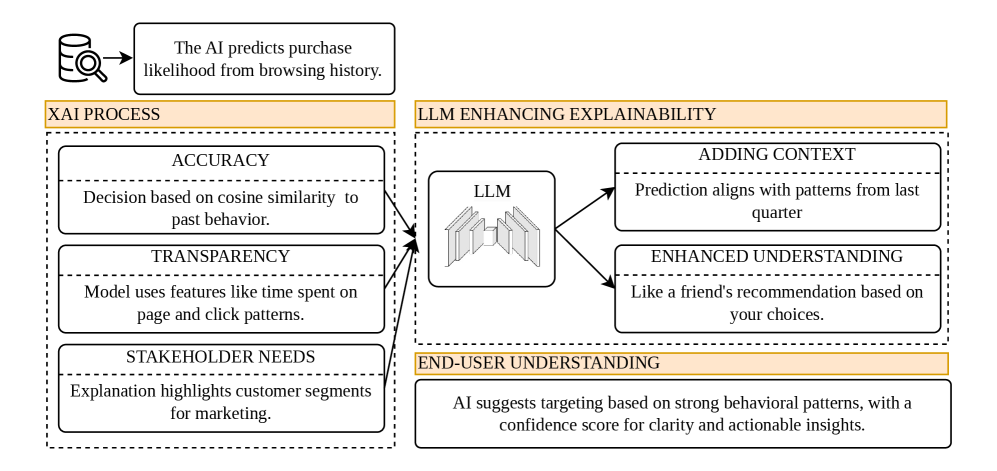

The diagram shows a two-stage process for an AI system that predicts purchase likelihood from browsing history and then uses a large language model (LLM) to make the explanation of each prediction easier to understand. It emphasizes technical accuracy, transparency, and end-user understanding.

### Components

1. **XAI PROCESS** (Left Column)

   - **ACCURACY**: "Decision based on cosine similarity to past behavior."

   - **TRANSPARENCY**: "Model uses features like time spent on page and click patterns."

   - **STAKEHOLDER NEEDS**: "Explanation highlights customer segments for marketing."

2. **LLM ENHANCING EXPLAINABILITY** (Right Column)

   - **ADDING CONTEXT**: "Prediction aligns with patterns from last quarter."

   - **ENHANCED UNDERSTANDING**: "Like a friend’s recommendation based on your choices."

3. **END-USER UNDERSTANDING** (Bottom Right)

   - "AI suggests targeting based on strong behavioral patterns, with a confidence score for clarity and actionable insights."

4. **Visual Elements**

   - **Arrows**: Connect XAI PROCESS → LLM → END-USER UNDERSTANDING, indicating the direction of the workflow.

   - **Icons**:

     - Magnifying glass (search): represents the AI prediction.

     - Stacked papers (LLM): represents the contextual enhancement.

   - **Dashed Boxes**: Highlight the key evaluation criteria (Accuracy, Transparency, Stakeholder Needs).
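The ACCURACY and TRANSPARENCY boxes together describe a prediction scored by cosine similarity over behavioral features. A minimal Python sketch of that idea follows, assuming each session is summarized as a numeric feature vector and compared with the average of the user's past sessions. The feature names in `FEATURES` and the `feature_contributions` helper are hypothetical, not taken from the diagram. Because cosine similarity is a normalized dot product, it splits exactly into one additive term per feature, which is one simple way to expose which behaviors drove a score.

```python
import math
from typing import Dict, Sequence

# Hypothetical feature set: the diagram names time on page and click
# patterns but does not give an exact list or order.
FEATURES = ["seconds_on_page", "clicks", "product_views"]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length feature vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # undefined for a zero vector; treated here as "no similarity"
    return dot / (norm_a * norm_b)


def feature_contributions(a: Sequence[float], b: Sequence[float]) -> Dict[str, float]:
    """Split the cosine similarity into one additive term per feature.

    cos(a, b) = sum_i a_i * b_i / (|a| * |b|), so each term shows how much a
    single feature raises (positive) or lowers (negative) the score.
    """
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        return {name: 0.0 for name in FEATURES[: len(a)]}
    return {name: x * y / norm for name, x, y in zip(FEATURES, a, b)}


if __name__ == "__main__":
    # Illustrative values only; features are assumed to be standardized already.
    session = [0.8, 1.2, 0.3]  # current browsing session
    history = [0.7, 1.0, 0.5]  # average of the user's past sessions
    print(f"similarity = {cosine_similarity(session, history):.3f}")
    for name, part in feature_contributions(session, history).items():
        print(f"  {name}: {part:+.3f}")
```

The example values are assumed to be standardized (for instance, as z-scores). With raw units, `seconds_on_page` would be orders of magnitude larger than click counts and would dominate both the score and its per-feature breakdown.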

### Detailed Analysis

- **XAI PROCESS**: Focuses on technical rigor (cosine similarity) and interpretability (time-on-page, click patterns). Stakeholder needs are tied to marketing segmentation.

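- **LLM Integration**: Adds temporal context (last-quarter patterns) and analogical reasoning (a friend’s recommendation) to make the explanation easier to follow. This improves the clarity of the explanation rather than the transparency of the prediction model itself.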

- **End-User Output**: Combines behavioral patterns with a confidence score so that the recommendation is actionable.
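The LLM stage receives the XAI output and adds context and an analogy. The diagram does not show how that hand-off works, so the following is only one possible design: a hypothetical `XaiExplanation` record and `build_explanation_prompt` function that place the already-computed facts into a prompt and instruct the model not to add reasons of its own. The actual model call is left out because no LLM provider or API is named.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class XaiExplanation:
    """Hypothetical container for the XAI stage's output."""
    similarity: float        # cosine similarity to past behavior
    top_features: List[str]  # features that contributed most to the score
    segment: str             # customer segment used for marketing


def build_explanation_prompt(exp: XaiExplanation, history_context: str) -> str:
    """Assemble a prompt asking an LLM for context and a plain-language analogy.

    Only facts computed upstream are passed in, so the LLM is asked to
    rephrase the explanation rather than invent new reasons.
    """
    features = ", ".join(exp.top_features)
    return (
        "Rewrite this model explanation for a non-technical reader.\n"
        f"- Similarity to the customer's past behavior: {exp.similarity:.2f}\n"
        f"- Most influential features: {features}\n"
        f"- Customer segment: {exp.segment}\n"
        f"- Historical context: {history_context}\n"
        "Use one everyday analogy, such as a friend's recommendation based on "
        "past choices, and do not add reasons that are not listed above."
    )


if __name__ == "__main__":
    exp = XaiExplanation(0.93, ["clicks", "seconds_on_page"], "frequent browsers")
    print(build_explanation_prompt(exp, "Prediction aligns with patterns from last quarter."))
```

Passing the XAI facts explicitly keeps the LLM in the role the diagram gives it (adding context and analogy to an existing explanation) instead of generating a new explanation from scratch.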

### Key Observations

- **Flow Direction**: Linear progression from technical model evaluation (XAI) to contextual enhancement (LLM) to user-facing output.

- **No Numerical Data**: The diagram emphasizes qualitative explanations over quantitative metrics.

- **Confidence Score**: Explicitly mentioned as a clarity aid for end users, though no numerical range is given.
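Because the diagram names a confidence score without a range, any concrete mapping is an assumption. The sketch below assumes the score is derived from the cosine similarity by rescaling it from [-1, 1] to [0, 1], then bucketed with placeholder thresholds (`high=0.8`, `medium=0.6`) to produce an end-user message modeled on the diagram's wording. All function names are hypothetical.

```python
def confidence_from_similarity(similarity: float) -> float:
    """Linearly map a cosine similarity in [-1, 1] to a confidence in [0, 1]."""
    clipped = max(-1.0, min(1.0, similarity))  # guard against floating-point drift
    return (clipped + 1.0) / 2.0


def confidence_label(confidence: float, high: float = 0.8, medium: float = 0.6) -> str:
    """Bucket a confidence score; the thresholds are placeholder assumptions."""
    if confidence >= high:
        return "high"
    if confidence >= medium:
        return "medium"
    return "low"


def end_user_message(similarity: float) -> str:
    """Format a short end-user message that includes the confidence score."""
    conf = confidence_from_similarity(similarity)
    return (
        "AI suggests targeting based on behavioral patterns "
        f"(confidence {conf:.0%}, {confidence_label(conf)})."
    )


if __name__ == "__main__":
    print(end_user_message(0.9))
```

This rescaled value is a readable summary of similarity, not a calibrated probability of purchase; producing a calibrated probability would require labeled purchase outcomes.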

### Interpretation

The system prioritizes **explainability** at every stage:

1. **Technical Accuracy**: Bases predictions on cosine similarity between current and past behavior, so each prediction can be traced back to the user's history.

2. **Contextual Relevance**: The LLM adds temporal context (e.g., quarterly patterns), placing each prediction within longer-term behavior rather than presenting it in isolation.

3. **User-Centric Design**: Translates technical outputs into relatable analogies (friend’s recommendation) and quantifies confidence to build trust.
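The "Prediction aligns with patterns from last quarter" context implies a comparison between current behavior and an earlier period. One way to produce that sentence as a grounded fact for the LLM, assuming sessions are tagged with hypothetical quarter labels such as `"2024-Q1"`, is to average each quarter's feature vectors and compare the current session with the previous quarter's profile. The 0.9 threshold and all function names are illustrative assumptions.

```python
import math
from collections import defaultdict
from typing import Dict, List, Optional, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return 0.0 if na == 0 or nb == 0 else dot / (na * nb)


def quarterly_profiles(sessions: List[Tuple[str, List[float]]]) -> Dict[str, List[float]]:
    """Average the feature vectors of all sessions that share a quarter label."""
    grouped: Dict[str, List[List[float]]] = defaultdict(list)
    for quarter, vector in sessions:
        grouped[quarter].append(vector)
    # zip(*vecs) walks the vectors column by column, giving a per-feature mean.
    return {q: [sum(col) / len(vecs) for col in zip(*vecs)] for q, vecs in grouped.items()}


def temporal_context(
    current: List[float],
    sessions: List[Tuple[str, List[float]]],
    last_quarter: str,
    threshold: float = 0.9,
) -> Optional[str]:
    """Return a context sentence for the LLM, or None if that quarter has no data."""
    profiles = quarterly_profiles(sessions)
    if last_quarter not in profiles:
        return None
    sim = cosine_similarity(current, profiles[last_quarter])
    if sim >= threshold:
        return f"Prediction aligns with patterns from {last_quarter} (similarity {sim:.2f})."
    return f"Current behavior differs from {last_quarter} (similarity {sim:.2f})."


if __name__ == "__main__":
    history = [("2024-Q1", [1.0, 0.0]), ("2024-Q1", [3.0, 0.0]), ("2024-Q2", [0.0, 1.0])]
    print(temporal_context([5.0, 0.0], history, "2024-Q1"))
```

Computing this comparison outside the LLM and passing the resulting sentence in as input means the temporal claim rests on data rather than on the model's own recall.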

This architecture suggests a hybrid approach in which traditional explainable AI (XAI) methods are augmented with LLM capabilities to bridge the gap between model complexity and stakeholder comprehension. Because the diagram specifies no numerical thresholds, these are presumably left open for domain-specific calibration.