## Line Graphs: BFCL V3 Training and Evaluation Reward

### Overview

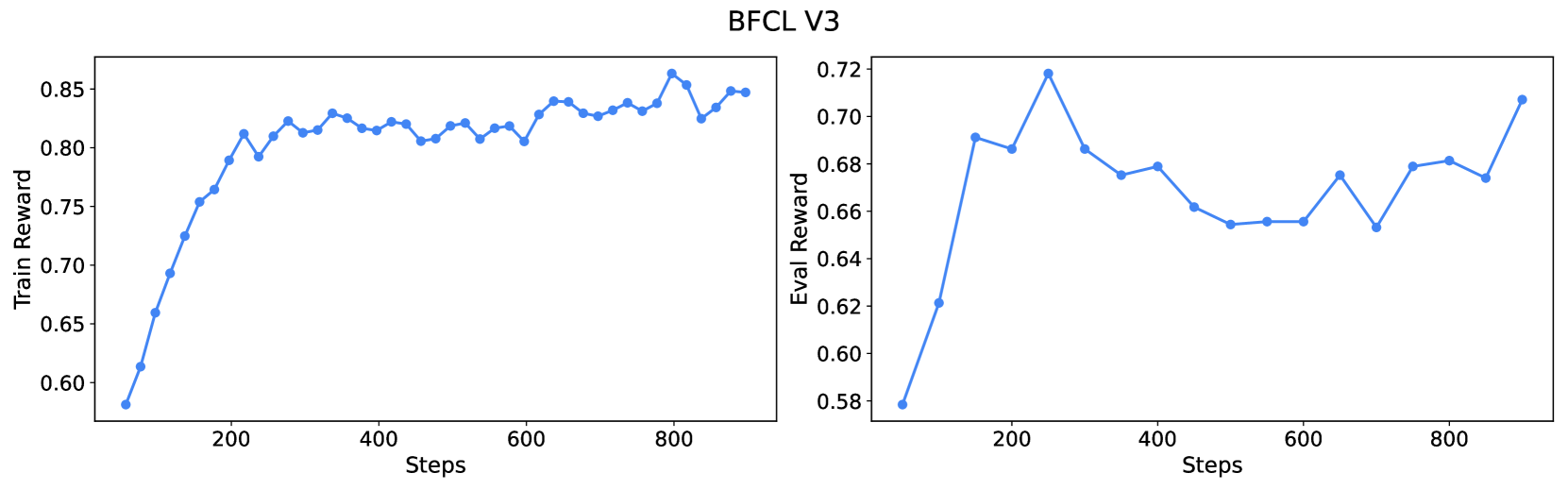

The image contains two side-by-side line graphs titled "BFCL V3". The left graph plots "Train Reward" against training steps, and the right graph plots "Eval Reward". Both cover steps 0–800: train reward rises steadily, while eval reward jumps early and then fluctuates.

### Components/Axes

- **Left Graph (Train Reward)**:

  - **Y-axis**: "Train Reward" (range: 0.58–0.85)

  - **X-axis**: "Steps" (0–800)

  - **Line**: Blue, steady upward trend with minor fluctuations.

- **Right Graph (Eval Reward)**:

  - **Y-axis**: "Eval Reward" (range: 0.58–0.72)

  - **X-axis**: "Steps" (0–800)

  - **Line**: Blue, volatile with sharp peaks and troughs.

### Detailed Analysis

- **Left Graph (Train Reward)**:

  - Starts at ~0.58 at step 0.

  - Gradually increases to ~0.85 by step 800.

  - Minor dips observed around steps 300–400 and 600–700, but overall upward trajectory.

- **Right Graph (Eval Reward)**:

  - Begins at ~0.58 at step 0.

  - Sharp spike to ~0.72 at step 200.

  - Fluctuates between ~0.64 and ~0.72 until step 800, ending at ~0.71.

### Key Observations

1. **Train Reward**: Consistent improvement over time, suggesting effective model learning.

2. **Eval Reward**: Early peak at step 200 (~0.72), followed by instability. The final value (~0.71) lags behind the final training reward (~0.85), which may indicate overfitting or evaluation noise.

3. **Divergence**: By step 800, training reward exceeds evaluation reward by ~0.14, highlighting a gap between training and generalization performance.
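The gap figure above can be checked with a few lines of arithmetic. The sketch below uses only the approximate endpoint readings quoted in this description (step 0 and step 800); the dictionary layout and the `reward_gap` helper are illustrative assumptions, not part of any BFCL tooling, and the values are visual estimates rather than logged data.

```python
# Approximate readings taken from the description above (visual estimates,
# not exact logged values). The structure and names here are hypothetical.
readings = {
    0: {"train": 0.58, "eval": 0.58},
    800: {"train": 0.85, "eval": 0.71},
}


def reward_gap(point):
    """Return train reward minus eval reward, rounded to two decimals."""
    return round(point["train"] - point["eval"], 2)


gap_start = reward_gap(readings[0])
gap_end = reward_gap(readings[800])

# Total change in each curve between the first and last step.
train_gain = round(readings[800]["train"] - readings[0]["train"], 2)
eval_gain = round(readings[800]["eval"] - readings[0]["eval"], 2)

print(f"gap at step 0:   {gap_start:.2f}")
print(f"gap at step 800: {gap_end:.2f}")
print(f"train gain:      {train_gain:.2f}")
print(f"eval gain:       {eval_gain:.2f}")
```

With these readings the gap grows from 0.00 to 0.14, and the train reward gains roughly twice as much as the eval reward (0.27 versus 0.13) over the 800 steps.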

### Interpretation

The data suggests that the model's reward on BFCL V3 improves steadily during training, while its evaluation reward is less consistent. The sharp early spike in evaluation reward may reflect an outlier or temporary alignment with the evaluation metric. Both curves start at ~0.58, so the ~0.14 gap between training and evaluation rewards at step 800 opened up during training, which implies the model may be overfitting to the training data or having difficulty generalizing to unseen data. Investigating the complexity of the evaluation dataset, or applying regularization techniques, could help explain or reduce this divergence.