## Bar Chart: Model Performance Comparison (Generation vs. Multiple-choice)

### Overview

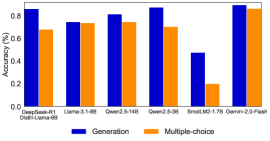

This bar chart compares six language models on two task formats: "Generation" and "Multiple-choice". The y-axis is labeled "Accuracy (%)", but its tick values run from 0.0 to approximately 0.9, so the plotted values are fractions rather than percentages. Each model has a pair of bars: blue for "Generation" and orange for "Multiple-choice".

### Components/Axes

* **Chart Type**: Vertical grouped bar chart.
* **Y-axis**:
  * **Title**: Accuracy (%)
  * **Scale**: 0.0 to approximately 0.9 (fractional values, despite the "%" in the title).
  * **Major Tick Markers**: 0.0, 0.2, 0.4, 0.6, 0.8.
* **X-axis**:
  * **Title**: None explicitly stated; the categories are language models.
  * **Categories (Models)**:
    1. DeepSeek-R1, with "Distil-Llama-8B" directly below it (most likely one wrapped label for the single model DeepSeek-R1-Distill-Llama-8B)
    2. Llama-3.1-8B
    3. Qwen2.5-14B
    4. Qwen2.5-3B
    5. SmolLM2-1.7B
    6. Gemini-2.0-Flash
* **Legend**: Located at the bottom-center of the chart.
  * **Blue square**: Generation
  * **Orange square**: Multiple-choice

### Detailed Analysis

The chart presents pairs of bars for each model, showing their "Generation" (blue) and "Multiple-choice" (orange) accuracy.

1. **DeepSeek-R1 (Distil-Llama-8B)**:
   * Generation (Blue): Approximately 0.85 (85%)
   * Multiple-choice (Orange): Approximately 0.68 (68%)
   * *Trend*: Generation is notably higher than Multiple-choice, by about 0.17.
2. **Llama-3.1-8B**:
   * Generation (Blue): Approximately 0.75 (75%)
   * Multiple-choice (Orange): Approximately 0.74 (74%)
   * *Trend*: The two scores are nearly identical, with Generation about 0.01 higher.
3. **Qwen2.5-14B**:
   * Generation (Blue): Approximately 0.81 (81%)
   * Multiple-choice (Orange): Approximately 0.75 (75%)
   * *Trend*: Generation is higher than Multiple-choice, by about 0.06.
4. **Qwen2.5-3B**:
   * Generation (Blue): Approximately 0.86 (86%)
   * Multiple-choice (Orange): Approximately 0.70 (70%)
   * *Trend*: Generation is significantly higher than Multiple-choice, by about 0.16.
5. **SmolLM2-1.7B**:
   * Generation (Blue): Approximately 0.48 (48%)
   * Multiple-choice (Orange): Approximately 0.20 (20%)
   * *Trend*: Both scores are the lowest among all models, and Generation is more than double the Multiple-choice score.
6. **Gemini-2.0-Flash**:
   * Generation (Blue): Approximately 0.88 (88%)
   * Multiple-choice (Orange): Approximately 0.85 (85%)
   * *Trend*: This model has the highest score on both tasks, with Generation about 0.03 above Multiple-choice.
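The readings above can be collected into a small data structure and redrawn as a grouped bar chart. The following is a minimal sketch, not the code that produced the original figure: the `SCORES` dictionary and `plot_grouped_bars` function are hypothetical names, the values are the approximate readings listed above, and the model names `DeepSeek-R1-Distill-Llama-8B` and `Llama-3.1-8B` are an assumed reading of the x-axis labels. Plotting assumes matplotlib is installed.

```python
# Approximate (generation, multiple_choice) accuracies read off the chart.
# Values are fractions in [0, 1], matching the chart's tick labels.
SCORES = {
    "DeepSeek-R1-Distill-Llama-8B": (0.85, 0.68),  # assumed full model name
    "Llama-3.1-8B": (0.75, 0.74),                  # assumed reading of the label
    "Qwen2.5-14B": (0.81, 0.75),
    "Qwen2.5-3B": (0.86, 0.70),
    "SmolLM2-1.7B": (0.48, 0.20),
    "Gemini-2.0-Flash": (0.88, 0.85),
}


def plot_grouped_bars(scores, out_path="comparison.png"):
    """Draw one blue/orange bar pair per model and save the figure to out_path."""
    # Imported lazily so the data above is usable without matplotlib.
    import matplotlib
    matplotlib.use("Agg")  # render to a file; no display needed
    import matplotlib.pyplot as plt

    models = list(scores)
    positions = list(range(len(models)))
    width = 0.38

    fig, ax = plt.subplots(figsize=(9, 4))
    ax.bar([p - width / 2 for p in positions],
           [scores[m][0] for m in models],
           width, label="Generation", color="tab:blue")
    ax.bar([p + width / 2 for p in positions],
           [scores[m][1] for m in models],
           width, label="Multiple-choice", color="tab:orange")

    ax.set_xticks(positions)
    ax.set_xticklabels(models, rotation=20, ha="right")
    ax.set_ylabel("Accuracy")  # fractional scale, so no "%" here
    ax.set_ylim(0.0, 0.9)
    # Legend below the plot area, as in the original chart.
    ax.legend(loc="upper center", bbox_to_anchor=(0.5, -0.3), ncol=2)
    fig.tight_layout()
    fig.savefig(out_path)
    plt.close(fig)
```

Calling `plot_grouped_bars(SCORES)` writes `comparison.png` to the working directory. Because the inputs are visual estimates, the redrawn bars only approximate the original figure.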

### Key Observations

* **Overall Performance**: Gemini-2.0-Flash consistently achieves the highest accuracy in both Generation (~0.88) and Multiple-choice (~0.85) tasks.

* **Lowest Performance**: SmolLM2-1.7B shows the lowest accuracy for both Generation (~0.48) and Multiple-choice (~0.20), indicating it performs significantly worse than the other models presented.

* **Generation vs. Multiple-choice**: For all six models, "Generation" accuracy (blue bars) is higher than "Multiple-choice" accuracy (orange bars), by margins ranging from about 0.01 to about 0.28. There is no instance where "Multiple-choice" surpasses "Generation".

* **Performance Gap**: The largest gaps between Generation and Multiple-choice are in SmolLM2-1.7B (0.48 vs 0.20, about 0.28) and DeepSeek-R1 (0.85 vs 0.68, about 0.17), followed closely by Qwen2.5-3B (0.86 vs 0.70, about 0.16).

* **Closest Performance**: Llama-3.1-8B (0.75 vs 0.74) and Gemini-2.0-Flash (0.88 vs 0.85) show the smallest differences between the two tasks. Since the values are visual estimates, a difference of 0.01 is within reading error.

* **Ambiguous Label**: "Distil-Llama-8B" appears directly below "DeepSeek-R1" on the x-axis, and no other model has a second label line. The chart does not explain the relationship, but the most likely reading is a single wrapped label for the model DeepSeek-R1-Distill-Llama-8B.
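The gap and ranking claims in this list can be checked with a few lines of arithmetic. This is a minimal sketch using the approximate readings from the Detailed Analysis; `SCORES`, `gaps`, and `rank_by_gap` are hypothetical names introduced for illustration, and the two expanded model names are an assumed reading of the x-axis labels.

```python
# Approximate (generation, multiple_choice) accuracies read off the chart.
SCORES = {
    "DeepSeek-R1-Distill-Llama-8B": (0.85, 0.68),  # assumed full model name
    "Llama-3.1-8B": (0.75, 0.74),                  # assumed reading of the label
    "Qwen2.5-14B": (0.81, 0.75),
    "Qwen2.5-3B": (0.86, 0.70),
    "SmolLM2-1.7B": (0.48, 0.20),
    "Gemini-2.0-Flash": (0.88, 0.85),
}


def gaps(scores):
    """Return generation minus multiple-choice accuracy per model."""
    # Round to two decimals: the inputs are only read to that precision.
    return {model: round(gen - mc, 2) for model, (gen, mc) in scores.items()}


def rank_by_gap(scores):
    """Return (model, gap) pairs sorted from largest to smallest gap."""
    return sorted(gaps(scores).items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for model, gap in rank_by_gap(SCORES):
        print(f"{model:<30} {gap:+.2f}")
```

With these readings, SmolLM2-1.7B has the largest gap (0.28) and Llama-3.1-8B the smallest (0.01). The ordering of DeepSeek-R1 (0.17) and Qwen2.5-3B (0.16) depends on a 0.01 difference and should not be treated as reliable.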

### Interpretation

The data suggests that the evaluated models score at least as well when they produce an answer freely ("Generation") as when they select from provided options ("Multiple-choice"). This could mean the models are more proficient at open-ended generation. It could also mean the two formats are scored differently or differ in difficulty, since the chart does not state how either task is evaluated.
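One way to summarize this pattern is to average each task's accuracy across the six models. The sketch below assumes the approximate bar readings from the Detailed Analysis; the list names are hypothetical. It treats every model equally and says nothing about how the tasks were scored.

```python
from statistics import mean

# Approximate accuracies read off the chart, in x-axis order:
# DeepSeek-R1, Llama-3.1-8B (assumed label), Qwen2.5-14B, Qwen2.5-3B,
# SmolLM2-1.7B, Gemini-2.0-Flash.
GENERATION = [0.85, 0.75, 0.81, 0.86, 0.48, 0.88]
MULTIPLE_CHOICE = [0.68, 0.74, 0.75, 0.70, 0.20, 0.85]

mean_generation = mean(GENERATION)
mean_multiple_choice = mean(MULTIPLE_CHOICE)

# Count the models for which generation is strictly higher.
generation_wins = sum(g > m for g, m in zip(GENERATION, MULTIPLE_CHOICE))

if __name__ == "__main__":
    print(f"mean generation:      {mean_generation:.2f}")
    print(f"mean multiple-choice: {mean_multiple_choice:.2f}")
    print(f"generation higher in {generation_wins} of {len(GENERATION)} models")
```

The means come out to roughly 0.77 for Generation and 0.65 for Multiple-choice. SmolLM2-1.7B pulls both averages down, and its low Multiple-choice score accounts for a large share of the difference between them.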

Gemini-2.0-Flash is the top-performing model on both metrics. Conversely, SmolLM2-1.7B scores lowest on both, which is consistent with its name indicating the smallest parameter count (1.7B) among the models whose names state a size.

The relatively small difference between Generation and Multiple-choice scores for Llama-3.1-8B and Gemini-2.0-Flash might suggest a more balanced proficiency across the two task formats. In contrast, DeepSeek-R1 and Qwen2.5-3B show a more pronounced advantage in generation, which could point to architectural or training differences, although the chart alone cannot confirm a cause. Across this set of models, "Generation" is consistently at least as good as "Multiple-choice".