## Process Diagram: AI Interpretability Improvement

### Overview

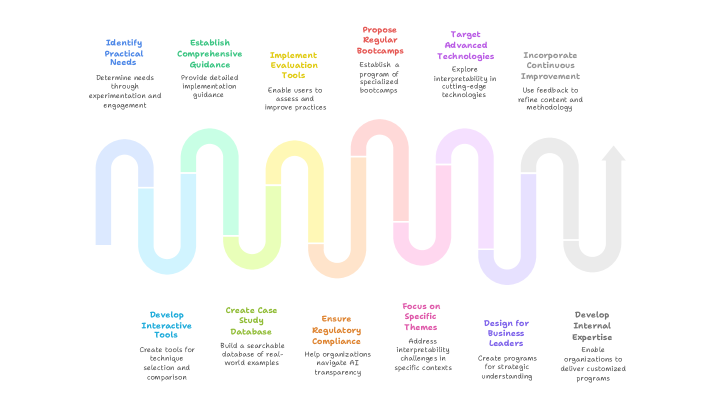

The image is a process diagram illustrating a series of steps to improve AI interpretability. The process is represented as a winding path, starting with identifying practical needs and ending with developing internal expertise. Each step is associated with a specific action and a brief description.

### Components

The diagram consists of the following components:

1. **Title:** There is no overall title for the diagram.

2. **Steps:** The process is divided into twelve steps, each represented by a colored section along the winding path.

3. **Step Titles:** Each step has a title, indicating the action to be taken.

4. **Step Descriptions:** Each step has a brief description, providing more detail about the action.

5. **Winding Path:** A winding path connects the steps, visually representing the flow of the process. The path starts at the top-left and ends with an arrow pointing upwards on the right.

6. **Colors:** Each step is associated with a specific color. In step order, the colors are: blue, green, yellow, orange, pink, purple, light blue, light green, light orange, light pink, light purple, and grey.

### Detailed Analysis

The process steps, their descriptions, and associated colors are as follows:

1. **Identify Practical Needs** (Blue): "Determine needs through experimentation and engagement." Located at the top-left.

2. **Establish Comprehensive Guidance** (Green): "Provide detailed implementation guidance." Located at the top, to the right of the first step.

3. **Implement Evaluation Tools** (Yellow): "Enable users to assess and improve practices." Located at the top, to the right of the second step.

4. **Propose Regular Bootcamps** (Orange): "Establish a program of specialized bootcamps." Located at the top, to the right of the third step.

5. **Target Advanced Technologies** (Pink): "Explore interpretability in cutting-edge technologies." Located at the top, to the right of the fourth step.

6. **Incorporate Continuous Improvement** (Purple): "Use feedback to refine content and methodology." Located at the top-right.

7. **Develop Interactive Tools** (Light Blue): "Create tools for technique selection and comparison." Located at the bottom-left.

8. **Create Case Study Database** (Light Green): "Build a searchable database of real-world examples." Located at the bottom, to the right of the seventh step.

9. **Ensure Regulatory Compliance** (Light Orange): "Help organizations navigate AI transparency." Located at the bottom, to the right of the eighth step.

10. **Focus on Specific Themes** (Light Pink): "Address interpretability challenges in specific contexts." Located at the bottom, to the right of the ninth step.

11. **Design for Business Leaders** (Light Purple): "Create programs for strategic understanding." Located at the bottom, to the right of the tenth step.

12. **Develop Internal Expertise** (Grey): "Enable organizations to deliver customized programs." Located at the bottom-right.

### Key Observations

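* The process starts with identifying practical needs and ends with developing internal expertise. The path ends in an arrow rather than looping back to the first step, so the diagram reads as a progression; the iterative element is explicit only in step 6, "Incorporate Continuous Improvement".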

* Per the positions listed above, steps 1 through 6 run along the top of the path and steps 7 through 12 along the bottom, so the winding path links the two rows into one continuous flow.

* The color scheme provides a visual cue for distinguishing the steps and understanding their sequence.

### Interpretation

The diagram outlines a structured approach to improving AI interpretability within an organization. It emphasizes understanding practical needs, providing guidance, implementing tools, and continuously improving the process. Steps such as "Ensure Regulatory Compliance" and "Focus on Specific Themes" show that the approach addresses the contextual side of AI interpretability as well as the technical side. Although the path is drawn as a single sequence, the "Incorporate Continuous Improvement" step suggests that organizations should keep revisiting and refining their approach to AI interpretability.