## Diagram: Comparison of Knowledge Graphs (KGs) and Large Language Models (LLMs)

### Overview

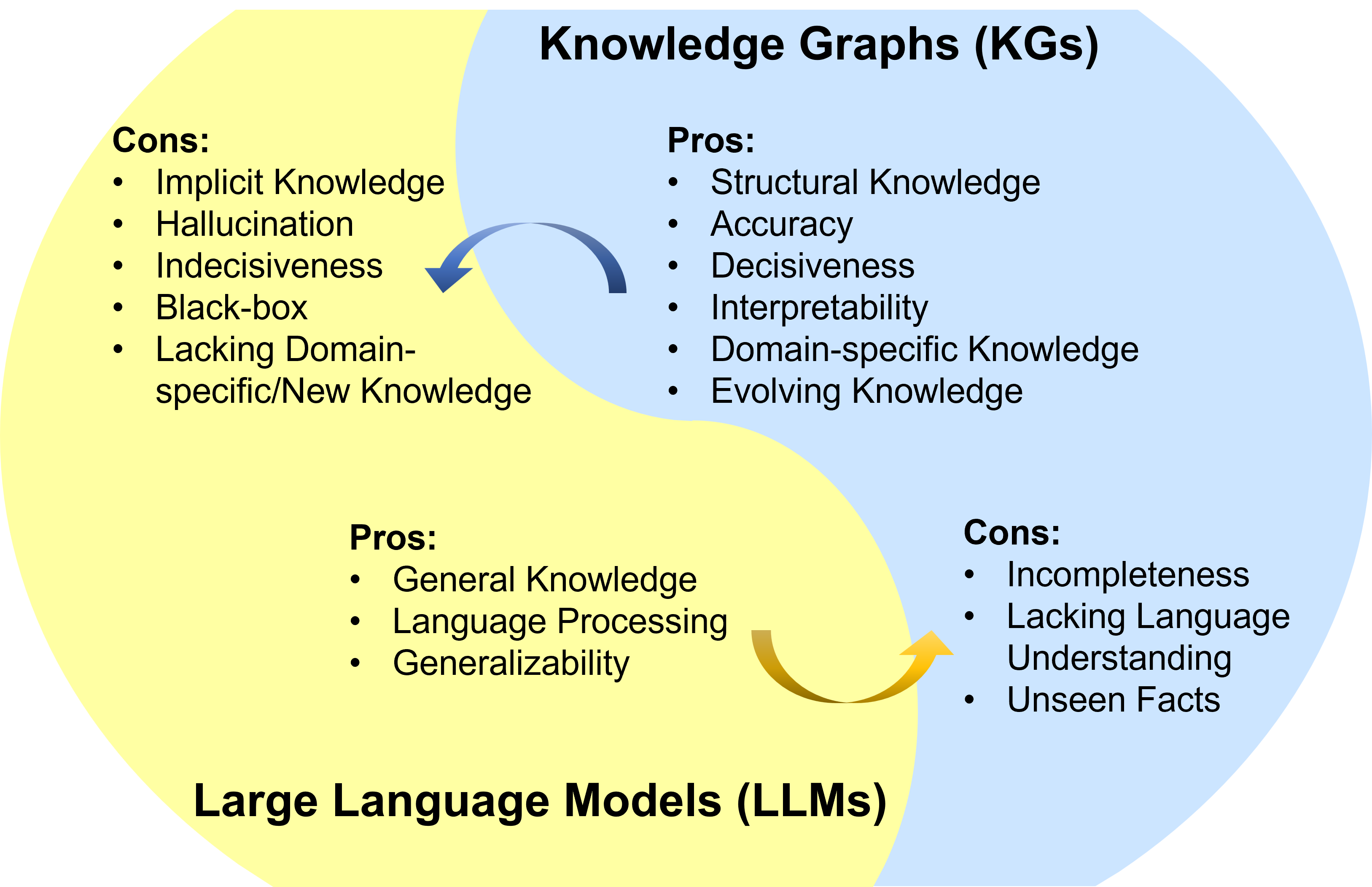

The image is a Venn diagram comparing the characteristics of Knowledge Graphs (KGs) and Large Language Models (LLMs). It visually presents the pros and cons of each technology and suggests a complementary relationship between them through directional arrows.

### Components/Axes

The diagram consists of two large, overlapping circles on a light gray background.

* **Top Circle (Light Blue):** Labeled **"Knowledge Graphs (KGs)"** at the top center.

* **Bottom Circle (Yellow):** Labeled **"Large Language Models (LLMs)"** at the bottom center.

* **Overlapping Region:** The intersection of the two circles is a lighter blue shade.

* **Arrows:**

    * A **blue curved arrow** originates from the KGs circle (near its "Pros" list) and points towards the LLMs circle (near its "Cons" list).

    * A **yellow curved arrow** originates from the LLMs circle (near its "Pros" list) and points towards the KGs circle (near its "Cons" list).

### Detailed Analysis

The textual content is organized into "Pros" and "Cons" lists within each circle.

**1. Knowledge Graphs (KGs) - Blue Circle (Top)**

* **Pros (Listed in the upper-right section of the blue circle):**

    * Structural Knowledge

    * Accuracy

    * Decisiveness

    * Interpretability

    * Domain-specific Knowledge

    * Evolving Knowledge

* **Cons (Listed in the lower-right section of the blue circle, within the overlap):**

    * Incompleteness

    * Lacking Language Understanding

    * Unseen Facts

**2. Large Language Models (LLMs) - Yellow Circle (Bottom)**

* **Pros (Listed in the lower-left section of the yellow circle, within the overlap):**

    * General Knowledge

    * Language Processing

    * Generalizability

* **Cons (Listed in the upper-left section of the yellow circle):**

    * Implicit Knowledge

    * Hallucination

    * Indecisiveness

    * Black-box

    * Lacking Domain-specific/New Knowledge

### Key Observations

* **Spatial Organization:** The "Cons" for KGs and the "Pros" for LLMs are placed within the overlapping region of the Venn diagram, suggesting these are the areas where the two technologies intersect or where one's characteristics are most relevant to the other's weaknesses.

* **Directional Relationship:** The arrows explicitly map a relationship of potential mitigation. The blue arrow suggests KGs' strengths (e.g., Structural Knowledge, Accuracy) can address LLMs' weaknesses (e.g., Hallucination, Black-box). The yellow arrow suggests LLMs' strengths (e.g., Language Processing, Generalizability) can address KGs' weaknesses (e.g., Incompleteness, Lacking Language Understanding).

* **Contrasting Pairs:** Several entries read as direct opposites, such as KGs' "Accuracy" vs. LLMs' "Hallucination," KGs' "Interpretability" vs. LLMs' "Black-box," and KGs' "Decisiveness" vs. LLMs' "Indecisiveness."
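The lists and the direction of the blue arrow can be written down as a small data structure, which makes it easy to check which weaknesses have a plausible counterpart. The sketch below is only illustrative: the `Technology` class, the `unmatched_cons` helper, and in particular the item-by-item pairing in `KG_PRO_TO_LLM_CON` are assumptions of this example. The diagram itself connects whole lists with arrows and does not pair individual items.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Technology:
    """One circle of the diagram: a name plus its Pros and Cons lists."""
    name: str
    pros: Tuple[str, ...]
    cons: Tuple[str, ...]


# Labels copied from the diagram text.
KG = Technology(
    name="Knowledge Graphs (KGs)",
    pros=("Structural Knowledge", "Accuracy", "Decisiveness",
          "Interpretability", "Domain-specific Knowledge", "Evolving Knowledge"),
    cons=("Incompleteness", "Lacking Language Understanding", "Unseen Facts"),
)
LLM = Technology(
    name="Large Language Models (LLMs)",
    pros=("General Knowledge", "Language Processing", "Generalizability"),
    cons=("Implicit Knowledge", "Hallucination", "Indecisiveness",
          "Black-box", "Lacking Domain-specific/New Knowledge"),
)

# ASSUMPTION: this one-to-one reading of the blue arrow (KG pro -> LLM con)
# is an interpretation made for this sketch, not something the diagram states.
KG_PRO_TO_LLM_CON: Dict[str, str] = {
    "Structural Knowledge": "Implicit Knowledge",
    "Accuracy": "Hallucination",
    "Decisiveness": "Indecisiveness",
    "Interpretability": "Black-box",
    "Domain-specific Knowledge": "Lacking Domain-specific/New Knowledge",
    "Evolving Knowledge": "Lacking Domain-specific/New Knowledge",
}


def unmatched_cons(source: Technology, target: Technology,
                   pairing: Dict[str, str]) -> List[str]:
    """Return the target's cons that no pro of the source is paired with."""
    covered = {con for pro, con in pairing.items() if pro in source.pros}
    return [con for con in target.cons if con not in covered]


print(unmatched_cons(KG, LLM, KG_PRO_TO_LLM_CON))
```

Under this assumed pairing every LLM weakness has at least one KG strength pointing at it, so the helper returns an empty list. A different pairing would give a different answer, which is why the mapping is kept separate from the transcribed lists.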

### Interpretation

This diagram presents a **comparative and synergistic analysis** of two foundational AI technologies. It argues that KGs and LLMs have fundamentally different, often inverse, strengths and weaknesses.

* **Core Argument:** The diagram suggests that neither technology is a complete solution on its own. KGs provide structured, accurate, and interpretable knowledge but are incomplete, lack language understanding, and do not cover unseen facts. LLMs offer general knowledge, language processing, and generalizability but hold their knowledge implicitly, are prone to generating incorrect information (hallucinations), and operate as opaque "black boxes."

* **Proposed Synergy:** The arrows are the most critical interpretive element. They propose a **bidirectional integration** where each technology can compensate for the other's flaws. A hybrid system could use a KG to ground an LLM's responses in factual, structured data, reducing hallucinations and improving interpretability. Conversely, an LLM could help populate and query a KG and provide a natural-language interface to it, helping to overcome its incompleteness and lack of language understanding.

* **Underlying Message:** The diagram advocates moving beyond viewing these technologies in isolation: their effective application lies in their relationship and combination. The visual layout implies that robust AI systems may emerge from the overlapping region, combining the decisive, structured knowledge of graphs with the generalizable language capabilities of large models.
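One direction of the integration described above, using a KG to ground LLM output, can be sketched with a toy triple store. Everything here is hypothetical and chosen for illustration: the `TOY_KG` contents, the `check_claim` function, and the assumption that each predicate allows only one object per subject. A real system would also need a step that extracts triples from free text, which is omitted.

```python
from typing import Set, Tuple

Triple = Tuple[str, str, str]  # (subject, predicate, object)

# Hypothetical toy knowledge graph; a real KG would be far larger.
TOY_KG: Set[Triple] = {
    ("Paris", "capital_of", "France"),
    ("Berlin", "capital_of", "Germany"),
}


def check_claim(claim: Triple, kg: Set[Triple]) -> str:
    """Classify a claimed triple against the KG.

    ASSUMPTION: predicates are functional (one object per subject), so a
    stored triple with the same subject and predicate but a different
    object counts as a contradiction.
    """
    subject, predicate, _ = claim
    if claim in kg:
        return "supported"
    if any(s == subject and p == predicate for s, p, _ in kg):
        return "contradicted"
    # The KG has nothing on this subject/predicate: it may simply be incomplete.
    return "unknown"


# Triples standing in for statements extracted from an LLM response.
for claim in [
    ("Paris", "capital_of", "France"),
    ("Paris", "capital_of", "Germany"),
    ("Rome", "capital_of", "Italy"),
]:
    print(claim, "->", check_claim(claim, TOY_KG))
```

The three outcomes line up with the diagram's lists: "supported" and "contradicted" reflect the KG's accuracy and decisiveness acting on possible hallucinations, while "unknown" exposes the KG's incompleteness. Claims in that last group are candidates for the opposite direction, where LLM output is reviewed and used to extend the graph.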