## Venn Diagram: Comparison of Knowledge Graphs (KGs) and Large Language Models (LLMs)

### Overview

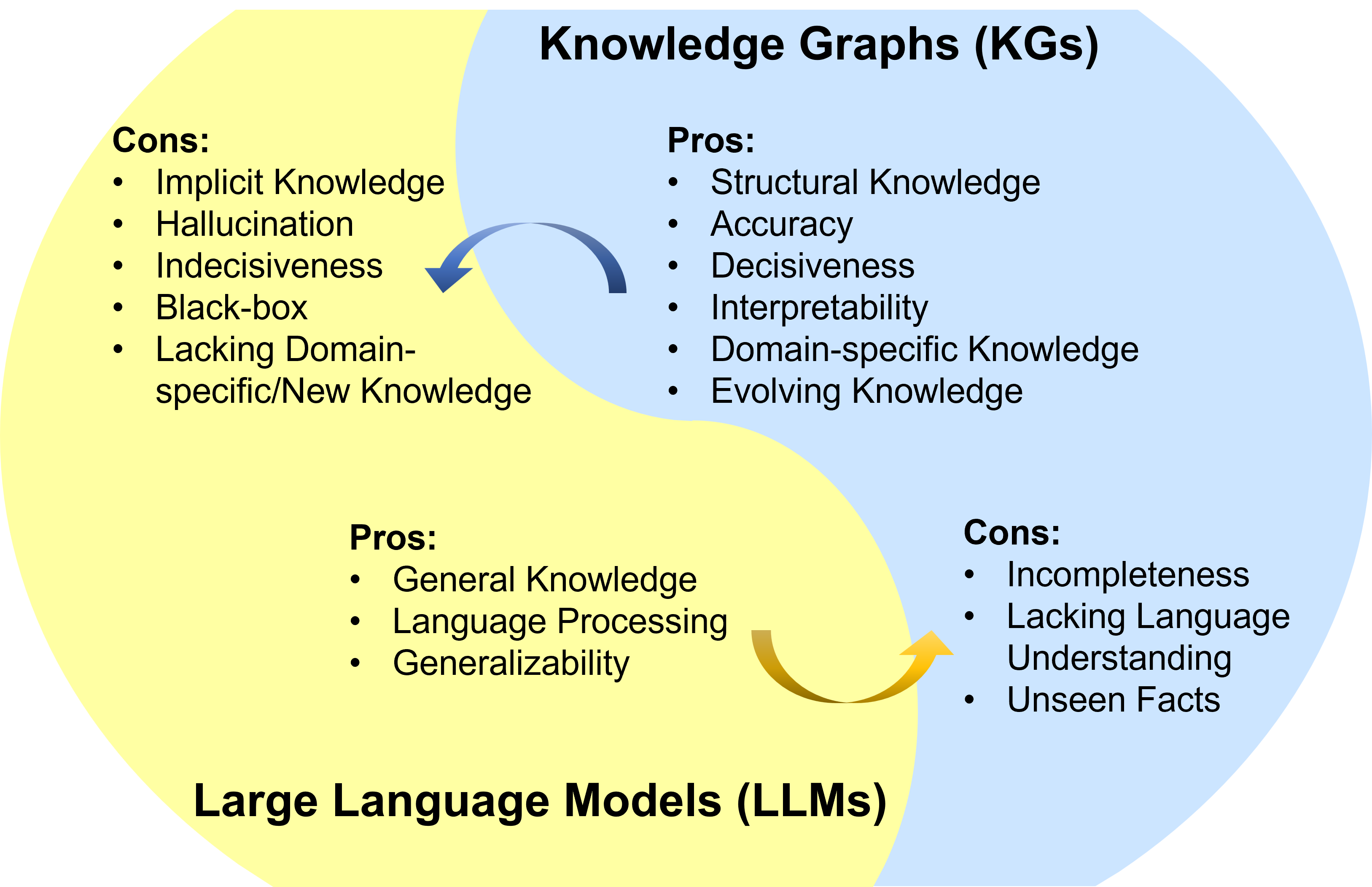

The image is a Venn diagram comparing the **pros** and **cons** of **Knowledge Graphs (KGs)** and **Large Language Models (LLMs)**. Each of the two overlapping circles lists one technology's strengths and weaknesses, and arrows in the shared space show what each technology can supply to the other.

### Components

- **Left Circle (KGs)**:
  - **Pros**: Structural Knowledge, Accuracy, Decisiveness, Interpretability, Domain-specific Knowledge, Evolving Knowledge.
  - **Cons**: Incompleteness, Lacking Language Understanding, Unseen Facts.

- **Right Circle (LLMs)**:
  - **Pros**: General Knowledge, Language Processing, Generalizability.
  - **Cons**: Implicit Knowledge, Hallucination, Indecisiveness, Black-box, Lacking Domain-specific/New Knowledge.

- **Overlap (Shared Space)**: two arrows indicate a bidirectional relationship:
  - A **blue arrow** points from KGs to LLMs, labeled "Domain-specific/New Knowledge."
  - A **yellow arrow** points from LLMs to KGs, labeled "General Knowledge."

### Detailed Analysis

- **KGs Pros**:
  - Structured, accurate, and interpretable knowledge, stored explicitly as entities and relations.
  - Evolving knowledge implies the graph can be updated as new facts appear.

- **KGs Cons**:
  - Incompleteness and unseen facts point to knowledge gaps: a KG can only answer what it explicitly stores.
  - Lacking language understanding limits their ability to interpret natural-language queries and text.

- **LLMs Pros**:
  - Strengths in general knowledge and language processing.
  - Generalizability indicates adaptability across tasks.

- **LLMs Cons**:
  - Hallucination, indecisiveness, and black-box behavior suggest reliability and interpretability issues.
  - Implicit knowledge (held in model parameters rather than as explicit facts) and lacking domain-specific/new knowledge highlight gaps in specialization and currency.
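The structural pros of KGs (explicit facts, decisive and interpretable answers) can be made concrete with a toy triple store. This is a minimal sketch, assuming a hypothetical in-memory `TinyKG` class rather than any real graph database API: each query either returns the stored triples that answer it, which double as the explanation, or returns `None` when the fact was never recorded, rather than guessing.

```python
from typing import NamedTuple, Optional


class Triple(NamedTuple):
    """One explicit fact: (head entity, relation, tail entity)."""
    head: str
    relation: str
    tail: str


class TinyKG:
    """Hypothetical in-memory knowledge graph, for illustration only."""

    def __init__(self, triples: list[Triple]) -> None:
        # Index facts by (head, relation) so every lookup is an exact match.
        self._index: dict[tuple[str, str], list[Triple]] = {}
        for t in triples:
            self._index.setdefault((t.head, t.relation), []).append(t)

    def query(self, head: str, relation: str) -> Optional[list[Triple]]:
        """Return the stored triples that answer the query, or None if none exist."""
        found = self._index.get((head, relation))
        # Return a copy so callers cannot mutate the index through the result.
        return list(found) if found is not None else None


kg = TinyKG([
    Triple("France", "capital", "Paris"),
    Triple("Germany", "capital", "Berlin"),
])

print(kg.query("France", "capital"))  # stored fact, usable as its own explanation
print(kg.query("Italy", "capital"))   # never recorded: the KG returns None instead of guessing
```

Indexing by (head, relation) keeps lookups exact; a production KG would typically add entity resolution and a query language such as SPARQL.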

### Key Observations

1. **Complementary Relationships**:
   - KGs provide structured, domain-specific knowledge to address LLMs' hallucination and lack of domain-specific/new knowledge.
   - LLMs offer general knowledge and language processing to address KGs' incompleteness and lack of language understanding.

2. **Trade-offs**:
   - KGs excel in accuracy and interpretability but are incomplete and struggle with natural language and unseen facts.
   - LLMs handle broad language tasks but lack precision, interpretability, and domain-specific or up-to-date knowledge.

3. **Bidirectional Arrows**:
   - Highlight interdependence: KGs supply domain-specific/new knowledge to LLMs, while LLMs supply general knowledge to KGs.
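One common way to realize the KG-to-LLM arrow is to retrieve facts from the graph and place them in the model's prompt. The sketch below assumes a plain Python list of (head, relation, tail) tuples and naive substring matching on entity names; `retrieve_triples` and `build_grounded_prompt` are hypothetical helpers, and no actual LLM is called.

```python
# Hypothetical sketch of the KG -> LLM direction: serialize KG facts into a prompt.
Triple = tuple[str, str, str]  # (head, relation, tail)

KG: list[Triple] = [
    ("France", "capital", "Paris"),
    ("Paris", "located_on", "Seine"),
    ("Germany", "capital", "Berlin"),
]


def retrieve_triples(question: str, kg: list[Triple]) -> list[Triple]:
    """Naive retrieval: keep triples whose head entity appears in the question."""
    q = question.lower()
    return [t for t in kg if t[0].lower() in q]


def build_grounded_prompt(question: str, kg: list[Triple]) -> str:
    """Prepend retrieved facts so the model can answer from explicit evidence."""
    facts = retrieve_triples(question, kg)
    # Fall back to an explicit marker when the KG has nothing relevant.
    lines = [f"- {h} {r} {t}" for h, r, t in facts] or ["- (no matching facts)"]
    return (
        "Answer using only the facts below. If they are insufficient, say so.\n"
        "Facts:\n" + "\n".join(lines) + f"\nQuestion: {question}\nAnswer:"
    )


print(build_grounded_prompt("What is the capital of France?", KG))
```

Telling the model to answer only from the listed facts is what ties its output to verifiable KG content; real systems usually replace the substring match with entity linking and multi-hop graph retrieval.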

### Interpretation

The diagram illustrates a **symbiotic relationship** between KGs and LLMs:

- **KGs** act as a **structured foundation**, ensuring accuracy and domain specificity but depending on external input (e.g., LLMs) to fill coverage gaps and interpret natural language.

- **LLMs** provide **broad, general knowledge** and language fluency but rely on KGs to ground outputs in verified facts.

- **Hallucination** (LLMs) and **unseen facts** (KGs) represent critical limitations that could be addressed through integration.

- The **evolving knowledge** of KGs and **generalizability** of LLMs suggest potential for hybrid systems that combine structured reasoning with flexible language understanding.
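A hybrid system can also let the two components check each other: an LLM proposes candidate facts as triples and the KG classifies them. The sketch below is a minimal illustration under two stated assumptions: the hypothetical `check_candidate` function treats each relation as single-valued (one tail per head and relation), and the candidate triples are hard-coded in place of real LLM output.

```python
from enum import Enum

Triple = tuple[str, str, str]  # (head, relation, tail)


class Verdict(Enum):
    SUPPORTED = "supported"        # the KG already states this exact fact
    CONTRADICTED = "contradicted"  # the KG states a different tail for the same head and relation
    UNSEEN = "unseen"              # the KG says nothing about this head and relation


def check_candidate(candidate: Triple, kg: set[Triple]) -> Verdict:
    """Classify an LLM-proposed triple against the KG (hypothetical helper)."""
    head, relation, tail = candidate
    known_tails = {t for h, r, t in kg if h == head and r == relation}
    if tail in known_tails:
        return Verdict.SUPPORTED
    if known_tails:
        # Assumption: the relation is single-valued, so a different tail conflicts.
        return Verdict.CONTRADICTED
    return Verdict.UNSEEN


kg: set[Triple] = {("France", "capital", "Paris"), ("Germany", "capital", "Berlin")}

# Hard-coded stand-ins for LLM output.
candidates: list[Triple] = [
    ("France", "capital", "Paris"),
    ("Germany", "capital", "Munich"),  # conflicts with the KG: possible hallucination
    ("Italy", "capital", "Rome"),      # absent from the KG: review before adding
]

for c in candidates:
    print(c, check_candidate(c, kg).value)
```

A contradicted candidate flags a possible hallucination, while an unseen one is a candidate for KG completion after review. For multi-valued relations (e.g. a person with several children), a different tail is not a contradiction, so the single-valued assumption would need to be made per relation.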

This analysis underscores the need for **collaborative frameworks** where KGs and LLMs compensate for each other’s weaknesses, enabling more robust AI systems.